TECHNICAL ASSET FINGERPRINT

544009d7ab695356cf2eea99

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

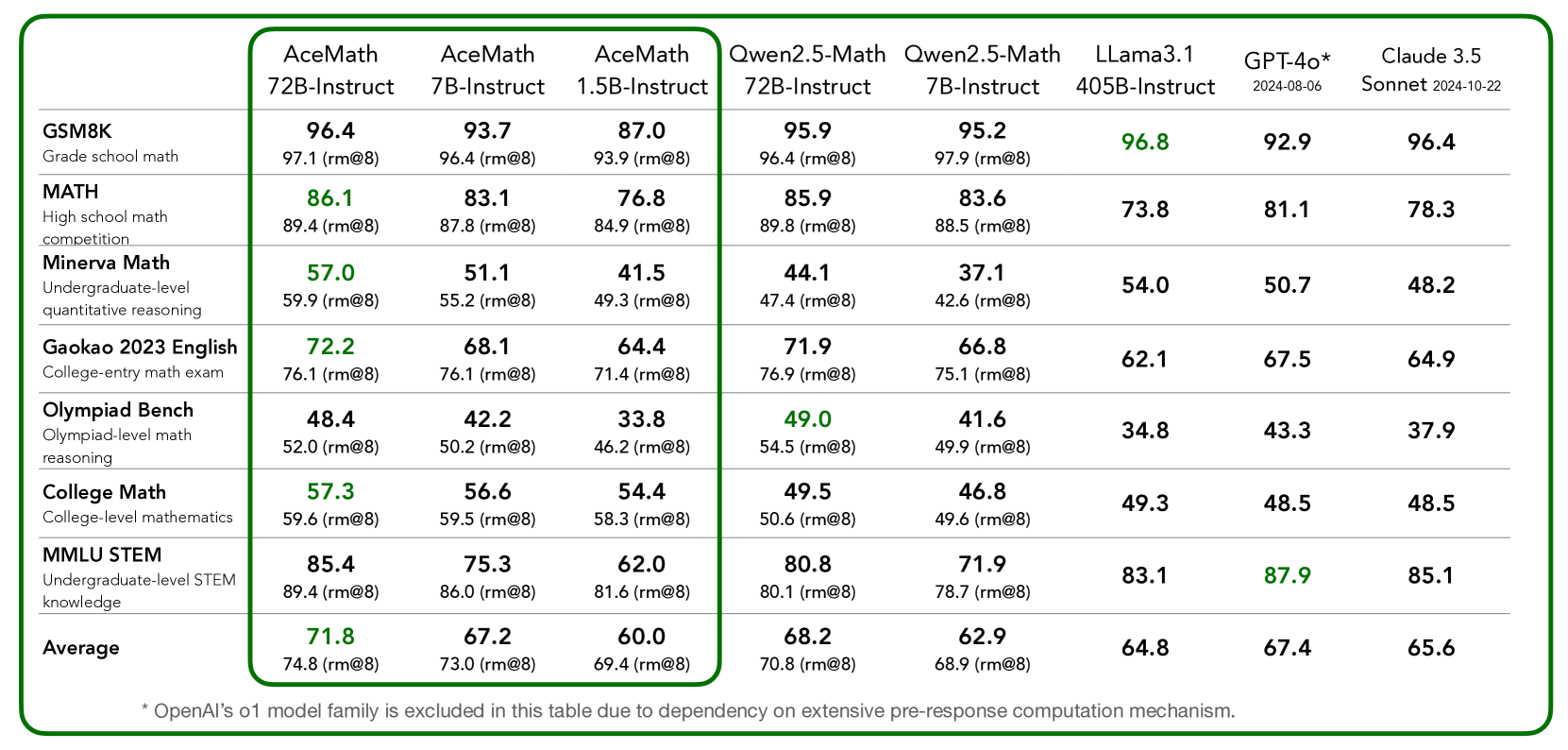

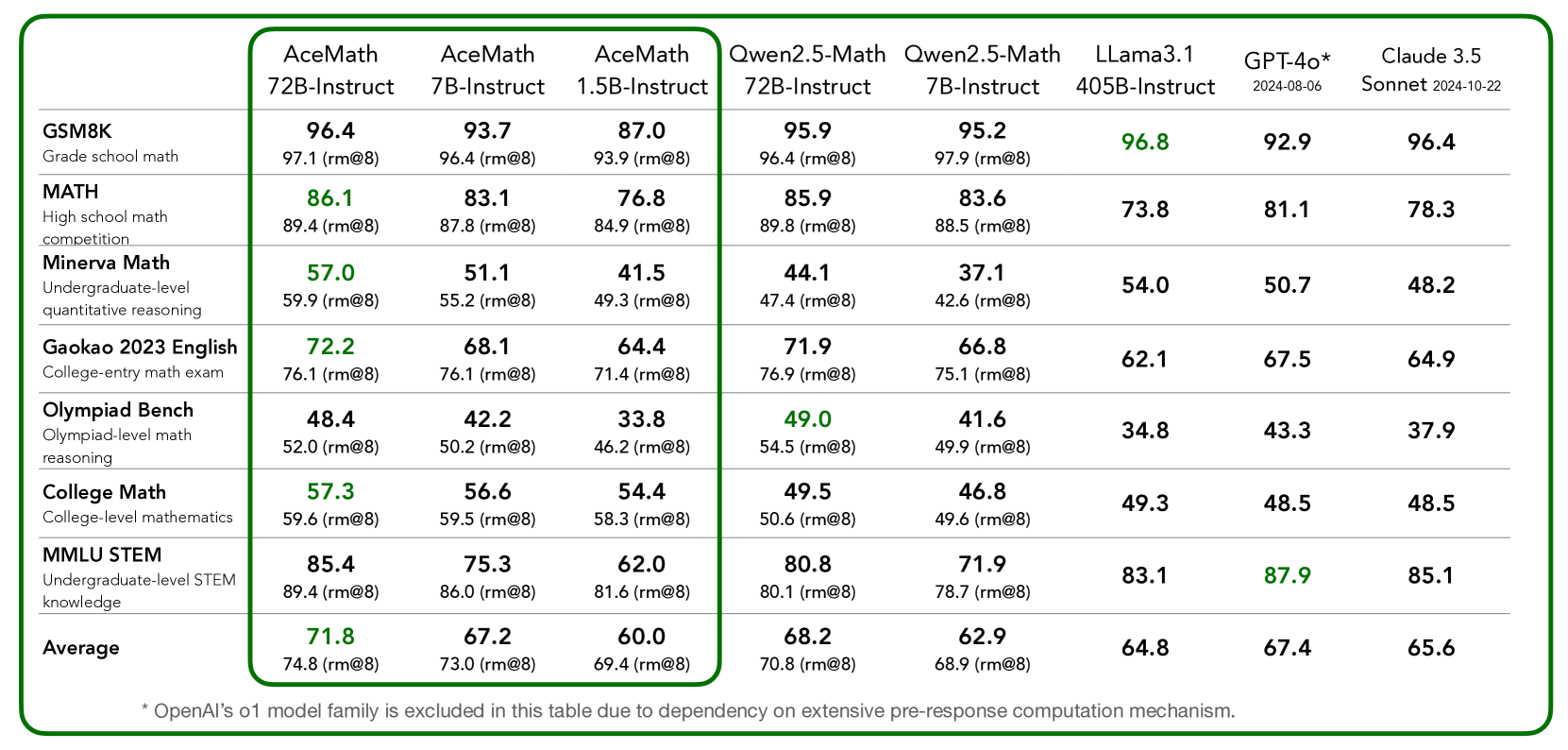

## Data Table: Model Performance on Math and STEM Benchmarks

### Overview

The image presents a data table comparing the performance of several language models on a variety of math and STEM-related benchmarks. The table includes models such as AceMath (various sizes), Qwen2.5-Math (various sizes), Llama3.1, GPT-4o, and Claude 3.5. The benchmarks range from grade school math to undergraduate-level STEM knowledge. The table also includes the average performance across all benchmarks for each model.

### Components/Axes

* **Rows (Benchmarks):**

* GSM8K (Grade school math)

* MATH (High school math competition)

* Minerva Math (Undergraduate-level quantitative reasoning)

* Gaokao 2023 English (College-entry math exam)

* Olympiad Bench (Olympiad-level math reasoning)

* College Math (College-level mathematics)

* MMLU STEM (Undergraduate-level STEM knowledge)

* Average

* **Columns (Models):**

* AceMath 72B-Instruct

* AceMath 7B-Instruct

* AceMath 1.5B-Instruct

* Qwen2.5-Math 72B-Instruct

* Qwen2.5-Math 7B-Instruct

* Llama3.1 405B-Instruct

* GPT-4o (2024-08-06)

* Claude 3.5 Sonnet (2024-10-22)

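* **Data:** Each cell contains a numerical score representing the model's performance on the corresponding benchmark. For the AceMath and Qwen2.5-Math models, each score is followed by a second score labeled (rm@8); the Llama3.1, GPT-4o, and Claude 3.5 Sonnet columns list only the primary score.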

### Detailed Analysis or Content Details

Here's a breakdown of the data, organized by benchmark:

* **GSM8K (Grade school math):**

* AceMath 72B-Instruct: 96.4, 97.1 (rm@8)

* AceMath 7B-Instruct: 93.7, 96.4 (rm@8)

* AceMath 1.5B-Instruct: 87.0, 93.9 (rm@8)

* Qwen2.5-Math 72B-Instruct: 95.9, 96.4 (rm@8)

* Qwen2.5-Math 7B-Instruct: 95.2, 97.9 (rm@8)

* Llama3.1 405B-Instruct: 96.8

* GPT-4o: 92.9

* Claude 3.5 Sonnet: 96.4

* **MATH (High school math competition):**

* AceMath 72B-Instruct: 86.1, 89.4 (rm@8)

* AceMath 7B-Instruct: 83.1, 87.8 (rm@8)

* AceMath 1.5B-Instruct: 76.8, 84.9 (rm@8)

* Qwen2.5-Math 72B-Instruct: 85.9, 89.8 (rm@8)

* Qwen2.5-Math 7B-Instruct: 83.6, 88.5 (rm@8)

* Llama3.1 405B-Instruct: 73.8

* GPT-4o: 81.1

* Claude 3.5 Sonnet: 78.3

* **Minerva Math (Undergraduate-level quantitative reasoning):**

* AceMath 72B-Instruct: 57.0, 59.9 (rm@8)

* AceMath 7B-Instruct: 51.1, 55.2 (rm@8)

* AceMath 1.5B-Instruct: 41.5, 49.3 (rm@8)

* Qwen2.5-Math 72B-Instruct: 44.1, 47.4 (rm@8)

* Qwen2.5-Math 7B-Instruct: 37.1, 42.6 (rm@8)

* Llama3.1 405B-Instruct: 54.0

* GPT-4o: 50.7

* Claude 3.5 Sonnet: 48.2

* **Gaokao 2023 English (College-entry math exam):**

* AceMath 72B-Instruct: 72.2, 76.1 (rm@8)

* AceMath 7B-Instruct: 68.1, 76.1 (rm@8)

* AceMath 1.5B-Instruct: 64.4, 71.4 (rm@8)

* Qwen2.5-Math 72B-Instruct: 71.9, 76.9 (rm@8)

* Qwen2.5-Math 7B-Instruct: 66.8, 75.1 (rm@8)

* Llama3.1 405B-Instruct: 62.1

* GPT-4o: 67.5

* Claude 3.5 Sonnet: 64.9

* **Olympiad Bench (Olympiad-level math reasoning):**

* AceMath 72B-Instruct: 48.4, 52.0 (rm@8)

* AceMath 7B-Instruct: 42.2, 50.2 (rm@8)

* AceMath 1.5B-Instruct: 33.8, 46.2 (rm@8)

* Qwen2.5-Math 72B-Instruct: 49.0, 54.5 (rm@8)

* Qwen2.5-Math 7B-Instruct: 41.6, 49.9 (rm@8)

* Llama3.1 405B-Instruct: 34.8

* GPT-4o: 43.3

* Claude 3.5 Sonnet: 37.9

* **College Math (College-level mathematics):**

* AceMath 72B-Instruct: 57.3, 59.6 (rm@8)

* AceMath 7B-Instruct: 56.6, 59.5 (rm@8)

* AceMath 1.5B-Instruct: 54.4, 58.3 (rm@8)

* Qwen2.5-Math 72B-Instruct: 49.5, 50.6 (rm@8)

* Qwen2.5-Math 7B-Instruct: 46.8, 49.6 (rm@8)

* Llama3.1 405B-Instruct: 49.3

* GPT-4o: 48.5

* Claude 3.5 Sonnet: 48.5

* **MMLU STEM (Undergraduate-level STEM knowledge):**

* AceMath 72B-Instruct: 85.4, 89.4 (rm@8)

* AceMath 7B-Instruct: 75.3, 86.0 (rm@8)

* AceMath 1.5B-Instruct: 62.0, 81.6 (rm@8)

* Qwen2.5-Math 72B-Instruct: 80.8, 80.1 (rm@8)

* Qwen2.5-Math 7B-Instruct: 71.9, 78.7 (rm@8)

* Llama3.1 405B-Instruct: 83.1

* GPT-4o: 87.9

* Claude 3.5 Sonnet: 85.1

* **Average:**

* AceMath 72B-Instruct: 71.8, 74.8 (rm@8)

* AceMath 7B-Instruct: 67.2, 73.0 (rm@8)

* AceMath 1.5B-Instruct: 60.0, 69.4 (rm@8)

* Qwen2.5-Math 72B-Instruct: 68.2, 70.8 (rm@8)

* Qwen2.5-Math 7B-Instruct: 62.9, 68.9 (rm@8)

* Llama3.1 405B-Instruct: 64.8

* GPT-4o: 67.4

* Claude 3.5 Sonnet: 65.6
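The Average row can be cross-checked against the seven benchmark scores. The sketch below is illustrative only: it assumes the Average is an unweighted mean of the seven primary scores (the table does not say how it is computed), and the names `PRIMARY`, `REPORTED_AVERAGE`, and `check_averages` are hypothetical. The numbers are copied from the breakdown above.

```python
# Primary scores per model, in benchmark order: GSM8K, MATH, Minerva Math,
# Gaokao 2023 English, Olympiad Bench, College Math, MMLU STEM.
PRIMARY = {
    "AceMath 72B-Instruct": [96.4, 86.1, 57.0, 72.2, 48.4, 57.3, 85.4],
    "AceMath 7B-Instruct": [93.7, 83.1, 51.1, 68.1, 42.2, 56.6, 75.3],
    "AceMath 1.5B-Instruct": [87.0, 76.8, 41.5, 64.4, 33.8, 54.4, 62.0],
    "Qwen2.5-Math 72B-Instruct": [95.9, 85.9, 44.1, 71.9, 49.0, 49.5, 80.8],
    "Qwen2.5-Math 7B-Instruct": [95.2, 83.6, 37.1, 66.8, 41.6, 46.8, 71.9],
    "Llama3.1 405B-Instruct": [96.8, 73.8, 54.0, 62.1, 34.8, 49.3, 83.1],
    "GPT-4o": [92.9, 81.1, 50.7, 67.5, 43.3, 48.5, 87.9],
    "Claude 3.5 Sonnet": [96.4, 78.3, 48.2, 64.9, 37.9, 48.5, 85.1],
}

# Values listed in the Average row (primary score only).
REPORTED_AVERAGE = {
    "AceMath 72B-Instruct": 71.8,
    "AceMath 7B-Instruct": 67.2,
    "AceMath 1.5B-Instruct": 60.0,
    "Qwen2.5-Math 72B-Instruct": 68.2,
    "Qwen2.5-Math 7B-Instruct": 62.9,
    "Llama3.1 405B-Instruct": 64.8,
    "GPT-4o": 67.4,
    "Claude 3.5 Sonnet": 65.6,
}


def check_averages(primary, reported, tolerance=0.1):
    """Return {model: (recomputed_mean, reported_mean)} for every model whose
    unweighted mean differs from the reported average by more than tolerance."""
    mismatches = {}
    for model, scores in primary.items():
        mean = sum(scores) / len(scores)
        if abs(mean - reported[model]) > tolerance:
            mismatches[model] = (round(mean, 1), reported[model])
    return mismatches


for model, (mean, listed) in check_averages(PRIMARY, REPORTED_AVERAGE).items():
    print(f"{model}: recomputed {mean}, listed {listed}")
```

On these transcribed values, seven columns recompute to within rounding of the listed average. Qwen2.5-Math 7B-Instruct recomputes to about 63.3 against a listed 62.9, which may indicate a misread cell in that column or an average computed from unrounded scores.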

### Key Observations

* AceMath 72B-Instruct generally performs the best among the AceMath models, followed by 7B-Instruct and then 1.5B-Instruct.

* The performance of all models varies significantly across different benchmarks. For example, all models score higher on GSM8K than on Minerva Math.

* GPT-4o and Claude 3.5 show competitive performance across most benchmarks.

* The (rm@8) values are higher than the primary scores in every listed case except one (Qwen2.5-Math 72B-Instruct on MMLU STEM, 80.8 vs. 80.1), suggesting that rm@8 reflects a different, generally more favorable evaluation setting.
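The relationship between the primary and (rm@8) scores can be checked directly. The following is a minimal sketch, assuming the score pairs transcribed in the breakdown above are accurate; `SCORES`, `BENCHMARKS`, and `rm8_gains` are hypothetical names introduced for illustration.

```python
BENCHMARKS = [
    "GSM8K", "MATH", "Minerva Math", "Gaokao 2023 English",
    "Olympiad Bench", "College Math", "MMLU STEM",
]

# (primary, rm@8) pairs per model, in the order of BENCHMARKS.
# Only the AceMath and Qwen2.5-Math columns report an rm@8 score.
SCORES = {
    "AceMath 72B-Instruct": [(96.4, 97.1), (86.1, 89.4), (57.0, 59.9),
                             (72.2, 76.1), (48.4, 52.0), (57.3, 59.6), (85.4, 89.4)],
    "AceMath 7B-Instruct": [(93.7, 96.4), (83.1, 87.8), (51.1, 55.2),
                            (68.1, 76.1), (42.2, 50.2), (56.6, 59.5), (75.3, 86.0)],
    "AceMath 1.5B-Instruct": [(87.0, 93.9), (76.8, 84.9), (41.5, 49.3),
                              (64.4, 71.4), (33.8, 46.2), (54.4, 58.3), (62.0, 81.6)],
    "Qwen2.5-Math 72B-Instruct": [(95.9, 96.4), (85.9, 89.8), (44.1, 47.4),
                                  (71.9, 76.9), (49.0, 54.5), (49.5, 50.6), (80.8, 80.1)],
    "Qwen2.5-Math 7B-Instruct": [(95.2, 97.9), (83.6, 88.5), (37.1, 42.6),
                                 (66.8, 75.1), (41.6, 49.9), (46.8, 49.6), (71.9, 78.7)],
}


def rm8_gains(scores, benchmarks=BENCHMARKS):
    """Map (model, benchmark) to rm@8 minus primary, rounded to one decimal."""
    gains = {}
    for model, pairs in scores.items():
        for benchmark, (primary, rm8) in zip(benchmarks, pairs):
            gains[(model, benchmark)] = round(rm8 - primary, 1)
    return gains


gains = rm8_gains(SCORES)
# Cases where rm@8 is lower than the primary score.
for key, gain in gains.items():
    if gain < 0:
        print(key, gain)
```

Sorting `gains` by value also shows where the second metric matters most; on these numbers the largest difference is for AceMath 1.5B-Instruct on MMLU STEM.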

### Interpretation

The data suggests that model size and architecture play a significant role in performance on math and STEM benchmarks. Larger models like AceMath 72B-Instruct tend to outperform smaller models like AceMath 1.5B-Instruct. However, the choice of model also depends on the specific task, as some models may be better suited for certain benchmarks than others. The inclusion of (rm@8) values indicates that there are variations in how these models are evaluated, and further investigation into the meaning of "rm@8" would be beneficial. The footnote indicates that OpenAI's o1 model family is excluded due to dependency on extensive pre-response computation mechanism.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Data Table: Large Language Model Performance on Various Benchmarks

### Overview

This image presents a data table comparing the performance of several Large Language Models (LLMs) across a range of academic benchmarks. The table displays scores, likely representing accuracy, and for the AceMath and Qwen2.5-Math models a second score labeled "(rm@8)" in parentheses.

### Components/Axes

The table has the following structure:

* **Rows:** Represent different benchmarks: GSM8K (Grade school math), MATH (High school math competition), Minerva Math (Undergraduate-level quantitative reasoning), Gaokao 2023 English (College-entry math exam), Olympiad Bench (Olympiad-level math reasoning), College Math (College-level mathematics), MMLU STEM (Undergraduate-level STEM knowledge), and Average.

* **Columns:** Represent different LLMs: AceMath 72B-Instruct, AceMath 7B-Instruct, AceMath 1.5B-Instruct, Qwen2.5-Math 72B-Instruct, Qwen2.5-Math 7B-Instruct, Llama3.1 405B-Instruct, GPT-4o* 2024-08-06, and Claude 3.5 Sonnet 2024-10-22.

* **Data Cells:** Contain the score for each LLM on each benchmark; for the AceMath and Qwen2.5-Math models, an (rm@8) score follows in parentheses.

* **Footer:** Contains a note: "* OpenAI's o1 model family is excluded in this table due to dependency on extensive pre-response computation mechanism."

### Detailed Analysis or Content Details

Here's a breakdown of the data, benchmark by benchmark, with approximate values and trends:

* **GSM8K:** Primary scores range from 87.0 to 96.8. The highest score is 96.8 (Llama3.1 405B-Instruct), and the lowest is 87.0 (AceMath 1.5B-Instruct). The rm@8 scores range from 93.9 to 97.9.

* **MATH:** Primary scores range from 73.8 to 86.1. The highest score is 86.1 (AceMath 72B-Instruct), and the lowest is 73.8 (Llama3.1 405B-Instruct). The rm@8 scores range from 84.9 to 89.8.

* **Minerva Math:** Primary scores range from 37.1 to 57.0. The highest score is 57.0 (AceMath 72B-Instruct), and the lowest is 37.1 (Qwen2.5-Math 7B-Instruct). The rm@8 scores range from 42.6 to 59.9.

* **Gaokao 2023 English:** Primary scores range from 62.1 to 72.2. The highest score is 72.2 (AceMath 72B-Instruct), and the lowest is 62.1 (Llama3.1 405B-Instruct). The rm@8 scores range from 71.4 to 76.9.

* **Olympiad Bench:** Primary scores range from 33.8 to 49.0. The highest score is 49.0 (Qwen2.5-Math 72B-Instruct), and the lowest is 33.8 (AceMath 1.5B-Instruct). The rm@8 scores range from 46.2 to 54.5.

* **College Math:** Primary scores range from 46.8 to 57.3. The highest score is 57.3 (AceMath 72B-Instruct), and the lowest is 46.8 (Qwen2.5-Math 7B-Instruct). The rm@8 scores range from 49.6 to 59.6.

* **MMLU STEM:** Primary scores range from 62.0 to 87.9. The highest score is 87.9 (GPT-4o), and the lowest is 62.0 (AceMath 1.5B-Instruct). The rm@8 scores range from 78.7 to 89.4.

* **Average:** Average primary scores range from 60.0 (AceMath 1.5B-Instruct) to 71.8 (AceMath 72B-Instruct); the latter's rm@8 average is 74.8.

**Trends:**

* AceMath 72B-Instruct consistently performs well, achieving the highest primary scores on four benchmarks (MATH, Minerva Math, Gaokao 2023 English, and College Math) and the highest average.

* Claude 3.5 Sonnet performs very well on GSM8K (96.4) and MMLU STEM (85.1).

* AceMath 1.5B-Instruct has the lowest primary score on GSM8K, Olympiad Bench, and MMLU STEM, and the lowest average.

* The rm@8 scores exceed the primary scores in nearly every case; the one exception is Qwen2.5-Math 72B-Instruct on MMLU STEM (80.8 vs. 80.1).

### Key Observations

* The performance gap between the best-performing and worst-performing models varies significantly across benchmarks. For example, the gap is 25.9 points on MMLU STEM (62.0 to 87.9) but only 9.8 points on GSM8K (87.0 to 96.8).

* AceMath 72B-Instruct appears to be a strong performer across a broad range of mathematical and reasoning tasks.

* The Average row gives a single summary figure for each model: AceMath 72B-Instruct leads at 71.8, ahead of Qwen2.5-Math 72B-Instruct (68.2) and GPT-4o (67.4).

* The note at the bottom indicates that OpenAI's o1 model family is excluded from the comparison because it depends on an extensive pre-response computation mechanism.
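The best-to-worst gap per benchmark can be computed from the primary scores reported for this table. The sketch below is illustrative and assumes those transcribed scores are accurate; `PRIMARY_BY_BENCHMARK` and `score_spread` are hypothetical names.

```python
# Primary scores per benchmark, in column order: AceMath 72B, AceMath 7B,
# AceMath 1.5B, Qwen2.5-Math 72B, Qwen2.5-Math 7B, Llama3.1 405B, GPT-4o,
# Claude 3.5 Sonnet.
PRIMARY_BY_BENCHMARK = {
    "GSM8K": [96.4, 93.7, 87.0, 95.9, 95.2, 96.8, 92.9, 96.4],
    "MATH": [86.1, 83.1, 76.8, 85.9, 83.6, 73.8, 81.1, 78.3],
    "Minerva Math": [57.0, 51.1, 41.5, 44.1, 37.1, 54.0, 50.7, 48.2],
    "Gaokao 2023 English": [72.2, 68.1, 64.4, 71.9, 66.8, 62.1, 67.5, 64.9],
    "Olympiad Bench": [48.4, 42.2, 33.8, 49.0, 41.6, 34.8, 43.3, 37.9],
    "College Math": [57.3, 56.6, 54.4, 49.5, 46.8, 49.3, 48.5, 48.5],
    "MMLU STEM": [85.4, 75.3, 62.0, 80.8, 71.9, 83.1, 87.9, 85.1],
}


def score_spread(scores_by_benchmark):
    """Return {benchmark: best minus worst primary score}, rounded to one decimal."""
    return {
        benchmark: round(max(scores) - min(scores), 1)
        for benchmark, scores in scores_by_benchmark.items()
    }


spread = score_spread(PRIMARY_BY_BENCHMARK)
# Print benchmarks from widest to narrowest gap.
for benchmark in sorted(spread, key=spread.get, reverse=True):
    print(f"{benchmark}: {spread[benchmark]}")
```

On these values the widest gap is on MMLU STEM and the narrowest on GSM8K.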

### Interpretation

This data table provides a comparative analysis of LLM performance on a diverse set of benchmarks. The results show that current LLMs handle grade-school math well (all but one GSM8K score exceeds 90) but leave substantial headroom on Minerva Math, Olympiad Bench, and College Math. The consistently strong performance of AceMath 72B-Instruct, which posts a higher average than the much larger Llama3.1 405B-Instruct, suggests that training focus matters alongside model size. The rm@8 scores are higher than the primary scores in nearly every case, so they should not be compared directly with the primary scores of models that report only one metric. The exclusion of OpenAI's o1 family, noted in the footnote, limits the comparison to models that do not rely on extensive pre-response computation. The variation in performance across benchmarks suggests that different models may excel in different areas, and the choice of model should be tailored to the specific task at hand.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Table: AI Model Performance Comparison on Mathematical Benchmarks

### Overview

The image displays a detailed performance comparison table of various large language models (LLMs) across a suite of mathematical reasoning benchmarks. The table is enclosed within a green border, with the first three columns (AceMath models) further highlighted by an inner green border. The data presents two performance metrics for each model-benchmark pair: a primary score and a secondary score labeled "(rm@8)". Some primary scores are highlighted in green, indicating the highest performance in that row.

### Components/Axes

**Column Headers (Models):**

1. **AceMath 72B-Instruct**

2. **AceMath 7B-Instruct**

3. **AceMath 1.5B-Instruct**

4. **Qwen2.5-Math 72B-Instruct**

5. **Qwen2.5-Math 7B-Instruct**

6. **Llama3.1 405B-Instruct**

7. **GPT-4o\*** (with footnote: `2024-08-06`)

8. **Claude 3.5 Sonnet** (with footnote: `2024-10-22`)

**Row Headers (Benchmarks):**

1. **GSM8K** (Description: Grade school math)

2. **MATH** (Description: High school math competition)

3. **Minerva Math** (Description: Undergraduate-level quantitative reasoning)

4. **Gaokao 2023 English** (Description: College-entry math exam)

5. **Olympiad Bench** (Description: Olympiad-level math reasoning)

6. **College Math** (Description: College-level mathematics)

7. **MMLU STEM** (Description: Undergraduate-level STEM knowledge)

8. **Average** (No description)

**Footnote:** Located at the bottom of the table: `* OpenAI's o1 model family is excluded in this table due to dependency on extensive pre-response computation mechanism.`

### Detailed Analysis

The table contains the following performance data. The primary score is listed first, with the "(rm@8)" score directly below it in the same cell. Green-highlighted scores are marked with an asterisk (*) for clarity in this text representation.

| Benchmark | AceMath 72B-Instruct | AceMath 7B-Instruct | AceMath 1.5B-Instruct | Qwen2.5-Math 72B-Instruct | Qwen2.5-Math 7B-Instruct | Llama3.1 405B-Instruct | GPT-4o* | Claude 3.5 Sonnet |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| **GSM8K** | 96.4<br>97.1 (rm@8) | 93.7<br>96.4 (rm@8) | 87.0<br>93.9 (rm@8) | 95.9<br>96.4 (rm@8) | 95.2<br>97.9 (rm@8) | **96.8***<br>— | 92.9<br>— | 96.4<br>— |
| **MATH** | **86.1***<br>89.4 (rm@8) | 83.1<br>87.8 (rm@8) | 76.8<br>84.9 (rm@8) | 85.9<br>89.8 (rm@8) | 83.6<br>88.5 (rm@8) | 73.8<br>— | 81.1<br>— | 78.3<br>— |
| **Minerva Math** | **57.0***<br>59.9 (rm@8) | 51.1<br>55.2 (rm@8) | 41.5<br>49.3 (rm@8) | 44.1<br>47.4 (rm@8) | 37.1<br>42.6 (rm@8) | 54.0<br>— | 50.7<br>— | 48.2<br>— |
| **Gaokao 2023 English** | **72.2***<br>76.1 (rm@8) | 68.1<br>76.1 (rm@8) | 64.4<br>71.4 (rm@8) | 71.9<br>76.9 (rm@8) | 66.8<br>75.1 (rm@8) | 62.1<br>— | 67.5<br>— | 64.9<br>— |
| **Olympiad Bench** | 48.4<br>52.0 (rm@8) | 42.2<br>50.2 (rm@8) | 33.8<br>46.2 (rm@8) | **49.0***<br>54.5 (rm@8) | 41.6<br>49.9 (rm@8) | 34.8<br>— | 43.3<br>— | 37.9<br>— |
| **College Math** | **57.3***<br>59.6 (rm@8) | 56.6<br>59.5 (rm@8) | 54.4<br>58.3 (rm@8) | 49.5<br>50.6 (rm@8) | 46.8<br>49.6 (rm@8) | 49.3<br>— | 48.5<br>— | 48.5<br>— |
| **MMLU STEM** | 85.4<br>89.4 (rm@8) | 75.3<br>86.0 (rm@8) | 62.0<br>81.6 (rm@8) | 80.8<br>80.1 (rm@8) | 71.9<br>78.7 (rm@8) | 83.1<br>— | **87.9***<br>— | 85.1<br>— |
| **Average** | **71.8***<br>74.8 (rm@8) | 67.2<br>73.0 (rm@8) | 60.0<br>69.4 (rm@8) | 68.2<br>70.8 (rm@8) | 62.9<br>68.9 (rm@8) | 64.8<br>— | 67.4<br>— | 65.6<br>— |

**Note on Data:** The "(rm@8)" scores are only provided for the AceMath and Qwen2.5-Math model families. The Llama3.1, GPT-4o, and Claude 3.5 Sonnet columns contain only the primary score, with no secondary metric listed.

### Key Observations

1. **Top Performer per Benchmark:** The green highlights show that the **AceMath 72B-Instruct** model achieves the highest primary score on 4 out of 7 individual benchmarks (MATH, Minerva Math, Gaokao 2023 English, College Math) and the overall Average. **Llama3.1 405B-Instruct** leads on GSM8K, **Qwen2.5-Math 72B-Instruct** leads on Olympiad Bench, and **GPT-4o*** leads on MMLU STEM.

2. **Model Family Trends:** Within the AceMath and Qwen2.5-Math families, primary scores scale with model size on every benchmark (72B > 7B > 1.5B for AceMath, 72B > 7B for Qwen2.5-Math), though the gap is sometimes small.

3. **Benchmark Difficulty:** Scores are highest on GSM8K (grade school math), with all but one primary score above 90. Scores are lowest on Olympiad Bench (olympiad-level), with every primary score below 50. This indicates a clear hierarchy of difficulty.

4. **Metric Comparison:** For the models where both metrics are provided, the "(rm@8)" score is higher than the primary score in all but one case (Qwen2.5-Math 72B-Instruct on MMLU STEM, 80.8 vs. 80.1), suggesting it may represent a best-of-k or refined evaluation metric.

5. **Competitive Landscape:** The AceMath 72B model is highly competitive with, and often outperforms, much larger models like Llama3.1 405B and proprietary models like GPT-4o and Claude 3.5 Sonnet on these specific mathematical tasks.
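The per-benchmark leaders can be derived from the table rather than read off the green highlights. The following is a minimal sketch using the primary scores from the table above; `MODELS`, `PRIMARY_BY_BENCHMARK`, and `top_performers` are hypothetical names, and ties (none occur at the top on these values) would be reported as multiple leaders.

```python
from collections import Counter

MODELS = [
    "AceMath 72B-Instruct", "AceMath 7B-Instruct", "AceMath 1.5B-Instruct",
    "Qwen2.5-Math 72B-Instruct", "Qwen2.5-Math 7B-Instruct",
    "Llama3.1 405B-Instruct", "GPT-4o", "Claude 3.5 Sonnet",
]

# Primary scores per benchmark, in the order of MODELS.
PRIMARY_BY_BENCHMARK = {
    "GSM8K": [96.4, 93.7, 87.0, 95.9, 95.2, 96.8, 92.9, 96.4],
    "MATH": [86.1, 83.1, 76.8, 85.9, 83.6, 73.8, 81.1, 78.3],
    "Minerva Math": [57.0, 51.1, 41.5, 44.1, 37.1, 54.0, 50.7, 48.2],
    "Gaokao 2023 English": [72.2, 68.1, 64.4, 71.9, 66.8, 62.1, 67.5, 64.9],
    "Olympiad Bench": [48.4, 42.2, 33.8, 49.0, 41.6, 34.8, 43.3, 37.9],
    "College Math": [57.3, 56.6, 54.4, 49.5, 46.8, 49.3, 48.5, 48.5],
    "MMLU STEM": [85.4, 75.3, 62.0, 80.8, 71.9, 83.1, 87.9, 85.1],
}


def top_performers(models, scores_by_benchmark):
    """Return {benchmark: [models sharing the highest primary score]}."""
    leaders = {}
    for benchmark, scores in scores_by_benchmark.items():
        best = max(scores)
        leaders[benchmark] = [m for m, s in zip(models, scores) if s == best]
    return leaders


leaders = top_performers(MODELS, PRIMARY_BY_BENCHMARK)
# Count how many benchmarks each model leads.
wins = Counter(model for names in leaders.values() for model in names)
for model, count in wins.most_common():
    print(f"{model}: leads {count} benchmark(s)")
```

Only primary scores are compared here, since three of the eight columns have no (rm@8) value.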

### Interpretation

This table serves as a benchmark report card, demonstrating the mathematical reasoning capabilities of the AceMath model series relative to other state-of-the-art LLMs. The data suggests that the AceMath 72B-Instruct model is a specialized and highly effective model for mathematical tasks, achieving top-tier results across a diverse range of difficulty levels, from grade school to college and competition math.

The AceMath 72B model posts a higher primary score than the Qwen2.5-Math 72B model on every benchmark except Olympiad Bench, which indicates potential architectural or training data advantages for mathematical reasoning in the AceMath series. The strong performance of the much smaller AceMath 1.5B model, particularly its "(rm@8)" scores which are often close to the 7B models, suggests efficient learning or the effectiveness of the "(rm@8)" evaluation method for smaller models.

The exclusion of OpenAI's o1 model family, as noted in the footnote, is a critical piece of context. It implies that the comparison is focused on models that generate responses without an extensive, explicit multi-step reasoning phase before the final answer, making the benchmark more about the model's inherent, single-pass reasoning capability. The table ultimately positions the AceMath models, especially the 72B variant, as leading open or specialized alternatives to general-purpose frontier models for mathematical applications.

EXPERT: jina-vlm VERSION 2

RUNTIME: jina-vlm

INTEL_VERIFIED

## Table: Performance Comparison of AI Models in Various Math Competitions

### Overview

The table compares the performance of eight AI models on seven math and STEM benchmarks: Grade School Math (GSM8K), High School Math (MATH), Minerva Math, Gaokao 2023 English, Olympiad Bench, College Math, and MMLU STEM. The models are evaluated on their accuracy at each level, and an Average column summarizes the results.

### Components/Axes

- **Model Names**: AceMath, AceMath, AceMath, Qwen2.5-Math, Qwen2.5-Math, Llama3.1, GPT-4o*, Claude 3.5

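- **Competitions**: Grade School Math, High School Math, Minerva Math, Gaokao 2023 English, Olympiad Bench, College Math, MMLU STEM

- **Levels**: 72B-Instruct, 7B-Instruct, 1.5B-Instruct, 72B-Instruct, 7B-Instruct, 405B-Instruct (model sizes, in the same order as the model names; GPT-4o and Claude 3.5 carry version dates instead)

- **Accuracy**: Measured as a percentage; the table below lists the primary scores, not the (rm@8) scores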

### Detailed Analysis or Content Details

| Model Name | Grade School Math | High School Math | Minerva Math | Gaokao 2023 English | Olympiad Bench | College Math | MMLU STEM | Average |
|---------------------|-------------------|------------------|--------------|---------------------|----------------|--------------|------------|---------|
| AceMath 72B-Instruct | 96.4% | 86.1% | 57.0% | 72.2% | 48.4% | 57.3% | 85.4% | 71.8% |
| AceMath 7B-Instruct | 93.7% | 83.1% | 51.1% | 68.1% | 42.2% | 56.6% | 75.3% | 67.2% |
| AceMath 1.5B-Instruct | 87.0% | 76.8% | 41.5% | 64.4% | 33.8% | 54.4% | 62.0% | 60.0% |
| Qwen2.5-Math 72B-Instruct | 95.9% | 85.9% | 44.1% | 71.9% | 49.0% | 49.5% | 80.8% | 68.2% |
| Qwen2.5-Math 7B-Instruct | 95.2% | 83.6% | 37.1% | 66.8% | 41.6% | 46.8% | 71.9% | 62.9% |
| Llama3.1 405B-Instruct | 96.8% | 73.8% | 54.0% | 62.1% | 34.8% | 49.3% | 83.1% | 64.8% |
| GPT-4o* 2024-08-06 | 92.9% | 81.1% | 50.7% | 67.5% | 43.3% | 48.5% | 87.9% | 67.4% |
| Claude 3.5 Sonnet 2024-10-22 | 96.4% | 78.3% | 48.2% | 64.9% | 37.9% | 48.5% | 85.1% | 65.6% |

### Key Observations

- **AceMath** has the highest average accuracy, 71.8% for the 72B-Instruct model, which also leads on High School Math, Minerva Math, Gaokao 2023 English, and College Math.

- **Qwen2.5-Math** shows a strong performance, particularly in the 72B-Instruct model, which scores 95.9% on Grade School Math, leads Olympiad Bench at 49.0%, and averages 68.2%.

- **Llama3.1** and **GPT-4o** have varying levels of performance: Llama3.1 405B-Instruct has the highest Grade School Math accuracy (96.8%), and GPT-4o has the highest MMLU STEM accuracy (87.9%).

- **Claude 3.5** performs well on Grade School Math (96.4%) and MMLU STEM (85.1%), with an average accuracy of 65.6%.

### Interpretation

The data suggests that AceMath is the most effective model family on these math benchmarks, particularly the 72B-Instruct model. Qwen2.5-Math 72B-Instruct has the second-highest average (68.2%) and leads on Olympiad Bench. Llama3.1, GPT-4o, and Claude 3.5 have varying levels of performance, each scoring near the top on Grade School Math or MMLU STEM but lower on the harder math benchmarks. The data implies that the choice of AI model can significantly impact performance on math benchmarks.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Table: AI Model Performance Comparison Across Math and STEM Benchmarks

### Overview

This table compares the performance of multiple AI models (AceMath, Qwen, Llama, GPT-4o, Claude 3.5 Sonnet) across 7 math/STEM benchmarks plus an overall average, covering grade school math, high school math competitions, undergraduate-level reasoning, college-entry exams, Olympiad-level problems, college mathematics, and STEM knowledge. Scores are presented as percentages; for the AceMath and Qwen2.5-Math models, a second "rm@8" value appears in parentheses.

---

### Components/Axes

- **Rows (Benchmarks)**:

1. GSM8K (Grade school math)

2. MATH (High school math competition)

3. Minerva Math (Undergraduate-level quantitative reasoning)

4. Gaokao 2023 English (College-entry math exam)

5. Olympiad Bench (Olympiad-level math reasoning)

6. College Math (College-level mathematics)

7. MMLU STEM (Undergraduate-level STEM knowledge)

8. Average (Overall performance)

- **Columns (Models/Versions)**:

- AceMath 72B-Instruct

- AceMath 7B-Instruct

- AceMath 1.5B-Instruct

- Qwen2.5-Math 72B-Instruct

- Qwen2.5-Math 7B-Instruct

- Llama3.1 405B-Instruct

- GPT-4o* (2024-08-06)

- Claude 3.5 Sonnet (2024-10-22)

- **Footnote**:

* OpenAI’s o1 model family excluded due to dependency on extensive pre-response computation mechanisms.

---

### Detailed Analysis

#### Benchmark Performance

1. **GSM8K (Grade school math)**:

- Llama3.1 405B-Instruct: 96.8 (highest)

- Claude 3.5 Sonnet: 96.4 (tied for second)

- AceMath 72B-Instruct: 96.4 (tied for second)

- GPT-4o: 92.9

2. **MATH (High school math competition)**:

- AceMath 72B-Instruct: 86.1 (highest primary score)

- Qwen2.5-Math 72B-Instruct: 89.8 rm@8 (highest rm@8 score)

- Llama3.1 405B-Instruct: 73.8 (lowest)

3. **Minerva Math (Undergraduate reasoning)**:

- AceMath 72B-Instruct: 57.0 (highest)

- Llama3.1 405B-Instruct: 54.0 (second-highest)

- Qwen2.5-Math 7B-Instruct: 37.1 (lowest)

4. **Gaokao 2023 English**:

- AceMath 72B-Instruct: 72.2 (highest primary score)

- Qwen2.5-Math 72B-Instruct: 76.9 rm@8 (highest rm@8 score)

- Llama3.1 405B-Instruct: 62.1 (lowest)

5. **Olympiad Bench (Advanced reasoning)**:

- Qwen2.5-Math 72B-Instruct: 49.0 (highest primary score), 54.5 rm@8 (highest rm@8 score)

- AceMath 1.5B-Instruct: 33.8 (lowest)

6. **College Math**:

- AceMath 72B-Instruct: 57.3 (highest)

- Qwen2.5-Math 7B-Instruct: 46.8 (lowest)

7. **MMLU STEM**:

- GPT-4o: 87.9 (highest primary score)

- AceMath 72B-Instruct: 89.4 rm@8 (highest rm@8 score)

- AceMath 1.5B-Instruct: 62.0 (lowest)

8. **Average**:

- AceMath 72B-Instruct: 71.8 (highest)

- GPT-4o: 67.4

- Claude 3.5 Sonnet: 65.6

- Llama3.1 405B-Instruct: 64.8

---

### Key Observations

1. **Model Strengths**:

- **AceMath 72B-Instruct** has the highest average (71.8) and leads on MATH, Minerva Math, Gaokao 2023 English, and College Math.

- **GPT-4o** excels in MMLU STEM (87.9, the highest primary score), suggesting strong general STEM knowledge, but trails most models on GSM8K (92.9).

- **Claude 3.5 Sonnet** is near the top on GSM8K (96.4) but underperforms in Olympiad Bench (37.9).

- **Qwen2.5-Math 72B-Instruct** has the highest rm@8 scores on Olympiad Bench (54.5) and MATH (89.8).

2. **Weaknesses**:

- **Olympiad Bench** scores are consistently low across all models (33.8–54.5), indicating a gap in advanced problem-solving.

- **Llama3.1 405B-Instruct** has the lowest scores on MATH (73.8) and Gaokao (62.1) despite its large parameter size.

3. **Trends**:

- Within the AceMath and Qwen2.5-Math families, larger models outperform smaller ones on every benchmark, but size alone does not decide the ranking: AceMath 7B-Instruct averages 67.2 against 64.8 for Llama3.1 405B-Instruct.

- OpenAI’s o1 family is excluded because it depends on extensive pre-response computation, so the table compares only models without that mechanism.

---

### Interpretation

The table highlights trade-offs between model size, training focus, and performance across math/STEM domains. AceMath 72B-Instruct has the highest average (71.8), ahead of GPT-4o (67.4) and Claude 3.5 Sonnet (65.6), while Qwen2.5-Math 72B-Instruct is strongest on Olympiad Bench. The exclusion of OpenAI’s o1 model family limits the comparison to models that do not depend on extensive pre-response computation. Notably, Olympiad-level performance remains a universal challenge, suggesting limitations in current AI models’ ability to handle highly abstract or novel problems. The "rm@8" values are higher than the primary scores in all but one case, so they likely reflect a different, more favorable evaluation setting.
