## Diagram: Pathway to Practical AI Interpretability

### Overview

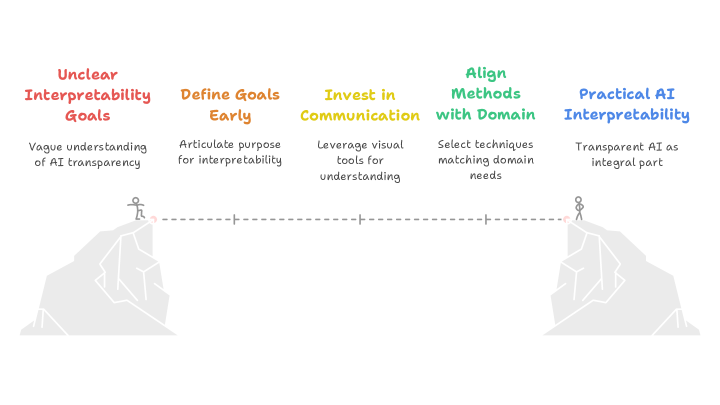

The image is a conceptual diagram illustrating a five-stage progression from an initial state of unclear goals to the achievement of practical AI interpretability. The stages are presented as a linear pathway, visualized as a dashed line connecting two cliffs, with a stick figure at each end representing the starting and ending points of the journey.

### Components/Axes

The diagram consists of five distinct stages, each presented as a column of text. Each stage has a colored title and a descriptive subtitle. The stages are arranged horizontally from left to right, indicating a sequential process.

**Stage 1 (Far Left):**

* **Title (Red):** "Unclear Interpretability Goals"

* **Subtitle:** "Vague understanding of AI transparency"

* **Visual:** A stick figure stands on the edge of a left-side cliff, looking across a chasm.

**Stage 2:**

* **Title (Orange):** "Define Goals Early"

* **Subtitle:** "Articulate purpose for interpretability"

**Stage 3 (Center):**

* **Title (Yellow):** "Invest in Communication"

* **Subtitle:** "Leverage visual tools for understanding"

**Stage 4:**

* **Title (Green):** "Align Methods with Domain"

* **Subtitle:** "Select techniques matching domain needs"

**Stage 5 (Far Right):**

* **Title (Blue):** "Practical AI Interpretability"

* **Subtitle:** "Transparent AI as integral part"

* **Visual:** A stick figure stands on the edge of a right-side cliff, having crossed the chasm.

**Connecting Element:** A horizontal dashed line runs between the two stick figures, passing through the center of the diagram. It has small vertical tick marks aligned with each of the five text stages, symbolizing the path or journey connecting the stages.
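The components listed above can also be captured as structured data, which may be handy when the diagram has to be re-rendered or checked against its description. The following is only a minimal sketch: the `Stage` class, its field names, and the `pathway` helper are hypothetical names introduced for illustration and are not part of the diagram itself.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Stage:
    """One text column of the diagram (hypothetical model, for illustration only)."""
    position: int                 # 1 = far left, 5 = far right
    title: str                    # colored title text
    color: str                    # title color as described above
    subtitle: str                 # descriptive subtitle text
    visual: Optional[str] = None  # accompanying drawing, if any


STAGES = [
    Stage(1, "Unclear Interpretability Goals", "Red",
          "Vague understanding of AI transparency",
          "Stick figure on the left-side cliff"),
    Stage(2, "Define Goals Early", "Orange",
          "Articulate purpose for interpretability"),
    Stage(3, "Invest in Communication", "Yellow",
          "Leverage visual tools for understanding"),
    Stage(4, "Align Methods with Domain", "Green",
          "Select techniques matching domain needs"),
    Stage(5, "Practical AI Interpretability", "Blue",
          "Transparent AI as integral part",
          "Stick figure on the right-side cliff"),
]


def pathway(stages):
    """Join stage titles in left-to-right order, mirroring the dashed connecting line."""
    ordered = sorted(stages, key=lambda s: s.position)
    return " -> ".join(s.title for s in ordered)


if __name__ == "__main__":
    print(pathway(STAGES))
```

Keeping `position` explicit, rather than relying on list order, means the left-to-right sequence survives if the entries are ever shuffled or filtered.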

### Detailed Analysis

The diagram presents a linear model for achieving AI interpretability. The progression runs from a problem state, through three corrective actions, to an end state:

1. **Starting Point (Unclear Goals):** Characterized by a "vague understanding." This is the problem state.

2. **Action 1 (Define Goals):** The first corrective step is to "articulate purpose," moving from vagueness to clarity.

3. **Action 2 (Invest in Communication):** The focus shifts to communication, specifically using "visual tools" to bridge the gap between technical systems and human understanding.

4. **Action 3 (Align Methods):** This stage emphasizes context-awareness, requiring the selection of interpretability techniques that are appropriate for the specific "domain needs" (e.g., healthcare, finance, autonomous systems).

5. **End State (Practical Interpretability):** The goal is achieved when transparency is no longer an add-on but an "integral part" of the AI system.

The visual metaphor of crossing a chasm between two cliffs reinforces the idea that this is a significant journey requiring deliberate steps to overcome an initial gap in understanding and implementation.

### Key Observations

* **Color Coding:** The titles use a distinct color spectrum (Red -> Orange -> Yellow -> Green -> Blue), which may symbolize progression from a warning/problem state (red) to a resolved/goal state (blue).

* **Spatial Layout:** The linear, left-to-right arrangement is a common visual convention for progress and sequence, at least for audiences who read left to right. The central placement of "Invest in Communication" may suggest it is a pivotal or bridging activity.

* **Symbolism:** The stick figures and cliffs are simple but effective symbols for the practitioner (the figure) and the challenge (the chasm between unclear goals and practical interpretability). The dashed line represents the planned, step-wise pathway.

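* **Text Hierarchy:** Each stage uses a clear two-line structure: a bold, colored title followed by a plain-text subtitle. In Stages 2 through 4 the title is an imperative (the action) and the subtitle explains it; in Stages 1 and 5 the title names a state (the problem and the goal) and the subtitle describes it.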

### Interpretation

This diagram is a prescriptive model for organizations or teams developing AI systems. It argues that **practical AI interpretability is not a technical feature to be added last-minute, but the result of a deliberate, structured process that begins with strategic goal-setting.**

The core message is that transparency is achieved through a combination of **early planning** ("Define Goals"), **effective communication design** ("Invest in Communication"), and **contextual technical selection** ("Align Methods"). The journey from a "vague understanding" to having transparency as an "integral part" requires crossing a conceptual gap, which this five-stage pathway is designed to facilitate.

The model places significant emphasis on the human and process elements (defining purpose, communication, domain alignment) alongside the technical, suggesting that the barriers to AI interpretability are as much about clarity of intent and cross-disciplinary collaboration as they are about algorithms. The final state, "Transparent AI as integral part," implies a mature stage of AI development where explainability is woven into the system's design and operation rather than treated as a separate, burdensome compliance task.