
## Diagram: AI Interpretability Journey

### Overview

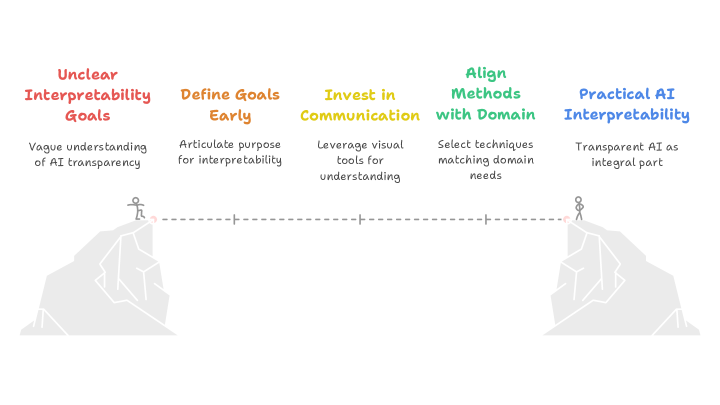

The image is a diagram illustrating a progression from "Unclear Interpretability Goals" to "Practical AI Interpretability". It depicts a journey across a gap between two mountain peaks, with intermediate stages along the way. The flow runs linearly from left to right, and a short text description accompanies each stage.

### Components/Axes

The diagram consists of five main sections, arranged horizontally:

1. **Unclear Interpretability Goals:** (Red) - "Vague understanding of AI transparency"

2. **Define Goals Early:** (Orange) - "Articulate purpose for interpretability"

3. **Invest in Communication:** (Yellow) - "Leverage visual tools for understanding"

4. **Align Methods with Domain:** (Green) - "Select techniques matching domain needs"

5. **Practical AI Interpretability:** (Blue) - "Transparent AI as integral part"

A dashed line connects the two mountain peaks, representing the journey. Small figures are positioned on each peak, indicating the starting and ending points.

### Detailed Analysis

The diagram presents a sequential process.

* **Stage 1 (Red):** "Unclear Interpretability Goals" is characterized by a "Vague understanding of AI transparency."

* **Stage 2 (Orange):** Moving to the right, the next stage is "Define Goals Early," captioned "Articulate purpose for interpretability."

* **Stage 3 (Yellow):** The third stage, "Invest in Communication," is captioned "Leverage visual tools for understanding."

* **Stage 4 (Green):** The fourth stage, "Align Methods with Domain," is captioned "Select techniques matching domain needs."

* **Stage 5 (Blue):** The final stage, "Practical AI Interpretability," is captioned "Transparent AI as integral part."

The dashed line connecting the peaks suggests a progression or transition between these stages. The figures on the peaks are small and stylized, representing individuals or teams undertaking this journey.

### Key Observations

The diagram highlights the importance of a structured approach to AI interpretability. It emphasizes that moving from vague goals to practical implementation requires defining objectives, investing in communication, and aligning methods with the specific domain. The color coding visually reinforces the progression, moving from red (problem) to blue (solution).

### Interpretation

This diagram illustrates a conceptual framework for achieving AI interpretability. It suggests that interpretability is not only a technical problem but also requires careful planning, communication, and domain expertise. The gap between the mountain peaks symbolizes the difficulty of connecting complex AI models with human understanding. The diagram implies that a deliberate, phased approach, as outlined in the stages, is crucial for integrating transparent AI into practical applications. The visual metaphors (mountains, journey) make the concept accessible and emphasize the effort required to reach the desired outcome. The diagram provides no quantitative data; it is a qualitative, high-level roadmap intended to guide strategy and decision-making.