## Diagram: Stages of Achieving Practical AI Interpretability

### Overview

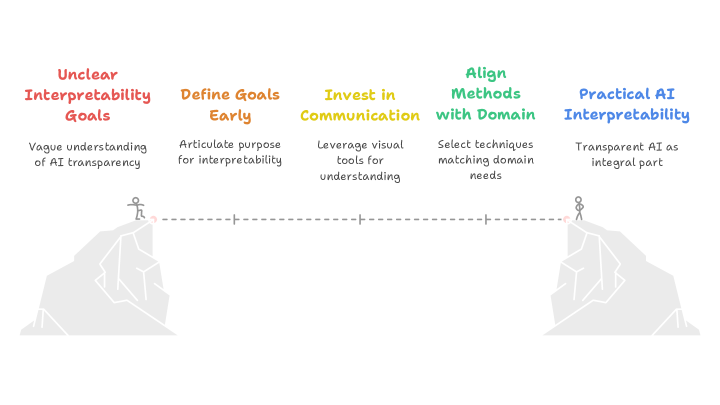

The diagram illustrates a five-stage progression from "Unclear Interpretability Goals" to "Practical AI Interpretability," represented as a journey across a chasm between two mountains. Two climbers are shown at the start and end points, connected by a dotted line. Each stage is color-coded and labeled with descriptive text.

### Components/Axes

- **Visual Elements**:

  - Two stylized mountains (left and right) with climbers.

  - Dotted line connecting start (left mountain) and end (right mountain).

  - Five color-coded stages with labels and subtitles.

- **Color Legend**:

  - Red: Unclear Interpretability Goals

  - Orange: Define Goals Early

  - Yellow: Invest in Communication

  - Green: Align Methods with Domain

  - Blue: Practical AI Interpretability

### Detailed Analysis

1. **Unclear Interpretability Goals (Red)**

   - Label: "Vague understanding of AI transparency"

   - Position: Start of the dotted line (left mountain base).

2. **Define Goals Early (Orange)**

   - Label: "Articulate purpose for interpretability"

   - Position: First milestone on the dotted line.

3. **Invest in Communication (Yellow)**

   - Label: "Leverage visual tools for understanding"

   - Position: Midpoint of the dotted line.

4. **Align Methods with Domain (Green)**

   - Label: "Select techniques matching domain needs"

   - Position: Second-to-last milestone on the dotted line.

5. **Practical AI Interpretability (Blue)**

   - Label: "Transparent AI as integral part"

   - Position: End of the dotted line (right mountain base).

- **Climbers**:

  - Start climber: Small figure at the left mountain base.

  - End climber: Small figure at the right mountain peak.

- **Dotted line**: Represents the journey between stages.

### Key Observations

- The stages are sequentially ordered from left to right, forming a logical progression.

- Color coding follows a gradient from red (problem) to blue (solution), suggesting increasing clarity.

- The climbers’ positions emphasize the effort required to bridge the gap between vague goals and practical implementation.

### Interpretation

The diagram metaphorically represents the challenges and steps required to operationalize AI interpretability. It underscores the necessity of:

1. **Clarifying objectives** (red → orange) to avoid ambiguity.

2. **Investing in communication tools** (yellow) to bridge the gap between technical teams and stakeholders.

3. **Aligning methods with the domain** (green) to ensure that the chosen techniques are relevant and applicable.

4. **Integrating transparency** (blue) as a core component of AI systems.

The climbers symbolize the human effort and strategic planning needed to navigate the complexities of AI development. The dotted line implies that progress is incremental and requires deliberate, structured steps. The absence of intermediate obstacles suggests a linear path in which each stage builds on the previous one, while the chasm between the mountains signals that the journey is still demanding.