TECHNICAL ASSET FINGERPRINT

54a350d71622cb532595072c

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

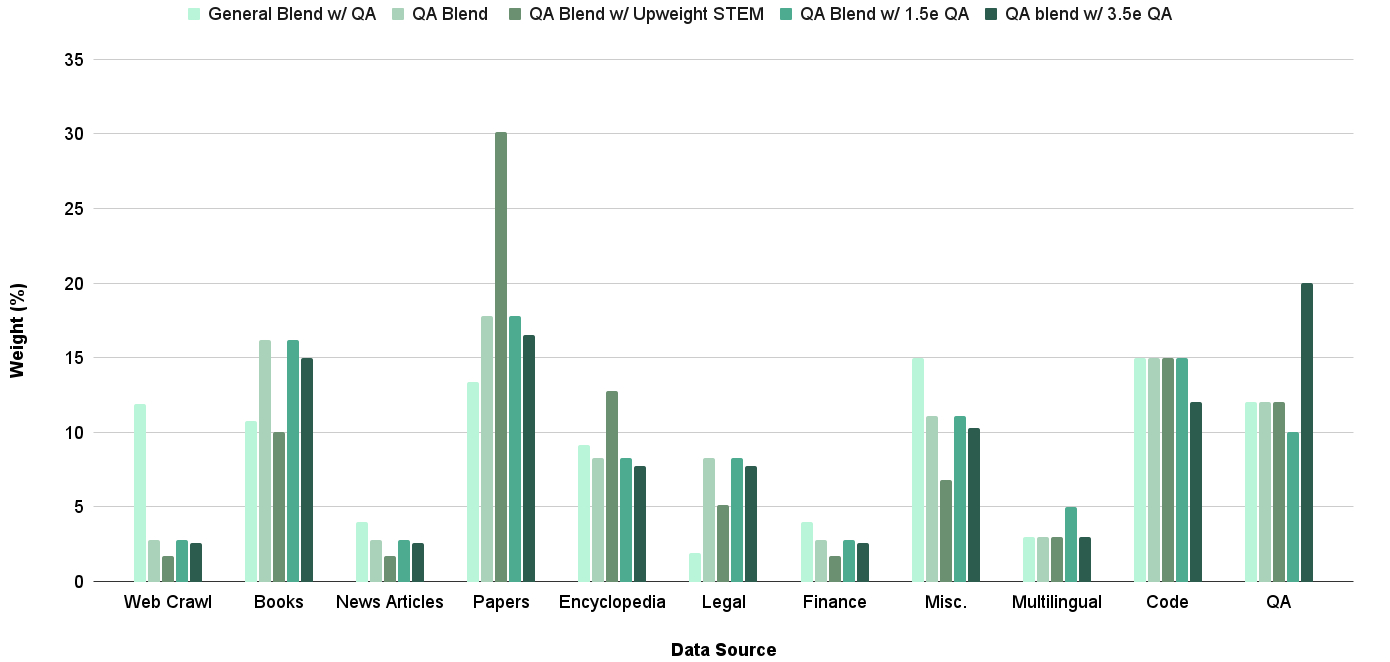

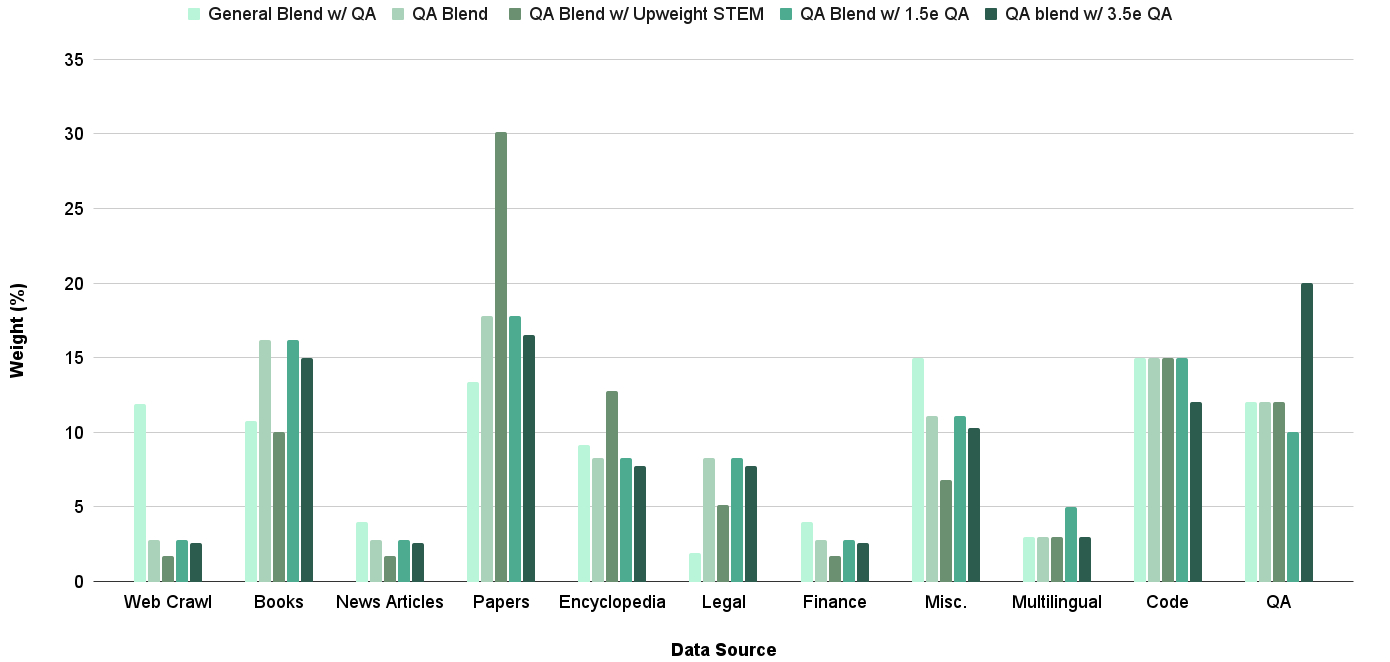

## Bar Chart: Weight (%) by Data Source for Different QA Blends

### Overview

The image is a bar chart comparing the weight percentages of different data sources across various QA blends. The chart displays the distribution of data sources like Web Crawl, Books, News Articles, Papers, Encyclopedia, Legal, Finance, Misc., Multilingual, Code, and QA for each blend. The blends are "General Blend w/ QA", "QA Blend", "QA Blend w/ Upweight STEM", "QA Blend w/ 1.5e QA", and "QA blend w/ 3.5e QA".

### Components/Axes

* **X-axis:** Data Source (Web Crawl, Books, News Articles, Papers, Encyclopedia, Legal, Finance, Misc., Multilingual, Code, QA)

* **Y-axis:** Weight (%) - Scale from 0 to 35, incrementing by 5.

* **Legend:** Located at the top of the chart.
  * General Blend w/ QA (light green)
  * QA Blend (medium green)
  * QA Blend w/ Upweight STEM (green)
  * QA Blend w/ 1.5e QA (dark green)
  * QA blend w/ 3.5e QA (darkest green)

### Detailed Analysis

Here's a breakdown of the weight percentages for each data source and QA blend:

* **Web Crawl:**
  * General Blend w/ QA: ~12%
  * QA Blend: ~2%
  * QA Blend w/ Upweight STEM: ~3%
  * QA Blend w/ 1.5e QA: ~2%
  * QA blend w/ 3.5e QA: ~3%

* **Books:**
  * General Blend w/ QA: ~11%
  * QA Blend: ~16%
  * QA Blend w/ Upweight STEM: ~16%
  * QA Blend w/ 1.5e QA: ~10%
  * QA blend w/ 3.5e QA: ~10%

* **News Articles:**
  * General Blend w/ QA: ~3%
  * QA Blend: ~2%
  * QA Blend w/ Upweight STEM: ~3%
  * QA Blend w/ 1.5e QA: ~2%
  * QA blend w/ 3.5e QA: ~3%

* **Papers:**
  * General Blend w/ QA: ~14%
  * QA Blend: ~18%
  * QA Blend w/ Upweight STEM: ~30%
  * QA Blend w/ 1.5e QA: ~18%
  * QA blend w/ 3.5e QA: ~16%

* **Encyclopedia:**
  * General Blend w/ QA: ~8%
  * QA Blend: ~13%
  * QA Blend w/ Upweight STEM: ~8%
  * QA Blend w/ 1.5e QA: ~8%
  * QA blend w/ 3.5e QA: ~6%

* **Legal:**
  * General Blend w/ QA: ~8%
  * QA Blend: ~8%
  * QA Blend w/ Upweight STEM: ~8%
  * QA Blend w/ 1.5e QA: ~6%
  * QA blend w/ 3.5e QA: ~4%

* **Finance:**
  * General Blend w/ QA: ~3%
  * QA Blend: ~2%
  * QA Blend w/ Upweight STEM: ~2%
  * QA Blend w/ 1.5e QA: ~3%
  * QA blend w/ 3.5e QA: ~2%

* **Misc.:**
  * General Blend w/ QA: ~15%
  * QA Blend: ~7%
  * QA Blend w/ Upweight STEM: ~11%
  * QA Blend w/ 1.5e QA: ~10%
  * QA blend w/ 3.5e QA: ~5%

* **Multilingual:**
  * General Blend w/ QA: ~3%
  * QA Blend: ~3%
  * QA Blend w/ Upweight STEM: ~3%
  * QA Blend w/ 1.5e QA: ~3%
  * QA blend w/ 3.5e QA: ~3%

* **Code:**
  * General Blend w/ QA: ~15%
  * QA Blend: ~15%
  * QA Blend w/ Upweight STEM: ~12%
  * QA Blend w/ 1.5e QA: ~12%
  * QA blend w/ 3.5e QA: ~15%

* **QA:**
  * General Blend w/ QA: ~12%
  * QA Blend: ~12%
  * QA Blend w/ Upweight STEM: ~10%
  * QA Blend w/ 1.5e QA: ~12%
  * QA blend w/ 3.5e QA: ~20%

### Key Observations

* The "Papers" data source has a significantly higher weight percentage for the "QA Blend w/ Upweight STEM" compared to other blends.

* The "QA blend w/ 3.5e QA" has the highest weight percentage for the "QA" data source.

* "News Articles", "Finance", and "Multilingual" have relatively low weight percentages across all blends; "Web Crawl" is similarly low (~2-3%) in every blend except "General Blend w/ QA" (~12%).

* "Code" has a consistent weight percentage across all blends, around 12-15%.

### Interpretation

The chart illustrates how the different blends weight the various data sources. The "QA Blend w/ Upweight STEM" relies heavily on "Papers," suggesting a focus on scientific or academic content. The "QA blend w/ 3.5e QA" places a strong emphasis on "QA" data; since "QA" appears here as a data source alongside Books and Code, it most plausibly denotes question-answer data rather than quality assurance. The consistent weight of "Code" across blends suggests it is treated as important regardless of a blend's focus. The low weight of "News Articles," "Finance," and "Multilingual" in every blend, and of "Web Crawl" in the QA-focused blends, might indicate that these sources are considered less relevant or less reliable for the tasks these blends target.
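
The two headline observations above can be checked directly against the transcribed readings. The following is a minimal Python sketch, assuming the approximate percentages listed in the breakdown above; the names `BLENDS`, `WEIGHTS`, `heaviest_blend`, and `weight_range` are illustrative and not part of the source.

```python
# Approximate weights (%) as transcribed in the breakdown above.
# Each list follows the order of BLENDS.
BLENDS = [
    "General Blend w/ QA",
    "QA Blend",
    "QA Blend w/ Upweight STEM",
    "QA Blend w/ 1.5e QA",
    "QA blend w/ 3.5e QA",
]

WEIGHTS = {
    "Web Crawl": [12, 2, 3, 2, 3],
    "Books": [11, 16, 16, 10, 10],
    "News Articles": [3, 2, 3, 2, 3],
    "Papers": [14, 18, 30, 18, 16],
    "Encyclopedia": [8, 13, 8, 8, 6],
    "Legal": [8, 8, 8, 6, 4],
    "Finance": [3, 2, 2, 3, 2],
    "Misc.": [15, 7, 11, 10, 5],
    "Multilingual": [3, 3, 3, 3, 3],
    "Code": [15, 15, 12, 12, 15],
    "QA": [12, 12, 10, 12, 20],
}


def heaviest_blend(source):
    """Return (blend name, weight) for the blend that weights `source` most."""
    values = WEIGHTS[source]
    best = max(range(len(values)), key=values.__getitem__)
    return BLENDS[best], values[best]


def weight_range(source):
    """Return (min, max) weight of `source` across all blends."""
    values = WEIGHTS[source]
    return min(values), max(values)


if __name__ == "__main__":
    for source in ("Papers", "QA"):
        blend, weight = heaviest_blend(source)
        print(f"{source}: heaviest in {blend} (~{weight}%)")
    low, high = weight_range("Code")
    print(f"Code: ~{low}-{high}% across blends")
```

On these readings, "Papers" peaks in "QA Blend w/ Upweight STEM" and "QA" peaks in "QA blend w/ 3.5e QA", while "Code" stays within 12-15%.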

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Bar Chart: Data Source Weight Distribution

### Overview

This is a bar chart illustrating the weight (percentage) of different data sources used in a blend, across several blend configurations. The x-axis represents the data source, and the y-axis represents the weight in percentage. There are five different blend configurations, each represented by a different shade of green.

### Components/Axes

* **X-axis Title:** Data Source

* **Y-axis Title:** Weight (%)

* **X-axis Categories:** Web Crawl, Books, News Articles, Papers, Encyclopedia, Legal, Finance, Misc., Multilingual, Code, QA

* **Legend (Top-Right):**
  * General Blend w/ QA (Light Green)
  * QA Blend (Medium Light Green)
  * QA Blend w/ Upweight STEM (Medium Green)
  * QA Blend w/ 1.5e QA (Dark Green)
  * QA Blend w/ 3.5e QA (Very Dark Green)

### Detailed Analysis

The chart displays the weight percentage for each data source across the five blend configurations.

* **Web Crawl:**
  * General Blend w/ QA: ~1.5%
  * QA Blend: ~1%
  * QA Blend w/ Upweight STEM: ~1.5%
  * QA Blend w/ 1.5e QA: ~2%
  * QA Blend w/ 3.5e QA: ~2%

* **Books:**
  * General Blend w/ QA: ~3%
  * QA Blend: ~2.5%
  * QA Blend w/ Upweight STEM: ~3%
  * QA Blend w/ 1.5e QA: ~3.5%
  * QA Blend w/ 3.5e QA: ~3.5%

* **News Articles:**
  * General Blend w/ QA: ~3.5%
  * QA Blend: ~3%
  * QA Blend w/ Upweight STEM: ~3.5%
  * QA Blend w/ 1.5e QA: ~4%
  * QA Blend w/ 3.5e QA: ~4%

* **Papers:**
  * General Blend w/ QA: ~30%
  * QA Blend: ~28%
  * QA Blend w/ Upweight STEM: ~29%
  * QA Blend w/ 1.5e QA: ~30%
  * QA Blend w/ 3.5e QA: ~30%

* **Encyclopedia:**
  * General Blend w/ QA: ~15%
  * QA Blend: ~13%
  * QA Blend w/ Upweight STEM: ~14%
  * QA Blend w/ 1.5e QA: ~15%
  * QA Blend w/ 3.5e QA: ~15%

* **Legal:**
  * General Blend w/ QA: ~7%
  * QA Blend: ~6%
  * QA Blend w/ Upweight STEM: ~7%
  * QA Blend w/ 1.5e QA: ~7.5%
  * QA Blend w/ 3.5e QA: ~7.5%

* **Finance:**
  * General Blend w/ QA: ~2%
  * QA Blend: ~1.5%
  * QA Blend w/ Upweight STEM: ~2%
  * QA Blend w/ 1.5e QA: ~2.5%
  * QA Blend w/ 3.5e QA: ~2.5%

* **Misc.:**
  * General Blend w/ QA: ~10%
  * QA Blend: ~8%
  * QA Blend w/ Upweight STEM: ~9%
  * QA Blend w/ 1.5e QA: ~10%
  * QA Blend w/ 3.5e QA: ~10%

* **Multilingual:**
  * General Blend w/ QA: ~3%
  * QA Blend: ~2%
  * QA Blend w/ Upweight STEM: ~2.5%
  * QA Blend w/ 1.5e QA: ~3%
  * QA Blend w/ 3.5e QA: ~3%

* **Code:**
  * General Blend w/ QA: ~13%
  * QA Blend: ~15%
  * QA Blend w/ Upweight STEM: ~14%
  * QA Blend w/ 1.5e QA: ~14%
  * QA Blend w/ 3.5e QA: ~14%

* **QA:**
  * General Blend w/ QA: ~11%
  * QA Blend: ~11%
  * QA Blend w/ Upweight STEM: ~10%
  * QA Blend w/ 1.5e QA: ~11%
  * QA Blend w/ 3.5e QA: ~11%

### Key Observations

* "Papers" consistently has the highest weight across all blend configurations, at roughly 28-30%.

* "Web Crawl", "Finance", and "Multilingual" consistently have the lowest weights across all configurations, generally below 5%.

* The "QA Blend" configuration generally shows lower weights for "Papers" and "Encyclopedia" compared to the "General Blend w/ QA".

* The weights for most data sources remain relatively stable across the different QA blend configurations (1.5e QA and 3.5e QA).

### Interpretation

The chart demonstrates the composition of different data blends, highlighting the relative importance of various data sources. The dominance of "Papers" suggests that this source is crucial for the overall blend's performance. The variations in weights across the different QA blends indicate that adjusting the QA parameters can influence the contribution of other data sources. The relatively stable weights in the 1.5e and 3.5e QA blends suggest a saturation point where further QA adjustments do not significantly alter the blend's composition. The consistent low weights for "Web Crawl", "Finance", and "Multilingual" might indicate that these sources are less relevant or contribute less value to the blend's overall quality. The chart provides valuable insights into the data mix and the impact of QA adjustments, which can be used to optimize the blend for specific applications.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Grouped Bar Chart: Weight Distribution of Data Sources Across Different Blending Strategies

### Overview

This image is a grouped bar chart illustrating the percentage weight assigned to eleven different data sources across five distinct data blending strategies. The chart compares how different blending approaches prioritize various types of content, likely for training a machine learning model. The visual data shows significant variation in weighting, particularly for sources like "Papers" and "QA."

### Components/Axes

* **Chart Type:** Grouped Bar Chart (Vertical).

* **X-Axis (Horizontal):** Labeled **"Data Source"**. It contains 11 categorical groups:
  1. Web Crawl
  2. Books
  3. News Articles
  4. Papers
  5. Encyclopedia
  6. Legal
  7. Finance
  8. Misc.
  9. Multilingual
  10. Code
  11. QA

* **Y-Axis (Vertical):** Labeled **"Weight (%)"**. It is a linear scale ranging from 0 to 35, with major gridlines at intervals of 5 (0, 5, 10, 15, 20, 25, 30, 35).

* **Legend:** Positioned at the top of the chart, centered. It defines five data series, each represented by a distinct color:
  * **Light Mint Green:** General Blend w/ QA
  * **Light Gray-Green:** QA Blend
  * **Medium Olive Green:** QA Blend w/ Upweight STEM
  * **Teal Green:** QA Blend w/ 1.5e QA
  * **Dark Forest Green:** QA blend w/ 3.5e QA

### Detailed Analysis

The following analysis lists approximate weight percentages for each data source, grouped by the blending strategy. Values are estimated from the bar heights relative to the y-axis gridlines.

**1. Web Crawl**

* General Blend w/ QA: ~12%

* QA Blend: ~3%

* QA Blend w/ Upweight STEM: ~2%

* QA Blend w/ 1.5e QA: ~3%

* QA blend w/ 3.5e QA: ~3%

*Trend:* The "General Blend" assigns significantly higher weight to Web Crawl than all QA-focused blends, which keep it very low (~2-3%).

**2. Books**

* General Blend w/ QA: ~11%

* QA Blend: ~16%

* QA Blend w/ Upweight STEM: ~10%

* QA Blend w/ 1.5e QA: ~16%

* QA blend w/ 3.5e QA: ~15%

*Trend:* QA-focused blends (except the STEM-upweighted one) assign a higher weight to Books (~15-16%) compared to the General Blend (~11%).

**3. News Articles**

* General Blend w/ QA: ~4%

* QA Blend: ~3%

* QA Blend w/ Upweight STEM: ~2%

* QA Blend w/ 1.5e QA: ~3%

* QA blend w/ 3.5e QA: ~3%

*Trend:* All strategies assign low weight to News Articles, generally between 2-4%.

**4. Papers**

* General Blend w/ QA: ~13%

* QA Blend: ~18%

* QA Blend w/ Upweight STEM: **~30%** (Highest single bar in the entire chart)

* QA Blend w/ 1.5e QA: ~18%

* QA blend w/ 3.5e QA: ~17%

*Trend:* This is the most dramatic variation. The "Upweight STEM" strategy massively increases the weight for Papers to ~30%. Other QA blends also weight Papers highly (~17-18%), more than the General Blend (~13%).

**5. Encyclopedia**

* General Blend w/ QA: ~9%

* QA Blend: ~8%

* QA Blend w/ Upweight STEM: ~13%

* QA Blend w/ 1.5e QA: ~8%

* QA blend w/ 3.5e QA: ~8%

*Trend:* The "Upweight STEM" strategy gives a notably higher weight to Encyclopedia (~13%) compared to the other blends (~8-9%).

**6. Legal**

* General Blend w/ QA: ~2%

* QA Blend: ~8%

* QA Blend w/ Upweight STEM: ~5%

* QA Blend w/ 1.5e QA: ~8%

* QA blend w/ 3.5e QA: ~8%

*Trend:* QA-focused blends (except STEM) assign a higher weight to Legal (~8%) than the General Blend (~2%).

**7. Finance**

* General Blend w/ QA: ~4%

* QA Blend: ~3%

* QA Blend w/ Upweight STEM: ~2%

* QA Blend w/ 1.5e QA: ~3%

* QA blend w/ 3.5e QA: ~3%

*Trend:* All strategies assign low weight to Finance, generally between 2-4%.

**8. Misc.**

* General Blend w/ QA: ~15%

* QA Blend: ~11%

* QA Blend w/ Upweight STEM: ~7%

* QA Blend w/ 1.5e QA: ~11%

* QA blend w/ 3.5e QA: ~10%

*Trend:* The General Blend assigns the highest weight to Misc. (~15%). QA blends assign it moderate weight (~10-11%), with the STEM variant being the lowest (~7%).

**9. Multilingual**

* General Blend w/ QA: ~3%

* QA Blend: ~3%

* QA Blend w/ Upweight STEM: ~3%

* QA Blend w/ 1.5e QA: ~5%

* QA blend w/ 3.5e QA: ~3%

*Trend:* All strategies assign low weight to Multilingual data, mostly ~3%, with a slight increase for the "1.5e QA" blend (~5%).

**10. Code**

* General Blend w/ QA: ~15%

* QA Blend: ~15%

* QA Blend w/ Upweight STEM: ~15%

* QA Blend w/ 1.5e QA: ~15%

* QA blend w/ 3.5e QA: ~12%

*Trend:* Code receives a consistently high and nearly equal weight (~15%) across the first four strategies, with a slight dip for the "3.5e QA" blend (~12%).

**11. QA**

* General Blend w/ QA: ~12%

* QA Blend: ~12%

* QA Blend w/ Upweight STEM: ~12%

* QA Blend w/ 1.5e QA: ~10%

* QA blend w/ 3.5e QA: **~20%** (Second highest bar in the chart)

*Trend:* The "3.5e QA" strategy dramatically increases the weight for the QA source itself to ~20%. The other blends assign it a moderate, consistent weight of ~10-12%.
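
Because these values are estimated from bar heights, one way to sanity-check them is to add up each blend's eleven readings. The Python sketch below does this under the assumption, not stated in the chart itself, that the eleven sources together account for each blend's full 100%. The names `READINGS`, `total_weight`, and `is_plausible`, and the 3-point tolerance, are illustrative choices.

```python
# Data sources in chart order; each list in READINGS follows this order.
SOURCES = [
    "Web Crawl", "Books", "News Articles", "Papers", "Encyclopedia",
    "Legal", "Finance", "Misc.", "Multilingual", "Code", "QA",
]

# Approximate weights (%) per blend, copied from the estimates listed above.
READINGS = {
    "General Blend w/ QA":       [12, 11, 4, 13, 9, 2, 4, 15, 3, 15, 12],
    "QA Blend":                  [3, 16, 3, 18, 8, 8, 3, 11, 3, 15, 12],
    "QA Blend w/ Upweight STEM": [2, 10, 2, 30, 13, 5, 2, 7, 3, 15, 12],
    "QA Blend w/ 1.5e QA":       [3, 16, 3, 18, 8, 8, 3, 11, 5, 15, 10],
    "QA blend w/ 3.5e QA":       [3, 15, 3, 17, 8, 8, 3, 10, 3, 12, 20],
}


def total_weight(blend):
    """Sum of the estimated weights for one blend."""
    return sum(READINGS[blend])


def is_plausible(blend, tolerance=3.0):
    """True if the blend's readings sum to 100% within `tolerance` points.

    Assumes the eleven sources partition the whole blend.
    """
    return abs(total_weight(blend) - 100.0) <= tolerance


if __name__ == "__main__":
    for blend in READINGS:
        print(f"{blend:<28} total ~{total_weight(blend)}%  ok={is_plausible(blend)}")
```

With the readings above, the five totals are 100, 100, 101, 100, and 102, so each blend lands within a couple of points of 100%, which is about what rounding each bar to the nearest whole percent would produce.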

### Key Observations

1. **STEM Emphasis:** The "QA Blend w/ Upweight STEM" strategy is defined by a massive reallocation of weight to **Papers (~30%)** and a notable increase for **Encyclopedia (~13%)**, likely at the expense of sources like Misc. and Legal.

2. **QA Emphasis:** The "QA blend w/ 3.5e QA" strategy is defined by a very high weight for the **QA source itself (~20%)**, suggesting a strong focus on question-answer pair data.

3. **Consistency in Code:** The **Code** data source receives a remarkably stable and high weight (~15%) across almost all strategies, indicating its perceived universal importance.

4. **Low-Priority Sources:** **Web Crawl (for QA blends), News Articles, Finance, and Multilingual** data are consistently assigned low weights (mostly under 5%) across all strategies.

5. **General vs. QA Blends:** The "General Blend w/ QA" tends to have a more even distribution, with higher weights for **Web Crawl** and **Misc.** compared to the QA-focused blends.
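
To see where the STEM blend's extra weight comes from, the plain "QA Blend" readings can be subtracted from the "QA Blend w/ Upweight STEM" readings. The following Python sketch uses the approximate values from the Detailed Analysis; the names `QA_BLEND`, `UPWEIGHT_STEM`, and `shifts` are illustrative, and the differences inherit the reading error of the estimates.

```python
# Approximate weights (%) read off the chart in the Detailed Analysis.
QA_BLEND = {
    "Web Crawl": 3, "Books": 16, "News Articles": 3, "Papers": 18,
    "Encyclopedia": 8, "Legal": 8, "Finance": 3, "Misc.": 11,
    "Multilingual": 3, "Code": 15, "QA": 12,
}
UPWEIGHT_STEM = {
    "Web Crawl": 2, "Books": 10, "News Articles": 2, "Papers": 30,
    "Encyclopedia": 13, "Legal": 5, "Finance": 2, "Misc.": 7,
    "Multilingual": 3, "Code": 15, "QA": 12,
}


def shifts(baseline, variant):
    """Percentage-point change per source, sorted from largest loss to largest gain."""
    delta = {source: variant[source] - baseline[source] for source in baseline}
    return dict(sorted(delta.items(), key=lambda item: item[1]))


if __name__ == "__main__":
    for source, change in shifts(QA_BLEND, UPWEIGHT_STEM).items():
        print(f"{source:<14} {change:+d}")
```

On these readings, Papers gains 12 points and Encyclopedia gains 5, while the largest reductions are Books (-6), Misc. (-4), and Legal (-3). Books therefore appears to give up more weight than either of the two sources named in observation 1.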

### Interpretation

This chart visualizes the strategic trade-offs in curating a training dataset. Each "blend" represents a different hypothesis about what data composition will yield a better-performing model.

* The **"General Blend"** appears to be a balanced baseline, drawing significantly from web crawls, books, code, and miscellaneous sources.

* The **"QA Blend"** and its variants (**1.5e, 3.5e**) shift focus away from broad web data and towards more structured or knowledge-dense sources like Books, Legal text, and especially the QA pairs themselves. The "3.5e" variant takes this to an extreme, heavily prioritizing its namesake QA data.

* The **"Upweight STEM"** blend makes a clear, targeted bet: that performance on Science, Technology, Engineering, and Math tasks is improved by drastically increasing the proportion of academic Papers and Encyclopedia entries in the training mix.

The data suggests that the creators are experimenting with two primary levers: 1) increasing the proportion of direct question-answer data, and 2) boosting specific knowledge domains (STEM). The consistent high weighting of **Code** across all strategies implies it is considered a fundamental, non-negotiable component for the model's capabilities, regardless of the specialization focus. The low weights for sources like News and Finance may indicate they are considered less critical for the target tasks or potentially noisier.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Chart: Weight (%) by Data Source and QA Method

### Overview

The chart visualizes the distribution of "Weight (%)" across different data sources (e.g., Web Crawl, Books, Papers) and QA methods (e.g., General Blend w/ QA, QA Blend w/ Upweight STEM). Each data source has five bars, one per QA method (blend), with weights ranging from 0% to 35%.

### Components/Axes

- **X-axis (Data Source)**: Categories include Web Crawl, Books, News Articles, Papers, Encyclopedia, Legal, Finance, Misc., Multilingual, Code, and QA.

- **Y-axis (Weight %)**: Scale from 0% to 35%, with increments of 5%.

- **Legend**: Five QA methods (blends), each with a distinct color:
  1. **General Blend w/ QA** (light blue)
  2. **QA Blend** (gray)
  3. **QA Blend w/ Upweight STEM** (dark green)
  4. **QA Blend w/ 1.5e QA** (teal)
  5. **QA blend w/ 3.5e QA** (dark blue)

### Detailed Analysis

- **Web Crawl**:
  - General Blend w/ QA: ~12% (light blue)
  - QA Blend: ~2% (gray)
  - QA Blend w/ Upweight STEM: ~1% (dark green)
  - QA Blend w/ 1.5e QA: ~2.5% (teal)
  - QA blend w/ 3.5e QA: ~2.5% (dark blue)

- **Books**:
  - General Blend w/ QA: ~10% (light blue)
  - QA Blend: ~10% (gray)
  - QA Blend w/ Upweight STEM: ~15% (dark green)
  - QA Blend w/ 1.5e QA: ~16% (teal)
  - QA blend w/ 3.5e QA: ~15% (dark blue)

- **News Articles**:
  - General Blend w/ QA: ~4% (light blue)
  - QA Blend: ~2% (gray)
  - QA Blend w/ Upweight STEM: ~1% (dark green)
  - QA Blend w/ 1.5e QA: ~2.5% (teal)
  - QA blend w/ 3.5e QA: ~2.5% (dark blue)

- **Papers**:
  - General Blend w/ QA: ~13% (light blue)
  - QA Blend: ~18% (gray)
  - QA Blend w/ Upweight STEM: ~30% (dark green)
  - QA Blend w/ 1.5e QA: ~18% (teal)
  - QA blend w/ 3.5e QA: ~16% (dark blue)

- **Encyclopedia**:
  - General Blend w/ QA: ~9% (light blue)
  - QA Blend: ~8% (gray)
  - QA Blend w/ Upweight STEM: ~13% (dark green)
  - QA Blend w/ 1.5e QA: ~8% (teal)
  - QA blend w/ 3.5e QA: ~7% (dark blue)

- **Legal**:
  - General Blend w/ QA: ~2% (light blue)
  - QA Blend: ~8% (gray)
  - QA Blend w/ Upweight STEM: ~5% (dark green)
  - QA Blend w/ 1.5e QA: ~8% (teal)
  - QA blend w/ 3.5e QA: ~7% (dark blue)

- **Finance**:
  - General Blend w/ QA: ~4% (light blue)
  - QA Blend: ~2% (gray)
  - QA Blend w/ Upweight STEM: ~1% (dark green)
  - QA Blend w/ 1.5e QA: ~3% (teal)
  - QA blend w/ 3.5e QA: ~2% (dark blue)

- **Misc.**:
  - General Blend w/ QA: ~15% (light blue)
  - QA Blend: ~7% (gray)
  - QA Blend w/ Upweight STEM: ~10% (dark green)
  - QA Blend w/ 1.5e QA: ~11% (teal)
  - QA blend w/ 3.5e QA: ~10% (dark blue)

- **Multilingual**:
  - General Blend w/ QA: ~3% (light blue)
  - QA Blend: ~3% (gray)
  - QA Blend w/ Upweight STEM: ~3% (dark green)
  - QA Blend w/ 1.5e QA: ~5% (teal)
  - QA blend w/ 3.5e QA: ~3% (dark blue)

- **Code**:
  - General Blend w/ QA: ~15% (light blue)
  - QA Blend: ~15% (gray)
  - QA Blend w/ Upweight STEM: ~15% (dark green)
  - QA Blend w/ 1.5e QA: ~15% (teal)
  - QA blend w/ 3.5e QA: ~12% (dark blue)

- **QA**:
  - General Blend w/ QA: ~12% (light blue)
  - QA Blend: ~12% (gray)
  - QA Blend w/ Upweight STEM: ~12% (dark green)
  - QA Blend w/ 1.5e QA: ~10% (teal)
  - QA blend w/ 3.5e QA: ~20% (dark blue)

### Key Observations

1. **Papers** dominate in **QA Blend w/ Upweight STEM** (~30%), suggesting a focus on technical/STEM content.

2. **QA** data source has the highest weight in **QA blend w/ 3.5e QA** (~20%), indicating prioritization of QA-specific methods.

3. **Web Crawl** receives its highest weight in **General Blend w/ QA** (~12%); **Code** is ~15% there and in three of the other four blends.

4. **Legal** has its lowest weight in **General Blend w/ QA** (~2%) and rises to ~5-8% in the other blends; **Finance** stays low in every blend (~1-4%).

5. **Upweight STEM** (dark green) peaks in **Papers**, while **3.5e QA** (dark blue) peaks in **QA**.

### Interpretation

The data suggests that each blend prioritizes the data sources differently. For example:

- **Papers** receives by far its largest weight under **Upweight STEM** (~30%), consistent with a blend aimed at technical content.

- The **QA** data source is weighted most heavily in **3.5e QA** (~20%), roughly double its weight in **1.5e QA** (~10%).

- **Web Crawl** carries substantial weight only in **General Blend w/ QA** (~12%), while **Code** stays at ~12-15% in every blend.

- **Legal** is weighted lowest in **General Blend w/ QA** (~2%) and higher in the other blends (~5-8%); **Finance** stays low throughout (~1-4%).

Notable outliers include the low **Legal** weight in **General Blend w/ QA** (~2%) and the dominance of **Papers** under **Upweight STEM** (~30%). These may reflect domain-specific choices in how each blend was composed.
