
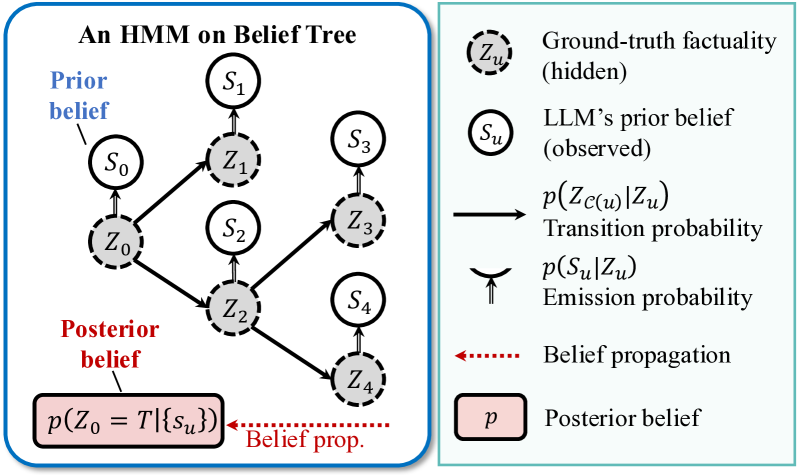

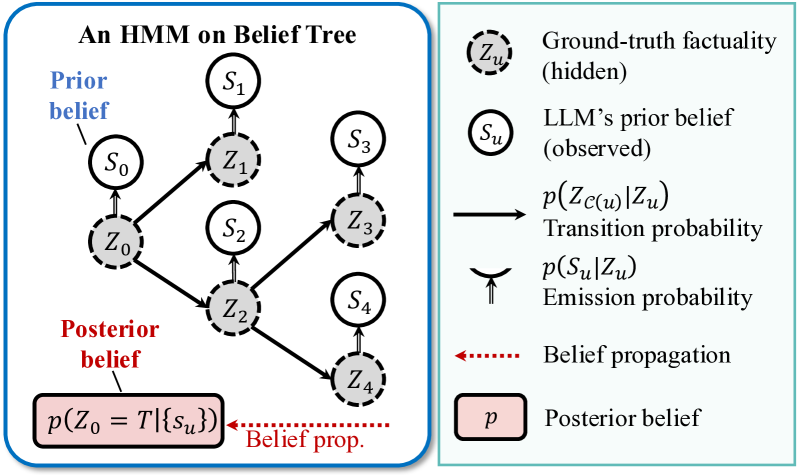

## Diagram: An HMM on Belief Tree

### Overview

The image is a technical diagram illustrating a Hidden Markov Model (HMM) structured as a belief tree. It represents the probabilistic relationship between hidden ground-truth states and the observed beliefs of a Large Language Model (LLM), and shows how belief propagation produces a posterior belief. The diagram is divided into two main sections: a graphical model on the left and a legend on the right.

### Components/Axes

The diagram contains no traditional chart axes. Its components are nodes, arrows, and explanatory text.

**1. Main Diagram (Left Panel - Blue Border):**

* **Title:** "An HMM on Belief Tree" (centered at the top).

* **Nodes:** Two types of circular nodes.

  * **Solid-line circles (S nodes):** Labeled `S₀`, `S₁`, `S₂`, `S₃`, `S₄`. `S₀` is annotated with blue text "Prior belief" pointing to it.

  * **Dashed-line circles (Z nodes):** Labeled `Z₀`, `Z₁`, `Z₂`, `Z₃`, `Z₄`. These are shaded grey.

* **Arrows:**

  * **Solid black arrows:** Connect Z nodes to other Z nodes (e.g., `Z₀` to `Z₁` and `Z₂`; `Z₂` to `Z₃` and `Z₄`).

  * **Double-lined upward arrows:** Connect each Z node to its corresponding S node (e.g., `Z₀` to `S₀`; `Z₁` to `S₁`).

* **Posterior Belief Box:** A pink rectangular box at the bottom-left containing the formula `p(Z₀ = T|{s_u})`. It is labeled with red text "Posterior belief" above it.

* **Belief Propagation Arrow:** A red dotted arrow points from the right side of the diagram towards the posterior belief box, labeled "Belief prop.".

**2. Legend (Right Panel - Green Border):**

A vertical list defining the symbols used in the main diagram.

* **Top Entry:** A dashed-line circle labeled `Zᵤ` with the description "Ground-truth factuality (hidden)".

* **Second Entry:** A solid-line circle labeled `Sᵤ` with the description "LLM's prior belief (observed)".

* **Third Entry:** A solid black right-pointing arrow `→` with the description "p(Z_{c(u)}|Zᵤ) Transition probability".

* **Fourth Entry:** A double-lined upward arrow `⇈` (visually similar to `↑↑`) with the description "p(Sᵤ|Zᵤ) Emission probability".

* **Fifth Entry:** A red dotted left-pointing arrow `⇠` (visually similar to `←` but dotted) with the description "Belief propagation".

* **Bottom Entry:** A pink rectangle labeled `p` with the description "Posterior belief".

### Detailed Analysis

**Tree Structure and Flow:**

The diagram depicts a tree with three levels: the root, its two children, and two grandchildren under `Z₂`.

1. **Root:** The hidden state `Z₀` is the root. It emits the observed belief `S₀` (the "Prior belief").

2. **First Branch:** `Z₀` transitions to two child hidden states: `Z₁` and `Z₂`.

   * `Z₁` emits `S₁`.

   * `Z₂` emits `S₂`.

3. **Second Branch:** `Z₂` further transitions to two child hidden states: `Z₃` and `Z₄`.

   * `Z₃` emits `S₃`.

   * `Z₄` emits `S₄`.
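
The tree described above can be written down as a small data structure. The following is a minimal sketch, not something taken from the diagram's source: the names `PARENT`, `children_of`, and `depth` are hypothetical, and nodes are identified by their subscript `u`.

```python
from typing import Dict, List, Optional

# Parent of each node index u; None marks the root Z_0.
# This mirrors the edges Z0->Z1, Z0->Z2, Z2->Z3, Z2->Z4 from the diagram.
PARENT: Dict[int, Optional[int]] = {0: None, 1: 0, 2: 0, 3: 2, 4: 2}


def children_of(parent: Dict[int, Optional[int]]) -> Dict[int, List[int]]:
    """Invert the parent map into a node -> list-of-children map."""
    children: Dict[int, List[int]] = {u: [] for u in parent}
    for u, p in parent.items():
        if p is not None:
            children[p].append(u)
    return children


def depth(parent: Dict[int, Optional[int]], u: int) -> int:
    """Number of edges between node u and the root."""
    d = 0
    while parent[u] is not None:
        u = parent[u]
        d += 1
    return d


CHILDREN = children_of(PARENT)
print(CHILDREN)
print({u: depth(PARENT, u) for u in PARENT})
```

Each node `u` carries one hidden variable `Zᵤ` and one observation `Sᵤ`, so a single index per node is enough to address both.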

**Symbol Cross-Reference:**

* The **dashed circle** in the legend (`Zᵤ`) matches all `Z` nodes in the tree (`Z₀`-`Z₄`), representing hidden ground-truth states.

* The **solid circle** in the legend (`Sᵤ`) matches all `S` nodes in the tree (`S₀`-`S₄`), representing observed LLM beliefs.

* The **solid arrow** in the legend matches the arrows connecting `Z` nodes (e.g., `Z₀` → `Z₁`), representing the transition probability between hidden states.

* The **double upward arrow** in the legend matches the arrows from `Z` to `S` nodes (e.g., `Z₀` ⇈ `S₀`), representing the emission probability (how a hidden state generates an observation).

* The **red dotted arrow** in the legend matches the "Belief prop." arrow in the main diagram, indicating the direction of belief propagation flow.

* The **pink rectangle** in the legend matches the posterior belief box in the main diagram.
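
Taken together, the legend's transition and emission terms suggest the usual tree-structured factorization of the joint distribution. The sketch below rests on two assumptions: `c(u)` is read as the set of children of node `u`, and `p(Z_0)` denotes a prior on the root that the diagram does not name explicitly.

```latex
p(Z_0,\dots,Z_4,\,S_0,\dots,S_4)
  = p(Z_0)\,\prod_{u=0}^{4} p(S_u \mid Z_u)\,
    \prod_{u=0}^{4}\ \prod_{v \in c(u)} p(Z_v \mid Z_u)
```

Leaf nodes have an empty `c(u)`, so they contribute only an emission term.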

**Text Transcription:**

All text in the image is in English. The precise transcriptions are:

* Title: "An HMM on Belief Tree"

* Annotations: "Prior belief", "Posterior belief", "Belief prop."

* Node Labels: `S₀`, `S₁`, `S₂`, `S₃`, `S₄`, `Z₀`, `Z₁`, `Z₂`, `Z₃`, `Z₄`

* Formula: `p(Z₀ = T|{s_u})`

* Legend Text: "Ground-truth factuality (hidden)", "LLM's prior belief (observed)", "p(Z_{c(u)}|Zᵤ) Transition probability", "p(Sᵤ|Zᵤ) Emission probability", "Belief propagation", "Posterior belief"

### Key Observations

1. **Hierarchical Belief Structure:** The model is a tree rather than a linear chain: the root hidden state `Z₀` branches into child states (`Z₁`, `Z₂`), and `Z₂` branches again (`Z₃`, `Z₄`), so a single root can lead to multiple, potentially divergent lines of factual inquiry.

2. **Direction of Inference:** The "Belief prop." arrow points *from* the tree of observations *towards* the posterior belief box for the root hidden state `Z₀`. This represents using all observed beliefs (the set of `Sᵤ` values, denoted `{s_u}` in the formula) to update the estimate of the root ground truth.

3. **Core Formula:** The posterior belief `p(Z₀ = T|{s_u})` is the central output. It represents the probability that the root ground-truth state `Z₀` is True (`T`), given all the observed LLM beliefs `{s_u}` from across the entire tree.

4. **Model Type:** The title labels the model an HMM. On a tree, the Markov property means each hidden state (`Z`) depends only on its parent, and each observation (`S`) is conditionally independent of everything else given its own hidden state.
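
One way to compute a posterior such as `p(Z₀ = T|{s_u})` on a tree is an upward sum-product pass from the leaves to the root. The sketch below is illustrative only and makes several assumptions that the diagram does not state: states and observations are binary, all edges share one transition table, all nodes share one emission table, and the numeric values in `TRANS`, `EMIT`, and `prior_true` are made up. The function name `root_posterior` is hypothetical.

```python
from typing import Dict, List

# Tree from the diagram: node index -> child indices.
CHILDREN: Dict[int, List[int]] = {0: [1, 2], 1: [], 2: [3, 4], 3: [], 4: []}

# Assumed parameters (index 0 = False, 1 = True), not taken from the diagram.
TRANS = [[0.8, 0.2], [0.2, 0.8]]  # TRANS[z_parent][z_child] = p(Z_child | Z_parent)
EMIT = [[0.7, 0.3], [0.3, 0.7]]   # EMIT[z][s] = p(S = s | Z = z)


def root_posterior(children, obs, prior_true, trans, emit, root=0):
    """Return p(Z_root = True | all observations) via an upward pass."""

    def upward(u):
        # beta[z] = p(observations in the subtree rooted at u | Z_u = z)
        beta = [emit[z][obs[u]] for z in (0, 1)]
        for c in children[u]:
            child_beta = upward(c)
            for z in (0, 1):
                # Sum out the child's hidden state given the parent's state z.
                beta[z] *= sum(trans[z][zc] * child_beta[zc] for zc in (0, 1))
        return beta

    beta = upward(root)
    joint_true = prior_true * beta[1]
    joint_false = (1.0 - prior_true) * beta[0]
    return joint_true / (joint_true + joint_false)


all_true = {u: 1 for u in CHILDREN}
print(root_posterior(CHILDREN, all_true, 0.5, TRANS, EMIT))
```

The recursion visits every node once, so the cost is linear in the number of nodes for a fixed number of states.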

### Interpretation

This diagram models a process for assessing the factual accuracy of an LLM's outputs. It treats the LLM's beliefs (`S` nodes) not as direct truth, but as noisy observations emitted from an underlying, hidden factual reality (`Z` nodes).

The tree structure suggests a scenario where an initial claim or question (`Z₀`) leads to a series of related sub-claims or lines of reasoning. The LLM generates beliefs (`S₁`, `S₂`, etc.) about each of these sub-claims. The key insight is that these beliefs are interconnected through the hidden factual structure.

Belief propagation is the inference step. It works backwards from the collection of all the LLM's observed beliefs to infer the probability of the root fact (`Z₀`). This supports fact-checking or consistency analysis: when transitions favor agreement between parent and child, coherent beliefs across the tree raise confidence in the root fact, while contradictory or improbable beliefs propagate back to lower the posterior probability of `Z₀` being true.
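
The effect of coherent versus contradictory beliefs can be checked directly from Bayes' rule by enumerating every hidden configuration of the five-node tree. This is a brute-force sketch under assumed parameters: binary states, and made-up values for `PRIOR_TRUE`, `STAY`, and `AGREE`. None of these names or numbers come from the diagram.

```python
from itertools import product

PARENT = {0: None, 1: 0, 2: 0, 3: 2, 4: 2}  # tree from the diagram
PRIOR_TRUE = 0.5  # assumed p(Z_0 = True)
STAY = 0.8        # assumed p(child state equals parent state)
AGREE = 0.7       # assumed p(S_u equals Z_u)


def joint(z, s):
    """p(Z = z, S = s) for full assignments z and s (tuples indexed by u)."""
    p = PRIOR_TRUE if z[0] else 1.0 - PRIOR_TRUE
    for u, parent in PARENT.items():
        if parent is not None:
            p *= STAY if z[u] == z[parent] else 1.0 - STAY
        p *= AGREE if s[u] == z[u] else 1.0 - AGREE
    return p


def root_posterior_bruteforce(s):
    """p(Z_0 = True | S = s), summing the joint over all hidden assignments."""
    num = den = 0.0
    for z in product((0, 1), repeat=len(PARENT)):
        pj = joint(z, s)
        den += pj
        if z[0] == 1:
            num += pj
    return num / den


coherent = root_posterior_bruteforce((1, 1, 1, 1, 1))  # every belief says True
mixed = root_posterior_bruteforce((1, 0, 1, 0, 0))     # descendants disagree
print(coherent, mixed)
```

With these assumed values, the coherent case gives a root posterior of about 0.91 and the mixed case about 0.57. Enumeration costs `2^n` for `n` nodes, so it only serves as a check on small trees.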

In essence, the diagram provides a mathematical and visual framework for moving from a collection of potentially unreliable AI-generated statements to a probabilistic estimate of an underlying truth, accounting for the logical dependencies between those statements.
