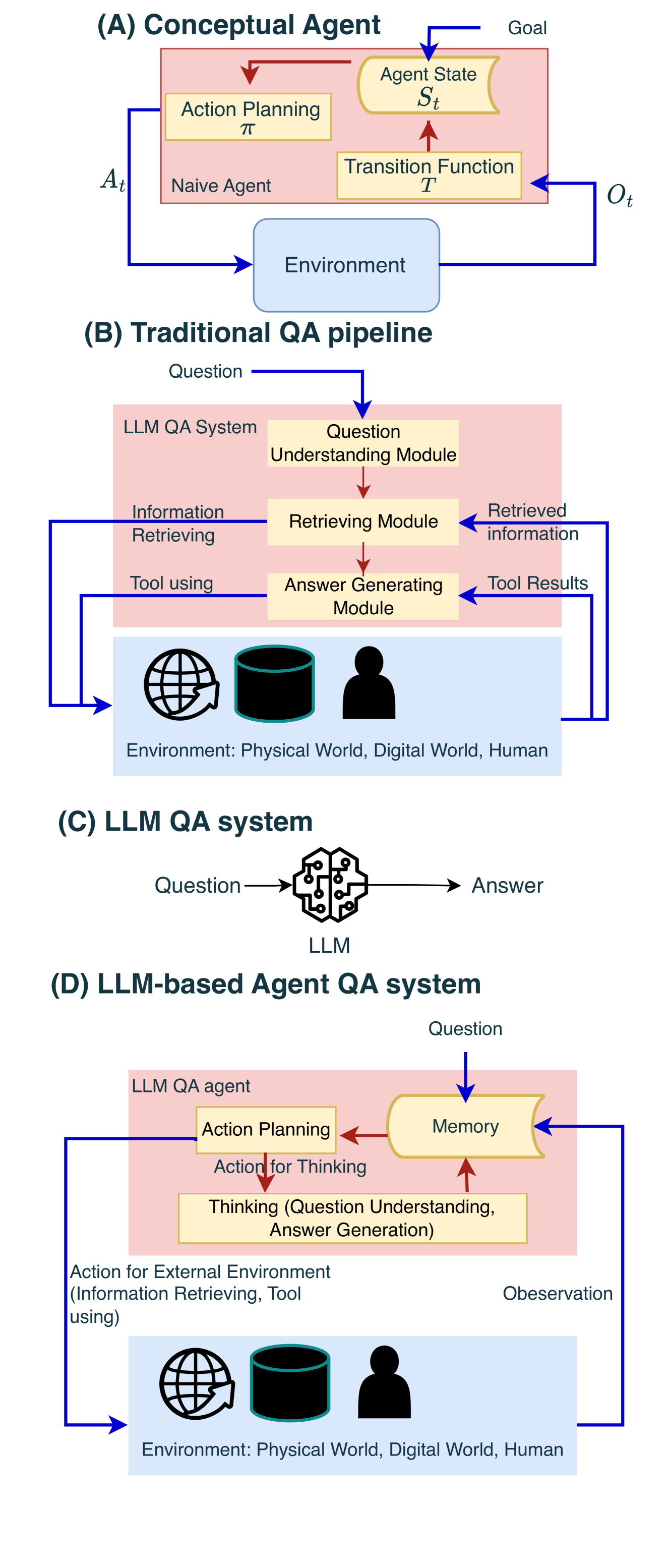

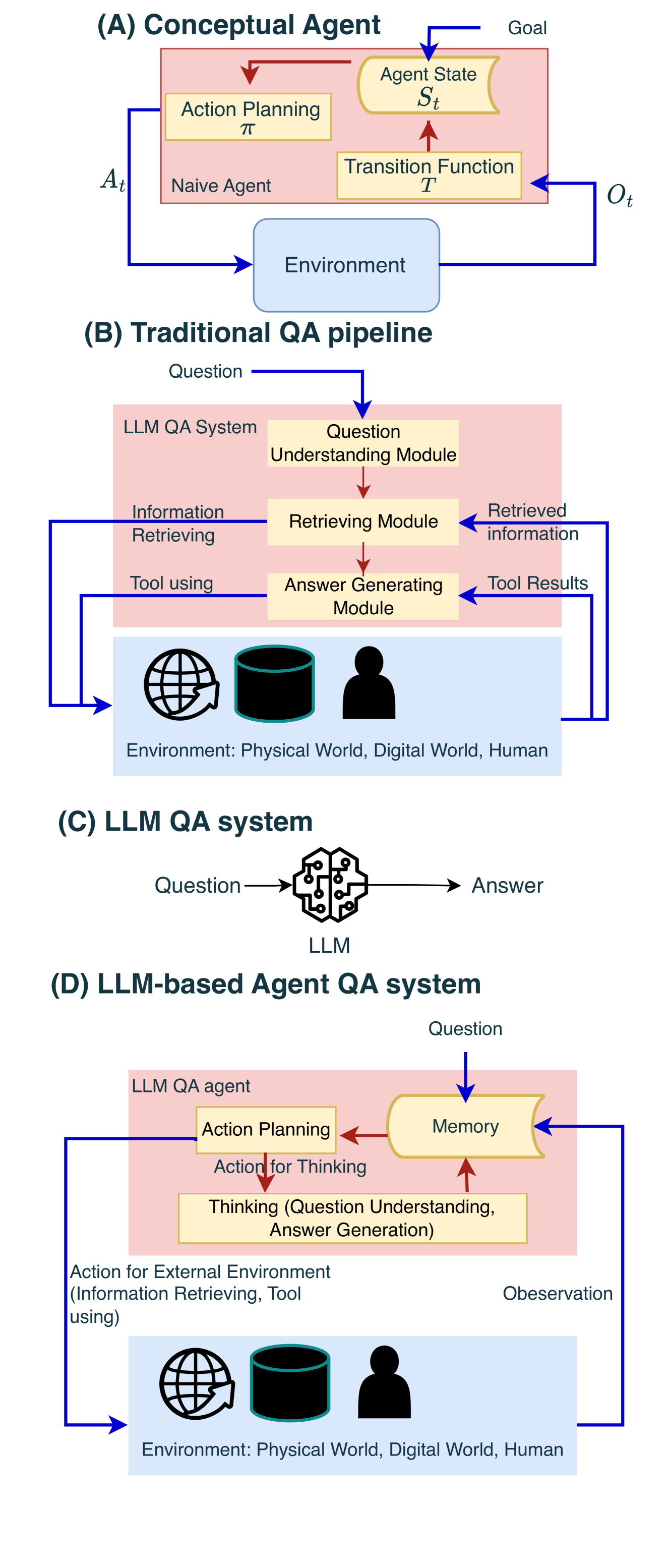

## Diagram: Comparison of AI Agent and Question-Answering System Architectures

### Overview

The image presents four distinct architectural diagrams, labeled (A) through (D), illustrating the evolution and components of AI agents and Question-Answering (QA) systems. It contrasts a classic conceptual agent model with traditional and modern LLM-based QA pipelines.

### Components/Axes

The image is divided into four main sections, each with a title and a flowchart-style diagram.

**Section (A): Conceptual Agent**

* **Title:** (A) Conceptual Agent

* **Main Container:** A pink rectangle labeled "Naive Agent".

* **Internal Components (within Naive Agent):**

    * "Agent State" (labeled with mathematical symbol *S<sub>t</sub>*).

    * "Action Planning" (labeled with mathematical symbol *π*).

    * "Transition Function" (labeled with mathematical symbol *T*).

* **External Component:** A blue rectangle labeled "Environment".

* **Inputs/Outputs & Flow:**

    * An arrow labeled "Goal" points into "Agent State".

    * An arrow labeled *A<sub>t</sub>* (Action at time t) flows from "Action Planning" to the "Environment".

    * An arrow labeled *O<sub>t</sub>* (Observation at time t) flows from the "Environment" to the "Transition Function".

    * Internal red arrows show flow from "Transition Function" to "Agent State", and from "Agent State" to "Action Planning".
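The loop in (A) can be sketched in a few lines of Python. This is a minimal illustration, not something taken from the figure: the `CounterEnvironment`, the integer goal, and the step-toward-the-goal policy are all hypothetical choices made only so that the cycle of state, action, and observation can be run end to end.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AgentState:
    """S_t: the goal plus every observation seen so far (an assumed layout)."""
    goal: int
    observations: List[int] = field(default_factory=list)


def transition(state: AgentState, observation: int) -> AgentState:
    """T: fold the latest observation O_t into the agent state."""
    return AgentState(state.goal, state.observations + [observation])


def policy(state: AgentState) -> int:
    """pi: choose action A_t from the state; here, step toward the goal."""
    current = state.observations[-1] if state.observations else 0
    if current < state.goal:
        return 1
    if current > state.goal:
        return -1
    return 0  # goal reached, nothing left to do


class CounterEnvironment:
    """Hypothetical toy environment: a counter moved by each action."""

    def __init__(self, start: int = 0) -> None:
        self.value = start

    def step(self, action: int) -> int:
        self.value += action
        return self.value  # the observation O_t


def run_agent(goal: int, env: CounterEnvironment, max_steps: int = 20) -> AgentState:
    state = transition(AgentState(goal=goal), env.value)  # initial observation
    for _ in range(max_steps):
        action = policy(state)                  # Agent State -> Action Planning
        if action == 0:
            break
        observation = env.step(action)          # A_t out, O_t back
        state = transition(state, observation)  # Transition Function -> Agent State
    return state
```

Calling `run_agent(3, CounterEnvironment())` walks the counter from 0 up to the goal and records each observation in the state.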

**Section (B): Traditional QA pipeline**

* **Title:** (B) Traditional QA pipeline

* **Main Container:** A pink rectangle labeled "LLM QA System".

* **Internal Modules (within LLM QA System, top to bottom):**

    * "Question Understanding Module"

    * "Retrieving Module"

    * "Answer Generating Module"

* **External Environment:** A blue rectangle below containing three icons: a globe (Physical World), a database (Digital World), and a person silhouette (Human). It is labeled "Environment: Physical World, Digital World, Human".

* **Inputs, Outputs & Flow:**

    * An arrow labeled "Question" enters the "Question Understanding Module".

    * A red arrow flows from "Question Understanding Module" to "Retrieving Module".

    * A red arrow flows from "Retrieving Module" to "Answer Generating Module".

    * A blue arrow labeled "Information Retrieving" flows from the "Retrieving Module" to the external Environment.

    * A blue arrow labeled "Retrieved information" flows from the Environment back to the "Retrieving Module".

    * A blue arrow labeled "Tool using" flows from the "Answer Generating Module" to the external Environment.

    * A blue arrow labeled "Tool Results" flows from the Environment back to the "Answer Generating Module".
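The fixed module order in (B) can be illustrated with a small Python sketch. Everything here is an assumption made for illustration: the `DOCUMENTS` dictionary stands in for the external environment, keyword matching stands in for retrieval, and the tool-use round trip of the answer module is left out to keep the example short.

```python
from typing import Dict, List

# Hypothetical stand-in for the external environment: a tiny document store.
DOCUMENTS: Dict[str, str] = {
    "paris": "Paris is the capital of France.",
    "berlin": "Berlin is the capital of Germany.",
}


def understand_question(question: str) -> List[str]:
    """Question Understanding Module: reduce the question to lowercase keywords."""
    return [word.strip("?.,!").lower() for word in question.split()]


def retrieve(keywords: List[str]) -> List[str]:
    """Retrieving Module: query the environment and collect matching passages."""
    return [DOCUMENTS[key] for key in keywords if key in DOCUMENTS]


def generate_answer(question: str, passages: List[str]) -> str:
    """Answer Generating Module: here it simply returns the first passage."""
    if not passages:
        return "No answer found."
    return passages[0]


def answer(question: str) -> str:
    """Run the three modules in their fixed order."""
    keywords = understand_question(question)
    passages = retrieve(keywords)
    return generate_answer(question, passages)
```

The order of calls in `answer` never changes, whatever the question is, which is the property that distinguishes this pipeline from the agent in (D).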

**Section (C): LLM QA system**

* **Title:** (C) LLM QA system

* **Central Component:** An icon representing an LLM (Large Language Model), depicted as a stylized brain made of circuit lines.

* **Flow:**

    * An arrow labeled "Question" points into the LLM icon.

    * An arrow labeled "Answer" points out from the LLM icon.

**Section (D): LLM-based Agent QA system**

* **Title:** (D) LLM-based Agent QA system

* **Main Container:** A pink rectangle labeled "LLM QA agent".

* **Internal Components (within LLM QA agent):**

    * "Memory" (in a yellow, scroll-shaped box).

    * "Action Planning".

    * "Thinking (Question Understanding, Answer Generation)".

* **External Environment:** Identical to section (B): a blue rectangle with globe, database, and person icons, labeled "Environment: Physical World, Digital World, Human".

* **Inputs, Outputs & Flow:**

    * An arrow labeled "Question" points into "Memory".

    * A red arrow flows from "Memory" to "Action Planning".

    * A red arrow labeled "Action for Thinking" flows from "Action Planning" to the "Thinking" module.

    * A red arrow flows from the "Thinking" module back to "Memory".

    * A blue arrow labeled "Action for External Environment (Information Retrieving, Tool using)" flows from "Action Planning" to the external Environment.

    * A blue arrow labeled "Observation" (note: misspelled as "Obeservation" in the image) flows from the Environment back to "Memory".
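A rough Python sketch of the loop in (D) follows. The memory layout (a list of `(kind, content)` pairs), the three action names, and the rule-based planner are hypothetical simplifications; in the system the figure depicts, an LLM would presumably fill the planning and thinking roles.

```python
from typing import Callable, List, Tuple

Memory = List[Tuple[str, str]]  # (kind, content) entries; an assumed layout


def plan_action(memory: Memory) -> str:
    """Action Planning: choose the next action from what memory holds."""
    kinds = [kind for kind, _ in memory]
    if "observation" not in kinds:
        return "retrieve"  # action for the external environment
    if "answer" not in kinds:
        return "think"     # action for thinking (internal)
    return "stop"


def think(memory: Memory) -> str:
    """Thinking: derive an answer from the stored observations."""
    observations = [content for kind, content in memory if kind == "observation"]
    return observations[-1]


def run_qa_agent(
    question: str,
    environment: Callable[[str], str],
    max_steps: int = 5,
) -> Memory:
    memory: Memory = [("question", question)]  # the question enters Memory
    for _ in range(max_steps):
        action = plan_action(memory)           # Memory -> Action Planning
        if action == "retrieve":
            # The observation flows from the environment back into Memory.
            memory.append(("observation", environment(question)))
        elif action == "think":
            # The thinking result also flows back into Memory.
            memory.append(("answer", think(memory)))
        else:
            break
    return memory
```

With a stub environment such as `lambda q: "Paris"`, the returned memory holds the question, one observation, and the answer, in that order. The planner decides at every step whether to act internally or externally, rather than following a fixed module order.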

### Detailed Analysis

The diagrams are conceptual flowcharts, not data charts. Therefore, the analysis focuses on the components and their relationships.

* **Diagram (A)** models a classic agent loop in notation familiar from reinforcement learning. The agent's internal state (*S<sub>t</sub>*) is updated by a transition function (*T*) based on observations (*O<sub>t</sub>*) from the environment. A policy (*π*) then plans the next action (*A<sub>t</sub>*) based on the state, aiming to achieve a goal.

* **Diagram (B)** shows a modular, pipeline approach to QA. A question is processed sequentially: first understood, then used to retrieve information from the external environment (physical world, digital world, humans), and finally used to generate an answer, potentially with tool calls. The internal flow is linear, with a separate module for each task.

* **Diagram (C)** represents the simplest QA model: a single LLM takes a question directly and produces an answer, with no explicit external modules or memory.

* **Diagram (D)** integrates the agent concept from (A) with the QA task. The "LLM QA agent" uses a central "Memory" component. It plans actions, which can be internal ("Thinking" for understanding/generation) or external (retrieving/using tools). Observations from the environment update the memory, creating a continuous loop. This architecture is more dynamic and integrated than the traditional pipeline.
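The update cycle described for Diagram (A) can be written compactly. The time indexing, and the choice to pass only the previous state and the latest observation to *T*, are assumptions made here for illustration; the figure itself shows only the arrows, not the function signatures.

$$
A_t = \pi(S_t), \qquad S_{t+1} = T(S_t, O_t)
$$

Here $O_t$ is the observation the environment returns after action $A_t$, and the goal is taken to be part of the initial state.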

### Key Observations

1. **Evolution of Complexity:** The diagrams show a progression from a general agent model (A), to a specialized but rigid QA pipeline (B), to a minimal LLM-only model (C), and finally to a hybrid, agent-based QA system (D) that combines the strengths of (A) and (B).

2. **Role of Memory:** Explicit state appears as a core component only in the agent-based diagrams: "Agent State" in (A) and "Memory" in (D), where it is central to state tracking and planning. In the traditional pipeline (B), state is implicit in the sequential module flow.

3. **Interaction with Environment:** Diagrams (A), (B), and (D) all emphasize interaction with an external environment. (B) and (D) specify this environment includes the physical world, digital world, and humans, accessed via information retrieval and tool use.

4. **Internal vs. External Actions:** Diagram (D) makes a clear distinction between internal cognitive actions ("Action for Thinking") and external, environment-interacting actions ("Action for External Environment"), a nuance not present in the other diagrams.

### Interpretation

This image serves as a conceptual framework for comparing paradigms in AI question answering. It argues visually that moving from a static pipeline (B) or a single LLM call (C) towards an **agent-based architecture (D)**, which adds persistent memory, explicit planning, and both internal and external actions, yields a more flexible system. The agent in (D) can decide at each step *how* to proceed (for example, think further, look something up, or ask a human) rather than following a fixed sequence. Including the classic agent model (A) grounds the LLM-based approach in established AI theory and suggests that state, policy, and environment interaction remain fundamental. The "Obeservation" typo in diagram (D) is a minor artifact that does not affect the conceptual content.
