## Line Charts: Attack Success Rate vs. Ablating Head Numbers

### Overview

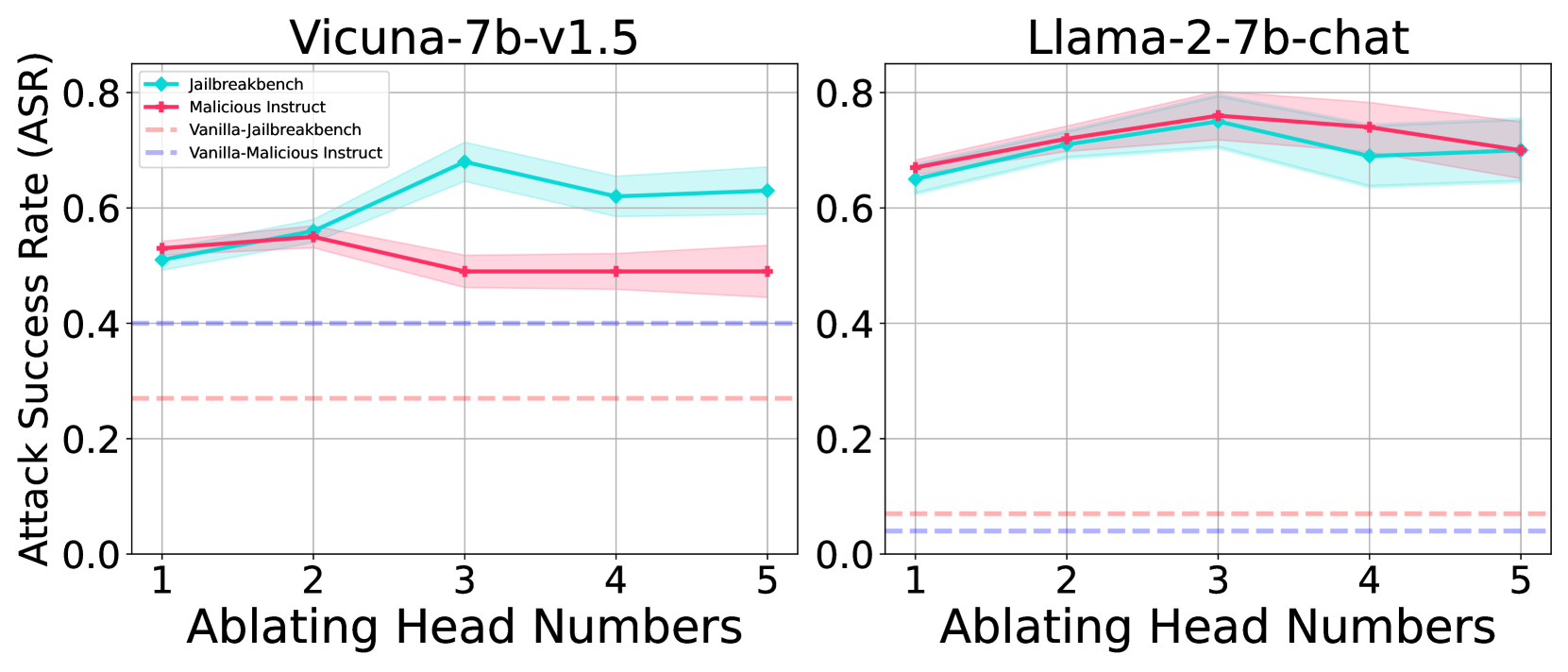

The image contains two side-by-side line charts comparing the Attack Success Rate (ASR) of different attack methods against two large language models (LLMs) as the number of ablated attention heads increases. The left chart is for the model "Vicuna-7b-v1.5," and the right chart is for "Llama-2-7b-chat." Each chart plots four data series, with shaded regions indicating confidence intervals or variance.

### Components/Axes

* **Chart Titles:**

    * Left Chart: `Vicuna-7b-v1.5`

    * Right Chart: `Llama-2-7b-chat`

* **Y-Axis (Both Charts):** Label: `Attack Success Rate (ASR)`. Scale ranges from 0.0 to 0.8, with major ticks at 0.0, 0.2, 0.4, 0.6, and 0.8.

* **X-Axis (Both Charts):** Label: `Ablating Head Numbers`. Discrete values marked at 1, 2, 3, 4, and 5.

* **Legend (Top-Left of each chart):** Contains four entries, consistent across both charts.

    1. `Jailbreakbench`: Cyan solid line with diamond markers (◆).

    2. `Malicious Instruct`: Red solid line with plus markers (+).

    3. `Vanilla-Jailbreakbench`: Pink dashed line.

    4. `Vanilla-Malicious Instruct`: Purple dashed line.

### Detailed Analysis

**Left Chart: Vicuna-7b-v1.5**

* **Jailbreakbench (Cyan, ◆):** Trend: Increases from x=1 to a peak at x=3, then slightly decreases. Points (approximate): (1, ~0.51), (2, ~0.56), (3, ~0.68), (4, ~0.62), (5, ~0.63). Shaded cyan region indicates variance.

* **Malicious Instruct (Red, +):** Trend: Slight increase from x=1 to x=2, then decreases and plateaus. Points (approximate): (1, ~0.53), (2, ~0.55), (3, ~0.49), (4, ~0.49), (5, ~0.49). Shaded red region indicates variance.

* **Vanilla-Jailbreakbench (Pink, dashed):** A flat, horizontal line at approximately ASR = 0.27 across all x-values.

* **Vanilla-Malicious Instruct (Purple, dashed):** A flat, horizontal line at approximately ASR = 0.40 across all x-values.

**Right Chart: Llama-2-7b-chat**

* **Jailbreakbench (Cyan, ◆):** Trend: Increases from x=1 to a peak at x=3, then decreases. Points (approximate): (1, ~0.64), (2, ~0.71), (3, ~0.75), (4, ~0.69), (5, ~0.70). Shaded cyan region indicates variance.

* **Malicious Instruct (Red, +):** Trend: Increases from x=1 to a peak at x=3, then decreases. Points (approximate): (1, ~0.67), (2, ~0.72), (3, ~0.76), (4, ~0.74), (5, ~0.70). Shaded red region indicates variance.

* **Vanilla-Jailbreakbench (Pink, dashed):** A flat, horizontal line at approximately ASR = 0.07 across all x-values.

* **Vanilla-Malicious Instruct (Purple, dashed):** A flat, horizontal line at approximately ASR = 0.04 across all x-values.
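The trends listed above can be checked mechanically. The following is a minimal sketch that assumes the approximate point values read off the charts; the names `ASR`, `peak`, and `is_monotonic` are illustrative and do not come from the figure or its source.

```python
# Approximate ASR values read off the charts (index 0 = 1 ablated head, ..., index 4 = 5).
# These are visual estimates, not exact data.
ASR = {
    "Vicuna-7b-v1.5": {
        "Jailbreakbench": [0.51, 0.56, 0.68, 0.62, 0.63],
        "Malicious Instruct": [0.53, 0.55, 0.49, 0.49, 0.49],
    },
    "Llama-2-7b-chat": {
        "Jailbreakbench": [0.64, 0.71, 0.75, 0.69, 0.70],
        "Malicious Instruct": [0.67, 0.72, 0.76, 0.74, 0.70],
    },
}


def peak(series):
    """Return (number of ablated heads, ASR) at the maximum of a series."""
    best = max(range(len(series)), key=lambda i: series[i])
    return best + 1, series[best]


def is_monotonic(series):
    """True if the series never decreases or never increases."""
    pairs = list(zip(series, series[1:]))
    return all(a <= b for a, b in pairs) or all(a >= b for a, b in pairs)


for model, curves in ASR.items():
    for name, values in curves.items():
        heads, value = peak(values)
        print(f"{model} / {name}: peak {value:.2f} at {heads} heads, "
              f"monotonic={is_monotonic(values)}")
```

With these estimates, three of the four ablated series peak at 3 heads, while Malicious Instruct on Vicuna peaks at 2, and none of the series is monotonic.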

### Key Observations

1. **Model Vulnerability:** Under ablation, Llama-2-7b-chat shows a higher Attack Success Rate (ASR) than Vicuna-7b-v1.5 for both active attack methods (Jailbreakbench and Malicious Instruct), starting at roughly 0.64-0.67 with one ablated head versus roughly 0.51-0.53.

2. **Effect of Ablation:** For both models and both active attack methods, ASR does not increase monotonically with more ablated heads. Three of the four series peak at 3 ablated heads before declining or stabilizing; the exception is `Malicious Instruct` on Vicuna, which peaks at 2 heads and then plateaus near 0.49.

3. **Method Comparison:** On Vicuna, the `Jailbreakbench` method achieves a higher peak ASR (~0.68) than `Malicious Instruct` (~0.55). On Llama, the two methods perform very similarly, with `Malicious Instruct` having a marginally higher peak (~0.76 vs ~0.75).

4. **Vanilla Baselines:** The "Vanilla" (unmodified) attack baselines are constant and significantly lower than the active methods for both models. Notably, the vanilla baselines are much lower for Llama (~0.04-0.07) than for Vicuna (~0.27-0.40).

5. **Variance:** The shaded confidence intervals are wider for the active attack lines, especially around their peaks, indicating greater variability in results at those points. The vanilla baselines show no visible variance.
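The gap between the vanilla baselines and the ablated series in observation 4 can be quantified by subtracting each baseline from the corresponding peak. This is a rough sketch using the approximate values read off the charts; `VANILLA`, `PEAK`, and `uplift` are illustrative names, not part of the figure.

```python
# Approximate vanilla (no ablation) ASR and peak ablated ASR per model and series,
# as estimated visually from the charts.
VANILLA = {
    "Vicuna-7b-v1.5": {"Jailbreakbench": 0.27, "Malicious Instruct": 0.40},
    "Llama-2-7b-chat": {"Jailbreakbench": 0.07, "Malicious Instruct": 0.04},
}
PEAK = {
    "Vicuna-7b-v1.5": {"Jailbreakbench": 0.68, "Malicious Instruct": 0.55},
    "Llama-2-7b-chat": {"Jailbreakbench": 0.75, "Malicious Instruct": 0.76},
}


def uplift(model, series):
    """Absolute ASR gain of the peak ablated value over the vanilla baseline."""
    return round(PEAK[model][series] - VANILLA[model][series], 2)


for model in VANILLA:
    for series in VANILLA[model]:
        print(f"{model} / {series}: +{uplift(model, series):.2f} ASR over vanilla")
```

On these estimates the absolute gain is larger for Llama-2-7b-chat (about 0.68 and 0.72) than for Vicuna-7b-v1.5 (about 0.41 and 0.15), because Llama starts from a much lower vanilla baseline.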

### Interpretation

This data suggests that the security vulnerability of these LLMs, as measured by ASR, has a non-linear relationship with the number of ablated attention heads. Three of the four ablated series peak at 3 heads (the exception, `Malicious Instruct` on Vicuna, peaks at 2), which points to a possible "sweet spot" at which this intervention most compromises the model's safety mechanisms. Given the overlapping shaded regions, the exact location of the peak should be read as approximate.

The stark difference in vanilla baseline ASR between Vicuna (~0.27-0.40) and Llama (~0.04-0.07) implies that Llama-2-7b-chat is considerably more robust to these attack benchmarks in its default state. However, the active attack methods (Jailbreakbench, Malicious Instruct) raise ASR well above the vanilla baselines for both models, and the effect is more pronounced on the initially more robust Llama model: its ASR rises from below 0.1 to roughly 0.64-0.76, whereas Vicuna's rises from ~0.27-0.40 to roughly 0.49-0.68.

The convergence of the two active attack methods' performance on Llama suggests that for this model, the specific attack strategy may matter less than the act of ablating heads itself. In contrast, on Vicuna, the `Jailbreakbench` method appears to be the more potent attack vector at 3 or more ablated heads. The results highlight that model robustness is not a fixed property but can be manipulated through interventions like attention head ablation, with the impact varying considerably between the two models.