# Technical Document Extraction: Expert Redundancy Analysis

## 1. Image Overview

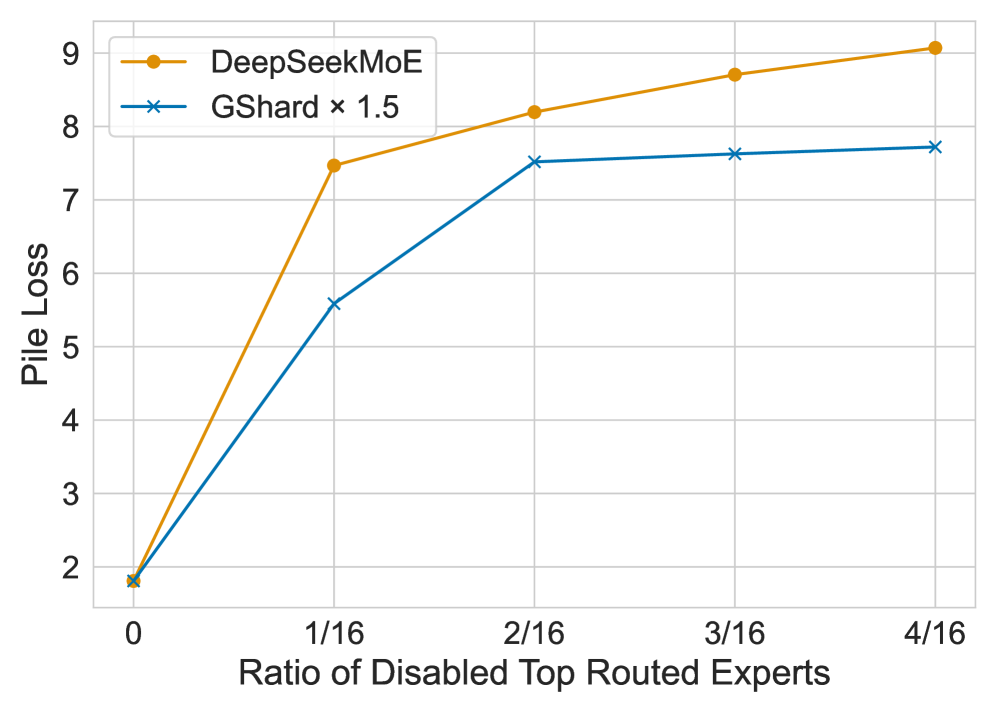

This image is a line graph comparing the robustness of two Mixture-of-Experts (MoE) models, **DeepSeekMoE** and **GShard × 1.5**, as an increasing ratio of their top routed experts is disabled. The chart measures performance degradation via "Pile Loss" (higher is worse).

## 2. Component Isolation

### A. Header / Legend

* **Location:** Top-left quadrant.

* **Series 1:** Orange line with circular markers (●) labeled "**DeepSeekMoE**".

* **Series 2:** Blue line with "x" markers (x) labeled "**GShard × 1.5**".

### B. Main Chart Area (Axes)

* **Y-Axis (Vertical):**

    * **Title:** Pile Loss

    * **Markers:** 2, 3, 4, 5, 6, 7, 8, 9

* **X-Axis (Horizontal):**

    * **Title:** Ratio of Disabled Top Routed Experts

    * **Markers:** 0, 1/16, 2/16, 3/16, 4/16

### C. Grid

* The chart features a light gray orthogonal grid corresponding to the major axis markers.

## 3. Trend Verification and Data Extraction

### Series 1: DeepSeekMoE (Orange Line, Circular Markers)

* **Visual Trend:** The line rises very steeply from 0 to 1/16, then continues upward more gradually through 4/16. This indicates that DeepSeekMoE is highly sensitive to disabling its top routed experts: loss increases sharply even at the lowest disablement ratio.

* **Data Points (Approximate):**

    * **0:** ~1.8

    * **1/16:** ~7.5

    * **2/16:** ~8.2

    * **3/16:** ~8.7

    * **4/16:** ~9.1

### Series 2: GShard × 1.5 (Blue Line, "x" Markers)

* **Visual Trend:** The line shows a steep upward slope from 0 to 2/16, though less aggressive than the orange line. After 2/16, the curve flattens significantly (plateaus), showing very little increase in loss between 2/16 and 4/16.

* **Data Points (Approximate):**

    * **0:** ~1.8

    * **1/16:** ~5.6

    * **2/16:** ~7.5

    * **3/16:** ~7.6

    * **4/16:** ~7.7
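The trend descriptions above can be sanity-checked by computing the increase in loss between consecutive ratios. The following is a minimal sketch, assuming the approximate values read off the chart; the variable and function names (`pile_loss`, `step_increases`) are illustrative and not part of the source.

```python
# Approximate Pile Loss values transcribed from the data points above.
# These are visual estimates from the chart, not exact measurements.
ratio_labels = ["0", "1/16", "2/16", "3/16", "4/16"]
pile_loss = {
    "DeepSeekMoE": [1.8, 7.5, 8.2, 8.7, 9.1],
    "GShard × 1.5": [1.8, 5.6, 7.5, 7.6, 7.7],
}


def step_increases(values):
    """Return the loss increase between each pair of consecutive ratios."""
    return [round(b - a, 2) for a, b in zip(values, values[1:])]


increases = {name: step_increases(vals) for name, vals in pile_loss.items()}

for name, steps in increases.items():
    print(name)
    for start, end, delta in zip(ratio_labels, ratio_labels[1:], steps):
        print(f"  {start} -> {end}: +{delta}")
```

For both series the first step is by far the largest, and the GShard × 1.5 increments shrink to about 0.1 after 2/16, which matches the described plateau.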

## 4. Data Table Reconstruction

| Ratio of Disabled Top Routed Experts | DeepSeekMoE (Pile Loss) | GShard × 1.5 (Pile Loss) |
| :--- | :--- | :--- |
| **0** | ~1.8 | ~1.8 |
| **1/16** | ~7.5 | ~5.6 |
| **2/16** | ~8.2 | ~7.5 |
| **3/16** | ~8.7 | ~7.6 |
| **4/16** | ~9.1 | ~7.7 |

## 5. Technical Summary

The graph shows that both models start from a similar baseline loss (~1.8) when no experts are disabled, but **DeepSeekMoE** degrades far more severely as top routed experts are disabled: its Pile Loss jumps to ~7.5 at a ratio of 1/16 and keeps rising to ~9.1 at 4/16.

**GShard × 1.5** is more resilient under this test: its loss also increases, but it plateaus at roughly 7.5 to 7.7 from 2/16 onward, much earlier than DeepSeekMoE. At the 4/16 ratio, DeepSeekMoE's loss is approximately 1.4 points higher than that of GShard × 1.5 (~9.1 vs. ~7.7). This suggests that GShard × 1.5 may have higher redundancy, or more distributed knowledge, among its routed experts than the DeepSeekMoE model shown.
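The gap quoted in the summary can be reproduced from the reconstructed table. This is a rough sketch, assuming the approximate chart readings; the list names (`deepseekmoe`, `gshard`) and the derived `gaps` values are illustrative and inherit the uncertainty of the visual estimates.

```python
# Approximate Pile Loss values from the reconstructed data table.
ratio_labels = ["0", "1/16", "2/16", "3/16", "4/16"]
deepseekmoe = [1.8, 7.5, 8.2, 8.7, 9.1]
gshard = [1.8, 5.6, 7.5, 7.6, 7.7]

# Gap between the two models at each ratio (positive: DeepSeekMoE is worse).
gaps = [round(d - g, 2) for d, g in zip(deepseekmoe, gshard)]

# Ratio at which the two curves are furthest apart.
widest = ratio_labels[gaps.index(max(gaps))]

for label, gap in zip(ratio_labels, gaps):
    print(f"{label:>4}: DeepSeekMoE - GShard × 1.5 = {gap:+.1f}")
print(f"Widest gap at ratio {widest}")
```

With these readings the gap at 4/16 comes out to 1.4, in line with the summary, while the curves are furthest apart at 1/16.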