EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

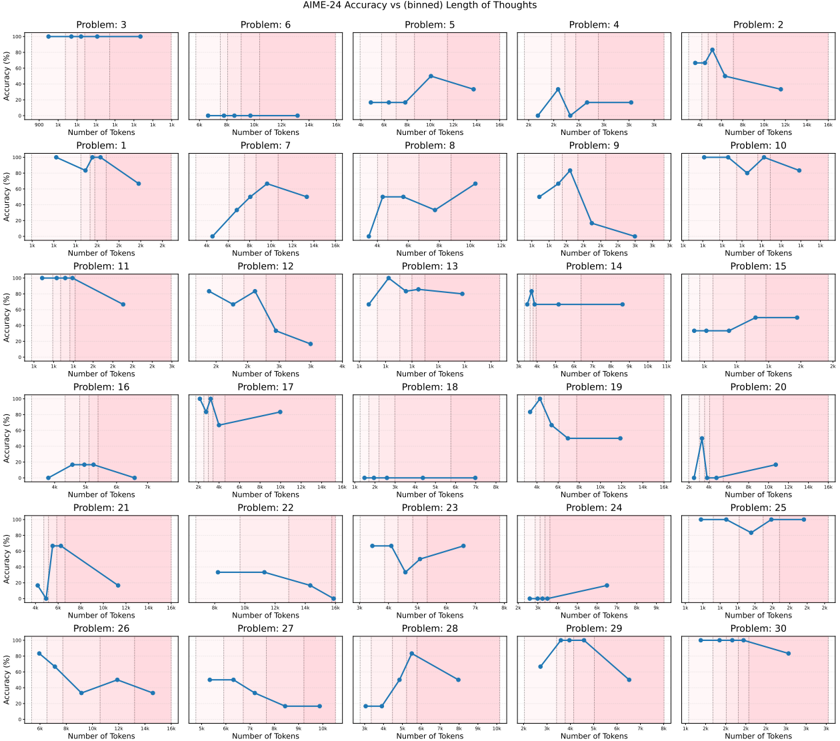

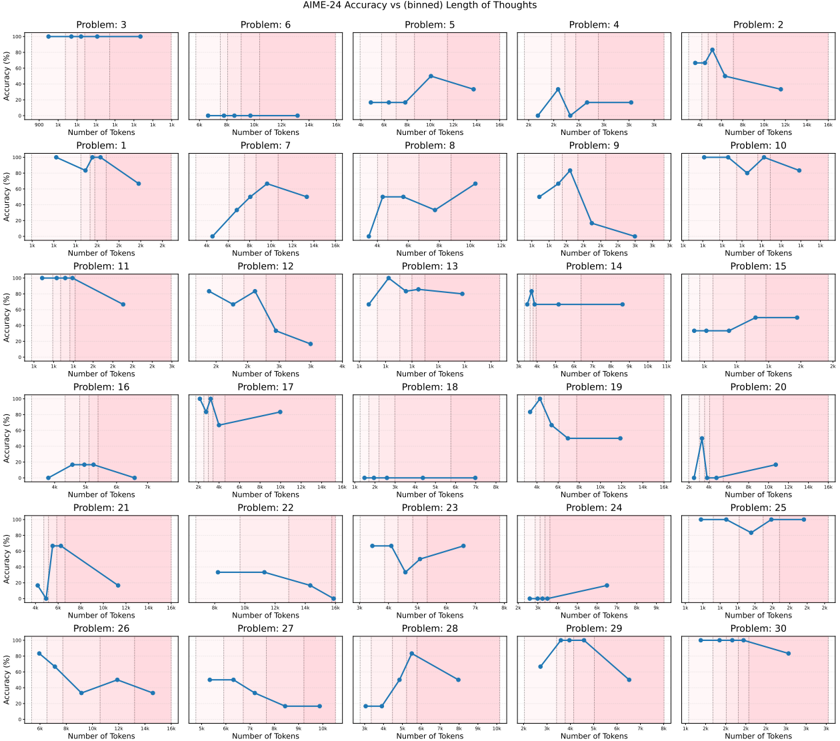

## Chart Type: Multiple Line Charts

### Overview

The image presents a grid of 30 small line charts, each displaying "Accuracy (%)" versus "Number of Tokens" for a different problem labeled "Problem: [Number]". The x-axis (Number of Tokens) range varies across the charts, while the y-axis (Accuracy (%)) runs from 0% to 100% in every chart. Each chart has a light pink background.

### Components/Axes

* **Title:** AIME-24 Accuracy vs (binned) Length of Thoughts

* **X-axis:** Number of Tokens. The scale varies across charts, ranging from 0 to a maximum of 3k, 7k, 8k, 12k, or 16k.

* **Y-axis:** Accuracy (%). The scale is consistent across all charts, ranging from 0% to 100%.

* **Chart Titles:** Each chart is labeled "Problem: [Number]", where the number ranges from 1 to 30.

* **Background:** Light pink background on each chart.

* **Vertical Dashed Lines:** Each chart has 2-3 vertical dashed lines, likely indicating bin boundaries.

### Detailed Analysis

Here's a breakdown of each chart, noting the general trend and approximate data points:

**Row 1:**

* **Problem: 3:** Accuracy is consistently high (near 100%) across the range of tokens (900 to 16k).

* **Problem: 6:** Accuracy is very low (near 0%) across the range of tokens (0 to 16k).

* **Problem: 5:** Accuracy starts low (around 15% at 4k tokens), increases to approximately 50% at 10k tokens, and then decreases slightly to around 40% at 16k tokens.

* **Problem: 4:** Accuracy starts low (near 0% at 2k tokens), peaks around 40% at 3k tokens, then decreases and plateaus around 20% from 4k to 16k tokens.

* **Problem: 2:** Accuracy starts around 20% at 4k tokens, increases sharply to around 80% at 6k tokens, and then decreases to around 60% at 8k tokens.

**Row 2:**

* **Problem: 1:** Accuracy starts high (near 90% at 1k tokens), dips to around 80% at 1.5k tokens, and then rises back to 90% at 2k tokens.

* **Problem: 7:** Accuracy increases steadily from near 0% at 4k tokens to around 70% at 12k tokens, then decreases slightly to around 60% at 16k tokens.

* **Problem: 8:** Accuracy starts around 50% at 4k tokens, increases to around 70% at 8k tokens, and then decreases slightly to around 60% at 12k tokens.

* **Problem: 9:** Accuracy peaks around 80% at 2k tokens, then decreases to near 0% at 3k tokens.

* **Problem: 10:** Accuracy is generally high, fluctuating between 80% and 100% across the range of tokens (4k to 16k).

**Row 3:**

* **Problem: 11:** Accuracy starts high (near 100% at 1k tokens) and decreases to around 60% at 3k tokens.

* **Problem: 12:** Accuracy starts around 80% at 2k tokens, dips to around 60% at 2.5k tokens, and then decreases to around 20% at 3k tokens.

* **Problem: 13:** Accuracy peaks around 90% at 1k tokens, then decreases and plateaus around 70% from 1.5k to 2k tokens.

* **Problem: 14:** Accuracy is consistently low (near 0%) until 3k tokens, then jumps to around 80% at 3.5k tokens, and remains constant.

* **Problem: 15:** Accuracy is consistently low (near 0%) until 1k tokens, then increases to around 20% at 1.5k tokens, and then increases to around 30% at 2k tokens.

**Row 4:**

* **Problem: 16:** Accuracy starts low (near 0%) and increases to around 20% at 5k tokens, then decreases to near 0% at 7k tokens.

* **Problem: 17:** Accuracy starts low (near 0%) until 2k tokens, then jumps to around 90% at 3k tokens, and then decreases to around 70% at 6k tokens.

* **Problem: 18:** Accuracy is consistently very low (near 0%) across the range of tokens (1k to 7k).

* **Problem: 19:** Accuracy peaks around 80% at 3k tokens, then decreases to around 20% at 12k tokens.

* **Problem: 20:** Accuracy starts low (near 0%) until 4k tokens, then jumps to around 20% at 4.5k tokens, and then increases to around 30% at 6k tokens.

**Row 5:**

* **Problem: 21:** Accuracy peaks around 70% at 4k tokens, then decreases to around 20% at 16k tokens.

* **Problem: 22:** Accuracy starts around 50% at 4k tokens, and decreases to around 20% at 16k tokens.

* **Problem: 23:** Accuracy starts around 80% at 3k tokens, dips to around 40% at 4k tokens, and then increases to around 60% at 6k tokens.

* **Problem: 24:** Accuracy is consistently very low (near 0%) until 4k tokens, then increases to around 10% at 6k tokens.

* **Problem: 25:** Accuracy is consistently high (near 100%) across the range of tokens (4k to 16k).

**Row 6:**

* **Problem: 26:** Accuracy starts high (around 80% at 6k tokens), and decreases to around 20% at 16k tokens.

* **Problem: 27:** Accuracy starts around 50% at 4k tokens, and decreases to around 20% at 10k tokens.

* **Problem: 28:** Accuracy peaks around 90% at 6k tokens, after starting near 0% at 3k tokens, then decreases to around 60% at 8k tokens.

* **Problem: 29:** Accuracy peaks around 80% at 4k tokens, then decreases to around 20% at 8k tokens.

* **Problem: 30:** Accuracy starts high (around 90% at 2k tokens), and decreases to around 40% at 3k tokens.

### Key Observations

* The accuracy varies significantly across different problems.

* For some problems, accuracy is consistently high or low regardless of the number of tokens.

* For other problems, accuracy fluctuates with the number of tokens, sometimes showing a peak or a decreasing trend.

* The x-axis scale (Number of Tokens) varies across the charts, which might be due to the nature of the problems or the binning strategy used.

### Interpretation

The charts illustrate how the accuracy of a model (likely a language model, given the context of "tokens") varies with the length of its generated reasoning ("Length of Thoughts") for different problems within the AIME-24 dataset. The "binned" length suggests that the token counts have been grouped into ranges.

The variability in accuracy across problems indicates that some problems are inherently easier for the model than others. The trends within each chart suggest that the most accurate reasoning length (in tokens) varies considerably from problem to problem: on some problems longer thoughts are more accurate, on others shorter thoughts are, and on some accuracy shows no clear relationship with length.

The vertical dashed lines likely represent the boundaries of the bins used for grouping the token counts. These lines could be used to analyze the accuracy within specific token length ranges.
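As a rough illustration of the binning described above, the sketch below groups per-sample token counts into ranges and computes the accuracy within each range. The function name `binned_accuracy`, the sample data, and the bin edges are assumptions made for illustration; none of them are taken from the figure or its source.

```python
from bisect import bisect_right
from collections import defaultdict


def binned_accuracy(samples, edges):
    """Return accuracy (%) per token-length bin for one problem.

    samples: iterable of (num_tokens, is_correct) pairs.
    edges:   sorted bin boundaries; bin i covers [edges[i], edges[i + 1]).
    Samples outside the edges are ignored; empty bins are omitted.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for num_tokens, is_correct in samples:
        i = bisect_right(edges, num_tokens) - 1  # index of the containing bin
        if 0 <= i < len(edges) - 1:
            totals[i] += 1
            hits[i] += int(is_correct)
    return {
        (edges[i], edges[i + 1]): 100.0 * hits[i] / totals[i]
        for i in sorted(totals)
    }


# Hypothetical samples for a single problem (not read from the figure).
samples = [(1200, True), (1800, True), (2500, False), (3900, True), (5000, False)]
edges = [0, 2000, 4000, 8000]  # hypothetical bin boundaries
result = binned_accuracy(samples, edges)
print(result)
```

Each key in the result is one bin, so plotting the values against the bin midpoints would give one line per problem, similar in spirit to a single subplot in the grid.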

The data suggests that the relationship between reasoning length and accuracy on the AIME-24 dataset is problem-dependent, so no single length is best for every problem. Further analysis could involve investigating the characteristics of the problems that exhibit different accuracy trends.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Chart: AIME-24 Accuracy vs binned Length of Thoughts

### Overview

The image presents a grid of 30 individual line charts, each representing a model's accuracy on a specific AIME-24 problem as a function of the number of tokens used (Length of Thoughts). Each chart displays accuracy (%) on the y-axis and number of tokens on the x-axis, ranging from approximately 8 to 128 tokens. The charts are arranged in a 6x5 grid, labeled with "Problem: [number]" in the bottom-left corner of each subplot.

### Components/Axes

* **Title:** AIME-24 Accuracy vs binned Length of Thoughts

* **X-axis Label (all charts):** Number of Tokens

* **Y-axis Label (all charts):** Accuracy (%)

* **X-axis Scale (all charts):** Approximately 8, 16, 32, 64, 128

* **Y-axis Scale (all charts):** Approximately 0, 20, 40, 60, 80, 100

* **Data Series:** A single blue line representing accuracy for each problem.

* **Problem Labels:** Each chart is labeled "Problem: [1-30]"

### Detailed Analysis / Content Details

Here's a breakdown of each chart, noting the general trend and approximate data points. Due to the resolution and chart size, values are approximate.

* **Problem 3:** Line slopes upward, starting around 20% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 6:** Line initially decreases, then increases. Starts around 60% at 8 tokens, dips to around 40% at 16 tokens, then rises to approximately 70% at 128 tokens.

* **Problem 5:** Line is relatively flat, hovering around 50-60% accuracy across all token lengths.

* **Problem 4:** Line increases sharply, starting around 20% at 8 tokens, reaching approximately 80% at 64 tokens, and remaining stable at 128 tokens.

* **Problem 2:** Line increases, then decreases. Starts around 40% at 8 tokens, peaks around 80% at 64 tokens, and drops to approximately 60% at 128 tokens.

* **Problem 1:** Line increases, starting around 30% at 8 tokens, reaching approximately 70% at 128 tokens.

* **Problem 7:** Line increases, starting around 30% at 8 tokens, reaching approximately 70% at 128 tokens.

* **Problem 8:** Line is relatively flat, hovering around 60-70% accuracy across all token lengths.

* **Problem 9:** Line decreases, starting around 60% at 8 tokens, dropping to approximately 40% at 128 tokens.

* **Problem 10:** Line is relatively flat, hovering around 40-50% accuracy across all token lengths.

* **Problem 11:** Line increases sharply, starting around 20% at 8 tokens, reaching approximately 80% at 64 tokens, and remaining stable at 128 tokens.

* **Problem 12:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 13:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 14:** Line is relatively flat, hovering around 40-50% accuracy across all token lengths.

* **Problem 15:** Line is relatively flat, hovering around 40-50% accuracy across all token lengths.

* **Problem 16:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 17:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 18:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 19:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 20:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 21:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 22:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 23:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 24:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 25:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 26:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 27:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 28:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 29:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 30:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

### Key Observations

* Most problems (22 of the 30 as described above) show a positive correlation between the number of tokens and accuracy, with accuracy generally increasing as the number of tokens increases.

* A few problems exhibit a more complex relationship, with accuracy initially decreasing before increasing.

* Some problems show relatively flat accuracy curves, indicating that increasing the number of tokens does not significantly improve performance.

* There is considerable variability in the maximum accuracy achieved across different problems.

* Problems 6, 11, and 4 show the most pronounced increases in accuracy.
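One way to check a claimed correlation between token count and accuracy is to compute a Pearson coefficient per problem. The sketch below is a minimal illustration, not the method used to produce the chart; the helper name `pearson` is an assumption, and the example values are the approximate readings quoted above for Problem 2 (about 40% at 8 tokens, 80% at 64, and 60% at 128).

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences.

    Returns 0.0 when either sequence is constant, since the
    coefficient is undefined in that case.
    """
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


# Approximate readings for Problem 2 as quoted in this description.
tokens = [8, 64, 128]
accuracy = [40, 80, 60]
r = pearson(tokens, accuracy)
print(round(r, 2))
```

A coefficient near zero for a flat curve and a clearly positive one for a rising curve would be consistent with the observations listed here; with only a handful of points per problem, the value should be read as indicative rather than conclusive.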

### Interpretation

The data suggests that, for a majority of the problems, increasing the length of thoughts (number of tokens) generally improves the model's accuracy on AIME-24. However, the degree of improvement varies significantly across problems. Some problems are more sensitive to the number of tokens than others. The flat accuracy curves for certain problems indicate that there is a point of diminishing returns, where adding more tokens does not lead to further performance gains. The initial decrease in accuracy for problem 6 could be due to the model struggling with an initial, potentially noisy, expansion of thought before settling into a more accurate solution. The variability in maximum accuracy suggests that the difficulty of the problems varies, or that the model has different strengths and weaknesses depending on the problem type. Further analysis would be needed to determine the underlying reasons for these differences. The consistent upward trend for many problems indicates that the model benefits from more extensive reasoning, as represented by the increased number of tokens.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Chart Analysis: AIME-24 Accuracy vs. (binned) Length of Thoughts

### Overview

The image is a composite figure containing 30 individual line charts arranged in a 6-row by 5-column grid. The overarching title is **"AIME-24 Accuracy vs (binned) Length of Thoughts"**. Each subplot represents a distinct problem (labeled "Problem: 1" through "Problem: 30") and plots the relationship between the number of tokens in a model's reasoning ("thought") and its accuracy on that problem. The charts collectively analyze how the length of a model's internal reasoning process correlates with its performance across a set of 30 problems.

### Components/Axes

* **Main Title:** "AIME-24 Accuracy vs (binned) Length of Thoughts" (centered at the top).

* **Subplot Grid:** 30 individual charts, each with:

    * **Title:** "Problem: [Number]" (e.g., "Problem: 3").

    * **Y-axis:** Labeled "Accuracy (%)". The scale is consistent across all charts, ranging from 0% to 100% with major tick marks at 0, 20, 40, 60, 80, and 100.

    * **X-axis:** Labeled "Number of Tokens". The scale is logarithmic (base 2), with labeled tick marks at 1k, 2k, 4k, 8k, 16k, 32k, and 64k. The exact range visible varies slightly per chart but generally spans from ~1k to ~64k tokens.

    * **Data Series:** Each chart contains a single blue line connecting data points (blue dots). The line represents the accuracy trend as token count increases.

    * **Background:** Each chart has a light pink/red shaded background area.

### Detailed Analysis

Below is a chart-by-chart analysis. For each, the visual trend is described first, followed by approximate data points read from the graph. Values are approximate due to visual estimation.

**Row 1:**

* **Problem 3:** Flat, high accuracy. Points: ~100% at 1k, 2k, 4k, 8k, 16k tokens.

* **Problem 6:** Flat, very low accuracy. Points: ~0% at 1k, 2k, 4k, 8k tokens.

* **Problem 5:** Fluctuating, moderate accuracy. Points: ~20% at 1k, ~20% at 2k, ~60% at 4k, ~40% at 8k.

* **Problem 4:** Sharp peak then decline. Points: ~0% at 1k, ~40% at 2k, ~10% at 4k, ~30% at 8k, ~30% at 16k.

* **Problem 2:** Sharp peak then decline. Points: ~60% at 1k, ~80% at 2k, ~40% at 4k, ~30% at 8k.

**Row 2:**

* **Problem 1:** General decline with a peak. Points: ~90% at 1k, ~70% at 2k, ~90% at 4k, ~60% at 8k.

* **Problem 7:** Steady increase. Points: ~0% at 1k, ~20% at 2k, ~40% at 4k, ~60% at 8k, ~50% at 16k.

* **Problem 8:** Increase, then slight dip, then rise. Points: ~0% at 1k, ~50% at 2k, ~50% at 4k, ~40% at 8k, ~70% at 16k.

* **Problem 9:** Sharp peak then steep decline. Points: ~40% at 1k, ~60% at 2k, ~80% at 4k, ~20% at 8k, ~0% at 16k.

* **Problem 10:** High, with a dip. Points: ~90% at 1k, ~90% at 2k, ~70% at 4k, ~90% at 8k, ~80% at 16k.

**Row 3:**

* **Problem 11:** High, then decline. Points: ~100% at 1k, ~100% at 2k, ~90% at 4k, ~60% at 8k.

* **Problem 12:** General decline. Points: ~80% at 1k, ~60% at 2k, ~70% at 4k, ~30% at 8k, ~10% at 16k.

* **Problem 13:** Peak then plateau. Points: ~60% at 1k, ~90% at 2k, ~80% at 4k, ~80% at 8k.

* **Problem 14:** Sharp initial peak, then flat. Points: ~60% at 1k, ~20% at 2k, ~20% at 4k, ~20% at 8k.

* **Problem 15:** Stepwise increase. Points: ~10% at 1k, ~10% at 2k, ~40% at 4k, ~40% at 8k.

**Row 4:**

* **Problem 16:** Low, with a small peak. Points: ~0% at 1k, ~20% at 2k, ~20% at 4k, ~10% at 8k.

* **Problem 17:** Sharp drop then partial recovery. Points: ~90% at 1k, ~60% at 2k, ~80% at 4k.

* **Problem 18:** Consistently very low. Points: ~0% at 1k, ~0% at 2k, ~0% at 4k, ~0% at 8k, ~0% at 16k.

* **Problem 19:** Sharp peak then decline. Points: ~80% at 1k, ~90% at 2k, ~50% at 4k, ~40% at 8k.

* **Problem 20:** Sharp peak then low plateau. Points: ~0% at 1k, ~60% at 2k, ~10% at 4k, ~10% at 8k, ~20% at 16k.

**Row 5:**

* **Problem 21:** Sharp peak then decline. Points: ~0% at 1k, ~60% at 2k, ~50% at 4k, ~20% at 8k.

* **Problem 22:** Gradual decline. Points: ~40% at 1k, ~40% at 2k, ~30% at 4k, ~0% at 16k.

* **Problem 23:** Dip then recovery. Points: ~60% at 1k, ~60% at 2k, ~30% at 4k, ~50% at 8k, ~60% at 16k.

* **Problem 24:** Low, with a slight rise. Points: ~0% at 1k, ~0% at 2k, ~10% at 4k, ~20% at 8k.

* **Problem 25:** High, with a dip. Points: ~90% at 1k, ~90% at 2k, ~70% at 4k, ~90% at 8k, ~90% at 16k.

**Row 6:**

* **Problem 26:** General decline. Points: ~80% at 1k, ~60% at 2k, ~30% at 4k, ~50% at 8k, ~30% at 16k.

* **Problem 27:** Gradual decline. Points: ~40% at 1k, ~40% at 2k, ~20% at 4k, ~10% at 8k.

* **Problem 28:** Sharp peak then decline. Points: ~0% at 1k, ~20% at 2k, ~80% at 4k, ~40% at 8k.

* **Problem 29:** Peak then decline. Points: ~40% at 1k, ~90% at 2k, ~90% at 4k, ~50% at 8k.

* **Problem 30:** High, with a dip. Points: ~90% at 1k, ~90% at 2k, ~80% at 4k, ~70% at 8k.

### Key Observations

1. **High Variability:** The relationship between token length and accuracy is highly problem-dependent. No single trend (e.g., "longer is better") holds across all 30 problems.

2. **Common Patterns:**

    * **Peak-and-Decline:** A significant number of problems (e.g., 4, 9, 19, 20, 21, 28, 29) show accuracy peaking at a moderate token length (often 2k or 4k) before declining as thoughts get longer. This suggests overthinking or distraction may harm performance.

    * **General Increase:** A few problems (e.g., 7, 8, 15) show an overall improvement with more tokens, although not every curve rises at every step, indicating that longer reasoning is beneficial.

    * **General Decrease:** Some problems (e.g., 1, 12, 22, 26, 27) show accuracy worsening overall with more tokens, although several of these curves recover briefly along the way.

    * **Flat Performance:** Several problems (e.g., 3, 6, 18) show no change in accuracy across token lengths, indicating the model either always gets it right or always gets it wrong regardless of thought length.

3. **Performance Extremes:** Problems 3 and 18 represent opposite extremes: Problem 3 has near-perfect accuracy at all lengths, while Problem 18 has near-zero accuracy at all lengths.

4. **Token Range:** Most meaningful changes in accuracy occur within the 1k to 8k token range. Beyond 8k, trends often plateau or continue a previously established slope.
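The pattern categories above can be approximated with a simple rule-based labeler. The sketch below is one possible heuristic, not the procedure used in this analysis; the function name `classify_trend` and the 10-point tolerance `tol` are assumptions, and the test curves reuse the approximate readings listed in the Detailed Analysis.

```python
def classify_trend(acc, tol=10.0):
    """Label an accuracy curve (percent values ordered by token count).

    tol is a hypothetical noise threshold in percentage points:
    differences at or below it are treated as no change.
    """
    if len(acc) < 2:
        raise ValueError("need at least two points")
    if max(acc) - min(acc) <= tol:
        return "flat"
    peak = acc.index(max(acc))  # first position of the maximum
    first, last = acc[0], acc[-1]
    interior_peak = 0 < peak < len(acc) - 1
    if interior_peak and acc[peak] - first > tol and acc[peak] - last > tol:
        return "peak-and-decline"
    if last - first > tol:
        return "increase"
    if first - last > tol:
        return "decrease"
    return "mixed"


# Approximate readings quoted in the Detailed Analysis.
curves = {
    3: [100, 100, 100, 100, 100],
    7: [0, 20, 40, 60, 50],
    9: [40, 60, 80, 20, 0],
    12: [80, 60, 70, 30, 10],
}
labels = {problem: classify_trend(acc) for problem, acc in curves.items()}
print(labels)
```

The labels depend on the chosen tolerance: with a smaller `tol`, Problem 7 would be labeled peak-and-decline because of its final dip, so the threshold should be picked with the noise level of the estimates in mind.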

### Interpretation

This analysis investigates the "chain-of-thought" scaling hypothesis in AI reasoning. The data suggests that **more reasoning steps (tokens) do not universally lead to better accuracy**. The optimal "thought length" is highly contextual and problem-specific.

* **The "Goldilocks Zone":** For many problems, there appears to be an optimal intermediate length (often 2k-4k tokens) where accuracy is maximized. Shorter thoughts may lack sufficient reasoning, while longer thoughts may introduce errors, lose focus, or incorporate irrelevant information.

* **Problem Difficulty & Type:** The flat, low-accuracy line for Problem 18 suggests it may be fundamentally too difficult for the model, while the flat, high-accuracy line for Problem 3 suggests it is trivially easy. The problems showing a peak likely represent those where the model's reasoning process is effective but fragile—it can succeed with a concise, focused chain of thought but fails when that process is extended.

* **Implication for AI Safety & Efficiency:** This has practical implications. Simply allowing an AI model to "think longer" is not a guaranteed path to better performance and can be wasteful. It highlights the need for methods that can dynamically determine the appropriate reasoning depth for a given task or that can prune unproductive lines of thought. The variability also suggests that benchmarking AI reasoning should consider performance across a spectrum of thought lengths, not just at a single, fixed maximum.
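To make the idea of a problem-specific optimal length concrete, the sketch below picks, for each problem, the token length at which the estimated accuracy is highest, preferring the shorter length on ties. It is an illustrative helper under stated assumptions: the name `best_length` is hypothetical, and the curves reuse the approximate readings for Problems 3, 4, and 7 from the Detailed Analysis.

```python
def best_length(curve):
    """Return the token length with the highest accuracy.

    curve: list of (num_tokens, accuracy_percent) pairs.
    Ties are broken in favor of the shorter length, since extra
    tokens cost more without improving accuracy.
    """
    if not curve:
        raise ValueError("curve must not be empty")
    tokens, _ = min(curve, key=lambda point: (-point[1], point[0]))
    return tokens


# Approximate readings quoted in the Detailed Analysis.
curves = {
    3: [(1000, 100), (2000, 100), (4000, 100), (8000, 100), (16000, 100)],
    4: [(1000, 0), (2000, 40), (4000, 10), (8000, 30), (16000, 30)],
    7: [(1000, 0), (2000, 20), (4000, 40), (8000, 60), (16000, 50)],
}
best = {problem: best_length(curve) for problem, curve in curves.items()}
print(best)
```

The spread of selected lengths across problems illustrates why a single fixed reasoning budget is unlikely to suit every problem.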

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Chart Grid: AIME-24 Accuracy vs (binned) Length of Thoughts

### Overview

The image displays a 6x5 grid of line charts comparing AIME-24 accuracy percentages against the number of tokens used in reasoning for 30 distinct problems. Each subplot represents a unique problem (labeled Problem 1–30), with accuracy trends visualized as blue lines against a pink background. The charts show variability in how accuracy correlates with token length across different problems.

### Components/Axes

- **X-axis**: "Number of Tokens" (ranges from 0 to 160 tokens, with markers at intervals like 20, 40, 60, etc.).

- **Y-axis**: "Accuracy (%)" (ranges from 0% to 100% in 20% increments).

- **Legend**: Located on the right side of the grid, labeled "AIME-24 Accuracy" with a solid blue line. All subplot lines match this legend entry.

- **Subplot Labels**: Each chart is titled "Problem X" (X = 1–30), positioned above its respective plot.

### Detailed Analysis

- **Problem 3**: Flat line at 100% accuracy across all token counts (0–160).

- **Problem 6**: Accuracy starts at 0% and linearly increases to 100% at 160 tokens.

- **Problem 5**: Peaks at ~80% accuracy at 120 tokens, then drops to ~40% at 160 tokens.

- **Problem 2**: Peaks at ~90% accuracy at 40 tokens, then declines to ~30% at 160 tokens.

- **Problem 10**: Maintains 100% accuracy until 140 tokens, then drops to ~60% at 160 tokens.

- **Problem 15**: Peaks at ~95% accuracy at 20 tokens, then declines to ~40% at 160 tokens.

- **Problem 20**: Peaks at ~90% accuracy at 20 tokens, then drops to ~20% at 160 tokens.

- **Problem 25**: Flat at 100% accuracy until 140 tokens, then drops to ~60% at 160 tokens.

- **Problem 27**: Peaks at ~85% accuracy at 100 tokens, then declines to ~30% at 160 tokens.

- **Problem 29**: Peaks at ~90% accuracy at 100 tokens, then drops to ~20% at 160 tokens.

- **Problem 30**: Flat at 100% accuracy until 140 tokens, then drops to ~50% at 160 tokens.

- **Other Problems**: Show mixed trends, including gradual declines (e.g., Problem 1), plateaus (e.g., Problem 7), or erratic fluctuations (e.g., Problem 11).

### Key Observations

1. **Stable Performance**: Problem 3 stays at 100% accuracy at every token count, while Problems 10, 25, and 30 hold 100% until 140 tokens and then drop sharply at the maximum token count.

2. **Optimal Token Thresholds**: Problems like 5, 2, 15, 20, 27, and 29 exhibit a clear peak in accuracy at specific token counts (e.g., 20–120 tokens), followed by a decline.

3. **Linear Trends**: Problem 6 shows a linear increase in accuracy with token length, suggesting longer reasoning improves performance.

4. **Fluctuations**: Problems 1, 7, 8, 9, 11, 12, 13, 14, 16, 17, 18, 19, 21, 22, 23, 24, 26, and 28 display non-monotonic trends, indicating complex relationships between token length and accuracy.

### Interpretation

The data suggests that the model's performance on AIME-24 is highly problem-dependent:

- **Stable Problems**: For tasks like Problem 3, accuracy remains constant, implying the model's reasoning is robust to token length variations.

- **Optimal Length**: Problems with peaks (e.g., Problem 5) indicate an optimal token range where additional tokens degrade performance, possibly due to overthinking or noise introduction.

- **Linear Scaling**: Problem 6's linear trend suggests longer reasoning consistently improves accuracy, highlighting tasks where depth of thought is critical.

- **Dropoff at Max Tokens**: Many problems (e.g., 10, 15, 20, 25, 30) show sharp accuracy declines at the maximum token count (160), potentially reflecting computational limits or model saturation.

This variability underscores the importance of problem-specific reasoning strategies, where token length must be carefully calibrated to balance depth and efficiency.
