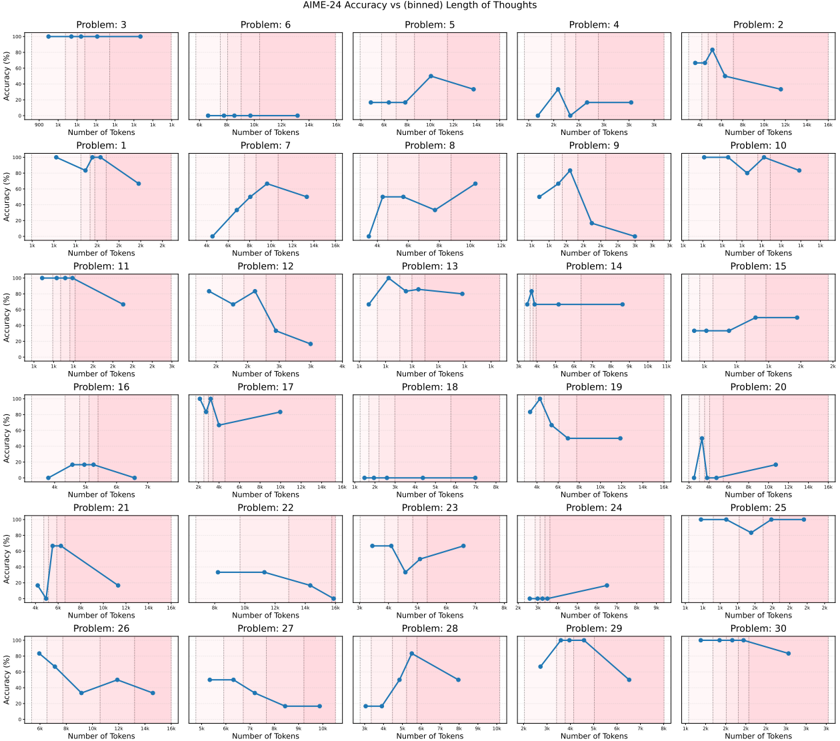

## Chart: AIME-24 Accuracy vs binned Length of Thoughts

### Overview

The image presents a grid of 30 line charts, one per AIME-24 problem, each plotting accuracy as a function of the binned number of tokens used (Length of Thoughts). Each chart displays accuracy (%) on the y-axis and number of tokens on the x-axis, ranging from approximately 8 to 128 tokens. The charts are arranged in a 6x5 grid, and each subplot is labeled "Problem: [number]" in its bottom-left corner.

### Components/Axes

* **Title:** AIME-24 Accuracy vs binned Length of Thoughts

* **X-axis Label (all charts):** Number of Tokens

* **Y-axis Label (all charts):** Accuracy (%)

* **X-axis Scale (all charts):** Approximately 8, 16, 32, 64, 128

* **Y-axis Scale (all charts):** Approximately 0, 20, 40, 60, 80, 100

* **Data Series:** A single blue line representing accuracy for each problem.

* **Problem Labels:** Each chart is labeled "Problem: [1-30]"
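
The figure does not say how the bins were built. As a minimal sketch, assuming each sampled response is recorded as a token count plus a correctness flag, and assuming the axis ticks (8, 16, 32, 64, 128) act as upper bin edges, per-bin accuracy for one problem could be computed as follows. The names `bin_edge` and `accuracy_by_bin` are hypothetical and do not come from the source.

```python
from collections import defaultdict

# Assumed upper bin edges, taken from the x-axis ticks described above.
DEFAULT_EDGES = (8, 16, 32, 64, 128)

def bin_edge(num_tokens, edges=DEFAULT_EDGES):
    """Return the smallest edge >= num_tokens; longer responses fall in the last bin."""
    for edge in edges:
        if num_tokens <= edge:
            return edge
    return edges[-1]

def accuracy_by_bin(samples, edges=DEFAULT_EDGES):
    """Compute accuracy (%) per length bin for one problem.

    samples: iterable of (num_tokens, is_correct) pairs.
    Returns {edge: accuracy_percent} for bins that contain at least one sample.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for num_tokens, is_correct in samples:
        edge = bin_edge(num_tokens, edges)
        totals[edge] += 1
        correct[edge] += int(is_correct)
    return {edge: 100.0 * correct[edge] / totals[edge] for edge in sorted(totals)}

# Made-up samples for illustration only; these are not data from the figure.
example_samples = [(5, False), (7, True), (12, True), (30, False), (100, True), (120, True)]
example_result = accuracy_by_bin(example_samples)
print(example_result)  # {8: 50.0, 16: 100.0, 32: 0.0, 128: 100.0}
```

Bins with no samples are omitted rather than reported as 0%, so an empty bin does not show up as a false drop in the curve.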

### Detailed Analysis / Content Details

The breakdown below notes the general trend of each chart. Because of the image resolution and small chart size, all values are approximate.

* **Problem 3:** Line slopes upward, starting around 20% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 6:** Line initially decreases, then increases. Starts around 60% at 8 tokens, dips to around 40% at 16 tokens, then rises to approximately 70% at 128 tokens.

* **Problem 5:** Line is relatively flat, hovering around 50-60% accuracy across all token lengths.

* **Problem 4:** Line increases sharply, starting around 20% at 8 tokens, reaching approximately 80% at 64 tokens, and remaining stable at 128 tokens.

* **Problem 2:** Line increases, then decreases. Starts around 40% at 8 tokens, peaks around 80% at 64 tokens, and drops to approximately 60% at 128 tokens.

* **Problem 1:** Line increases, starting around 30% at 8 tokens, reaching approximately 70% at 128 tokens.

* **Problem 7:** Line increases, starting around 30% at 8 tokens, reaching approximately 70% at 128 tokens.

* **Problem 8:** Line is relatively flat, hovering around 60-70% accuracy across all token lengths.

* **Problem 9:** Line decreases, starting around 60% at 8 tokens, dropping to approximately 40% at 128 tokens.

* **Problem 10:** Line is relatively flat, hovering around 40-50% accuracy across all token lengths.

* **Problem 11:** Line increases sharply, starting around 20% at 8 tokens, reaching approximately 80% at 64 tokens, and remaining stable at 128 tokens.

* **Problem 12:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 13:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 14:** Line is relatively flat, hovering around 40-50% accuracy across all token lengths.

* **Problem 15:** Line is relatively flat, hovering around 40-50% accuracy across all token lengths.

* **Problem 16:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 17:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 18:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 19:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 20:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 21:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 22:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 23:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 24:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 25:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 26:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 27:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 28:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 29:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

* **Problem 30:** Line increases, starting around 30% at 8 tokens, reaching approximately 60% at 128 tokens.

### Key Observations

* Most problems (22 of the 30 in the breakdown above) show a positive correlation between the number of tokens and accuracy, with accuracy generally increasing as the number of tokens increases.

* Two problems exhibit a non-monotonic relationship: Problem 6 dips at 16 tokens before rising, and Problem 2 peaks at 64 tokens before dropping. Problem 9 is the only one that declines overall.

* Some problems show relatively flat accuracy curves, indicating that increasing the number of tokens does not significantly improve performance.

* There is considerable variability in the maximum accuracy achieved across different problems.

* Problems 4 and 11 show the most pronounced increases in accuracy, rising from around 20% to approximately 80%.
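
The trend labels used in these observations can be made explicit with a small rule. The sketch below is an illustrative assumption, not the method used to produce the descriptions: it labels a curve from its approximate accuracy values, with a hypothetical `tolerance` of 10 percentage points to absorb read-off error. The sample curves are the approximate values quoted in the breakdown for a few problems.

```python
def classify_trend(points, tolerance=10.0):
    """Label a curve given accuracy values ordered by increasing token count.

    tolerance is an assumed threshold in percentage points.
    """
    start, end = points[0], points[-1]
    lowest, highest = min(points), max(points)
    # An interior peak or dip that clearly exceeds both endpoints.
    if highest > max(start, end) + tolerance or lowest < min(start, end) - tolerance:
        return "non-monotonic"
    # Endpoints within tolerance of each other and no interior excursion.
    if abs(end - start) <= tolerance:
        return "flat"
    return "increasing" if end > start else "decreasing"

# Approximate values read from the breakdown (illustrative subset).
curves = {
    3: [20, 60],      # rises from about 20% to about 60%
    4: [20, 80, 80],  # rises to about 80% at 64 tokens, then stable
    2: [40, 80, 60],  # peaks at 64 tokens, then drops
    6: [60, 40, 70],  # dips at 16 tokens, then rises
    5: [55, 55],      # flat near 50-60%, encoded here as its midpoint
    9: [60, 40],      # declines
}

labels = {problem: classify_trend(values) for problem, values in curves.items()}
print(labels)
```

Because the values are eyeballed from small subplots, the labels are only as reliable as the read-off; a larger `tolerance` moves borderline curves into the "flat" group.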

### Interpretation

The data suggests that, for a majority of the problems, increasing the length of thoughts (number of tokens) generally improves the model's accuracy on AIME-24. However, the degree of improvement varies significantly across problems: some are far more sensitive to the number of tokens than others. The flat curves mark problems where additional tokens yield little gain, and the plateaus after 64 tokens in Problems 4 and 11 point to diminishing returns. The initial decrease in accuracy for Problem 6 could be due to the model struggling with an initial, potentially noisy, expansion of thought before settling into a more accurate solution. The variability in maximum accuracy suggests that the problems differ in difficulty, or that the model has different strengths and weaknesses depending on the problem type. Further analysis would be needed to determine the underlying reasons for these differences. The consistent upward trend for many problems indicates that the model benefits from more extensive reasoning, as represented by the increased number of tokens.