## Diagram: Distributed Parameter Server Architecture

### Overview

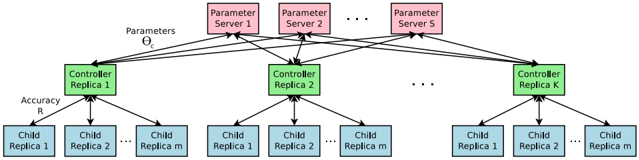

The image is a technical diagram of a hierarchical, distributed system architecture, likely for machine learning model training or parameter management. It depicts a three-tiered structure in which parameters (Θ) flow downward and accuracy feedback (R) flows upward, with replicated components at every level.

### Components

The diagram is organized into three distinct horizontal layers, each containing replicated components:

1. **Top Layer (Pink Boxes): Parameter Servers**

   * **Labels:** "Parameter Server 1", "Parameter Server 2", ..., "Parameter Server S".

   * **Function:** These are the source of model parameters, denoted by the label "Parameters Θ" (Greek letter Theta) on the arrows originating from this layer.

   * **Spatial Placement:** Positioned at the top of the diagram, spanning horizontally.

2. **Middle Layer (Green Boxes): Controller Replicas**

   * **Labels:** "Controller Replica 1", "Controller Replica 2", ..., "Controller Replica K".

   * **Function:** These act as intermediaries. They receive parameters (Θ) from the Parameter Servers and distribute work to the layer below. They also receive feedback, labeled "Accuracy R", from the layer below.

   * **Spatial Placement:** Centered horizontally in the diagram, below the Parameter Servers.

3. **Bottom Layer (Blue Boxes): Child Replicas**

   * **Labels:** Organized in groups under each Controller Replica. Each group contains: "Child Replica 1", "Child Replica 2", ..., "Child Replica m".

   * **Function:** These are the worker units that perform the core computation (e.g., training on data shards). They receive parameters from their parent Controller Replica and send back an "Accuracy R" metric.

   * **Spatial Placement:** Arranged in clusters at the bottom of the diagram, with each cluster directly below its parent Controller Replica.

**Flow and Connections:**

* **Downward Flow (Black Arrows):** "Parameters Θ" flow from the Parameter Servers to all Controller Replicas. From each Controller Replica, parameters are then distributed to all of its assigned Child Replicas.

* **Upward Flow (Black Arrows):** "Accuracy R" (a performance metric) flows from each Child Replica back up to its parent Controller Replica. The diagram implies this feedback is aggregated at the controller level, though the final aggregation path to the Parameter Servers is not explicitly drawn.
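The two flows described above can be sketched as a minimal in-process simulation. This is an illustrative sketch only, not the system the diagram was drawn from: the class names mirror the diagram labels, but the sharding of Θ across servers, the `evaluate` callback, the mean aggregation at the controller, and the toy accuracy function are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable, Dict, List

Params = Dict[str, float]  # stand-in for the parameters Θ


@dataclass
class ParameterServer:
    name: str
    shard: Params  # assumption: each server holds one shard of Θ


@dataclass
class ChildReplica:
    name: str
    evaluate: Callable[[Params], float]  # hypothetical: returns "Accuracy R"

    def run(self, theta: Params) -> float:
        return self.evaluate(theta)


@dataclass
class ControllerReplica:
    name: str
    children: List[ChildReplica] = field(default_factory=list)

    def step(self, theta: Params) -> float:
        # Downward flow: hand Θ to every child of this controller.
        accuracies = [child.run(theta) for child in self.children]
        # Upward flow: aggregate "Accuracy R" (mean is an assumption).
        return mean(accuracies)


def gather_theta(servers: List[ParameterServer]) -> Params:
    """Assemble the full Θ from all server shards."""
    theta: Params = {}
    for server in servers:
        theta.update(server.shard)
    return theta


def run_round(servers: List[ParameterServer],
              controllers: List[ControllerReplica]) -> Dict[str, float]:
    """One round: servers -> controllers -> children, then accuracies back up."""
    theta = gather_theta(servers)
    return {c.name: c.step(theta) for c in controllers}


# Toy usage with S = 2 servers, K = 2 controllers, m = 3 children each.
def toy_eval(theta: Params) -> float:
    return min(1.0, sum(theta.values()) / 4.0)


servers = [
    ParameterServer("Parameter Server 1", {"w0": 0.5}),
    ParameterServer("Parameter Server 2", {"w1": 1.5}),
]
controllers = [
    ControllerReplica(
        f"Controller Replica {k}",
        [ChildReplica(f"Child Replica {i}", toy_eval) for i in range(1, 4)],
    )
    for k in range(1, 3)
]
feedback = run_round(servers, controllers)
```

The sketch stops at the controller level on purpose, since the diagram does not draw how the aggregated accuracy reaches the Parameter Servers.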

### Detailed Analysis

* **Scalability Notation:** The ellipses ("...") in each layer indicate an arbitrary number of replicas: S servers, K controllers, and m children per controller. This suggests the system is meant to scale horizontally by adding replicas at any tier.

* **Hierarchy and Fan-Out:** The architecture shows a fan-out pattern. Each Parameter Server connects to multiple Controllers (K connections per server), so the top tier is many-to-many rather than a strict tree. Below that, the structure is tree-like: each Controller connects only to its own m Child Replicas. This suggests a divide-and-conquer strategy for distributed processing.

* **Color Coding:** A consistent color scheme is used to differentiate component types:

  * **Pink:** Parameter Servers (source of truth for model state).

  * **Green:** Controller Replicas (orchestration and coordination).

  * **Blue:** Child Replicas (execution and computation).
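To make the fan-out concrete, the number of links implied by S, K, and m can be counted directly. The helper below is a hypothetical illustration; it assumes every Parameter Server is connected to every Controller Replica and every Controller Replica has exactly m children, which is how the diagram reads but is not stated as a requirement.

```python
from typing import Dict


def link_counts(S: int, K: int, m: int) -> Dict[str, int]:
    """Count links in the three-tier layout (assumes full server-controller connectivity)."""
    if min(S, K, m) < 1:
        raise ValueError("S, K and m must all be at least 1")
    server_controller_links = S * K   # every server feeds every controller
    controller_child_links = K * m    # each controller feeds its own m children
    return {
        "server_controller_links": server_controller_links,
        "controller_child_links": controller_child_links,
        "child_replicas": K * m,
        "total_links": server_controller_links + controller_child_links,
    }


counts = link_counts(S=2, K=3, m=4)
```

Under these assumptions, adding a controller costs S + m new links, while adding a child to one controller costs a single link, so the bottom tier is the cheapest place to add capacity.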

### Key Observations

1. **Bidirectional Communication:** The system is not merely a broadcast tree. The upward flow of "Accuracy R" is critical, indicating a closed-loop system where performance feedback is essential, likely for synchronization, averaging, or updating parameters.

2. **Replication for Fault Tolerance & Performance:** Every component type is replicated (S, K, m). Replication of this kind is commonly used to increase throughput through parallel processing and can also provide fault tolerance (if one replica fails, others can continue), although the diagram itself shows no failover mechanism.

3. **Clear Separation of Concerns:** The architecture cleanly separates the roles of parameter storage (Servers), coordination (Controllers), and computation (Children).

### Interpretation

This diagram represents a **Parameter Server architecture for distributed machine learning**, a common pattern in large-scale training.

* **What it demonstrates:** It visualizes how model parameters (Θ) can be served to many parallel workers (Child Replicas) through an intermediary coordination layer (Controllers). The workers likely compute on their own data partitions and report back a performance metric (Accuracy R), which is presumably used to update the global parameters iteratively. Because R is a scalar accuracy rather than a gradient, such an update would have to treat R as a score or reward signal; the diagram does not show the update rule itself.

* **Relationships:** The Parameter Servers are the authoritative source. The Controllers are managers that reduce the communication burden on the servers by handling a subset of workers. The Child Replicas are the workhorses. The flow shows a **downstream data/model distribution** and an **upstream metrics aggregation** pattern.

* **Notable Implications:**

  * **Bottleneck Potential:** The Parameter Servers could become a communication bottleneck if not properly scaled (S is a key parameter).

  * **Synchronization Challenge:** The architecture implies a need for a synchronization protocol (e.g., synchronous vs. asynchronous updates) to manage the parameters Θ and accuracy R across all replicas consistently.

  * **Scalability Design:** The explicit use of variables (S, K, m) highlights that this is a scalable template. The actual system's performance would depend on the chosen values for these parameters and the network topology connecting them.
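The synchronous versus asynchronous distinction mentioned above can be illustrated with a toy parameter store. This is a sketch under stated assumptions, not a description of the depicted system: `ParameterStore`, the scalar stand-in for Θ, and the per-controller `deltas` are all hypothetical, and the diagram does not say how an update would be derived from Accuracy R.

```python
from typing import List


class ParameterStore:
    """Hypothetical stand-in for the Parameter Servers, holding a scalar Θ."""

    def __init__(self, theta: float) -> None:
        self.theta = theta
        self.version = 0  # incremented on every applied update

    def apply(self, delta: float) -> None:
        self.theta += delta
        self.version += 1


def synchronous_round(store: ParameterStore, deltas: List[float]) -> None:
    # Wait for every controller, then apply one averaged update.
    store.apply(sum(deltas) / len(deltas))


def asynchronous_round(store: ParameterStore, deltas: List[float]) -> None:
    # Apply each controller's update as soon as it arrives.
    for delta in deltas:
        store.apply(delta)


deltas = [0.25, 0.5, 0.75]  # placeholder updates from K = 3 controllers

sync_store = ParameterStore(theta=0.0)
synchronous_round(sync_store, deltas)

async_store = ParameterStore(theta=0.0)
asynchronous_round(async_store, deltas)
```

In the synchronous variant every controller sees the same version of Θ within a round, at the cost of waiting for the slowest replica. In the asynchronous variant the version advances K times per round, so a slow controller may compute against parameters that are already stale.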

**Language Declaration:** All text in the image is in English. The mathematical symbol "Θ" (Theta) is used as a standard notation for model parameters.