## Heatmap: AUROC for Projections a^Tt

### Overview

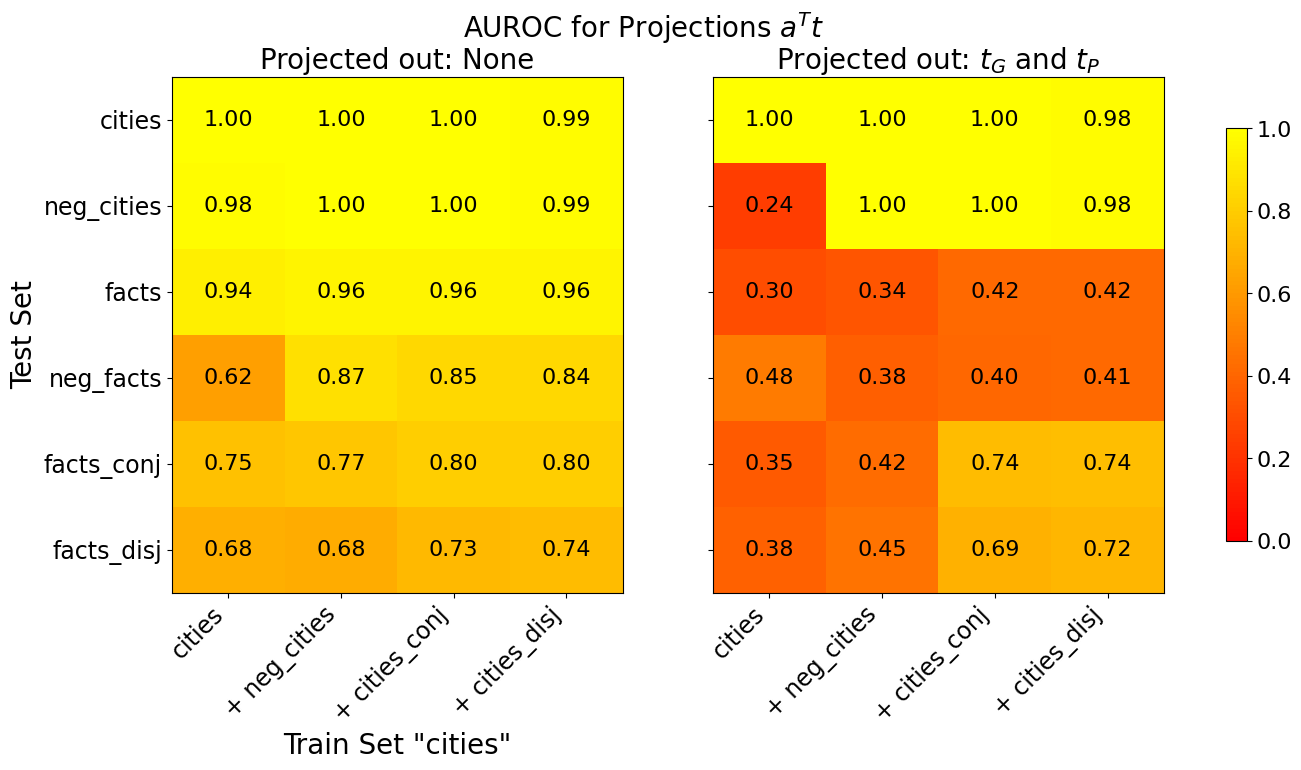

The image presents two heatmaps of Area Under the Receiver Operating Characteristic Curve (AUROC) values for projections a^Tt. The left heatmap shows the values when nothing is projected out ("Projected out: None"); the right heatmap shows the values after tG and tP are projected out ("Projected out: tG and tP"). Each column is a training set that starts with "cities" and adds further datasets (marked with "+"): "neg_cities", "cities_conj", and "cities_disj". Each row is a test set: "cities", "neg_cities", "facts", "neg_facts", "facts_conj", or "facts_disj". Cell color encodes the AUROC value, from yellow (close to 1.0) to red (close to 0.0).
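To make the quantities in the figure concrete, here is a minimal Python sketch of scoring by a projection a^Tt, projecting directions out, and computing AUROC. It assumes that `a` is a feature vector and `t` a direction, which the figure title suggests but this description does not define. The helper names and the toy data are hypothetical, and the sketch removes the directions from the vectors `a`, which may differ from the procedure behind the figure.

```python
from typing import List, Sequence


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(u, v))


def project_out(a: Sequence[float], directions: Sequence[Sequence[float]]) -> List[float]:
    """Remove from `a` its component in the span of `directions`.

    The directions are orthonormalised with Gram-Schmidt first, so they
    need not be orthogonal or of unit length.
    """
    basis: List[List[float]] = []
    for d in directions:
        r = list(d)
        for b in basis:
            c = dot(r, b)
            r = [x - c * y for x, y in zip(r, b)]
        norm = dot(r, r) ** 0.5
        if norm > 1e-12:  # skip directions already covered by the basis
            basis.append([x / norm for x in r])
    out = list(a)
    for b in basis:
        c = dot(out, b)
        out = [x - c * y for x, y in zip(out, b)]
    return out


def auroc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Probability that a random positive outscores a random negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy data (hypothetical): the first coordinate carries the label.
vectors = [[2.0, 0.1], [1.5, -0.3], [-1.0, 0.2], [-2.0, 0.4]]
labels = [1, 1, 0, 0]
t = [1.0, 0.0]

scores = [dot(a, t) for a in vectors]
auroc_none = auroc(scores, labels)

# Project out the informative direction, then score along t again.
removed = [project_out(a, [[1.0, 0.0]]) for a in vectors]
auroc_removed = auroc([dot(a, t) for a in removed], labels)

print(auroc_none, auroc_removed)
```

In this toy case the projection removes the only informative direction, so every score ties and the AUROC drops from 1.0 to the chance level of 0.5.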

### Components/Axes

* **Title:** AUROC for Projections a^Tt

* **X-axis (Train Set "cities"):**
    * cities
    * \+ neg\_cities
    * \+ cities\_conj
    * \+ cities\_disj

* **Y-axis (Test Set):**
    * cities
    * neg\_cities
    * facts
    * neg\_facts
    * facts\_conj
    * facts\_disj

* **Heatmap 1 Title:** Projected out: None

* **Heatmap 2 Title:** Projected out: tG and tP

* **Colorbar:**
    * 1.0 (Yellow)
    * 0.8
    * 0.6
    * 0.4
    * 0.2
    * 0.0 (Red)

### Detailed Analysis

#### Heatmap 1: Projected out: None

| Test Set | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 1.00 | 1.00 | 0.99 |
| neg\_cities | 0.98 | 1.00 | 1.00 | 0.99 |
| facts | 0.94 | 0.96 | 0.96 | 0.96 |
| neg\_facts | 0.62 | 0.87 | 0.85 | 0.84 |
| facts\_conj | 0.75 | 0.77 | 0.80 | 0.80 |
| facts\_disj | 0.68 | 0.68 | 0.73 | 0.74 |

* **cities:** The AUROC values are consistently high (>=0.99) across all training sets.

* **neg\_cities:** The AUROC values are also consistently high (>=0.98) across all training sets.

* **facts:** The AUROC values are high (>=0.94) across all training sets.

* **neg\_facts:** The AUROC value is the lowest in the heatmap (0.62) when trained on "cities" alone and rises to between 0.84 and 0.87 once further training sets are added.

* **facts\_conj:** The AUROC values range from 0.75 to 0.80.

* **facts\_disj:** The AUROC values range from 0.68 to 0.74.

#### Heatmap 2: Projected out: tG and tP

| Test Set | cities | + neg\_cities | + cities\_conj | + cities\_disj |
| :---------- | :----- | :------------ | :------------- | :------------- |
| cities | 1.00 | 1.00 | 1.00 | 0.98 |
| neg\_cities | 0.24 | 1.00 | 1.00 | 0.98 |
| facts | 0.30 | 0.34 | 0.42 | 0.42 |
| neg\_facts | 0.48 | 0.38 | 0.40 | 0.41 |
| facts\_conj | 0.35 | 0.42 | 0.74 | 0.74 |
| facts\_disj | 0.38 | 0.45 | 0.69 | 0.72 |

* **cities:** The AUROC values are high (>=0.98) across all training sets.

* **neg\_cities:** The AUROC value is very low (0.24) when trained on "cities" alone, but high (>=0.98) when trained on other sets.

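* **facts:** The AUROC values are low, ranging from 0.30 to 0.42, and stay below the chance level of 0.5 for every training set.

* **neg\_facts:** The AUROC values are low, ranging from 0.38 to 0.48, and also stay below 0.5 for every training set.

* **facts\_conj:** The AUROC values are 0.35 and 0.42 for the first two training sets, then rise to 0.74 once "cities\_conj" is added.

* **facts\_disj:** The AUROC values are 0.38 and 0.45 for the first two training sets, then rise to 0.69 and 0.72 once "cities\_conj" and "cities\_disj" are added.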
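The per-cell change between the two heatmaps can be computed directly from the values transcribed above. The following Python sketch does so; the variable and function names are illustrative and do not come from the source.

```python
train_sets = ["cities", "+ neg_cities", "+ cities_conj", "+ cities_disj"]

# AUROC values transcribed from the two heatmaps (rows: test sets).
none_projected = {
    "cities":     [1.00, 1.00, 1.00, 0.99],
    "neg_cities": [0.98, 1.00, 1.00, 0.99],
    "facts":      [0.94, 0.96, 0.96, 0.96],
    "neg_facts":  [0.62, 0.87, 0.85, 0.84],
    "facts_conj": [0.75, 0.77, 0.80, 0.80],
    "facts_disj": [0.68, 0.68, 0.73, 0.74],
}
both_projected = {
    "cities":     [1.00, 1.00, 1.00, 0.98],
    "neg_cities": [0.24, 1.00, 1.00, 0.98],
    "facts":      [0.30, 0.34, 0.42, 0.42],
    "neg_facts":  [0.48, 0.38, 0.40, 0.41],
    "facts_conj": [0.35, 0.42, 0.74, 0.74],
    "facts_disj": [0.38, 0.45, 0.69, 0.72],
}


def auroc_change(before, after):
    """Per-cell difference (after - before), rounded to two decimals."""
    return {
        test: [round(a - b, 2) for b, a in zip(before[test], after[test])]
        for test in before
    }


change = auroc_change(none_projected, both_projected)

# Cell with the largest drop, as (test set, train set, change).
worst = min(
    (
        (test, train, delta)
        for test, deltas in change.items()
        for train, delta in zip(train_sets, deltas)
    ),
    key=lambda item: item[2],
)

for test, deltas in change.items():
    print(f"{test:<11}", deltas)
print("largest drop:", worst)
```

The largest drop is on "neg\_cities" when training on "cities" alone (0.98 to 0.24), while the "cities" row changes by at most 0.01.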

### Key Observations

* When no projection is applied, the model performs well on "cities", "neg\_cities", and "facts" test sets, with high AUROC values.

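* Projecting out tG and tP sharply reduces performance on every test set except "cities" when training on "cities" alone: "neg\_cities" falls from 0.98 to 0.24, "facts" from 0.94 to 0.30, "facts\_conj" from 0.75 to 0.35, "facts\_disj" from 0.68 to 0.38, and "neg\_facts" from 0.62 to 0.48.

* With tG and tP projected out, adding training sets (+ neg\_cities, + cities\_conj, + cities\_disj) restores performance on "neg\_cities" (0.98 or higher), and adding "cities\_conj" raises "facts\_conj" and "facts\_disj" to about 0.7. The "facts" and "neg\_facts" rows stay below 0.5 for every training set.

* The "cities" test set shows high AUROC values (0.98 or higher) in both heatmaps and for every training set.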

### Interpretation

The heatmaps illustrate how projecting out tG and tP affects the model's performance across test sets. With nothing projected out, the model generalizes well to the "cities", "neg\_cities", and "facts" datasets. After tG and tP are projected out, generalization degrades, most severely when the model is trained solely on the "cities" dataset. Because an AUROC of 0.5 corresponds to chance-level ranking, the values below 0.5 in the right heatmap mean that the scores order the two classes incorrectly more often than not. This suggests that the projected-out directions carry information that is crucial for classifying "neg\_cities", "facts", and "neg\_facts".

The recovery on "neg\_cities" when it is included in the training data suggests that training on negative examples can yield representations that are less sensitive to the projection. Note that in those columns "neg\_cities" is itself part of the training set, so this is not evidence of generalization to unseen data types. The consistently high AUROC values for the "cities" test set show that performance on the base training dataset is unaffected by the projection or by the choice of training set.

In the left heatmap, the lower AUROC values for "neg\_facts", "facts\_conj", and "facts\_disj" compared to "cities" and "neg\_cities" suggest that these datasets are more challenging for the model to classify, possibly due to the complexity of the relationships between facts and their negations or conjunctions/disjunctions.
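Several cells in the right heatmap fall below 0.5 (for example, 0.24 for "neg\_cities" when trained on "cities" alone). As a reading aid, the following Python sketch shows on made-up toy scores that, when there are no ties, negating the scores turns an AUROC of x into 1 - x, so a value far below 0.5 indicates a systematically inverted ranking rather than an absence of signal. The data and the function name are hypothetical.

```python
def pairwise_auroc(scores, labels):
    """Fraction of (positive, negative) pairs ranked correctly; ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Toy scores (hypothetical) that mostly rank negatives above positives.
toy_scores = [0.1, 0.4, 0.35, 0.8]
toy_labels = [1, 1, 0, 0]

original = pairwise_auroc(toy_scores, toy_labels)
flipped = pairwise_auroc([-s for s in toy_scores], toy_labels)

print(original, flipped)
```

Here only one of the four positive-negative pairs is ranked correctly, and negating the scores makes the other three correct instead.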