## Diagram: NeuroSymbolic AI Conceptual Framework

### Overview

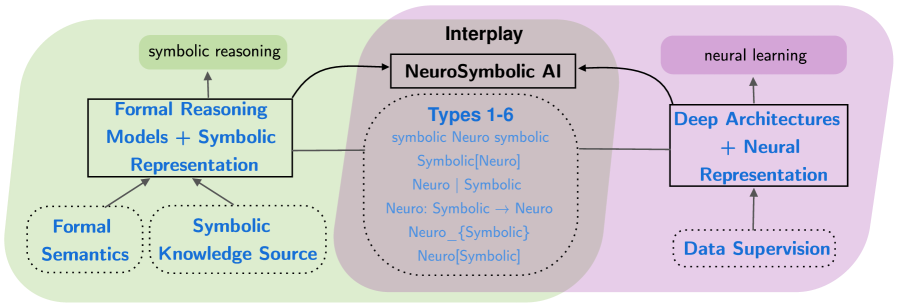

This image is a conceptual diagram illustrating the architecture and interplay of NeuroSymbolic AI. It visually segments the field into three primary, color-coded regions: a symbolic reasoning component (green, left), a neural learning component (purple, right), and the central "Interplay" zone (gray) where they combine to form NeuroSymbolic AI. Arrows indicate the flow of information and influence between these components.

### Components

The diagram is organized into three main vertical sections:

1. **Left Section (Green Background): Symbolic Reasoning Domain**

   * **Central Box:** "Formal Reasoning Models + Symbolic Representation"

   * **Upper Label:** "symbolic reasoning" (in a rounded rectangle)

   * **Lower Supporting Elements (Dotted Boxes):**

     * "Formal Semantics"

     * "Symbolic Knowledge Source"

   * **Flow:** Arrows point from the two lower dotted boxes up to the central box. An arrow also points from the central box up to the "symbolic reasoning" label.

2. **Center Section (Gray Background): Interplay Zone**

   * **Top Label:** "Interplay"

   * **Central Box:** "NeuroSymbolic AI"

   * **Lower Dotted Circle:** Contains a list titled "Types 1-6" with the following entries:

     * `symbolic Neuro symbolic`

     * `Symbolic[Neuro]`

     * `Neuro | Symbolic`

     * `Neuro: Symbolic → Neuro`

     * `Neuro_{Symbolic}` ("Symbolic" written as a subscript)

     * `Neuro[Symbolic]`

   * **Flow:** Arrows from the left (symbolic) and right (neural) central boxes point into the "NeuroSymbolic AI" box.

3. **Right Section (Purple Background): Neural Learning Domain**

   * **Central Box:** "Deep Architectures + Neural Representation"

   * **Upper Label:** "neural learning" (in a rounded rectangle)

   * **Lower Supporting Element (Dotted Box):** "Data Supervision"

   * **Flow:** An arrow points from the "Data Supervision" box up to the central box. An arrow also points from the central box up to the "neural learning" label.

### Detailed Analysis

* **Text Transcription:** All text in the diagram is in English. The list under "Types 1-6" uses symbolic notation (brackets, arrows, subscripts) to denote different integration patterns between neural and symbolic approaches.

* **Spatial Grounding:**

  * The domain labels ("symbolic reasoning", "neural learning") sit at the top of their respective colored sections.

  * The core model boxes ("Formal Reasoning Models...", "Deep Architectures...") are centered vertically within their sections.

  * The supporting components ("Formal Semantics" and "Symbolic Knowledge Source" on the left, "Data Supervision" on the right) sit at the bottom of their sections.

  * The "Interplay" label and "NeuroSymbolic AI" box are centered horizontally in the gray zone, with the "Types 1-6" list directly below.

* **Flow & Relationships:**

  * The diagram depicts a convergent synthesis: symbolic components (left) and neural components (right) both feed into the central NeuroSymbolic AI system. The arrows run inward from each side; none point back out of the central box.

  * Within each domain, there is a bottom-up flow: foundational elements (Semantics/Knowledge Source, Data Supervision) support the core models, which in turn enable the high-level reasoning/learning paradigm.
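To make the arrow structure concrete, the diagram can be encoded as a directed graph and ordered topologically, which recovers both the bottom-up flow within each domain and the convergence on the central box. This is a minimal sketch, assuming each arrow is an edge from the element at its tail to the element at its head; the `DIAGRAM` dictionary and the helper names are hypothetical, and the node names are copied from the diagram text. The ordering uses Python's standard-library `graphlib` module (Python 3.9+).

```python
from graphlib import TopologicalSorter

# Hypothetical encoding of the diagram: each key is a diagram element and its
# value is the set of elements whose arrows point INTO it (its predecessors).
# Node names are copied from the diagram; the data layout is an assumption.
DIAGRAM = {
    # Symbolic reasoning domain (green, left)
    "Formal Reasoning Models + Symbolic Representation": {
        "Formal Semantics",
        "Symbolic Knowledge Source",
    },
    "symbolic reasoning": {"Formal Reasoning Models + Symbolic Representation"},
    # Neural learning domain (purple, right)
    "Deep Architectures + Neural Representation": {"Data Supervision"},
    "neural learning": {"Deep Architectures + Neural Representation"},
    # Interplay zone (gray, center): both core boxes converge here
    "NeuroSymbolic AI": {
        "Formal Reasoning Models + Symbolic Representation",
        "Deep Architectures + Neural Representation",
    },
}


def flow_order(graph):
    """Return one valid ordering in which every element follows all its inputs."""
    return list(TopologicalSorter(graph).static_order())


def foundations(graph):
    """Return elements with no incoming arrows, i.e. the bottom-level inputs."""
    return {
        node
        for preds in graph.values()
        for node in preds
        if not graph.get(node)  # absent or empty predecessor set
    }
```

Here `sorted(foundations(DIAGRAM))` gives `['Data Supervision', 'Formal Semantics', 'Symbolic Knowledge Source']`, matching the dotted supporting boxes. The predecessor-map layout is chosen because it is the input format `TopologicalSorter` expects; `static_order()` returns one valid order among several, so only the relative order of connected elements is guaranteed.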

### Key Observations

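1. **Largely Symmetrical Structure:** The layout mirrors the two paradigms around the central zone, presenting symbolic and neural approaches as equally important pillars that converge. The mirroring is not exact: the symbolic side has two supporting inputs, while the neural side has one.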

2. **Taxonomy of Integration:** The "Types 1-6" list suggests that NeuroSymbolic AI is not a single method but a family of six distinct integration strategies, each written in a compact formal notation.

3. **Supporting Foundations:** The diagram explicitly identifies the foundational inputs for each paradigm: formal logic and curated knowledge for symbolic systems, and supervised data for neural systems.

4. **Visual Hierarchy:** Solid boxes represent core model architectures, while dotted boxes/circles represent supporting concepts or categorical lists, creating a clear visual distinction.

### Interpretation

This diagram serves as a high-level map for understanding the NeuroSymbolic AI field. It argues that this field emerges from the deliberate **interplay** between two historically distinct AI paradigms.

* **What it demonstrates:** It posits that robust NeuroSymbolic AI systems require both **formal reasoning models** (capable of logic and explicit knowledge representation) and **deep neural architectures** (capable of learning patterns from data). The central gray zone is where these are combined.

* **Relationships:** The flow arrows suggest a process of integration. The symbolic side provides structure, rules, and explainability. The neural side provides adaptability, pattern recognition, and scalability from data. The "Types 1-6" list implies that the research challenge lies in defining the precise syntax and mechanics of this combination (e.g., is the symbolic part a wrapper, a guide, or a substrate for the neural part?).

* **Underlying Message:** The diagram frames NeuroSymbolic AI as a necessary synthesis to overcome the limitations of pure symbolic AI (brittleness, knowledge acquisition bottleneck) and pure neural AI (lack of explainability, data hunger, poor reasoning). The inclusion of "Formal Semantics" and "Data Supervision" as foundations highlights that this synthesis must be grounded in both rigorous logic and empirical evidence.
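The Relationships point above asks whether the symbolic part is a wrapper, a guide, or a substrate for the neural part. As a rough illustration, the sketch contrasts two of the six notations under one assumed reading: `Neuro | Symbolic` as a pipeline (a neural component produces a symbol that a symbolic reasoner then uses) and `Symbolic[Neuro]` as embedding (a symbolic procedure that calls a neural component as a subroutine, i.e. a symbolic wrapper). The diagram does not define the notations, so this reading is an interpretation. The "neural" classifier is a fixed-weight stand-in for a trained network, and all function names, labels, and rules are hypothetical.

```python
from typing import Dict, List, Set

# Hypothetical stand-in for a trained neural classifier: fixed linear weights
# score each label and the highest-scoring label is returned. A real system
# would use a learned network; only the interface (features in, symbol out)
# matters for this sketch.
WEIGHTS: Dict[str, List[float]] = {"cat": [1.0, 0.0], "dog": [0.0, 1.0]}


def neural_label(features: List[float]) -> str:
    scores = {lbl: sum(w * x for w, x in zip(ws, features))
              for lbl, ws in WEIGHTS.items()}
    return max(scores, key=scores.get)


# Illustrative symbolic knowledge: each conclusion lists alternative premises
# (e.g. "mammal" holds if "cat" or "dog" holds). The rules are acyclic.
RULES: Dict[str, List[str]] = {
    "mammal": ["cat", "dog"],
    "animal": ["mammal"],
}


def forward_chain(facts: Set[str]) -> Set[str]:
    """Derive every conclusion reachable from `facts` using RULES."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for conclusion, premises in RULES.items():
            if conclusion not in derived and derived.intersection(premises):
                derived.add(conclusion)
                changed = True
    return derived


def neuro_pipe_symbolic(features: List[float]) -> Set[str]:
    """Pipeline reading of `Neuro | Symbolic`: perceive once, then reason."""
    return forward_chain({neural_label(features)})


def symbolic_over_neuro(goal: str, features: List[float]) -> bool:
    """Embedding reading of `Symbolic[Neuro]`: a backward-chaining prover owns
    control flow and consults the neural model only for base predicates."""
    if goal in RULES:  # derived predicate: try each alternative premise
        return any(symbolic_over_neuro(p, features) for p in RULES[goal])
    return neural_label(features) == goal  # base predicate: ask the network
```

For example, `neuro_pipe_symbolic([0.9, 0.1])` perceives `cat` and derives the facts `cat`, `mammal`, and `animal`, while `symbolic_over_neuro("animal", [0.2, 0.8])` returns `True` after the prover reduces `animal` to `mammal` to the base predicates and the network answers `dog`. The difference lies in where control flow lives: in the pipeline the network runs once up front, whereas in the embedded version the reasoner decides when, and whether, to query it.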