## Diagram: NeuroSymbolic AI Architecture and Interplay

### Overview

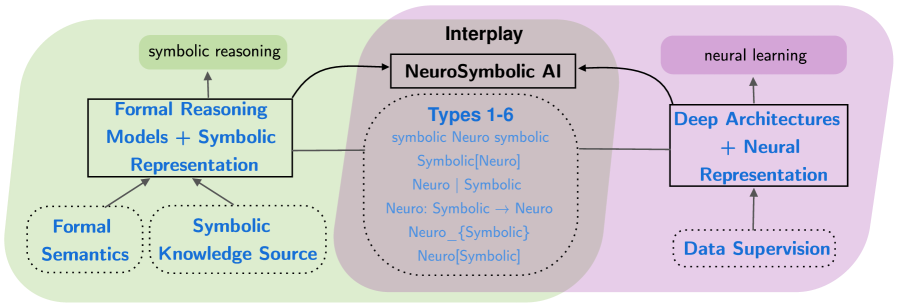

The diagram illustrates a conceptual framework for NeuroSymbolic AI, depicting the interplay between symbolic reasoning, neural learning, and their hybrid integration. It uses color-coded blocks to represent distinct paradigms and their relationships, with arrows indicating directional flow and integration points.

### Components

1. **Left Block (Green)**:

   - **Title**: "symbolic reasoning"

   - **Subcomponents**:

     - "Formal Reasoning Models + Symbolic Representation"

     - "Formal Semantics" (dotted box)

     - "Symbolic Knowledge Source" (dotted box)

2. **Center Block (Gray)**:

   - **Title**: "Interplay" → "NeuroSymbolic AI"

   - **Subcomponents**:

     - "Types 1-6" (dotted box with hierarchical list):

       - symbolic Neuro symbolic

       - Symbolic[Neuro]

       - Neuro | Symbolic

       - Neuro: Symbolic → Neuro

       - Neuro_{Symbolic}

       - Neuro[Symbolic]

3. **Right Block (Purple)**:

   - **Title**: "neural learning"

   - **Subcomponents**:

     - "Deep Architectures + Neural Representation"

     - "Data Supervision" (dotted box)
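For readers who want to work with this inventory programmatically, the three blocks can be captured in a small data structure. The following is only a sketch: the `Block` class, the `BLOCKS` and `INTEGRATION_TYPES` names, and the short dictionary keys are hypothetical, and the numbering of the six notations simply assumes that the list order in the diagram corresponds to Types 1 through 6.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Block:
    """One colored block of the diagram (hypothetical helper type)."""
    title: str
    color: str
    solid: Tuple[str, ...]   # labels drawn without a dotted border
    dotted: Tuple[str, ...]  # labels drawn inside dotted boxes


# Labels are copied from the diagram; the dictionary keys are illustrative.
BLOCKS = {
    "symbolic": Block(
        title="symbolic reasoning",
        color="green",
        solid=("Formal Reasoning Models + Symbolic Representation",),
        dotted=("Formal Semantics", "Symbolic Knowledge Source"),
    ),
    "neurosymbolic": Block(
        title="NeuroSymbolic AI",
        color="gray",
        solid=("Interplay",),
        dotted=("Types 1-6",),
    ),
    "neural": Block(
        title="neural learning",
        color="purple",
        solid=("Deep Architectures + Neural Representation",),
        dotted=("Data Supervision",),
    ),
}

# Assumption: list position in the diagram equals the type number.
INTEGRATION_TYPES = {
    1: "symbolic Neuro symbolic",
    2: "Symbolic[Neuro]",
    3: "Neuro | Symbolic",
    4: "Neuro: Symbolic → Neuro",
    5: "Neuro_{Symbolic}",
    6: "Neuro[Symbolic]",
}

if __name__ == "__main__":
    for key, block in BLOCKS.items():
        print(f"{key}: {block.title} ({block.color}), dotted={block.dotted}")
```

Keeping the dotted-box labels in their own field preserves the diagram's visual distinction between core and auxiliary elements.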

### Detailed Analysis

- **Flow Direction**:

- Arrows originate from the left (symbolic reasoning) and right (neural learning) blocks, converging on the central NeuroSymbolic AI block.

- The central block contains bidirectional arrows, suggesting mutual influence between symbolic and neural components.

- **Textual Elements**:

- All labels are in English.

- Dotted boxes emphasize auxiliary concepts (e.g., "Formal Semantics," "Data Supervision").
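The arrow structure described above can be written down as a small directed graph. This is a minimal sketch under stated assumptions: the edge lists are one reading of the description (two inbound arrows plus one bidirectional pair inside the central block), and the names `OUTER_EDGES`, `INNER_EDGES`, `sources_of`, and `is_bidirectional` are hypothetical.

```python
# Arrows between the three blocks: both outer blocks feed the center.
OUTER_EDGES = [
    ("symbolic reasoning", "NeuroSymbolic AI"),
    ("neural learning", "NeuroSymbolic AI"),
]

# Assumption: the bidirectional arrows inside the central block are
# modeled as a pair of opposite edges between two internal components.
INNER_EDGES = [
    ("symbolic", "neural"),
    ("neural", "symbolic"),
]


def sources_of(target, edges):
    """Return the sorted list of nodes with an arrow pointing at `target`."""
    return sorted(src for src, dst in edges if dst == target)


def is_bidirectional(a, b, edges):
    """True when arrows exist in both directions between `a` and `b`."""
    return (a, b) in edges and (b, a) in edges


if __name__ == "__main__":
    print(sources_of("NeuroSymbolic AI", OUTER_EDGES))
    print(is_bidirectional("symbolic", "neural", INNER_EDGES))
```

Separating the outer and inner edge lists mirrors the description, which distinguishes converging arrows between blocks from the mutual arrows within the central block.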

### Key Observations

1. **Modular Design**: The diagram emphasizes modularity, with distinct but interconnected paradigms.

2. **Hybrid Integration**: The central "NeuroSymbolic AI" block acts as a bridge, combining symbolic reasoning (left) and neural learning (right).

3. **Type Hierarchy**: The "Types 1-6" list suggests a classification of integration methods. Every listed notation combines neural and symbolic components; the notations differ in how the two are arranged.

4. **Support Systems**: Dotted boxes highlight foundational elements (e.g., "Formal Semantics" and "Symbolic Knowledge Source" for symbolic reasoning, "Data Supervision" for neural learning).

### Interpretation

This diagram advocates for a **dual-paradigm approach** to AI development, where symbolic reasoning (rule-based, logic-driven systems) and neural learning (data-driven, pattern recognition) are not treated as opposing forces but as complementary components. The central "NeuroSymbolic AI" block represents the synthesis of these approaches, enabling systems to leverage the interpretability of symbolic methods and the adaptability of neural networks.

The "Types 1-6" list implies a range of integration strategies, with notations such as "Neuro: Symbolic → Neuro" and "Neuro[Symbolic]" indicating different ways of coupling the two paradigms. The inclusion of "Data Supervision" on the right suggests that neural components rely on supervised learning from data, while symbolic reasoning depends on formal semantics and symbolic knowledge sources.

The bidirectional arrows in the central block underscore the importance of **feedback loops** between symbolic and neural systems, enabling continuous refinement. For example, neural networks could learn from symbolic rules, while symbolic models could be optimized using neural insights. This interplay could address limitations of standalone systems (e.g., neural networks' "black box" nature) while retaining their strengths.
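One direction of this feedback, a neural component learning from symbolic rules, can be illustrated with a deliberately tiny toy. The sketch below is not taken from the diagram: the rule (logical AND), the single perceptron unit, and all function names are assumptions chosen only to make the idea concrete. A hand-written rule labels every input, and the perceptron is trained on those labels until it reproduces the rule.

```python
from itertools import product


def symbolic_rule(a, b):
    """Hand-written symbolic knowledge (hypothetical example): a AND b."""
    return int(a and b)


def train_perceptron(samples, epochs=20):
    """Train one threshold unit with the classic perceptron update rule.

    Integer weights and a step size of 1 keep the arithmetic exact.
    """
    w = [0, 0]
    bias = 0
    for _ in range(epochs):
        for (a, b), target in samples:
            pred = int(w[0] * a + w[1] * b + bias > 0)
            err = target - pred
            w[0] += err * a
            w[1] += err * b
            bias += err
    return w, bias


# The symbolic rule supplies the supervision signal for the neural unit.
inputs = list(product([0, 1], repeat=2))
samples = [((a, b), symbolic_rule(a, b)) for a, b in inputs]
w, bias = train_perceptron(samples)


def neural_predict(a, b):
    """Prediction of the trained unit."""
    return int(w[0] * a + w[1] * b + bias > 0)


agreement = all(neural_predict(a, b) == symbolic_rule(a, b) for a, b in inputs)

if __name__ == "__main__":
    print("weights:", w, "bias:", bias, "matches rule:", agreement)
```

Because AND is linearly separable, the perceptron update converges on this data, so the trained unit agrees with the rule on all four inputs. The reverse direction, symbolic models refined by neural output, is not covered by this toy.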

The diagram’s color coding (green for symbolic, purple for neural) visually reinforces the separation and eventual convergence of these paradigms, making it a compelling case for hybrid AI architectures in complex problem-solving scenarios.