# Technical Data Extraction: Quantization Performance Comparison (INT3/g128)

## 1. Document Overview

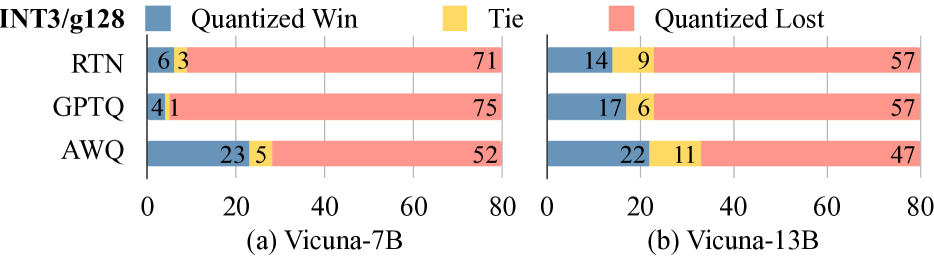

This image contains two horizontal stacked bar charts comparing three quantization methods (RTN, GPTQ, and AWQ) applied to two sizes of the Vicuna large language model (7B and 13B). Each bar splits 80 results into "Quantized Win", "Tie", and "Quantized Lost" segments. All data refers to the **INT3/g128** quantization configuration, a label that conventionally denotes 3-bit integer weights with a group size of 128.

## 2. Component Isolation

### Header / Legend

* **Main Title (Top Left):** `INT3/g128`

* **Legend (Top Center):**

  * **Blue Square:** `Quantized Win`

  * **Yellow Square:** `Tie`

  * **Red/Pink Square:** `Quantized Lost`

* **Spatial Grounding:** The legend is positioned at the top of the image, spanning across both sub-charts.

### Main Chart Structure

The image is divided into two sub-charts:

* **(a) Vicuna-7B** (Left side)

* **(b) Vicuna-13B** (Right side)

**Common Y-Axis (Methods):**

* `RTN`

* `GPTQ`

* `AWQ`

**Common X-Axis (Scale):**

* Numerical scale from `0` to `80` with markers at `0`, `20`, `40`, `60`, and `80`.

---

## 3. Data Extraction and Trend Analysis

### (a) Vicuna-7B Data Table

**Trend Observation:** For the 7B model, "Quantized Lost" (Red) is the dominant outcome for every method, ranging from 52 to 75 of the 80 results. AWQ records far more wins (23) than RTN (6) or GPTQ (4).

| Method | Quantized Win (Blue) | Tie (Yellow) | Quantized Lost (Red) | Total |
| :--- | :---: | :---: | :---: | :---: |
| **RTN** | 6 | 3 | 71 | 80 |
| **GPTQ** | 4 | 1 | 75 | 80 |
| **AWQ** | 23 | 5 | 52 | 80 |

### (b) Vicuna-13B Data Table

**Trend Observation:** In the 13B model, the "Tie" segment grows for every method compared to the 7B model, and the "Quantized Win" segment grows for RTN (6 to 14) and GPTQ (4 to 17), while AWQ's win count stays roughly flat (23 to 22). AWQ remains the strongest performer with the most wins and the lowest "Lost" count (47), and GPTQ shows the largest improvement in wins over its 7B counterpart.

| Method | Quantized Win (Blue) | Tie (Yellow) | Quantized Lost (Red) | Total |
| :--- | :---: | :---: | :---: | :---: |
| **RTN** | 14 | 9 | 57 | 80 |
| **GPTQ** | 17 | 6 | 57 | 80 |
| **AWQ** | 22 | 11 | 47 | 80 |
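
Because every bar shares the same total, the raw counts convert directly into percentages, which can make the segments easier to compare. The following is a minimal sketch of that conversion; the names `COUNTS`, `rates`, and `rate_table` are hypothetical helpers introduced here for illustration, and the numbers are transcribed from the two tables above.

```python
# Counts transcribed from the two data tables: (win, tie, lost) per method.
# The dictionary layout and function names are illustrative assumptions.
COUNTS = {
    "Vicuna-7B": {"RTN": (6, 3, 71), "GPTQ": (4, 1, 75), "AWQ": (23, 5, 52)},
    "Vicuna-13B": {"RTN": (14, 9, 57), "GPTQ": (17, 6, 57), "AWQ": (22, 11, 47)},
}


def rates(counts):
    """Return (win, tie, lost) as percentages of the bar total."""
    total = sum(counts)
    return tuple(round(100 * c / total, 2) for c in counts)


def rate_table(data=COUNTS):
    """Apply rates() to every method of every model."""
    return {
        model: {method: rates(c) for method, c in methods.items()}
        for model, methods in data.items()
    }


if __name__ == "__main__":
    for model, methods in rate_table().items():
        for method, (win, tie, lost) in methods.items():
            print(f"{model:10s} {method:4s} "
                  f"win={win:5.2f}% tie={tie:5.2f}% lost={lost:5.2f}%")
```

For example, AWQ on Vicuna-7B works out to 28.75% wins, 6.25% ties, and 65% losses.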

---

## 4. Key Findings and Comparative Analysis

* **Quantization Method Performance:** In both the 7B and 13B models, **AWQ** achieves the highest "Quantized Win" count (23 and 22) and the lowest "Quantized Lost" count (52 and 47).

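* **Model Scale Impact:** Moving from Vicuna-7B to Vicuna-13B reduces the "Quantized Lost" count for every method: RTN from 71 to 57, GPTQ from 75 to 57, and AWQ from 52 to 47. Wins rise for RTN and GPTQ, while AWQ's wins stay nearly unchanged (23 to 22) and its gain appears mainly as additional ties.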

* **GPTQ vs. RTN:** In the 7B model, RTN slightly outperforms GPTQ in wins (6 vs 4). However, in the 13B model, GPTQ outperforms RTN in wins (17 vs 14).

* **Data Consistency:** Each bar sums to exactly 80 units, representing a consistent sample size across all tests.
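
The findings above can be re-derived mechanically from the extracted counts. The sketch below is one way to do so, assuming the values in the two data tables are transcribed correctly; `RESULTS`, `check_totals`, `lost_delta`, and `best_method` are hypothetical names chosen for this example.

```python
BAR_TOTAL = 80  # per-bar total stated in the Data Consistency finding

# (win, tie, lost) per method, transcribed from the data tables.
RESULTS = {
    "Vicuna-7B": {"RTN": (6, 3, 71), "GPTQ": (4, 1, 75), "AWQ": (23, 5, 52)},
    "Vicuna-13B": {"RTN": (14, 9, 57), "GPTQ": (17, 6, 57), "AWQ": (22, 11, 47)},
}


def check_totals(results, expected=BAR_TOTAL):
    """True if every bar's three segments sum to the expected total."""
    return all(
        sum(counts) == expected
        for methods in results.values()
        for counts in methods.values()
    )


def lost_delta(results, small="Vicuna-7B", large="Vicuna-13B"):
    """Change in the 'lost' count per method when moving to the larger model."""
    return {m: results[large][m][2] - results[small][m][2] for m in results[small]}


def best_method(results, model):
    """Method with the most wins for the given model."""
    return max(results[model], key=lambda m: results[model][m][0])


if __name__ == "__main__":
    print("all bars sum to 80:", check_totals(RESULTS))
    print("lost-count change 7B -> 13B:", lost_delta(RESULTS))
    for model in RESULTS:
        print(model, "most wins:", best_method(RESULTS, model))
```

A negative value from `lost_delta` means the quantized model lost fewer comparisons at the larger size, which holds for all three methods here.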