
## Diagram: Model Training Pipeline

### Overview

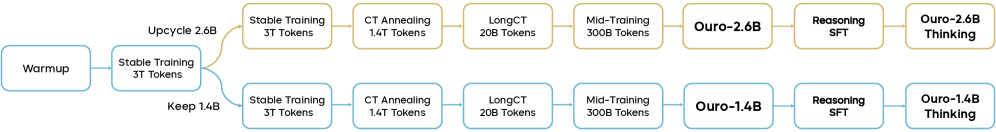

The image is a flowchart of a model training pipeline, likely for a large language model. After a shared "Warmup" stage, the pipeline splits into two parallel branches, one per model size (2.6B and 1.6B). Each box names a training stage or a resulting model, and the training stages are labeled with their token budgets where these are given.

### Components/Axes

The diagram consists of rectangular blocks representing training stages, connected by arrows indicating the flow of the process. There are no explicit axes or scales. The stages are labeled with their names and token counts.

### Detailed Analysis or Content Details

The pipeline begins with a "Warmup" stage. From there, it branches into two paths:

**Upper Branch (2.6B Model):**

1. **Warmup** -> **Upcycle 2.6B**

2. **Upcycle 2.6B** -> **Stable Training 3T Tokens**

3. **Stable Training 3T Tokens** -> **CT Annealing 1.4T Tokens**

4. **CT Annealing 1.4T Tokens** -> **LongCT 20B Tokens**

5. **LongCT 20B Tokens** -> **Mid-Training 300B Tokens**

6. **Mid-Training 300B Tokens** -> **Ouro-2.6B**

7. **Ouro-2.6B** -> **Reasoning SFT**

8. **Reasoning SFT** -> **Ouro-2.6B Thinking**

**Lower Branch (1.6B Model):**

1. **Warmup** -> **Keep 1.4B**

2. **Keep 1.4B** -> **Stable Training 3T Tokens**

3. **Stable Training 3T Tokens** -> **CT Annealing 1.4T Tokens**

4. **CT Annealing 1.4T Tokens** -> **LongCT 20B Tokens**

5. **LongCT 20B Tokens** -> **Mid-Training 300B Tokens**

6. **Mid-Training 300B Tokens** -> **Ouro-1.6B**

7. **Ouro-1.6B** -> **Reasoning SFT**

8. **Reasoning SFT** -> **Ouro-1.6B Thinking**
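The two branches above differ only in the stage that follows "Warmup" and in the model names. A minimal sketch of that structure in Python follows, assuming nothing beyond the labels listed above; the names `SHARED_STAGES`, `build_branch`, and `edges` are hypothetical helpers introduced for illustration and do not come from the diagram.

```python
# Illustrative only: stage labels are copied from the diagram description,
# while the data structure and helper names are assumptions.

# Stages that appear with identical labels in both branches.
SHARED_STAGES = [
    "Stable Training 3T Tokens",
    "CT Annealing 1.4T Tokens",
    "LongCT 20B Tokens",
    "Mid-Training 300B Tokens",
]


def build_branch(split_stage: str, base_model: str) -> list[str]:
    """Return the ordered node labels of one branch of the flowchart."""
    return [
        "Warmup",
        split_stage,
        *SHARED_STAGES,
        base_model,
        "Reasoning SFT",
        f"{base_model} Thinking",
    ]


def edges(branch: list[str]) -> list[tuple[str, str]]:
    """Pair each node with its successor, one pair per arrow."""
    return list(zip(branch, branch[1:]))


UPPER = build_branch("Upcycle 2.6B", "Ouro-2.6B")
LOWER = build_branch("Keep 1.4B", "Ouro-1.6B")

if __name__ == "__main__":
    for source, target in edges(UPPER):
        print(f"{source} -> {target}")
```

Each branch has nine nodes, so `edges` yields eight (source, target) pairs, one for each numbered step in the lists above.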

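The numbers in the labels are of two kinds. Four stages carry token budgets, while the "Upcycle", "Keep", and "Ouro" labels give model sizes rather than token counts:

* Warmup: token count not specified

* Upcycle 2.6B: 2.6B (model size, not a token count)

* Keep 1.4B: 1.4B (model size, not a token count)

* Stable Training: 3T tokens (3 trillion)

* CT Annealing: 1.4T tokens (1.4 trillion)

* LongCT: 20B tokens (20 billion)

* Mid-Training: 300B tokens (300 billion)

* Ouro-2.6B/Ouro-1.6B: 2.6B/1.6B (model sizes, not token counts)

* Reasoning SFT: token count not specified

* Ouro-2.6B Thinking/Ouro-1.6B Thinking: no token count (these are output models)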
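To put the labeled budgets in proportion, the short sketch below sums them for a single branch and computes each stage's share. It assumes only the four token counts given in the diagram, expressed in billions. "Warmup" and "Reasoning SFT" have no stated count and are left out, so the total is a lower bound on what a branch actually consumes. The names `STAGE_TOKENS_B`, `total_tokens_billions`, and `share_of_total` are hypothetical.

```python
# Token budgets for one branch, in billions of tokens, as labeled in the diagram.
# Stages without a stated count (Warmup, Reasoning SFT) are deliberately omitted.
STAGE_TOKENS_B = {
    "Stable Training": 3000,  # 3T
    "CT Annealing": 1400,     # 1.4T
    "LongCT": 20,             # 20B
    "Mid-Training": 300,      # 300B
}


def total_tokens_billions(stage_tokens: dict[str, int]) -> int:
    """Sum the labeled token budgets (in billions)."""
    return sum(stage_tokens.values())


def share_of_total(stage_tokens: dict[str, int]) -> dict[str, float]:
    """Return each stage's fraction of the summed labeled budget."""
    total = total_tokens_billions(stage_tokens)
    return {name: count / total for name, count in stage_tokens.items()}


if __name__ == "__main__":
    print(f"Labeled total: {total_tokens_billions(STAGE_TOKENS_B)}B tokens")
    for name, share in share_of_total(STAGE_TOKENS_B).items():
        print(f"{name}: {share:.1%}")
```

The labeled stages sum to 4,720B (4.72T) tokens per branch, with Stable Training accounting for roughly 64% of that figure.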

### Key Observations

The diagram shows two parallel training tracks, one per model size (2.6B and 1.6B). Only "Warmup" is a single shared stage; the branches diverge immediately afterwards at "Upcycle 2.6B" and "Keep 1.4B". From that point each branch passes through the same sequence of stages with identical token budgets: Stable Training (3T), CT Annealing (1.4T), LongCT (20B), and Mid-Training (300B). The budgets shrink from 3T to 1.4T to 20B and then rise again to 300B for Mid-Training. Each branch yields a base model ("Ouro-2.6B" or "Ouro-1.6B"), which "Reasoning SFT" then turns into the corresponding "Thinking" model.

### Interpretation

The diagram illustrates a phased approach to training large language models. The early stages carry most of the token budget (3T for Stable Training and 1.4T for CT Annealing), and the later stages are smaller and more specialized, ending with "Reasoning SFT", which produces the "Thinking" variants. The "Upcycle" and "Keep" labels suggest that both model sizes start from a common warmed-up checkpoint and that the training process is adapted to the target size, although the diagram does not say how. "LongCT" likely refers to a long-context training phase and "CT Annealing" to an annealing phase, but the diagram does not expand either abbreviation. The "Ouro" boxes most likely denote the resulting models rather than training stages. Overall, the diagram gives a high-level view of the pipeline, with an emphasis on producing two model sizes and improving reasoning ability, and does not describe the internals of any stage.