TECHNICAL ASSET FINGERPRINT

55a3e76a88621eac99565a2f

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

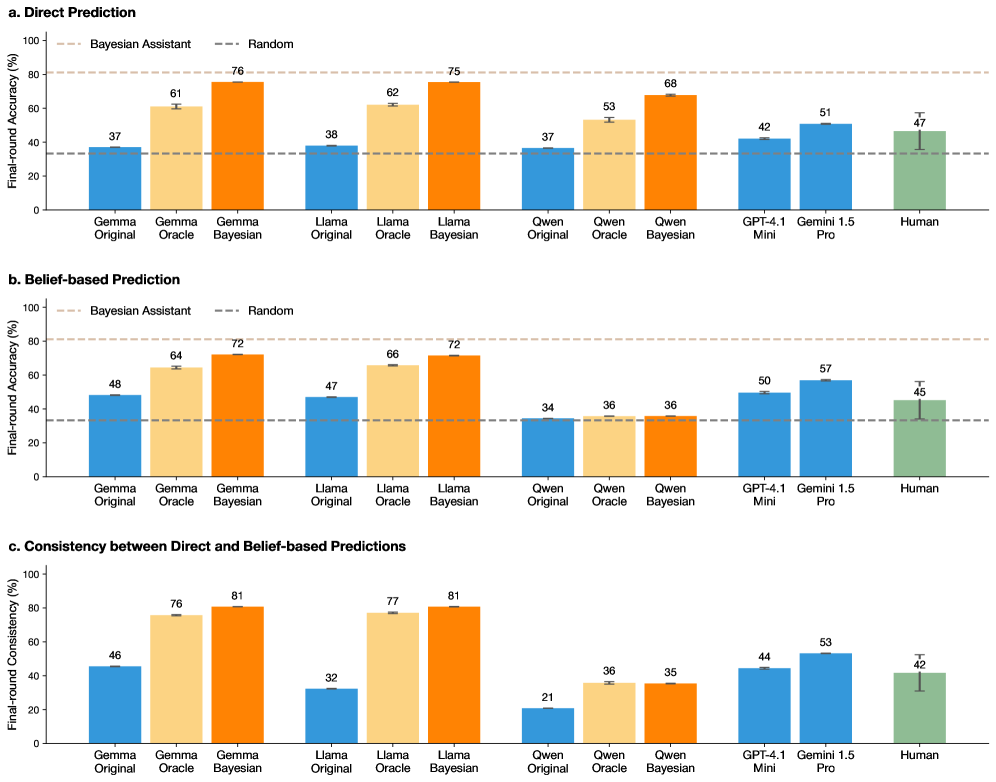

## Bar Charts: Model Performance Comparison

### Overview

The image presents three bar charts comparing language models (Gemma, Llama, and Qwen, each in Original, Oracle, and Bayesian configurations, plus GPT-4.1 Mini and Gemini 1.5 Pro) with human performance on a task. The y-axis shows "Final-round Accuracy (%)" in charts a and b and "Final-round Consistency (%)" in chart c; the x-axis lists the models and configurations. The three charts cover "Direct Prediction", "Belief-based Prediction", and "Consistency between Direct and Belief-based Predictions". Horizontal dashed lines mark the performance of a "Bayesian Assistant" and a "Random" baseline.

### Components/Axes

**General Chart Elements:**

* **Title:** The image contains three sub-charts labeled "a. Direct Prediction", "b. Belief-based Prediction", and "c. Consistency between Direct and Belief-based Predictions".

* **Y-axis:** Labeled "Final-round Accuracy (%)" for charts a and b, and "Final-round Consistency (%)" for chart c. The scale ranges from 0 to 100 in increments of 20.

* **X-axis:** Categorical axis representing different language models and configurations: Gemma (Original, Oracle, Bayesian), Llama (Original, Oracle, Bayesian), Qwen (Original, Oracle, Bayesian), GPT-4.1 Mini, Gemini 1.5 Pro, and Human.

* **Bars:** Represent the performance of each model/configuration.

* **Error Bars:** Small vertical lines on top of each bar, indicating the standard error or confidence interval.

* **Horizontal Dashed Lines:** Two horizontal dashed lines representing the performance of "Bayesian Assistant" (top line) and "Random" (bottom line).

**Legend (Inferred):**

* Blue bars: "Original" model configuration.

* Light Orange bars: "Oracle" model configuration.

* Dark Orange bars: "Bayesian" model configuration.

* Green bars: "Human" performance.

### Detailed Analysis

#### a. Direct Prediction

* **Y-axis:** Final-round Accuracy (%)

* **Bayesian Assistant Baseline:** Approximately 80%

* **Random Baseline:** Approximately 35%

**Data Points:**

* **Gemma Original:** 37%

* **Gemma Oracle:** 61%

* **Gemma Bayesian:** 76%

* **Llama Original:** 38%

* **Llama Oracle:** 62%

* **Llama Bayesian:** 75%

* **Qwen Original:** 37%

* **Qwen Oracle:** 53%

* **Qwen Bayesian:** 68%

* **GPT-4.1 Mini:** 42%

* **Gemini 1.5 Pro:** 51%

* **Human:** 47%

**Trends:**

* For Gemma, Llama, and Qwen, the Bayesian configuration consistently outperforms the Oracle and Original configurations.

* Gemini 1.5 Pro (51%) scores about 9 points higher than GPT-4.1 Mini (42%).

* Human performance (47%) falls between GPT-4.1 Mini and Gemini 1.5 Pro.

#### b. Belief-based Prediction

* **Y-axis:** Final-round Accuracy (%)

* **Bayesian Assistant Baseline:** Approximately 80%

* **Random Baseline:** Approximately 35%

**Data Points:**

* **Gemma Original:** 48%

* **Gemma Oracle:** 64%

* **Gemma Bayesian:** 72%

* **Llama Original:** 47%

* **Llama Oracle:** 66%

* **Llama Bayesian:** 72%

* **Qwen Original:** 34%

* **Qwen Oracle:** 36%

* **Qwen Bayesian:** 36%

* **GPT-4.1 Mini:** 50%

* **Gemini 1.5 Pro:** 57%

* **Human:** 45%

**Trends:**

* Similar to Direct Prediction, the Bayesian configuration generally outperforms the Oracle and Original configurations for Gemma and Llama.

* Qwen's performance is relatively flat across all three configurations.

* GPT-4.1 Mini and Gemini 1.5 Pro show improved performance compared to the Direct Prediction chart.

* Human performance is slightly lower than in the Direct Prediction chart.

#### c. Consistency between Direct and Belief-based Predictions

* **Y-axis:** Final-round Consistency (%)

* **Bayesian Assistant Baseline:** Not applicable in this chart.

* **Random Baseline:** Not applicable in this chart.

**Data Points:**

* **Gemma Original:** 46%

* **Gemma Oracle:** 76%

* **Gemma Bayesian:** 81%

* **Llama Original:** 32%

* **Llama Oracle:** 77%

* **Llama Bayesian:** 81%

* **Qwen Original:** 21%

* **Qwen Oracle:** 36%

* **Qwen Bayesian:** 35%

* **GPT-4.1 Mini:** 44%

* **Gemini 1.5 Pro:** 53%

* **Human:** 42%

**Trends:**

* The Bayesian and Oracle configurations for Gemma and Llama show significantly higher consistency than the Original configurations.

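* Qwen's consistency stays low in every configuration (21–36%).

* GPT-4.1 Mini (44%) and Gemini 1.5 Pro (53%) show moderate consistency.

* Human consistency (42%) is close to GPT-4.1 Mini and well below the Oracle and Bayesian configurations of Gemma and Llama (76–81%).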

### Key Observations

* The "Bayesian" configurations of Gemma and Llama consistently achieve the highest accuracy and consistency across all three charts.

* Qwen's scores are generally lower and vary less across configurations, especially in the "Belief-based Prediction" and "Consistency" charts.

* GPT-4.1 Mini and Gemini 1.5 Pro usually outperform the "Original" configurations of Gemma, Llama, and Qwen, with Gemini 1.5 Pro 7 to 9 points ahead of GPT-4.1 Mini in each chart.

* Human performance (42–47%) lies between GPT-4.1 Mini and Gemini 1.5 Pro in Direct Prediction and slightly below both in the other two charts.
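The size of the configuration effect can be made concrete with a few lines of Python. This is only a sketch: the numbers are the approximate values read from the charts in this description, and the names `direct_chart`, `belief_chart`, `consistency_chart`, and `gains` are illustrative, not taken from the source figure.

```python
# Approximate chart readings from this description, in percent:
# (Original, Oracle, Bayesian) per model family.
direct_chart = {"Gemma": (37, 61, 76), "Llama": (38, 62, 75), "Qwen": (37, 53, 68)}
belief_chart = {"Gemma": (48, 64, 72), "Llama": (47, 66, 72), "Qwen": (34, 36, 36)}
consistency_chart = {"Gemma": (46, 76, 81), "Llama": (32, 77, 81), "Qwen": (21, 36, 35)}


def gains(chart):
    """Return {family: Bayesian minus Original} in percentage points."""
    return {
        family: bayesian - original
        for family, (original, _oracle, bayesian) in chart.items()
    }


for name, chart in [
    ("Direct", direct_chart),
    ("Belief-based", belief_chart),
    ("Consistency", consistency_chart),
]:
    print(name, gains(chart))
```

On these readings, Qwen gains only 2 points in Belief-based Prediction, compared with 24 to 25 points for Gemma and Llama, which is the flat pattern noted above.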

### Interpretation

The data suggests that incorporating Bayesian methods into language models can significantly improve their accuracy and consistency in both direct and belief-based predictions. The "Oracle" configurations also show substantial improvements over the "Original" models, indicating the value of informed model design.

The relatively low consistency of Qwen suggests that its predictions may be less reliable or more sensitive to the specific task or context. The performance of GPT-4.1 Mini and Gemini 1.5 Pro highlights the capabilities of larger, more advanced models.

The variability in human performance underscores the challenges of evaluating language models against human benchmarks, as human performance itself can be inconsistent. Human scores fall below the Oracle and Bayesian configurations of Gemma and Llama in all three charts, so on this task those configurations outperform the human participants.

The relationship between the charts is that they show different aspects of model performance. "Direct Prediction" measures raw accuracy, "Belief-based Prediction" measures accuracy when considering the model's confidence, and "Consistency" measures how well the model's direct and belief-based predictions align.
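To illustrate how the three quantities relate, here is a minimal sketch. It assumes that each prediction route yields one chosen option per trial and that consistency is the share of trials on which the two routes agree; the figure itself does not state these definitions, and the function names and sample data are hypothetical.

```python
from typing import Sequence


def accuracy(predictions: Sequence[str], targets: Sequence[str]) -> float:
    """Percentage of trials where the prediction equals the target."""
    if len(predictions) != len(targets) or not targets:
        raise ValueError("inputs must be non-empty and of equal length")
    hits = sum(p == t for p, t in zip(predictions, targets))
    return 100.0 * hits / len(targets)


def consistency(direct: Sequence[str], belief_based: Sequence[str]) -> float:
    """Percentage of trials where both prediction routes pick the same option."""
    return accuracy(direct, belief_based)


# Hypothetical final-round data: one chosen option per trial.
targets = ["A", "B", "C", "A", "B"]
direct = ["A", "B", "C", "A", "C"]
belief_based = ["A", "C", "C", "A", "B"]

print(accuracy(direct, targets))          # 80.0
print(accuracy(belief_based, targets))    # 80.0
print(consistency(direct, belief_based))  # 60.0
```

In this toy data both routes are equally accurate yet agree on only 60% of trials, which is why consistency is reported as a separate chart.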


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

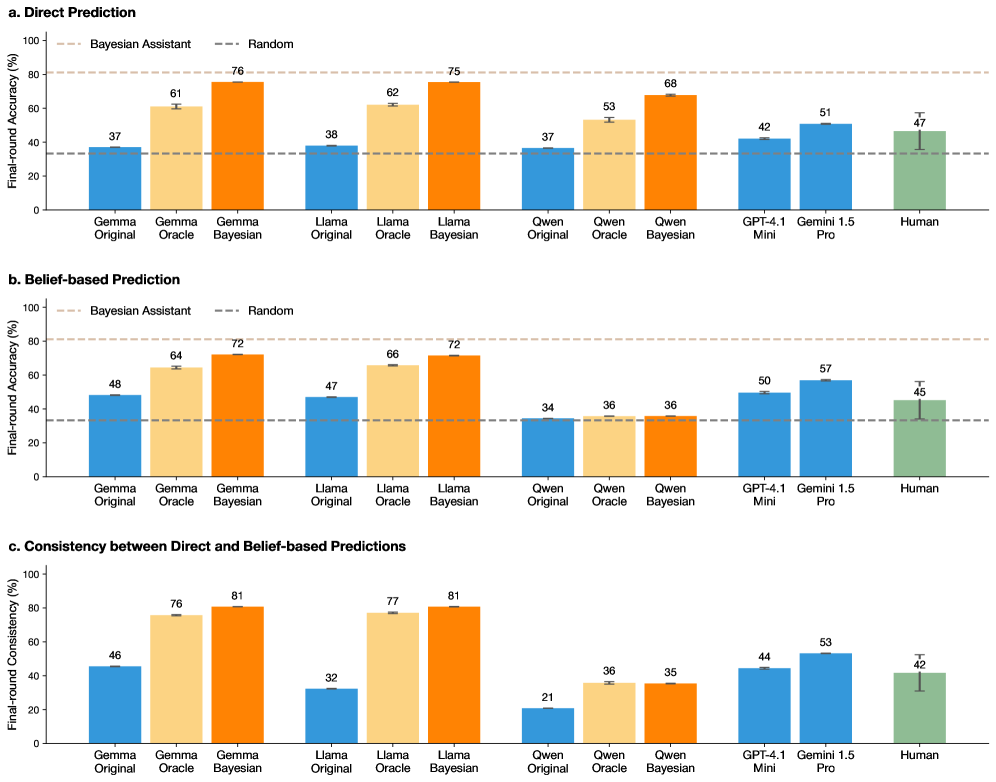

## Bar Chart: Model Performance Comparison

### Overview

The image presents a comparison of the performance of several Large Language Models (LLMs) – Gemma Original, Gemma Oracle, Gemma Bayesian, Llama Original, Llama Oracle, Llama Bayesian, Qwen Original, Qwen Oracle, Qwen Bayesian, GPT-4.1 Mini, and Gemini 1.5 Pro – and of humans across three prediction tasks: Direct Prediction, Belief-based Prediction, and Consistency between Direct and Belief-based Predictions. The performance metric is "Final-round Accuracy (%)" for the first two tasks and "Final-round Consistency (%)" for the third. Each model's performance is represented by a bar with error bars indicating uncertainty. A "Bayesian Assistant" and "Random" baseline are also included for comparison.

### Components/Axes

* **X-axis:** Model Name (Gemma Original, Gemma Oracle, Gemma Bayesian, Llama Original, Llama Oracle, Llama Bayesian, Qwen Original, Qwen Oracle, Qwen Bayesian, GPT-4.1 Mini, Gemini 1.5 Pro, Human)

* **Y-axis:** Final-round Accuracy (%) or Final-round Consistency (%) (Scale: 0 to 100)

* **Legend:**
    * Blue: Bayesian Assistant
    * Orange: Random
    * Green: Model Performance (varying shades for each model)

* **Subplots:** Three separate bar charts labeled a, b, and c, representing the three prediction tasks.

* **Error Bars:** Represent uncertainty in the performance metric.

### Detailed Analysis

**a. Direct Prediction**

* **Bayesian Assistant:** Approximately 68% accuracy, with an error bar ranging from approximately 64% to 72%.

* **Random:** Approximately 37% accuracy, with an error bar ranging from approximately 33% to 41%.

* **Gemma Original:** Approximately 37% accuracy, with an error bar ranging from approximately 33% to 41%.

* **Gemma Oracle:** Approximately 61% accuracy, with an error bar ranging from approximately 57% to 65%.

* **Gemma Bayesian:** Approximately 76% accuracy, with an error bar ranging from approximately 72% to 80%.

* **Llama Original:** Approximately 38% accuracy, with an error bar ranging from approximately 34% to 42%.

* **Llama Oracle:** Approximately 62% accuracy, with an error bar ranging from approximately 58% to 66%.

* **Llama Bayesian:** Approximately 75% accuracy, with an error bar ranging from approximately 71% to 79%.

* **Qwen Original:** Approximately 37% accuracy, with an error bar ranging from approximately 33% to 41%.

* **Qwen Oracle:** Approximately 53% accuracy, with an error bar ranging from approximately 49% to 57%.

* **Qwen Bayesian:** Approximately 68% accuracy, with an error bar ranging from approximately 64% to 72%.

* **GPT-4.1 Mini:** Approximately 42% accuracy, with an error bar ranging from approximately 38% to 46%.

* **Gemini 1.5 Pro:** Approximately 51% accuracy, with an error bar ranging from approximately 47% to 55%.

* **Human:** Approximately 47% accuracy, with an error bar ranging from approximately 43% to 51%.

**b. Belief-based Prediction**

* **Bayesian Assistant:** Approximately 64% accuracy, with an error bar ranging from approximately 60% to 68%.

* **Random:** Approximately 34% accuracy, with an error bar ranging from approximately 30% to 38%.

* **Gemma Original:** Approximately 48% accuracy, with an error bar ranging from approximately 44% to 52%.

* **Gemma Oracle:** Approximately 72% accuracy, with an error bar ranging from approximately 68% to 76%.

* **Gemma Bayesian:** Approximately 72% accuracy, with an error bar ranging from approximately 68% to 76%.

* **Llama Original:** Approximately 47% accuracy, with an error bar ranging from approximately 43% to 51%.

* **Llama Oracle:** Approximately 66% accuracy, with an error bar ranging from approximately 62% to 70%.

* **Llama Bayesian:** Approximately 72% accuracy, with an error bar ranging from approximately 68% to 76%.

* **Qwen Original:** Approximately 36% accuracy, with an error bar ranging from approximately 32% to 40%.

* **Qwen Oracle:** Approximately 36% accuracy, with an error bar ranging from approximately 32% to 40%.

* **Qwen Bayesian:** Approximately 36% accuracy, with an error bar ranging from approximately 32% to 40%.

* **GPT-4.1 Mini:** Approximately 50% accuracy, with an error bar ranging from approximately 46% to 54%.

* **Gemini 1.5 Pro:** Approximately 57% accuracy, with an error bar ranging from approximately 53% to 61%.

* **Human:** Approximately 45% accuracy, with an error bar ranging from approximately 41% to 49%.

**c. Consistency between Direct and Belief-based Predictions**

* **Bayesian Assistant:** Approximately 76% consistency, with an error bar ranging from approximately 72% to 80%.

* **Random:** Approximately 46% consistency, with an error bar ranging from approximately 42% to 50%.

* **Gemma Original:** Approximately 46% consistency, with an error bar ranging from approximately 42% to 50%.

* **Gemma Oracle:** Approximately 76% consistency, with an error bar ranging from approximately 72% to 80%.

* **Gemma Bayesian:** Approximately 81% consistency, with an error bar ranging from approximately 77% to 85%.

* **Llama Original:** Approximately 32% consistency, with an error bar ranging from approximately 28% to 36%.

* **Llama Oracle:** Approximately 77% consistency, with an error bar ranging from approximately 73% to 81%.

* **Llama Bayesian:** Approximately 81% consistency, with an error bar ranging from approximately 77% to 85%.

* **Qwen Original:** Approximately 21% consistency, with an error bar ranging from approximately 17% to 25%.

* **Qwen Oracle:** Approximately 36% consistency, with an error bar ranging from approximately 32% to 40%.

* **Qwen Bayesian:** Approximately 35% consistency, with an error bar ranging from approximately 31% to 39%.

* **GPT-4.1 Mini:** Approximately 44% consistency, with an error bar ranging from approximately 40% to 48%.

* **Gemini 1.5 Pro:** Approximately 53% consistency, with an error bar ranging from approximately 49% to 57%.

* **Human:** Approximately 42% consistency, with an error bar ranging from approximately 38% to 46%.

### Key Observations

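* The "Bayesian" models match or outperform their "Original" and "Oracle" counterparts in most cases. The exceptions are in panel b, where Gemma Oracle ties Gemma Bayesian (both ~72%) and all three Qwen variants sit at ~36%, and in panel c, where Qwen Oracle (~36%) is marginally ahead of Qwen Bayesian (~35%).

* Most models score above the "Random" baseline, but not all: in panel a the "Original" variants (~37–38%) are level with it, and in panel b the Qwen variants (~36%) are barely above it.

* Gemma Bayesian and Llama Bayesian achieve the highest accuracy/consistency scores in most cases.

* Qwen models generally perform worse than Gemma and Llama, and in panels b and c they also trail GPT-4.1 Mini and Gemini 1.5 Pro.

* Human performance (~42–47%) is comparable to GPT-4.1 Mini and Gemini 1.5 Pro but well below the Gemma and Llama "Bayesian" models (~72–81%).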

### Interpretation

The data suggests that incorporating Bayesian principles into the models improves performance in direct prediction, in belief-based prediction, and in the consistency between the two, with the Qwen family as the exception in panels b and c. The high scores of the Gemma and Llama "Bayesian" models are consistent with this approach capturing and representing uncertainty more effectively, leading to more accurate and reliable predictions. The relatively poor performance of the Qwen models suggests that their architecture or training data may be less effective for this task.

Human performance (roughly 42–47%) is close to that of GPT-4.1 Mini and Gemini 1.5 Pro but well below the Gemma and Llama "Bayesian" models, which highlights both the difficulty of the tasks and the gains available to LLMs. The consistency metric (c) is particularly interesting, as it suggests that the Bayesian models are not only more accurate but also more internally coherent in their predictions. This could be a valuable property for applications where trustworthiness and explainability are important.

The error bars give a rough sense of which differences are robust. Where the intervals of two models do not overlap (for example, Gemma Oracle versus Gemma Bayesian in panel a), the gap is unlikely to be random variation; where they overlap (for example, Gemma Bayesian versus Llama Bayesian), the ranking is not clearly supported. Further analysis with larger sample sizes would be needed to confirm these findings.
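The point about error bars can be checked mechanically. The sketch below uses the approximate panel a intervals listed in this description and tests whether two intervals overlap. The figure does not say what the error bars represent, so non-overlap here is only a rough heuristic and not a formal significance test; `intervals_overlap` and `direct_intervals` are illustrative names.

```python
def intervals_overlap(a, b):
    """Return True if closed intervals a=(lo, hi) and b=(lo, hi) share any point."""
    (a_lo, a_hi), (b_lo, b_hi) = a, b
    return a_lo <= b_hi and b_lo <= a_hi


# Approximate error-bar ranges for panel a, as read in this description (percent).
direct_intervals = {
    "Gemma Oracle": (57, 65),
    "Gemma Bayesian": (72, 80),
    "Llama Bayesian": (71, 79),
    "GPT-4.1 Mini": (38, 46),
    "Human": (43, 51),
}

pairs = [
    ("Gemma Oracle", "Gemma Bayesian"),
    ("Gemma Bayesian", "Llama Bayesian"),
    ("GPT-4.1 Mini", "Human"),
]
for first, second in pairs:
    overlap = intervals_overlap(direct_intervals[first], direct_intervals[second])
    print(f"{first} vs {second}: overlap={overlap}")
```

On these readings only the Gemma Oracle versus Gemma Bayesian gap is clearly separated; the other two pairs overlap.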


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Bar Charts: Model Performance Comparison Across Prediction Tasks

### Overview

The image contains three bar charts (labeled a, b, and c) comparing the performance of various AI models and humans on two prediction tasks and their consistency. The charts are titled "Direct Prediction," "Belief-based Prediction," and "Consistency between Direct and Belief-based Predictions." Each chart compares three model families (Gemma, Llama, Qwen) in three variants (Original, Oracle, Bayesian), alongside GPT-4.1 Mini, Gemini 1.5 Pro, and Human performance.

### Components/Axes

* **Chart Titles:**
    * a. Direct Prediction
    * b. Belief-based Prediction
    * c. Consistency between Direct and Belief-based Predictions

* **Y-Axis Labels:**
    * Charts a & b: "Final-round Accuracy (%)"
    * Chart c: "Final-round Consistency (%)"

* **Y-Axis Scale:** 0 to 100 for all charts, with major ticks every 20 units.

* **X-Axis Categories (Identical for all charts):**
    * Gemma Original, Gemma Oracle, Gemma Bayesian
    * Llama Original, Llama Oracle, Llama Bayesian
    * Qwen Original, Qwen Oracle, Qwen Bayesian
    * GPT-4.1 Mini, Gemini 1.5 Pro, Human

* **Legend (Present in all charts, positioned top-left):**
    * `--- Bayesian Assistant` (Dashed light brown line)
    * `--- Random` (Dashed grey line)

* **Bar Colors:**
    * Original variants: Blue
    * Oracle variants: Light orange/peach
    * Bayesian variants: Dark orange
    * GPT-4.1 Mini & Gemini 1.5 Pro: Blue (same shade as "Original")
    * Human: Green

* **Error Bars:** Present on all bars, indicating variability or confidence intervals.

### Detailed Analysis

#### **Chart a. Direct Prediction**

* **Trend:** For each model family (Gemma, Llama, Qwen), performance increases from Original -> Oracle -> Bayesian. The Bayesian variant consistently achieves the highest accuracy within its family.

* **Data Points (Approximate % Accuracy):**
    * **Gemma:** Original ~37, Oracle ~61, Bayesian ~76
    * **Llama:** Original ~38, Oracle ~62, Bayesian ~75
    * **Qwen:** Original ~37, Oracle ~53, Bayesian ~68
    * **Other Models:** GPT-4.1 Mini ~42, Gemini 1.5 Pro ~51, Human ~47

* **Benchmarks:** The "Bayesian Assistant" dashed line is at ~80%. The "Random" dashed line is at ~33%.

#### **Chart b. Belief-based Prediction**

* **Trend:** Similar upward trend from Original to Bayesian for Gemma and Llama. For Qwen, performance is flat across all three variants (~34-36). GPT-4.1 Mini and Gemini 1.5 Pro show moderate performance.

* **Data Points (Approximate % Accuracy):**
    * **Gemma:** Original ~48, Oracle ~64, Bayesian ~72
    * **Llama:** Original ~47, Oracle ~66, Bayesian ~72
    * **Qwen:** Original ~34, Oracle ~36, Bayesian ~36
    * **Other Models:** GPT-4.1 Mini ~50, Gemini 1.5 Pro ~57, Human ~45

* **Benchmarks:** "Bayesian Assistant" line at ~80%, "Random" line at ~33%.

#### **Chart c. Consistency between Direct and Belief-based Predictions**

* **Trend:** Consistency generally increases from Original to Oracle to Bayesian for Gemma and Llama. Qwen shows low consistency, with a slight increase from Original to Oracle/Bayesian. Gemini 1.5 Pro shows the highest consistency among non-family models.

* **Data Points (Approximate % Consistency):**
    * **Gemma:** Original ~46, Oracle ~76, Bayesian ~81
    * **Llama:** Original ~32, Oracle ~77, Bayesian ~81
    * **Qwen:** Original ~21, Oracle ~36, Bayesian ~35
    * **Other Models:** GPT-4.1 Mini ~44, Gemini 1.5 Pro ~53, Human ~42

### Key Observations

1. **Bayesian Superiority:** The "Bayesian" variant of each model family (Gemma, Llama) is the top performer in both accuracy tasks (Charts a & b) and shows the highest internal consistency (Chart c).

2. **Qwen Anomaly:** The Qwen model family shows a distinct pattern. While its Bayesian variant improves on Direct Prediction (Chart a), it shows no improvement in Belief-based Prediction (Chart b) and has significantly lower consistency scores (Chart c) compared to Gemma and Llama.

3. **Human vs. Model:** Human performance (~47% Direct, ~45% Belief-based) is outperformed by the Oracle and Bayesian variants of Gemma and Llama and by Gemini 1.5 Pro in both accuracy tasks.

4. **Benchmark Context:** The "Bayesian Assistant" benchmark (~80%) is only approached or matched by the top-performing Bayesian model variants (Gemma/Llama Bayesian in Direct Prediction, Gemma/Llama Bayesian in Consistency). Most models and humans perform above the "Random" baseline (~33%), but the Original variants in Direct Prediction (~37–38%) and all Qwen variants in Belief-based Prediction (~34–36%) exceed it only marginally.

5. **Gemini 1.5 Pro Strength:** Among the standalone models, Gemini 1.5 Pro consistently outperforms GPT-4.1 Mini and shows the highest consistency score of the two (~53% vs. ~44%).
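One way to put the scores in the context of the two dashed lines is to express each score as the fraction of the Random-to-Bayesian-Assistant gap it covers. This rescaling is an illustrative assumption and not something shown in the figure; `gap_closed` is a hypothetical helper, and the inputs are the approximate Direct Prediction readings above.

```python
def gap_closed(score, random_level, benchmark_level):
    """Fraction of the Random-to-benchmark gap that a score covers."""
    span = benchmark_level - random_level
    if span <= 0:
        raise ValueError("benchmark must be above the random baseline")
    return (score - random_level) / span


# Approximate readings for chart a (percent).
RANDOM, BAYESIAN_ASSISTANT = 33, 80
direct_scores = {
    "Gemma Bayesian": 76,
    "Gemini 1.5 Pro": 51,
    "Human": 47,
    "GPT-4.1 Mini": 42,
    "Qwen Original": 37,
}

normalized = {
    name: round(gap_closed(score, RANDOM, BAYESIAN_ASSISTANT), 2)
    for name, score in direct_scores.items()
}
for name, value in normalized.items():
    print(f"{name}: {value:.2f}")
```

On this scale, 0 corresponds to the Random line and 1 to the Bayesian Assistant line, so values near 0 mark scores that are only marginally above chance.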

### Interpretation

This data strongly suggests that integrating Bayesian methods ("Bayesian" variants) into language models significantly enhances both their predictive accuracy and the consistency between their direct outputs and their stated beliefs. The near-identical high performance of Gemma-Bayesian and Llama-Bayesian indicates this improvement may be a robust effect of the method rather than a specific model architecture.

The Qwen family's failure to improve in the Belief-based task and its low consistency scores point to a potential disconnect in how that model processes or represents belief states compared to direct prediction. This could indicate a difference in training, architecture, or internal representation.

The fact that advanced proprietary models (GPT-4.1 Mini, Gemini 1.5 Pro) are outperformed by open-weight models equipped with Bayesian techniques (Gemma/Llama Oracle/Bayesian) highlights the potential of specialized inference methods to boost performance beyond raw model scale. The "Bayesian Assistant" line likely represents a theoretical or ideal performance ceiling for this methodology, which the best models are nearing.

Overall, the charts make a case for the value of Bayesian approaches in making model predictions more accurate, reliable (consistent), and aligned with their internal belief states—a crucial factor for trustworthy AI.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Bar Charts: Comparison of AI Models and Human Performance Across Prediction Metrics

### Overview

The image contains three grouped bar charts comparing the performance of various AI models (Gemma, Llama, Qwen, GPT-4.1 Mini, Gemini 1.5 Pro) and humans across three metrics:

1. **Direct Prediction** (Chart a)

2. **Belief-based Prediction** (Chart b)

3. **Consistency between Direct and Belief-based Predictions** (Chart c)

Charts a and b report final-round accuracy (%) and chart c reports final-round consistency (%), each against a "Random" baseline (blue dashed line) and a "Bayesian Assistant" benchmark (orange bars).

### Components/Axes

- **X-axis**: Models/human (Gemma Original, Gemma Oracle, Gemma Bayesian, Llama Original, Llama Oracle, Llama Bayesian, Qwen Original, Qwen Oracle, Qwen Bayesian, GPT-4.1 Mini, Gemini 1.5 Pro, Human).

- **Y-axis**: Final-round accuracy (%) for charts a and b and final-round consistency (%) for chart c, from 0 to 100.

- **Legends**:
  - **Bayesian Assistant**: Orange bars (Bayesian-enhanced models).
  - **Random**: Blue dashed line (baseline performance).

- **Spatial Grounding**:
  - Legends are positioned on the right of each chart.
  - X-axis labels are centered below each chart; y-axis labels are on the left.

### Detailed Analysis

#### Chart a: Direct Prediction

- **Bayesian Models**:
  - Gemma Bayesian: 76%
  - Llama Bayesian: 75%
  - Qwen Bayesian: 68%

- **Non-Bayesian Models**:
  - Gemma Original: 37%
  - Llama Original: 38%
  - Qwen Original: 37%

- **Human**: 47%

#### Chart b: Belief-based Prediction

- **Bayesian Models**:
  - Gemma Bayesian: 72%
  - Llama Bayesian: 72%
  - Qwen Bayesian: 36%

- **Non-Bayesian Models**:
  - Gemma Original: 48%
  - Llama Original: 47%
  - Qwen Original: 34%

- **Human**: 45%

#### Chart c: Consistency between Direct and Belief-based Predictions

- **Bayesian Models**:
  - Gemma Bayesian: 81%
  - Llama Bayesian: 81%
  - Qwen Bayesian: 35%

- **Non-Bayesian Models**:
  - Gemma Original: 46%
  - Llama Original: 32%
  - Qwen Original: 21%

- **Human**: 42%

### Key Observations

1. **Bayesian Models Outperform Others**:
   - Gemma and Llama Bayesian models consistently achieve the highest scores across all metrics (e.g., 76% in Direct Prediction, 81% in Consistency).
   - Qwen Bayesian barely improves on Qwen Original in Belief-based Prediction (36% vs. 34%).

2. **Human Performance**:
   - Humans score mid-range (42–47%) across metrics: ahead of every Original model in Direct Prediction, behind Gemma Original in Belief-based Prediction and Consistency, and well behind the Gemma and Llama Bayesian models throughout.

3. **Qwen Anomalies**:
   - Qwen Bayesian shows uneven results: 68% in Direct Prediction but only 36% in Belief-based Prediction and 35% in Consistency.

4. **Random Baseline**:
   - Most models and humans exceed the Random baseline (34–38%); the Original models in Direct Prediction (37–38%) and the Qwen models in Belief-based Prediction (34–36%) fall within that range.

### Interpretation

- **Bayesian Advantage**: The use of Bayesian methods (e.g., Gemma Bayesian, Llama Bayesian) significantly improves accuracy and consistency, suggesting these models better integrate prior knowledge or uncertainty.

- **Qwen’s Limitations**: Qwen’s Bayesian implementation appears less effective, possibly due to architectural constraints or training data gaps.

- **Human vs. AI**: While humans outperform the Original models in Direct Prediction, they lag behind the Gemma and Llama Bayesian models on every metric, highlighting the latter’s potential for complex reasoning tasks.

- **Consistency as a Proxy for Reliability**: High consistency scores (e.g., 81% for Gemma Bayesian) indicate that direct and belief-based predictions largely agree, a critical factor for real-world applications.

This analysis underscores the transformative impact of Bayesian approaches in AI systems, particularly for tasks requiring robust and reliable predictions.
