# Technical Document Extraction: Attention Forward Speed Benchmark

## 1. Header Information

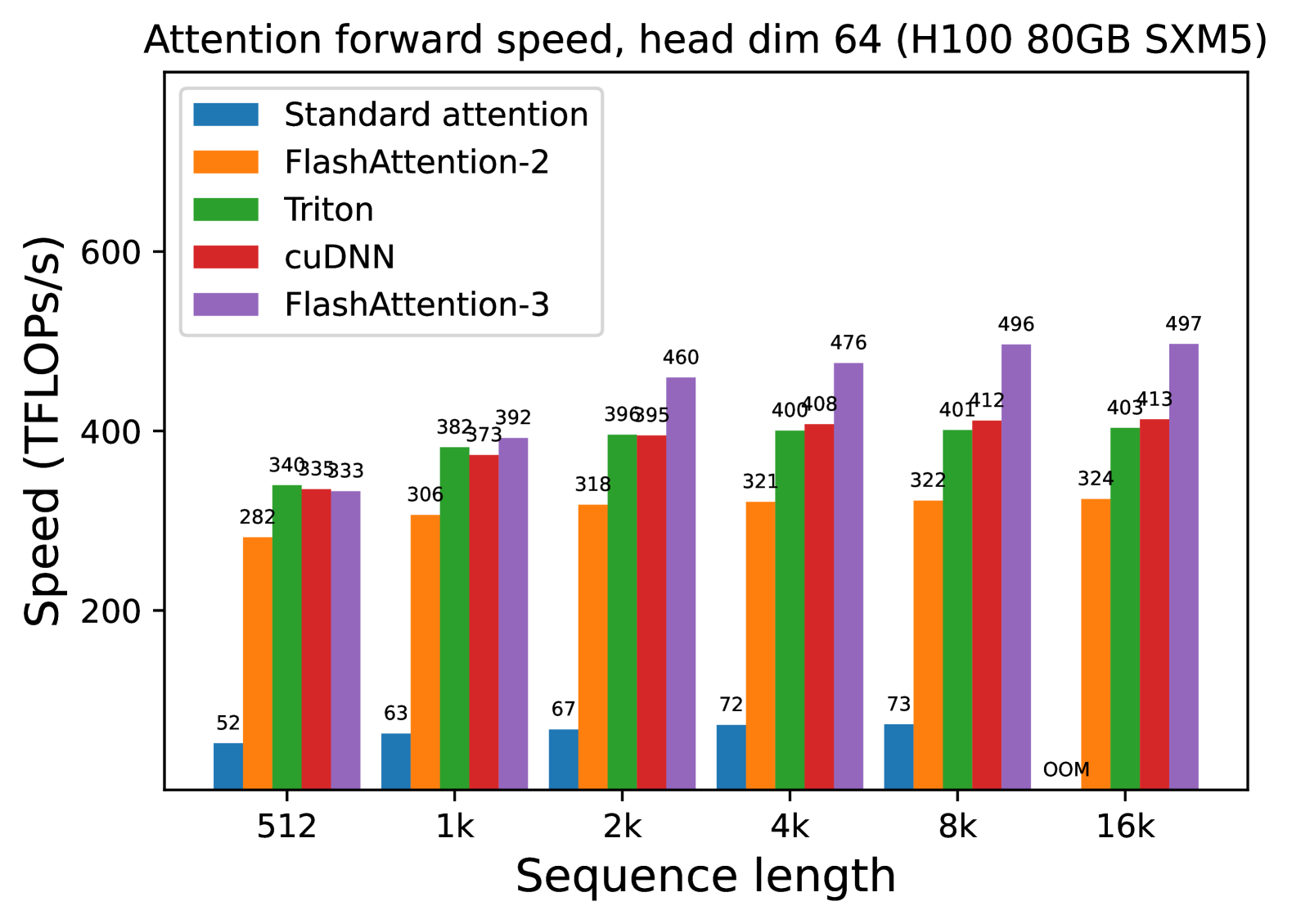

* **Title:** Attention forward speed, head dim 64 (H100 80GB SXM5)

* **Hardware Context:** NVIDIA H100 80GB SXM5 GPU.

* **Parameter Context:** Head dimension is fixed at 64.

## 2. Chart Structure and Metadata

* **Chart Type:** Grouped Bar Chart.

* **Y-Axis Label:** Speed (TFLOPS/s)

* **Y-Axis Scale:** Linear, ranging from 0 to 600+ (markers at 200, 400, 600).

* **X-Axis Label:** Sequence length

* **X-Axis Categories:** 512, 1k, 2k, 4k, 8k, 16k.

* **Legend Placement:** Top-left [x≈0.15, y≈0.85].

### Legend / Data Series Identification

| Color | Label | Trend Description |
| :--- | :--- | :--- |
| **Blue** | Standard attention | Lowest performance; rises slightly from 52 to 73 TFLOPS/s through 8k, then runs out of memory (OOM) at 16k. |
| **Orange** | FlashAttention-2 | Moderate performance; steady upward trend, plateauing around 324 TFLOPS/s. |
| **Green** | Triton | High performance; rapid initial growth, plateauing around 400-403 TFLOPS/s. |
| **Red** | cuDNN | High performance; steady upward trend, outperforming Triton from 4k onward. |
| **Purple** | FlashAttention-3 | Highest performance from 1k onward; the steepest upward trend, reaching 497 TFLOPS/s at 16k. |

## 3. Data Table Extraction

The following table reconstructs the numerical values (TFLOPS/s) displayed above each bar in the chart.

| Sequence Length | Standard attention (Blue) | FlashAttention-2 (Orange) | Triton (Green) | cuDNN (Red) | FlashAttention-3 (Purple) |
| :--- | :---: | :---: | :---: | :---: | :---: |
| **512** | 52 | 282 | 340 | 335 | 333 |
| **1k** | 63 | 306 | 382 | 373 | 392 |
| **2k** | 67 | 318 | 396 | 395 | 460 |
| **4k** | 72 | 321 | 400 | 408 | 476 |
| **8k** | 73 | 322 | 401 | 412 | 496 |
| **16k** | OOM\* | 324 | 403 | 413 | 497 |

*\*OOM: Out of Memory*
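
For further analysis, the table above can be transcribed into a small data structure. The following is a minimal sketch, not part of the original chart: the names `SEQ_LENS`, `SPEEDS`, and `fastest_per_length` are illustrative, and `None` is an assumed encoding for the OOM entry.

```python
# Values transcribed from the data table (TFLOPS/s).
# None is an assumed placeholder for the OOM entry at 16k.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
SPEEDS = {
    "Standard attention": [52, 63, 67, 72, 73, None],
    "FlashAttention-2": [282, 306, 318, 321, 322, 324],
    "Triton": [340, 382, 396, 400, 401, 403],
    "cuDNN": [335, 373, 395, 408, 412, 413],
    "FlashAttention-3": [333, 392, 460, 476, 496, 497],
}


def fastest_per_length(speeds, seq_lens):
    """Map each sequence length to the fastest method, skipping OOM entries."""
    leaders = {}
    for i, seq_len in enumerate(seq_lens):
        # Keep only methods that produced a measurement at this length.
        measured = {name: vals[i] for name, vals in speeds.items() if vals[i] is not None}
        leaders[seq_len] = max(measured, key=measured.get)
    return leaders


leaders = fastest_per_length(SPEEDS, SEQ_LENS)
for seq_len, name in leaders.items():
    print(f"{seq_len:>4}: {name} ({SPEEDS[name][SEQ_LENS.index(seq_len)]} TFLOPS/s)")
```

Keeping the OOM entry as `None` rather than `0` avoids treating a failed run as a measured speed in later comparisons.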

## 4. Key Observations and Trends

### Performance Hierarchy

1. **FlashAttention-3 (Purple):** The throughput leader from 1k onward; at 512 it trails Triton and cuDNN slightly (333 vs. 340 and 335 TFLOPS/s). It scales the most with sequence length, reaching 497 TFLOPS/s at 16k, approximately 1.5x faster than FlashAttention-2 (324 TFLOPS/s).

2. **cuDNN (Red) & Triton (Green):** These two implementations perform similarly. Triton is slightly faster at 512, 1k, and 2k, but cuDNN overtakes it at 4k (408 vs. 400 TFLOPS/s) and keeps a lead of roughly 8 to 11 TFLOPS/s thereafter.

3. **FlashAttention-2 (Orange):** Maintains a consistent performance level between 282 and 324 TFLOPS/s, significantly faster than standard attention but trailing the newer optimizations.

4. **Standard Attention (Blue):** Performs poorly across all tests, never exceeding 73 TFLOPS/s.
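
The Triton/cuDNN crossover described in the list above can be checked directly against the table values. This is a small sketch; the helper name `first_crossover` is hypothetical, and the two lists are transcribed from the data table.

```python
# Triton and cuDNN rows from the data table (TFLOPS/s), in sequence-length order.
SEQ_LENS = ["512", "1k", "2k", "4k", "8k", "16k"]
TRITON = [340, 382, 396, 400, 401, 403]
CUDNN = [335, 373, 395, 408, 412, 413]


def first_crossover(seq_lens, baseline, challenger):
    """Return the first sequence length where challenger exceeds baseline, else None."""
    for seq_len, base, chal in zip(seq_lens, baseline, challenger):
        if chal > base:
            return seq_len
    return None


crossover = first_crossover(SEQ_LENS, TRITON, CUDNN)
print(f"cuDNN first exceeds Triton at sequence length {crossover}")

# Per-length margin (cuDNN minus Triton); negative values mean Triton leads.
margins = [c - t for t, c in zip(TRITON, CUDNN)]
print(dict(zip(SEQ_LENS, margins)))
```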

### Scalability and Memory

* **Memory Limit:** "Standard attention" fails at the 16k sequence length due to an **OOM (Out of Memory)** error, whereas all optimized kernels (FlashAttention variants, Triton, and cuDNN) successfully process the 16k sequence.

* **Efficiency Gains:** In absolute terms, the gap between every optimized kernel and standard attention is wider at 8k than at 512; for FlashAttention-3 it grows from 281 to 423 TFLOPS/s. At 8k, FlashAttention-3 is roughly **6.8x faster** than standard attention (496 vs. 73 TFLOPS/s).
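
The speedup figures quoted in this section follow from simple ratios of the table values. A minimal sketch of that arithmetic, with illustrative variable names and the OOM entry for standard attention at 16k left out:

```python
# Table values in TFLOPS/s; standard attention has no 16k entry (OOM).
STANDARD = {"512": 52, "1k": 63, "2k": 67, "4k": 72, "8k": 73}
FA2 = {"512": 282, "1k": 306, "2k": 318, "4k": 321, "8k": 322, "16k": 324}
FA3 = {"512": 333, "1k": 392, "2k": 460, "4k": 476, "8k": 496, "16k": 497}

# Relative speedup of FlashAttention-3 over standard attention at 8k.
fa3_vs_standard_8k = FA3["8k"] / STANDARD["8k"]

# Relative speedup of FlashAttention-3 over FlashAttention-2 at 16k.
fa3_vs_fa2_16k = FA3["16k"] / FA2["16k"]

# Absolute gap between FlashAttention-3 and standard attention at each
# sequence length that standard attention completed.
abs_gap = {n: FA3[n] - STANDARD[n] for n in STANDARD}

print(f"FA3 vs standard at 8k: {fa3_vs_standard_8k:.1f}x")
print(f"FA3 vs FA2 at 16k:     {fa3_vs_fa2_16k:.2f}x")
print(f"Absolute gap (TFLOPS/s): {abs_gap}")
```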