## Line Charts: Training Loss and Average Reward Over Iterations

### Overview

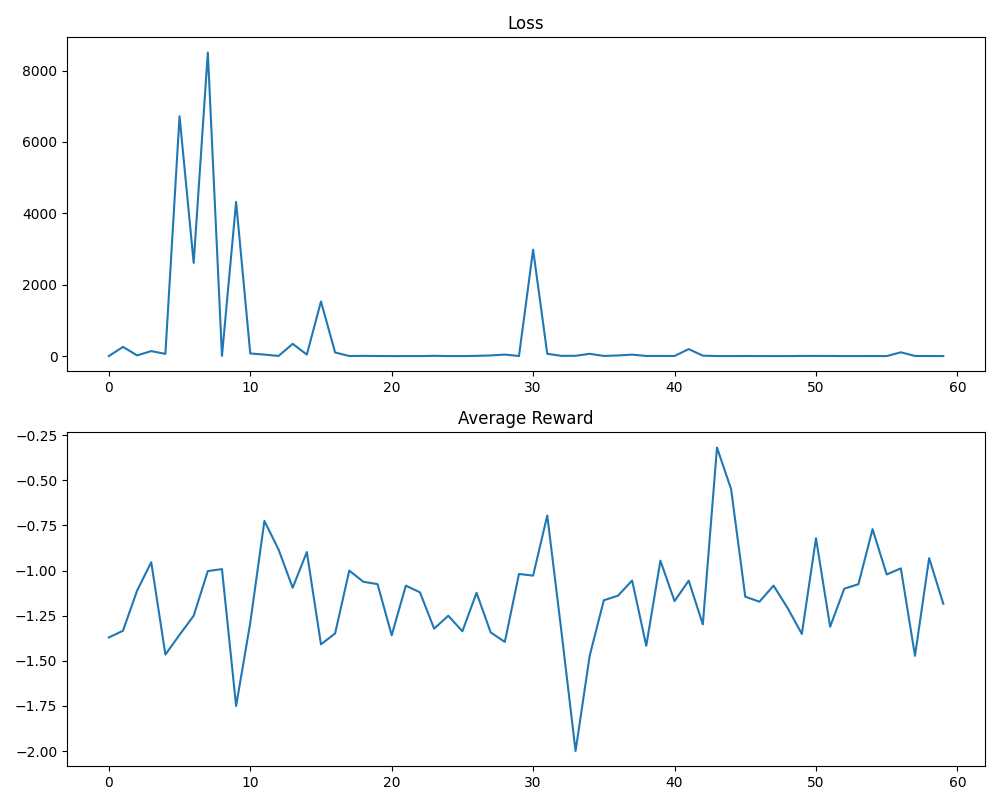

The image displays two vertically stacked line charts that share a common x-axis (0 to 60), which likely represents training iterations or epochs. The top chart tracks a "Loss" metric and the bottom chart tracks "Average Reward." Together, the two metrics suggest a machine learning training process, most likely reinforcement learning.

### Components/Axes

**Top Chart: "Loss"**

* **Title:** "Loss" (centered above the chart).

* **Y-axis:** Numerical scale from 0 to 8000, with major tick marks at 0, 2000, 4000, 6000, and 8000.

* **X-axis:** Numerical scale from 0 to 60, with major tick marks at 0, 10, 20, 30, 40, 50, and 60.

* **Data Series:** A single blue line representing the loss value over iterations.

**Bottom Chart: "Average Reward"**

* **Title:** "Average Reward" (centered above the chart).

* **Y-axis:** Numerical scale from -2.00 to -0.25, with major tick marks at -2.00, -1.75, -1.50, -1.25, -1.00, -0.75, -0.50, and -0.25.

* **X-axis:** Identical to the top chart (0 to 60).

* **Data Series:** A single blue line representing the average reward value over iterations.

**Spatial Layout:** The "Loss" chart occupies the top half of the image. The "Average Reward" chart occupies the bottom half. There is no legend, as each chart contains only one data series.

### Detailed Analysis

**Loss Chart Trend & Data Points:**

The loss line exhibits extreme volatility in the first third of training before stabilizing at a very low value.

* **Initial Phase (x=0 to ~15):** Characterized by massive spikes.

    * Starts near 0 at x=0.
    * First major spike: peaks at approximately **6500** around x=5.
    * Second, highest spike: peaks at approximately **8500** around x=7-8, slightly above the highest labeled tick (8000).
    * Third spike: peaks at approximately **4200** around x=9.
    * Fourth spike: peaks at approximately **1500** around x=15.

* **Stabilization Phase (x≈15 to 60):** After the spike at x≈15, the loss drops sharply and stays low apart from one isolated spike.

    * A notable, isolated spike occurs around **x=30**, reaching approximately **3000**.
    * For most iterations from x=16 onward (excluding the spike at x=30), the loss appears to be **below 500**, often hovering near or just above 0.
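The "isolated spike" and "below 500" readings above are visual estimates from the plot. If the logged loss values were available, they could be checked programmatically. The sketch below assumes the loss is a plain list indexed by iteration; `find_spikes` and `fraction_below` are hypothetical helpers (not from any library), and the toy series is invented for illustration, not taken from the chart.

```python
from typing import List, Sequence


def find_spikes(values: Sequence[float], threshold: float) -> List[int]:
    """Return indices of local maxima that exceed `threshold`.

    A point counts as a local maximum if it is strictly greater than its
    left neighbour and at least as large as its right neighbour; the first
    and last points are compared with their single neighbour only.
    """
    spikes = []
    last = len(values) - 1
    for i, v in enumerate(values):
        if v <= threshold:
            continue
        rises = i == 0 or v > values[i - 1]
        falls = i == last or v >= values[i + 1]
        if rises and falls:
            spikes.append(i)
    return spikes


def fraction_below(values: Sequence[float], start: int, threshold: float) -> float:
    """Fraction of points from index `start` onward that lie below `threshold`."""
    tail = values[start:]
    if not tail:
        raise ValueError("no points at or after `start`")
    return sum(1 for v in tail if v < threshold) / len(tail)


# Hypothetical toy series (NOT the plotted data) with two spikes above 500.
toy_loss = [0.0, 50.0, 900.0, 40.0, 30.0, 700.0, 20.0, 10.0]
print(find_spikes(toy_loss, threshold=500.0))              # [2, 5]
print(fraction_below(toy_loss, start=3, threshold=500.0))  # 0.8
```

The threshold is a judgment call; a cutoff such as 500 mirrors the visual estimate in the text rather than any property of the training run.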

**Average Reward Chart Trend & Data Points:**

The average reward line shows persistent, high-frequency oscillation throughout the entire training period, with no clear upward or downward trend.

* **Range:** The reward fluctuates primarily between **-1.75 and -0.75**.

* **Notable Extremes:**

    * **Global Minimum:** A sharp dip to approximately **-2.00** occurs around **x=33**.
    * **Global Maximum:** A sharp peak to approximately **-0.30** occurs around **x=43**.

* **Pattern:** The line is jagged, indicating significant variance in reward from one iteration to the next. It does not show signs of convergence to a stable value.
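The "no clear upward or downward trend" and "jagged" descriptions can be made more precise by fitting a least-squares line to the reward series and comparing its slope with the typical iteration-to-iteration change. The helpers below are a hypothetical, descriptive sketch using only the standard library; they assume one reward value per iteration and are not a statistical significance test.

```python
from typing import Sequence


def ols_slope(values: Sequence[float]) -> float:
    """Least-squares slope of `values` regressed on their index 0..n-1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((i - x_mean) * (y - y_mean) for i, y in enumerate(values))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return sxy / sxx


def mean_abs_step(values: Sequence[float]) -> float:
    """Average absolute change between consecutive values (a jaggedness measure)."""
    if len(values) < 2:
        raise ValueError("need at least two points")
    steps = [abs(b - a) for a, b in zip(values, values[1:])]
    return sum(steps) / len(steps)


# Hypothetical toy rewards (NOT the plotted data).
oscillating = [-1.0, -1.5, -1.0, -1.5, -1.0]
improving = [-1.5, -1.25, -1.0, -0.75]
print(abs(ols_slope(oscillating)) < 1e-9)  # True: no linear drift
print(ols_slope(improving))                # 0.25
print(mean_abs_step(oscillating))          # 0.5
```

For the plotted run, a slope that is small relative to `mean_abs_step` would match the "oscillating, no trend" reading; the reward values would first have to be exported from the training logs.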

### Key Observations

1. **Divergent Behavior:** The two metrics show completely different behaviors. Loss converges to near-zero after an initial volatile period, while Average Reward remains highly volatile and does not improve (increase) over time.

2. **Loss Spike Anomaly:** The isolated loss spike at iteration 30 is notable because it occurs after the metric had already stabilized, suggesting a temporary instability in the training process.

3. **Reward Volatility:** The lack of any discernible trend in the Average Reward is a critical observation. The model's performance, as measured by reward, is not improving despite the decreasing loss.

4. **Temporal Correlation:** The period of highest loss volatility (x=0-15) corresponds to a period of relatively lower-magnitude reward fluctuations. The most extreme reward values (both min and max) occur later, during the period of low loss.
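Observations 1 and 4 are qualitative. Given the logged series, they could be checked with a Pearson correlation between loss and reward and by comparing the spread of reward before and after the x≈15 boundary. In the sketch below, `pearson` and `phase_std` are hypothetical names; `statistics.pstdev` is the standard-library population standard deviation. The example inputs are toy values, not the plotted data.

```python
import math
import statistics
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length >= 2")
    mx = sum(xs) / n
    my = sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


def phase_std(values: Sequence[float], start: int, end: int) -> float:
    """Population standard deviation of values[start:end] (one training phase)."""
    segment = list(values[start:end])
    if not segment:
        raise ValueError("empty phase")
    return statistics.pstdev(segment)


print(pearson([1.0, 2.0, 3.0], [2.0, 4.0, 6.0]))  # 1.0
print(pearson([1.0, 2.0, 3.0], [3.0, 2.0, 1.0]))  # -1.0
toy = [1.0, 1.0, 1.0, 5.0, -3.0]
print(phase_std(toy, 0, 3), phase_std(toy, 3, 5))  # 0.0 4.0
```

A single correlation coefficient over the whole run can hide phase-dependent behavior, which is why the per-phase spread is computed separately.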

### Interpretation

This pair of charts likely depicts a **reinforcement learning (RL) training run**. In RL, the reported "Loss" is typically a value-function error (how far predicted returns are from observed or bootstrapped targets), a policy-gradient surrogate objective, or a weighted sum of the two, while "Average Reward" measures the agent's actual performance in the environment.
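The chart does not identify which loss is plotted. As one common example, a critic (value-function) loss is the mean squared error between predicted values and observed returns. The sketch below assumes Monte Carlo discounted returns as targets (many algorithms use bootstrapped temporal-difference targets instead), and `discounted_returns` and `value_loss` are hypothetical names. It illustrates why a low loss need not mean a high reward: a critic can fit the returns of a poor policy exactly.

```python
from typing import List, Sequence


def discounted_returns(rewards: Sequence[float], gamma: float) -> List[float]:
    """Compute G_t = r_t + gamma * G_{t+1} backwards over one episode."""
    returns = [0.0] * len(rewards)
    g = 0.0
    for t in reversed(range(len(rewards))):
        g = rewards[t] + gamma * g
        returns[t] = g
    return returns


def value_loss(predictions: Sequence[float], targets: Sequence[float]) -> float:
    """Mean squared error between predicted values and return targets."""
    if len(predictions) != len(targets) or not predictions:
        raise ValueError("need non-empty sequences of equal length")
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


# A hypothetical poor policy that earns -1 on every step.
rewards = [-1.0, -1.0, -1.0]
targets = discounted_returns(rewards, gamma=1.0)  # [-3.0, -2.0, -1.0]
perfect_critic = [-3.0, -2.0, -1.0]
print(value_loss(perfect_critic, targets))         # 0.0
print(sum(rewards) / len(rewards))                 # -1.0
```

Here the loss is exactly zero while the average reward stays at -1.0, mirroring the decoupling seen in the two charts.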

* **What the data suggests:** The agent is successfully reducing its training loss, as evidenced by the convergence after iteration 15. However, this is **not translating into better task performance** (higher reward). In RL this is possible because the loss is computed on data generated by the agent's own current policy: a value function can, for example, predict the returns of a poor policy very accurately. The agent may also be "overfitting" to its prediction targets, or there may be a **misalignment between its loss function and the true reward objective**.

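* **Relationship between elements:** The charts point to a potential flaw in the training setup. The loss is being minimized effectively, but it is a poor indicator of progress toward the ultimate goal of maximizing reward. In RL this decoupling is common because the loss is a surrogate objective rather than the return itself. It should not be confused with **"reward hacking"**, in which the agent drives the reward *up* through unintended behavior; here the reward itself is not improving, which points instead to a **mis-specified or ineffective training objective**.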

* **Notable Anomaly:** The spike in loss at x=30, which does not correspond to a dramatic change in the reward trend, further indicates that the loss signal can be decoupled from environmental performance.

* **Conclusion:** The training process is unstable from a reward perspective. While the learning algorithm is reducing its loss, it is not consistently improving the agent's behavior. This suggests re-examining the reward function, the learning algorithm and its hyperparameters, or the exploration strategy, so that reducing the loss actually coincides with increasing reward.