## Line Charts: AIME-24 Accuracy vs (binned) Length of Thoughts

### Overview

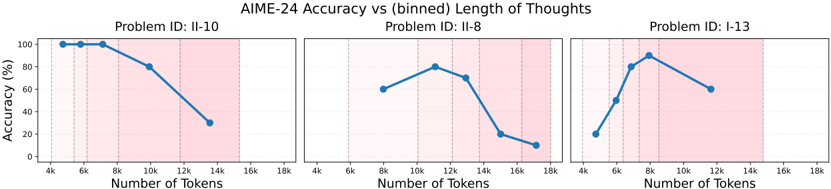

The image displays three separate line charts arranged horizontally, each plotting the relationship between the number of tokens (a measure of thought length) and the accuracy percentage for a specific problem from the AIME-24 dataset. The overall title is "AIME-24 Accuracy vs (binned) Length of Thoughts." Each chart has a distinct trend, suggesting that the optimal length of reasoning (in tokens) varies significantly by problem.
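The figure does not say how the "binned" accuracy was computed. One plausible reading, sketched below in Python, groups sampled solutions by token count into fixed-width bins and reports the percentage of correct answers per bin. The `binned_accuracy` helper, the 2k bin width, and the toy samples are assumptions for illustration, not values taken from the chart.

```python
from collections import defaultdict


def binned_accuracy(samples, bin_width=2000):
    """Group (num_tokens, is_correct) samples into fixed-width token bins.

    Returns a dict mapping each bin's lower edge to the accuracy (%) of the
    samples that fall in that bin. The bin width is an assumed parameter.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for num_tokens, is_correct in samples:
        bin_start = (num_tokens // bin_width) * bin_width
        totals[bin_start] += 1
        correct[bin_start] += int(is_correct)
    return {b: 100.0 * correct[b] / totals[b] for b in sorted(totals)}


# Made-up samples for a single hypothetical problem: (token count, answer correct?)
toy_samples = [
    (4100, True), (4900, True),
    (6200, True), (7800, False),
    (8100, False), (9500, False),
]
result = binned_accuracy(toy_samples)
print(result)
```

Plotting the resulting bin edges against the accuracies, one problem per panel, would give a chart of the kind described here.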

### Components/Axes

* **Main Title:** "AIME-24 Accuracy vs (binned) Length of Thoughts" (centered at the top).

* **Chart Arrangement:** Three charts in a single row.

* **Individual Chart Titles (Top-Center of each panel):**

  * Left Chart: "Problem ID: II-10"

  * Middle Chart: "Problem ID: II-8"

  * Right Chart: "Problem ID: I-13"

* **X-Axis (All Charts):** Labeled "Number of Tokens". The scale is linear, with major tick marks at 4k, 6k, 8k, 10k, 12k, 14k, 16k, and 18k.

* **Y-Axis (Left Chart Only):** Labeled "Accuracy (%)". The scale is linear from 0 to 100, with major ticks at 0, 20, 40, 60, 80, and 100. The middle and right charts share this same vertical scale but do not have the axis label repeated.

* **Data Series:** Each chart contains a single blue line with circular data points.

* **Legend:** No explicit legend is present within the chart areas. The single data series per chart is implied by the title and axis labels.

* **Background:** Each chart has a light pink shaded region covering the area from approximately 12k tokens to the right edge (18k). The meaning of this shading is not labeled.

### Detailed Analysis

**Chart 1: Problem ID: II-10 (Left)**

* **Trend:** The line starts at maximum accuracy and remains flat for the first two data points, then slopes downward steadily.

* **Data Points (Approximate):**

  * At 4k tokens: Accuracy ≈ 100%

  * At 6k tokens: Accuracy ≈ 100%

  * At 10k tokens: Accuracy ≈ 80%

  * At 14k tokens: Accuracy ≈ 30%

**Chart 2: Problem ID: II-8 (Middle)**

* **Trend:** The line shows an initial increase to a peak, followed by a steep decline.

* **Data Points (Approximate):**

  * At 8k tokens: Accuracy ≈ 60%

  * At 12k tokens: Accuracy ≈ 80% (Peak)

  * At 16k tokens: Accuracy ≈ 20%

  * At 18k tokens: Accuracy ≈ 10%

**Chart 3: Problem ID: I-13 (Right)**

* **Trend:** The line forms an inverted "U" shape, rising to a sharp peak and then falling.

* **Data Points (Approximate):**

  * At 4k tokens: Accuracy ≈ 20%

  * At 6k tokens: Accuracy ≈ 50%

  * At 8k tokens: Accuracy ≈ 90% (Peak)

  * At 12k tokens: Accuracy ≈ 60%

### Key Observations

1. **Problem-Specific Optima:** Each problem exhibits a distinct optimal token length for peak accuracy: ~4-6k for II-10, ~12k for II-8, and ~8k for I-13.

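2. **Performance Degradation:** For all three problems, accuracy decreases significantly when the number of tokens exceeds the identified optimum. Going by the approximate readings, the decline is steepest for II-8, which falls from about 80% at 12k tokens to about 20% at 16k; II-10 drops from about 80% to about 30% between 10k and 14k, and I-13 drops from about 90% to about 60% between 8k and 12k.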

3. **Initial Performance Variance:** The starting accuracy at the lowest measured token count varies widely: very high for II-10 (100%), moderate for II-8 (60%), and low for I-13 (20%).

4. **Shaded Region:** The consistent pink shading from ~12k tokens onward may indicate a zone of "excessive" or "diminishing return" thought length. Every reading listed beyond the 12k boundary is at or below about 30%, although II-8 still peaks (≈ 80%) right at that boundary.
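The observations above can be cross-checked against the approximate readings from the Detailed Analysis. The Python sketch below is illustrative only: the `readings` dict and the `summarize` helper are hypothetical names, and the values are visual estimates transcribed from this description, not exact data.

```python
# Approximate readings: (tokens in thousands, accuracy in %), estimated by eye.
readings = {
    "II-10": [(4, 100), (6, 100), (10, 80), (14, 30)],
    "II-8": [(8, 60), (12, 80), (16, 20), (18, 10)],
    "I-13": [(4, 20), (6, 50), (8, 90), (12, 60)],
}


def summarize(points):
    """Return the peak accuracy, where it occurs, and the drop to the last point."""
    peak_acc = max(acc for _, acc in points)
    peak_tokens_k = [tok for tok, acc in points if acc == peak_acc]
    _, last_acc = points[-1]
    return {
        "peak_acc": peak_acc,
        "peak_tokens_k": peak_tokens_k,
        "drop_to_last": peak_acc - last_acc,
    }


summary = {pid: summarize(pts) for pid, pts in readings.items()}
for pid, stats in summary.items():
    print(pid, stats)
```

With these estimates, the peak sits at 4k to 6k tokens for II-10, at 12k for II-8, and at 8k for I-13, and accuracy at the last listed point is lower than the peak for every problem.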

### Interpretation

The data suggests a non-monotonic relationship between reasoning length (token count) and problem-solving accuracy for these AIME-24 problems. The core insight is that **more reasoning is not always better**. There appears to be a "sweet spot" of token usage that maximizes accuracy, which is highly dependent on the specific problem.

* **Problem II-10** seems to be solvable with concise reasoning; additional tokens beyond 6k may introduce confusion or errors, leading to a steady decline.

* **Problem II-8** benefits from more extended reasoning up to a point (12k tokens), after which performance collapses, possibly indicating over-complication or getting lost in the reasoning process.

* **Problem I-13** shows the most dramatic sensitivity, requiring a precise amount of reasoning (~8k tokens). Too little reasoning leads to failure, while too much also leads to significant performance loss.

The shaded region (12k+ tokens) coincides with the declining phase for the two problems that have readings beyond 12k (II-10 and II-8), which is consistent with the hypothesis that very long thought processes are detrimental for this set of tasks. This analysis implies that for complex problem-solving, calibrating the depth or length of the reasoning process is crucial, and an optimal strategy may need to be tailored to the problem's inherent complexity or structure.