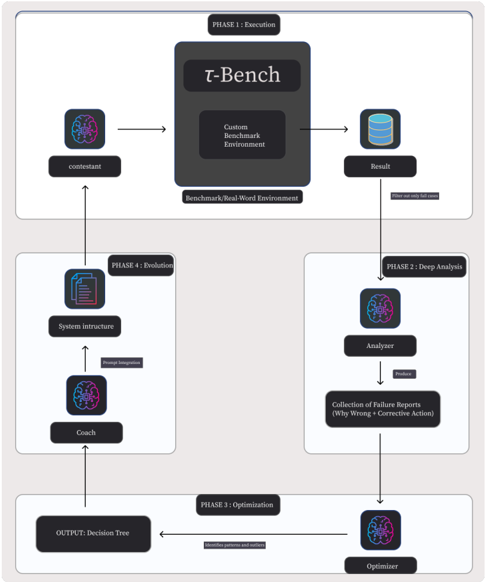

## Flowchart: Iterative Performance Evaluation and Optimization System

### Overview

The flowchart depicts a cyclical process for evaluating, analyzing, and optimizing performance in a benchmark environment. It consists of four interconnected phases: Execution, Deep Analysis, Optimization, and Evolution. The system integrates AI components (Contestant, Coach, Analyzer, Optimizer) with a custom benchmark environment (τ-Bench) to iteratively refine decision-making capabilities.

### Components/Axes

1. **Phases**:

- **Phase 1: Execution** (Top)

- **Phase 4: Evolution** (Left)

- **Phase 2: Deep Analysis** (Right)

- **Phase 3: Optimization** (Bottom)

2. **Key Elements**:

- **τ-Bench**: Central benchmark/real-world environment

- **Contestant**: AI agent undergoing evaluation

- **Analyzer**: Processes failure reports

- **Optimizer**: Generates decision trees

- **Coach**: Integrates system infrastructure and prompts

- **System Infrastructure**: Technical framework for prompt integration

3. **Flow Direction**:

- Solid arrows indicate primary workflow

- Dashed arrows represent feedback loops

- Bidirectional connections between phases

### Detailed Analysis

1. **Phase 1: Execution**

- Contestant interacts with τ-Bench (custom benchmark environment)

- Results are stored in a database

- Filtering mechanism selects only "fail cases" for further analysis

2. **Phase 2: Deep Analysis**

- Analyzer processes failure reports

- Extracts "Why Wrong" diagnostics and corrective actions

- Produces structured failure data for optimization

3. **Phase 3: Optimization**

- Optimizer uses failure data to build decision trees

- Identifies patterns and outliers in performance

- Outputs refined decision-making frameworks

4. **Phase 4: Evolution**

- Coach integrates system infrastructure with prompt engineering

- Receives optimized decision trees from Phase 3

- Feeds improved strategies back to the Contestant via τ-Bench
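
The four phases above can be sketched as a single function that runs one cycle. This is a minimal illustration under stated assumptions, not the system's actual implementation: the names `Result`, `FailureReport`, `run_cycle`, and the `toy_*` stand-ins are hypothetical, the "decision tree" is simplified to a flat condition-to-action mapping, and the prompt is assumed to be the only thing the Coach changes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Result:
    task_id: str
    passed: bool
    transcript: str


@dataclass
class FailureReport:
    task_id: str
    why_wrong: str
    corrective_action: str


def run_cycle(
    prompt: str,
    tasks: List[str],
    contestant: Callable[[str, str], Result],
    analyzer: Callable[[Result], FailureReport],
    optimizer: Callable[[List[FailureReport]], Dict[str, str]],
    coach: Callable[[str, Dict[str, str]], str],
) -> Tuple[str, List[Result]]:
    # Phase 1: Execution. Run every task, then keep only the fail cases.
    results = [contestant(prompt, task) for task in tasks]
    failures = [r for r in results if not r.passed]
    # Phase 2: Deep Analysis. One structured report per failure.
    reports = [analyzer(r) for r in failures]
    # Phase 3: Optimization. Hypothetical simplification: the "decision tree"
    # is a flat mapping from a condition to a corrective action.
    tree = optimizer(reports)
    # Phase 4: Evolution. The Coach folds the tree into the next prompt.
    return coach(prompt, tree), results


# Toy stand-ins (all hypothetical) so the sketch runs end to end.
def toy_contestant(prompt: str, task: str) -> Result:
    # Pretend the Contestant succeeds only if the prompt mentions the task.
    return Result(task, task in prompt, f"attempted {task}")


def toy_analyzer(result: Result) -> FailureReport:
    return FailureReport(
        result.task_id,
        "no rule covered this task",
        f"add a rule for {result.task_id}",
    )


def toy_optimizer(reports: List[FailureReport]) -> Dict[str, str]:
    return {r.task_id: r.corrective_action for r in reports}


def toy_coach(prompt: str, tree: Dict[str, str]) -> str:
    rules = "".join(
        f"\n- if task is {cond}: {action}" for cond, action in sorted(tree.items())
    )
    return prompt + rules
```

Calling `run_cycle` repeatedly, each time with the prompt returned by the previous call, gives the closed loop: in this toy setup the first round fails on every task, and the second round passes because the revised prompt now mentions each one.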

### Key Observations

1. **Cyclical Nature**: The system forms a closed-loop process with continuous feedback between phases

2. **Failure-Driven Improvement**: Only failed cases from Phase 1 trigger deeper analysis

3. **Multi-Stage Refinement**: Each phase builds on previous outputs (results → analysis → optimization → evolution)

4. **Human-AI Collaboration**: The Coach component bridges technical systems with strategic prompt engineering

5. **Modular Architecture**: Components operate independently but interconnect through defined interfaces
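
The cyclical ordering noted above can be written down as a small successor map. This is only an illustrative encoding of the phase labels from the flowchart; `NEXT_PHASE` and `walk_phases` are hypothetical names, and the dashed feedback arrows are not modeled.

```python
from typing import List

# Primary workflow only: each phase hands its output to the next one.
NEXT_PHASE = {
    "Execution": "Deep Analysis",
    "Deep Analysis": "Optimization",
    "Optimization": "Evolution",
    "Evolution": "Execution",
}


def walk_phases(start: str, steps: int) -> List[str]:
    """Follow the primary workflow for `steps` transitions from `start`."""
    order = [start]
    for _ in range(steps):
        order.append(NEXT_PHASE[order[-1]])
    return order
```

Walking four transitions from `"Execution"` ends back at `"Execution"`, which is what makes the process a closed loop rather than a one-pass pipeline.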

### Interpretation

This flowchart represents an iterative AI improvement pipeline that combines:

- **Controlled Testing**: τ-Bench provides a standardized evaluation environment

- **Failure Analysis**: Systematic diagnosis of errors through the Analyzer

- **Adaptive Learning**: Optimizer creates decision trees to address identified weaknesses

- **Strategic Integration**: Coach component ensures human-guided prompt engineering enhances AI capabilities

The system emphasizes iterative improvement through:

1. **Data-Driven Refinement**: Each phase processes outputs from the previous stage

2. **Failure-Centric Learning**: Focus on error cases drives continuous improvement

3. **Human-AI Synergy**: The Coach component maintains strategic oversight while leveraging automated optimization

Notable design choices include:

- Bidirectional arrows between phases suggesting dynamic adjustment capabilities

- Database symbol indicating persistent storage of results and failure data

- Explicit separation of analytical and optimization functions for specialized processing
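
As a rough illustration of the persistent-storage and fail-case filtering ideas, the sketch below uses Python's standard `sqlite3` module. The flowchart does not specify a database or schema, so the table layout and the helper names `open_store`, `record`, and `fail_cases` are assumptions made for this example.

```python
import sqlite3
from typing import List, Tuple


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    # Assumed schema: one row per task attempt, tagged with its cycle number.
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS results ("
        "round_no INTEGER NOT NULL, "
        "task_id TEXT NOT NULL, "
        "passed INTEGER NOT NULL, "
        "transcript TEXT NOT NULL)"
    )
    return conn


def record(conn: sqlite3.Connection, round_no: int, task_id: str,
           passed: bool, transcript: str) -> None:
    conn.execute(
        "INSERT INTO results VALUES (?, ?, ?, ?)",
        (round_no, task_id, int(passed), transcript),
    )
    conn.commit()


def fail_cases(conn: sqlite3.Connection, round_no: int) -> List[Tuple[str, str]]:
    # Only failed attempts from the given cycle go on to analysis.
    cursor = conn.execute(
        "SELECT task_id, transcript FROM results "
        "WHERE round_no = ? AND passed = 0 ORDER BY task_id",
        (round_no,),
    )
    return cursor.fetchall()
```

Keeping every attempt, not just the failures, means later cycles can be compared against earlier ones, while the filter query keeps the Analyzer's input limited to fail cases.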

Overall, the architecture balances automated optimization with human strategic guidance: failures from one cycle are diagnosed by the Analyzer, turned into decision trees by the Optimizer, and fed back to the Contestant by the Coach as revised prompts for the next cycle.