## Diagram: Transformer LLM with Explicit Memory

### Overview

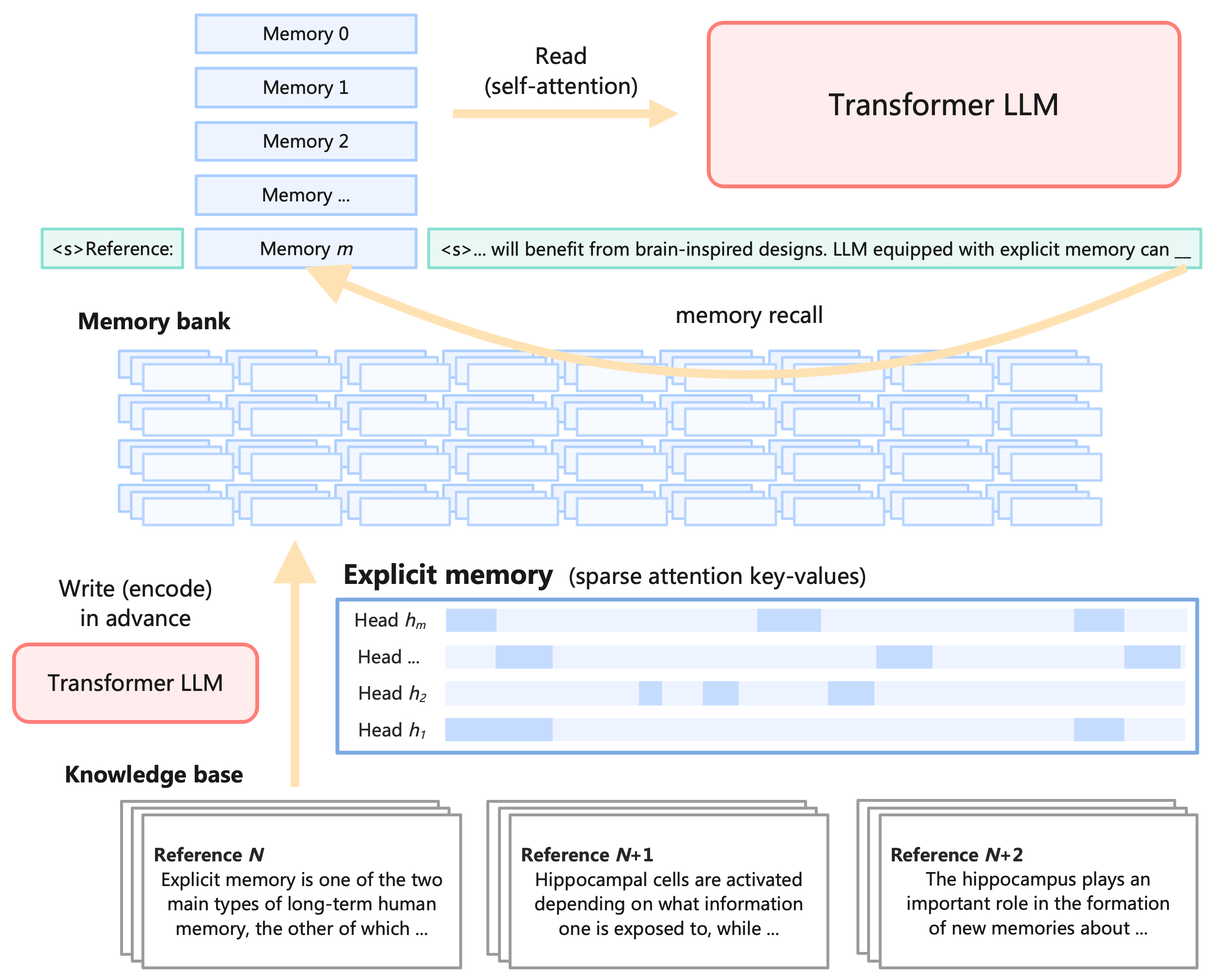

The diagram shows how a Transformer Large Language Model (LLM) can be augmented with explicit memory, an idea the figure ties to brain-inspired design. It traces the flow of information between a knowledge base, a memory bank, and the Transformer LLM, and distinguishes two processes: writing (encoding) memory and reading (recalling) it.

### Components

* **Memory Bank:** A series of memory slots labeled "Memory 0", "Memory 1", "Memory 2", "Memory ...", and "Memory m".

* **Transformer LLM (Top-Right):** A red rounded rectangle labeled "Transformer LLM".

* **Transformer LLM (Bottom-Left):** A red rounded rectangle labeled "Transformer LLM".

* **Read (self-attention):** An arrow pointing from the memory slots to the Transformer LLM (top-right).

* **Write (encode) in advance:** An arrow pointing from the Transformer LLM (bottom-left) to the explicit memory.

* **Explicit Memory (sparse attention key-values):** A table-like structure representing the explicit memory, with rows labeled "Head h\_m", "Head ...", "Head h\_2", and "Head h\_1". The cells in the table are either empty or filled with a light blue color, indicating the presence of a key-value pair.

* **Knowledge Base:** Three stacked cards labeled "Reference N", "Reference N+1", and "Reference N+2".

* **Memory Recall:** An arrow pointing from the memory bank to the text `<s>... will benefit from brain-inspired designs. LLM equipped with explicit memory can __`.

* **Reference:** Text snippets `<s>Reference:` and `<s>... will benefit from brain-inspired designs. LLM equipped with explicit memory can __`.

### Detailed Analysis

* **Memory Bank:** The memory bank consists of indexed slots labeled "Memory 0" through "Memory m", that is, m + 1 memory locations.

* **Read (self-attention):** The "Read (self-attention)" arrow indicates that the Transformer LLM accesses the memory bank through a self-attention mechanism.

* **Transformer LLM:** The Transformer LLM is represented twice in the diagram, once for reading memory (top-right) and once for writing (encoding) memory (bottom-left).

* **Write (encode) in advance:** The "Write (encode) in advance" arrow indicates that the Transformer LLM writes information into the explicit memory before it is needed.

* **Explicit Memory (sparse attention key-values):** The explicit memory is organized into "Heads," each with a sparse set of key-value pairs. The presence of light blue cells indicates the existence of a key-value pair for a specific head.

    * Head h\_m: Has a key-value pair in the first column.

    * Head ...: Has a key-value pair in the second column.

    * Head h\_2: Has key-value pairs in the third and fifth columns.

    * Head h\_1: Has key-value pairs in the first and fourth columns.

* **Knowledge Base:** The knowledge base contains references to external information.

    * **Reference N:** "Explicit memory is one of the two main types of long-term human memory, the other of which ..."

    * **Reference N+1:** "Hippocampal cells are activated depending on what information one is exposed to, while ..."

    * **Reference N+2:** "The hippocampus plays an important role in the formation of new memories about ..."

* **Memory Recall:** The "Memory Recall" arrow links the memory bank to the prompt text, indicating that stored memories are recalled to supply context for the prompt.

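* **Reference:** Both snippets begin with `<s>`, conventionally a start-of-sequence token. `<s>Reference:` introduces reference text, and the second snippet is an unfinished sentence ending in a blank, which suggests that the LLM completes it with the help of recalled memory.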
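
The per-head layout listed above for the explicit memory can be written down as a small sparse data structure. The following is a minimal Python sketch, not the figure's actual implementation: the name `explicit_memory`, the key `"h_..."` standing in for the row labeled "Head ...", and the total of five columns (`NUM_COLUMNS`) are assumptions made for illustration. The description only establishes that columns one through five exist.

```python
# Filled cells per head, using the 1-based column positions listed above.
# "h_..." is a placeholder key for the row the figure labels "Head ...".
explicit_memory = {
    "h_m": {1},
    "h_...": {2},
    "h_2": {3, 5},
    "h_1": {1, 4},
}

NUM_COLUMNS = 5  # assumption: the figure may contain more columns


def density(memory, num_columns):
    """Fraction of cells that hold a key-value pair."""
    filled = sum(len(columns) for columns in memory.values())
    return filled / (len(memory) * num_columns)


def render_row(columns, num_columns):
    """Draw one head as a row: '#' for a stored pair, '.' for an empty cell."""
    return " ".join("#" if c in columns else "." for c in range(1, num_columns + 1))


if __name__ == "__main__":
    for head, columns in explicit_memory.items():
        print(f"{head:>5}  {render_row(columns, NUM_COLUMNS)}")
    print(f"density: {density(explicit_memory, NUM_COLUMNS):.2f}")
```

Under these assumptions, 6 of the 20 cells are filled, so most head and position combinations store nothing, which is what "sparse" refers to here.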

### Key Observations

* The diagram highlights the interaction between the Transformer LLM and an external memory bank.

* The explicit memory is represented as a sparse attention key-value store, suggesting that only relevant information is stored and retrieved.

* The knowledge base provides additional context and information to the LLM.

* The diagram emphasizes the importance of both writing (encoding) and reading (recalling) memory for the LLM.
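
The idea that only relevant information is retrieved can be illustrated with a toy recall step. The figure does not say how recall selects memories, so the word-overlap score below is purely a stand-in, and the names `knowledge_base`, `words`, and `recall` are hypothetical. The reference texts are copied as they appear in the figure, including their truncation.

```python
import re

# Reference snippets as they appear in the figure (truncated there with "...").
knowledge_base = {
    "Reference N": "Explicit memory is one of the two main types of long-term human memory, the other of which ...",
    "Reference N+1": "Hippocampal cells are activated depending on what information one is exposed to, while ...",
    "Reference N+2": "The hippocampus plays an important role in the formation of new memories about ...",
}

prompt = "... will benefit from brain-inspired designs. LLM equipped with explicit memory can __"


def words(text):
    """Lowercased set of alphabetic words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def recall(prompt, references, top_k=1):
    """Return the names of the top_k references sharing the most words with the prompt."""
    prompt_words = words(prompt)
    ranked = sorted(
        references,
        key=lambda name: len(prompt_words & words(references[name])),
        reverse=True,
    )
    return ranked[:top_k]


if __name__ == "__main__":
    print(recall(prompt, knowledge_base))
```

With this scoring, "Reference N" ranks first because it shares the words "explicit" and "memory" with the prompt. A real system would more plausibly compare learned embeddings than raw words.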

### Interpretation

The diagram illustrates a system in which a Transformer LLM is paired with an explicit memory component. The memory bank acts as a repository that the LLM reads during processing. Because the explicit memory is stored as sparse attention key-values, the LLM can attend selectively to the stored entries rather than to everything at once. The knowledge base supplies the external references that accompany this process.

The diagram suggests that by incorporating explicit memory, the Transformer LLM can better mimic the human brain's ability to store and retrieve information, leading to more sophisticated and context-aware language processing. The use of sparse attention mechanisms highlights the importance of selective attention in memory retrieval, allowing the LLM to focus on the most relevant information and avoid being overwhelmed by irrelevant details.
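
The read path described above, in which the LLM attends over stored key-value pairs, can be sketched with plain scaled dot-product attention. This is a minimal illustration under stated assumptions, not the mechanism of the system in the figure: the vectors are made-up toy numbers, the names `memory_keys`, `memory_values`, `context_keys`, and `context_values` are hypothetical, and the memory pairs are simply assumed to have been written in advance.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return output, weights


# Key-value pairs assumed to have been written in advance (toy numbers).
memory_keys = [[1.0, 0.0], [0.0, 1.0]]
memory_values = [[10.0, 0.0], [0.0, 10.0]]

# Key-value pair from the current context (toy numbers).
context_keys = [[0.5, 0.5]]
context_values = [[1.0, 1.0]]

# Reading: the query attends over memory and context pairs together.
query = [4.0, 0.0]
output, weights = attend(
    query, memory_keys + context_keys, memory_values + context_values
)

if __name__ == "__main__":
    print("weights:", [round(w, 3) for w in weights])
    print("output:", [round(x, 3) for x in output])
```

The query aligns with the first memory key, so that entry receives the largest weight and dominates the output. Keeping only a sparse subset of pairs shortens the lists that `attend` has to scan.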