## Diagram: Transformer LLM with Explicit Memory

### Overview

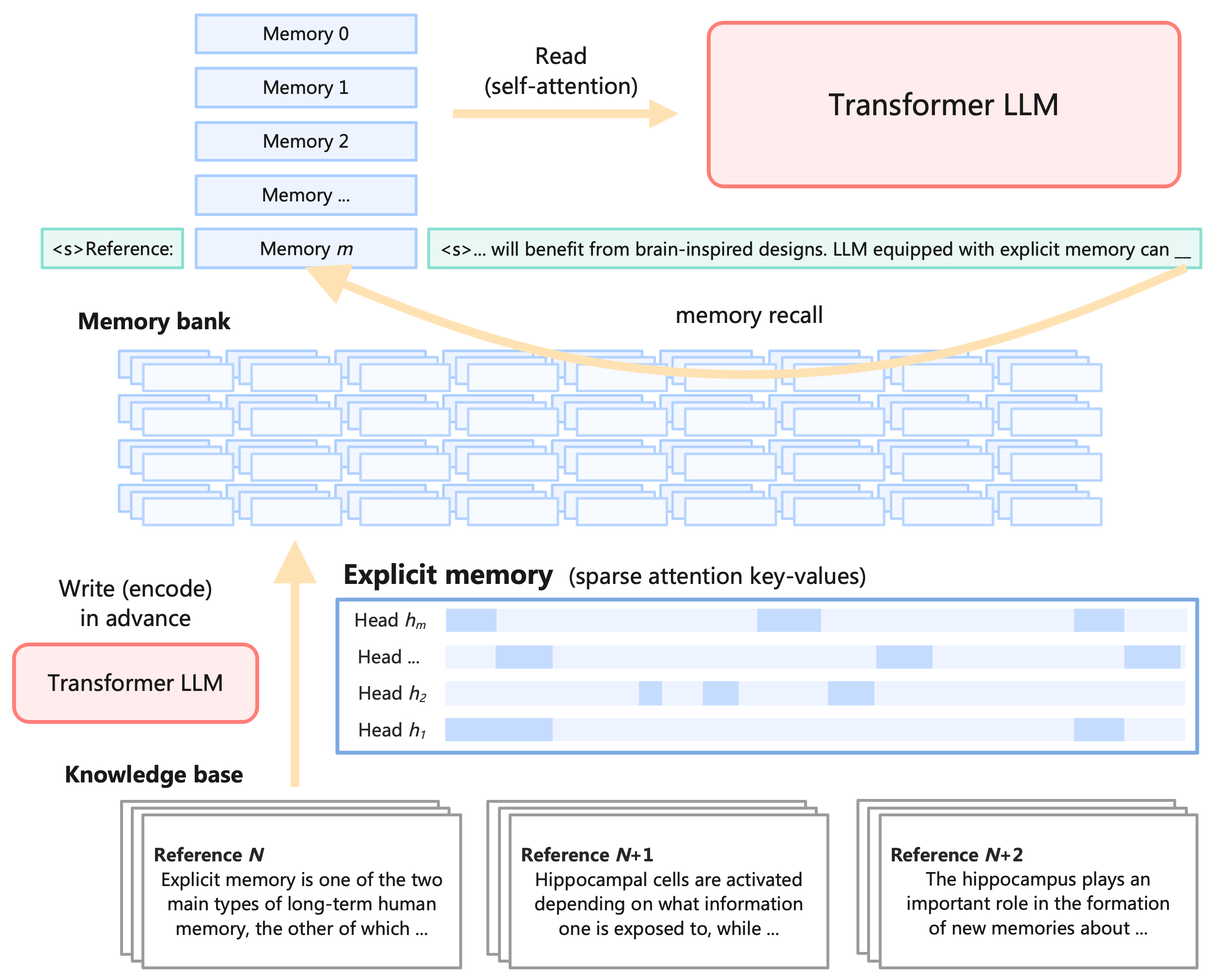

The diagram illustrates a Transformer Large Language Model (LLM) architecture augmented with an "Explicit Memory" component. It depicts the flow of information between a knowledge base, memory bank, and the Transformer LLM, highlighting the processes of writing (encoding) to memory, reading (self-attention) from memory, and memory recall. The diagram emphasizes the integration of brain-inspired designs into LLMs.

### Components

The diagram consists of several key components:

* **Transformer LLM (Top-Right):** Represented as a rectangular block labeled "Transformer LLM".

* **Memory Bank (Top-Left):** A stack of rectangular blocks labeled "Memory 0", "Memory 1", "Memory 2", and "Memory m". A `<s> Reference:` label is present next to "Memory m".

* **Explicit Memory (Center):** A rectangular block labeled "Explicit memory (sparse attention key-values)". Contains labels "Head h<sub>m</sub>", "Head h<sub>2</sub>", "Head h<sub>1</sub>".

* **Knowledge Base (Bottom-Left):** A stack of rectangular blocks labeled "Reference N", "Reference N+1", and "Reference N+2".

* **Connections:** Curved arrows representing data flow between components. The arrow labels are "Write (encode) in advance", "Read (self-attention)", and "memory recall".

* **Text Blocks:** Embedded text within the "Memory m" and "Reference N", "Reference N+1", "Reference N+2" blocks.

### Content Details

**Memory Bank:**

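* Contains memories indexed from 0 to m ("Memory 0", "Memory 1", "Memory 2", ..., "Memory m"), which would be m + 1 entries if the elided indices are contiguous.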

* The text next to "Memory m" reads: "<s>... will benefit from brain-inspired designs. LLM equipped with explicit memory can ...".

**Explicit Memory:**

* Contains multiple "Heads" labeled h<sub>m</sub>, h<sub>2</sub>, and h<sub>1</sub>. The number of heads is not fully specified, indicated by "...".
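
One way to picture "sparse attention key-values" organized by head is a per-head container that keeps the key and value vectors of only a few token positions. The sketch below is purely illustrative: the `HeadMemory` layout, the `sparsify_head` helper, and the per-token importance scores are assumptions made for this example, not details shown in the diagram.

```python
from dataclasses import dataclass


@dataclass
class HeadMemory:
    """Sparse key-values kept for one attention head (hypothetical layout)."""
    positions: list   # token positions that were kept
    keys: list        # one key vector per kept position
    values: list      # one value vector per kept position


def sparsify_head(keys, values, scores, keep):
    """Keep the `keep` highest-scoring token positions for one head."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    kept = sorted(ranked[:keep])  # restore the original token order
    return HeadMemory(kept, [keys[i] for i in kept], [values[i] for i in kept])


# Toy example: 4 tokens with 2-dimensional keys/values; keep the top 2 tokens.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
values = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6], [0.7, 0.8]]
scores = [0.05, 0.60, 0.30, 0.05]  # assumed per-token importance scores
head_1 = sparsify_head(keys, values, scores, keep=2)
print(head_1.positions)
```

Storing positions alongside the vectors keeps the original token order recoverable, which is one plausible design choice; the diagram does not say how sparsity is chosen.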

**Knowledge Base:**

* **Reference N:** "Explicit memory is one of the two main types of long-term human memory, the other of which ...".

* **Reference N+1:** "Hippocampal cells are activated depending on what information one is exposed to, while ...".

* **Reference N+2:** "The hippocampus plays an important role in the formation of new memories about ...".

**Data Flow:**

* **Write (encode) in advance:** Arrows originate from the "Transformer LLM" and point towards the "Knowledge Base".

* **Read (self-attention):** Arrows originate from the "Memory Bank" and point towards the "Transformer LLM".

* **memory recall:** Arrows originate from the "Explicit Memory" and point towards the "Transformer LLM". There are multiple arrows, suggesting parallel recall paths.

* Arrows also connect the "Knowledge Base" to the "Explicit Memory".
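
The write-then-recall flow listed above can be sketched as a toy pipeline. This is a minimal illustration under stated assumptions: the `MemoryBank` class, the bag-of-words `embed` function, and cosine-similarity recall are stand-ins chosen for this example; the diagram does not specify how references are encoded or how recall selects memories. The reference strings are the visible fragments from the Knowledge Base blocks.

```python
import math
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a stand-in for a real encoder (assumption)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class MemoryBank:
    """Hypothetical store for references encoded ahead of time."""

    def __init__(self):
        self.entries = []  # list of (reference text, encoded form)

    def write(self, reference):
        """Write (encode) in advance: done once, before any query arrives."""
        self.entries.append((reference, embed(reference)))

    def recall(self, query, k=1):
        """Memory recall: return the k references most similar to the query."""
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


bank = MemoryBank()
for ref in [
    "Explicit memory is one of the two main types of long-term human memory",
    "Hippocampal cells are activated depending on what information one is exposed to",
    "The hippocampus plays an important role in the formation of new memories",
]:
    bank.write(ref)

recalled = bank.recall("what role does the hippocampus play in new memories", k=1)
print(recalled[0])
```

The point of the sketch is the separation of phases: encoding happens once at write time, so only the comparatively cheap recall step runs per query.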

### Key Observations

* The diagram shows the Transformer LLM interacting with both the Memory Bank and the Knowledge Base: it writes (encodes) content in advance and later reads memories through self-attention.

* The "Explicit Memory" acts as an intermediary between the Memory Bank and the Transformer LLM, potentially facilitating more efficient memory recall.

* The references in the Knowledge Base provide context about the biological inspiration behind the explicit memory component (hippocampus and long-term memory).

* The "Write (encode) in advance" label suggests a pre-processing step in which information is encoded into the Knowledge Base before it is used.

### Interpretation

The diagram proposes an architecture that augments an LLM with a form of explicit memory, drawing inspiration from the human brain. The explicit memory component, stored as sparse attention key-values, appears to act as a selective filter or index over the larger Memory Bank, so that the LLM attends only to relevant information during the "Read (self-attention)" step. This could reduce computational cost and may improve performance, although the diagram itself reports no measurements. The "Head" labels suggest that these key-values are kept separately for each attention head.

The Knowledge Base provides foundational information, and the "Write (encode) in advance" step indicates that knowledge is integrated before it is needed rather than at query time. The references to the hippocampus and long-term memory indicate that the design aims to mimic the brain's memory systems. Overall, the diagram points to a shift from purely parametric memory (the weights within the LLM) to a hybrid approach that combines parametric and explicit memory. This could lead to LLMs that are more robust and adaptable, though the diagram offers no evidence either way.
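
To make the "Read (self-attention)" step concrete, the sketch below shows standard scaled dot-product attention for a single query over recalled memory key-values concatenated with the key-values of the current context. It is an illustration only: placing memory key-values in front of the context ones is an assumption, and all vectors are made-up toy values.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return output, weights


# Recalled memory key-values are placed in front of the context key-values
# (an assumed ordering for this sketch).
memory_keys = [[1.0, 0.0]]
memory_values = [[10.0, 0.0]]
context_keys = [[0.0, 1.0]]
context_values = [[0.0, 10.0]]

query = [4.0, 0.0]  # toy query aligned with the memory key
out, weights = attend(
    query,
    memory_keys + context_keys,
    memory_values + context_values,
)
print(weights, out)
```

Because the memory entries are already key-values, reading them requires no re-encoding of the reference text; the query simply attends over a longer list. Here the query is aligned with the memory key, so most of the attention weight falls on the memory entry.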