## Diagram: Transformer LLM with Explicit Memory Integration

### Overview

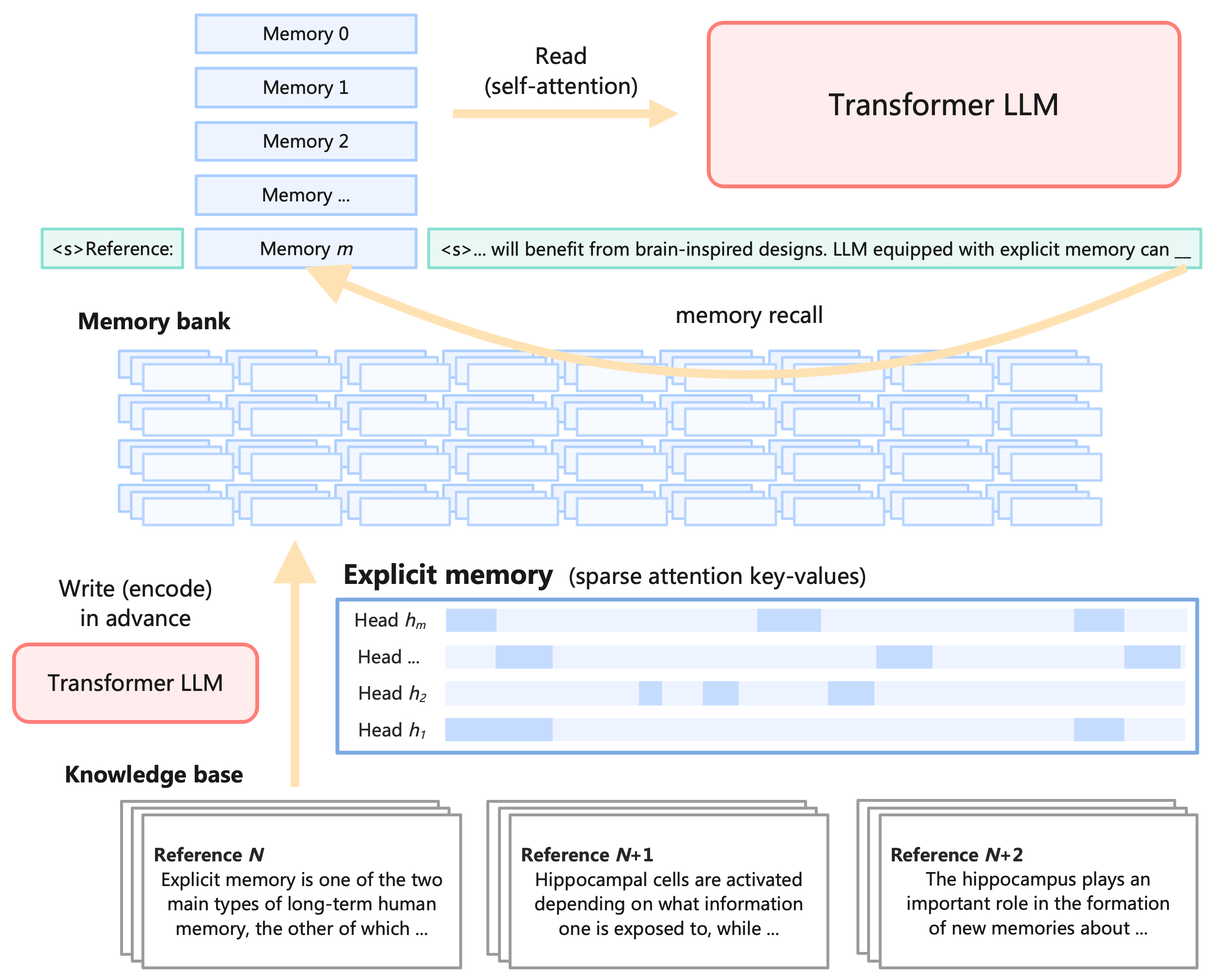

The diagram illustrates the interaction between a Transformer-based Large Language Model (LLM) and an explicit memory system. It highlights how the LLM reads from and writes to a memory bank, integrates explicit memory (sparse attention key-values), and interacts with a knowledge base. Arrows indicate data flow, and color-coded components represent different system elements.

### Components/Axes

1. **Transformer LLM**:

- Pink rectangle labeled "Transformer LLM" at the top-right.

- Receives input from the memory bank via "Read (self-attention)" (yellow arrow).

- Outputs to the memory bank via "Write (encode) in advance" (yellow arrow).

2. **Memory Bank**:

- Vertical stack of blue rectangles labeled "Memory 0" to "Memory m" on the left.

- Contains stored information accessible to the LLM.

3. **Explicit Memory**:

- Grid of blue rectangles labeled "Head h₁" to "Head hₘ" at the bottom.

- Represents sparse attention key-values, with varying shades of blue indicating different attention weights.

- Legend: Darker blue = higher attention weight.

4. **Knowledge Base**:

- Bottom section with three stacked text blocks labeled "Reference N," "Reference N+1," and "Reference N+2."

- Contains factual snippets (e.g., "Explicit memory is one of the two main types of long-term human memory...").

5. **Arrows**:

- Yellow arrows indicate data flow:

  - From Transformer LLM to memory bank (write operation).

  - From memory bank to Transformer LLM (read operation).

  - From explicit memory to the Transformer LLM (memory recall).
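The write and read arrows described above can be sketched in a few lines of Python. This is a minimal illustration, not the architecture's actual implementation: the `MemoryBank` class, its `write` and `read` methods, and the toy two-dimensional vectors are all hypothetical, and the read step assumes standard scaled dot-product attention, which the diagram labels only as "self-attention".

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class MemoryBank:
    """Toy memory bank (hypothetical): named slots of (key, value) vector pairs."""

    def __init__(self):
        self.slots = {}  # slot name -> list of (key, value) pairs

    def write(self, name, key_value_pairs):
        # "Write (encode) in advance": store precomputed key-values for one slot.
        self.slots[name] = list(key_value_pairs)

    def read(self, query):
        # "Read (self-attention)": assumed scaled dot-product attention
        # over every key-value pair stored in the bank.
        pairs = [kv for slot in self.slots.values() for kv in slot]
        scale = math.sqrt(len(query))
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key, _ in pairs]
        weights = softmax(scores)
        dim = len(pairs[0][1])
        return [
            sum(w * value[i] for w, (_, value) in zip(weights, pairs))
            for i in range(dim)
        ]


# Memories are written before any query arrives.
bank = MemoryBank()
bank.write("Memory 0", [([1.0, 0.0], [10.0, 0.0])])
bank.write("Memory 1", [([0.0, 1.0], [0.0, 10.0])])

# At read time, a query aligned with the key in "Memory 0" mostly retrieves its value.
output = bank.read([4.0, 0.0])
```

Separating `write` from `read` mirrors the "in advance" label: the stored key-values do not change while the model is reading.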

### Detailed Analysis

- **Memory Bank**:

- Slots are labeled "Memory 0" through "Memory m," i.e. m + 1 sequentially indexed entries, which suggests the number of slots is variable.

- The diagram gives no value for m and no per-slot size, so storage capacity is unspecified.

- **Explicit Memory**:

- Attention heads (h₁ to hₘ) are color-coded, with darker shades indicating stronger attention weights.

- Example: Head h₁ has the darkest shade, suggesting it focuses most on specific key-values.

- **Knowledge Base**:

- References N, N+1, and N+2 provide context for memory recall.

- Text snippets describe hippocampal function and explicit memory types.
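One way to read "sparse attention key-values" is that each head keeps only the key-value pairs that receive the highest attention weight. The sketch below illustrates that reading under explicit assumptions: the diagram shows only shades of blue, so the top-k selection rule, the function name `sparsify_head`, and all numbers are hypothetical.

```python
def sparsify_head(keys, values, attention_weights, k):
    """Keep the k key-value pairs with the highest attention weight.

    Returns (position, key, value) triples in their original order.
    Top-k selection is an assumed rule, not one stated in the diagram.
    """
    ranked = sorted(
        range(len(attention_weights)),
        key=lambda i: attention_weights[i],
        reverse=True,
    )
    kept = sorted(ranked[:k])  # restore original token order
    return [(i, keys[i], values[i]) for i in kept]


# Toy data for a single head: four token positions with made-up weights.
keys = [[0.1, 0.2], [0.9, 0.4], [0.3, 0.3], [0.7, 0.8]]
values = [[1.0], [2.0], [3.0], [4.0]]
weights = [0.05, 0.50, 0.10, 0.35]

sparse = sparsify_head(keys, values, weights, k=2)
kept_positions = [i for i, _, _ in sparse]
```

In this toy example the two darkest cells (positions 1 and 3) survive, and the lighter ones are dropped from the stored memory.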

### Key Observations

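1. The Transformer LLM both writes to and reads from the memory bank. The write arrow is labeled "Write (encode) in advance," so memories are encoded before they are needed, while reads go through self-attention.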

2. Explicit memory uses attention mechanisms to prioritize relevant data during recall.

3. The knowledge base serves as a static repository for factual information, aiding the LLM’s reasoning.

4. No numerical data is present; the diagram focuses on conceptual relationships.

### Interpretation

The diagram depicts a hybrid architecture in which the Transformer LLM attends over explicit memory (sparse key-values) in addition to its ordinary self-attention. The term "explicit memory" echoes the human explicit memory described in the reference snippets. Because only sparse key-values are stored, the LLM can focus on the most relevant stored information during processing. The knowledge base acts as a grounding source for facts, which may help reduce hallucinations.

The absence of numerical values suggests this is a conceptual model rather than an empirical result. Storing explicit memory per attention head as sparse key-values implies that only a subset of key-values is kept, which could lower the cost of storage and recall, though the diagram does not quantify this. Data flows in both directions between the LLM and the memory bank, but the "in advance" label on the write arrow indicates that encoding happens ahead of time rather than during generation. The knowledge base provides static context.

This architecture could improve LLMs’ ability to handle long-term dependencies and factual consistency, though implementation details (e.g., memory capacity, attention weight calculation) are not specified.