## Diagram: Federated Learning with Differential Privacy

### Overview

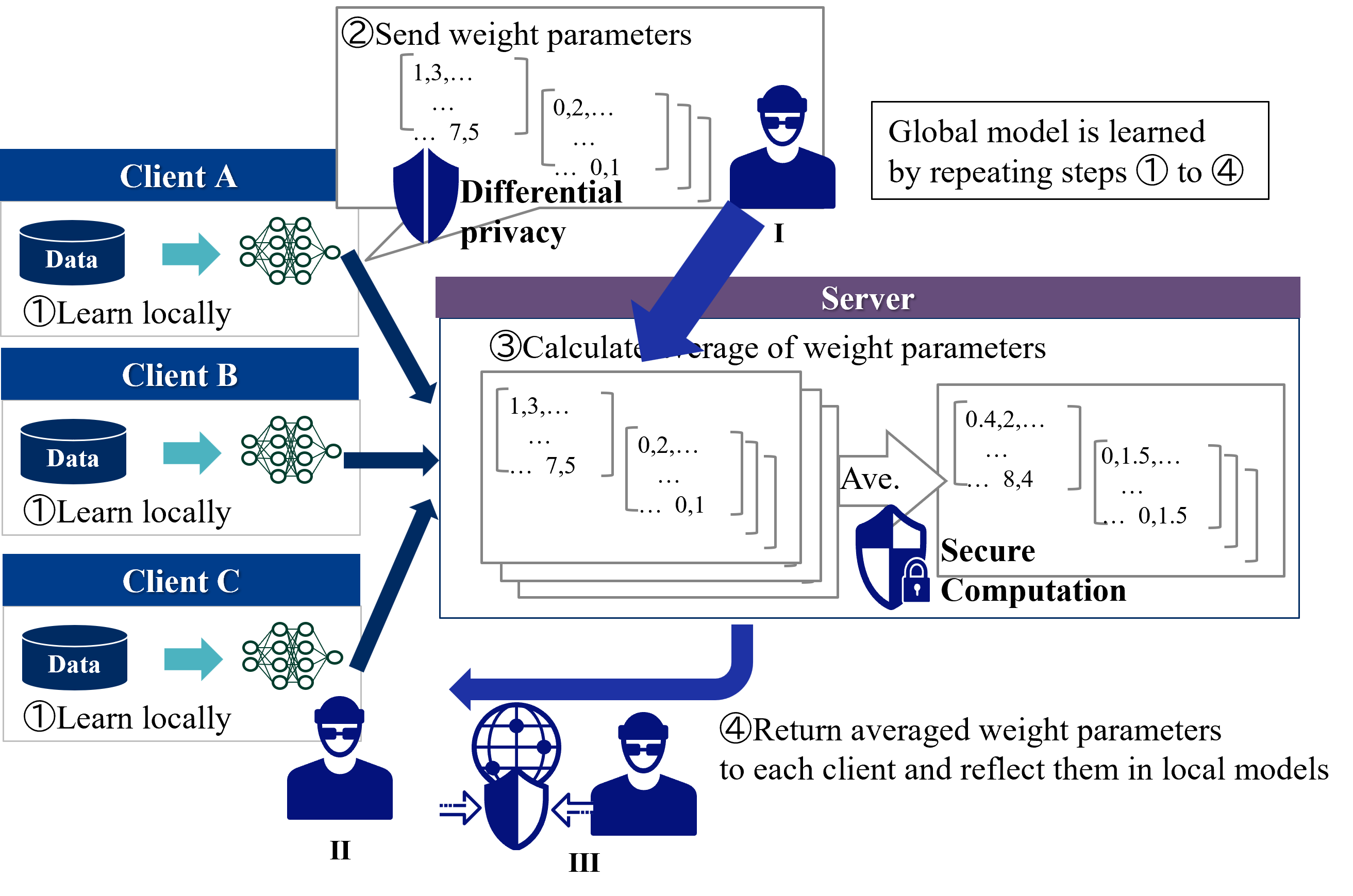

This diagram illustrates a federated learning process with differential privacy applied. Three clients (A, B, and C) train local models on their own data and send weight parameters to a central server; the server averages these parameters and returns the averaged weights to the clients. Differential privacy is applied during the parameter transmission phase. The process is iterative: a global model is learned through repeated rounds of local training and global aggregation.

### Components/Axes

The diagram consists of three client blocks (A, B, C), a central server block, and arrows indicating the flow of weight parameters between them. Numbered steps (①, ②, ③, ④) guide the process. Key text labels include: "Data", "Learn locally", "Send weight parameters", "Differential privacy", "Calculate average of weight parameters", "Secure Computation", "Return averaged weight parameters", and "Global model is learned by repeating steps ① to ④". Three masked icons (I, II, III) represent clients. Weight parameter matrices are shown with example values.

### Detailed Analysis or Content Details

The process unfolds as follows:

1. **Client Local Training (①):** Each client (A, B, C) possesses "Data" and performs "Learn locally" using a neural network represented by a cluster of interconnected nodes.

2. **Parameter Transmission with Differential Privacy (②):** Clients send their "weight parameters" to the server. The weight parameters are represented as matrices with the following approximate values:

* Matrix 1: [1.3, ..., 7.5, ..., 0.1]

* Matrix 2: [0.2, ..., 0.1]

Differential privacy is applied during this transmission, indicated by the label "Differential privacy". Client I is shown sending data to the server.

3. **Server Aggregation (③):** The server "Calculate[s] average of weight parameters" using "Secure Computation". The averaged weight parameters are represented as matrices with the following approximate values:

* Matrix 1: [0.4, 2, ..., 8.4]

* Matrix 2: [0.1, 5, ..., 0.15]

Client II is shown sending data to the server.

4. **Parameter Return (④):** The server "Return[s] averaged weight parameters to each client and reflect them in local models". Client III is shown receiving data from the server.

The diagram also shows three masked client icons (I, II, III) representing the privacy aspect of the process.
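Steps ① to ④ can be sketched in code as a single round that is then repeated. The diagram does not say which differential privacy mechanism is used, so the sketch below assumes a common one: each client clips the L2 norm of its weight vector and adds Gaussian noise before sending. The toy training rule, the function names, and the values of `clip_norm` and `sigma` are all hypothetical, and no privacy budget accounting is shown.

```python
import math
import random

def local_train(weights, data, lr=0.5, steps=5):
    """Step 1 (toy): gradient descent pulling the weights toward the mean of the client's points."""
    w = list(weights)
    mean = [sum(p[i] for p in data) / len(data) for i in range(len(w))]
    for _ in range(steps):
        w = [wi - lr * (wi - mi) for wi, mi in zip(w, mean)]
    return w

def privatize(weights, clip_norm, sigma, rng):
    """Step 2 (assumed mechanism): clip the L2 norm, then add Gaussian noise before sending."""
    norm = math.sqrt(sum(x * x for x in weights))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale + rng.gauss(0.0, sigma) for x in weights]

def average(updates):
    """Step 3: element-wise mean of the weight vectors received by the server."""
    n = len(updates)
    return [sum(col) / n for col in zip(*updates)]

def federated_round(global_w, client_data, clip_norm, sigma, rng):
    """One pass through steps 1 to 4; the return value is what every client receives in step 4."""
    sent = [privatize(local_train(global_w, d), clip_norm, sigma, rng)
            for d in client_data]
    return average(sent)

# Made-up 2-D data for clients A, B, and C; the raw points never leave local_train.
rng = random.Random(0)
clients = [
    [[1.0, 1.0], [1.2, 0.8]],   # client A
    [[2.0, 2.0], [1.8, 2.2]],   # client B
    [[3.0, 3.0], [3.1, 2.9]],   # client C
]
w = [0.0, 0.0]
for _ in range(3):              # "repeating steps 1 to 4"
    w = federated_round(w, clients, clip_norm=5.0, sigma=0.05, rng=rng)
```

Practical systems often clip and perturb the change in weights from the previous round instead of the weights themselves; the sketch perturbs the weights directly only because that is what the diagram's labels describe.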

### Key Observations

The diagram highlights the iterative nature of federated learning: steps ① to ④ repeat until a global model is learned. Applying differential privacy when weight parameters are sent suggests a focus on limiting what those parameters reveal about individual clients' data. The "Secure Computation" label indicates that the server performs aggregation in a privacy-preserving manner. The weight matrices show only a few example values and are illustrative; they do not represent a complete set of model parameters.

### Interpretation

This diagram depicts a federated learning approach that trains a global model without centralizing client data; only weight parameters leave each client. Each client computes on its own data, and the central server aggregates the resulting parameters. Differential privacy at the transmission step limits how much the sent parameters can reveal about any individual client's data. Repeating steps ① to ④ refines the global model over multiple rounds of local training and global aggregation, and the masked client icons reinforce the privacy emphasis. The "Secure Computation" label suggests that techniques such as secure multi-party computation are used so that the server learns only the aggregate and cannot inspect any single client's parameters. This approach is particularly relevant where data privacy is paramount, such as in healthcare or finance.
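The diagram does not specify what "Secure Computation" involves. One well-known option is pairwise additive masking, sketched below as an assumption: each pair of clients shares a random mask that one adds and the other subtracts, so the masks cancel in the sum and the server can recover only the average. The fixed-point `SCALE`, the modulus `MOD`, the function names, and the example weights are all hypothetical.

```python
import random

MOD = 2 ** 32   # all transmitted values live in the integers modulo MOD
SCALE = 1000    # fixed-point encoding with 3 decimal places (assumed precision)

def encode(weights):
    """Convert float weights to fixed-point integers modulo MOD."""
    return [round(w * SCALE) % MOD for w in weights]

def decode_mean(total, n_clients):
    """Map the modular sum back to signed values and divide to get the mean."""
    out = []
    for t in total:
        signed = t - MOD if t >= MOD // 2 else t
        out.append(signed / SCALE / n_clients)
    return out

def pairwise_masks(client_ids, dim, rng):
    """For each pair (i, j), draw one random vector; i adds it and j subtracts it.

    A real protocol would derive each vector from a secret shared by the pair;
    a single RNG is used here only to keep the simulation short.
    """
    masks = {cid: [0] * dim for cid in client_ids}
    for a, i in enumerate(client_ids):
        for j in client_ids[a + 1:]:
            shared = [rng.randrange(MOD) for _ in range(dim)]
            masks[i] = [(m + s) % MOD for m, s in zip(masks[i], shared)]
            masks[j] = [(m - s) % MOD for m, s in zip(masks[j], shared)]
    return masks

def secure_average(client_weights, rng):
    """Return the average plus the masked vectors the server would actually see."""
    ids = list(client_weights)
    dim = len(client_weights[ids[0]])
    masks = pairwise_masks(ids, dim, rng)
    masked = {
        cid: [(e + m) % MOD for e, m in zip(encode(client_weights[cid]), masks[cid])]
        for cid in ids
    }
    # The server sums the masked vectors; the pairwise masks cancel modulo MOD.
    total = [sum(col) % MOD for col in zip(*masked.values())]
    return decode_mean(total, len(ids)), masked

# Made-up weight vectors for clients A, B, and C.
weights = {"A": [1.3, 7.5, 0.1], "B": [0.2, -0.4, 0.1], "C": [0.0, 2.0, 0.4]}
avg, seen_by_server = secure_average(weights, random.Random(0))
```

Integer arithmetic modulo `MOD` is used so that the masks cancel exactly; floating-point masks would cancel only up to rounding error. The sketch omits handling for clients that drop out mid-round, which practical protocols must address.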