## Pie Chart: Distribution of Data Types in Pre-training Dataset

### Overview

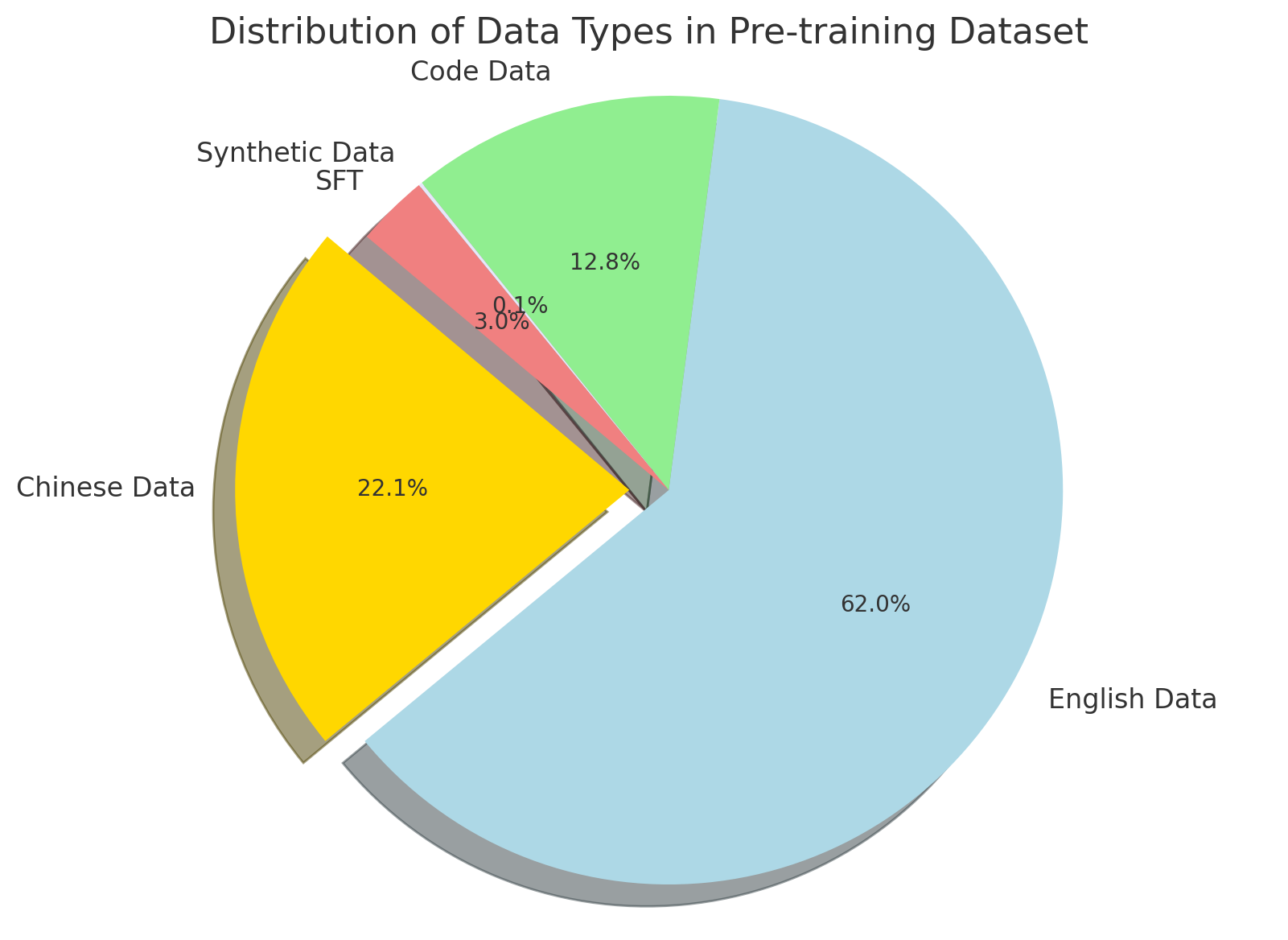

The image is a pie chart illustrating the proportional composition of different data types within a pre-training dataset. The chart is titled "Distribution of Data Types in Pre-training Dataset." It displays five distinct segments, each representing a category of data with its corresponding percentage of the total.

### Components/Axes

* **Title:** "Distribution of Data Types in Pre-training Dataset" (centered at the top).

* **Chart Type:** Pie chart with an exploded (separated) slice.

* **Segments & Labels:** The chart contains five segments. Four carry a category label placed outside the pie, adjacent to the slice; the fifth shows only its percentage (0.1%) and has no category label.

* **Legend:** There is no separate legend box; labels are directly associated with slices.

* **Spatial Layout:**

    * The largest slice ("English Data") occupies the right and bottom-right portion of the chart.

    * The second-largest slice ("Chinese Data") is on the left side and is exploded (pulled out) from the main pie.

    * The "Code Data" slice is at the top.

    * The "Synthetic Data SFT" slice is a small wedge between "Code Data" and "Chinese Data."

    * A very thin, unlabeled slice (0.1%) is visible between "Synthetic Data SFT" and "Chinese Data."

### Detailed Analysis

The following table details each segment, its color, percentage, and spatial position within the pie chart (proceeding counterclockwise from the top, i.e., from "Code Data" leftward):

| Segment Label | Color (Approximate) | Percentage | Spatial Position & Notes |
| :--- | :--- | :--- | :--- |
| **Code Data** | Light green | 12.8% | Top segment. |
| **Synthetic Data SFT** | Salmon pink / light red | 3.0% | Small wedge to the left of "Code Data." |
| *(Unlabeled Segment)* | Grey | 0.1% | Extremely thin slice between "Synthetic Data SFT" and "Chinese Data." No external label is provided for this segment. |
| **Chinese Data** | Bright yellow | 22.1% | Large slice on the left, exploded outward from the pie. |
| **English Data** | Light blue | 62.0% | The dominant slice, occupying the entire right side and bottom of the chart. |

**Trend Verification:** A single category ("English Data", 62.0%) clearly dominates, followed by a significant secondary category ("Chinese Data", 22.1%). The remaining categories ("Code Data," "Synthetic Data SFT," and the unlabeled 0.1% slice) together account for 15.9% of the dataset, and the five percentages sum to 100.0%.
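The arithmetic behind this trend can be checked with a short script. The following is a minimal sketch that uses only the percentages read off the chart; the key `"Unlabeled"` is a placeholder name introduced here, since the chart gives that slice no category label.

```python
# Segment shares as read off the chart (percent of the pre-training dataset).
shares = {
    "English Data": 62.0,
    "Chinese Data": 22.1,
    "Code Data": 12.8,
    "Synthetic Data SFT": 3.0,
    "Unlabeled": 0.1,  # placeholder name; the chart shows no label for this slice
}

# The slices of a pie chart should add up to the whole.
total = round(sum(shares.values()), 1)

# Combined share of the two natural-language categories.
natural_language = round(shares["English Data"] + shares["Chinese Data"], 1)

# Everything that is not English or Chinese text.
remainder = round(total - natural_language, 1)

# How many times larger the English share is than the Chinese share.
english_to_chinese = round(shares["English Data"] / shares["Chinese Data"], 2)

# Categories ordered from largest to smallest share.
ranked = sorted(shares, key=shares.get, reverse=True)

print(f"total: {total}%")                         # total: 100.0%
print(f"English + Chinese: {natural_language}%")  # English + Chinese: 84.1%
print(f"all other categories: {remainder}%")      # all other categories: 15.9%
print(f"English / Chinese: {english_to_chinese}") # English / Chinese: 2.81
print(" > ".join(ranked))
```

The ranking confirms the order described above: English, Chinese, code, synthetic SFT, then the unlabeled slice.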

### Key Observations

1. **Dominance of English Data:** The "English Data" segment is the clear majority, comprising 62.0% of the total dataset.

2. **Significant Chinese Component:** "Chinese Data" represents a substantial portion at 22.1%, indicating a bilingual focus on English and Chinese.

3. **Minority Categories:** "Code Data" (12.8%) and "Synthetic Data SFT" (3.0%) are minor components. The "Synthetic Data SFT" slice is notably small.

4. **Unlabeled Anomaly:** A very thin grey slice is marked "0.1%" inside the pie but has no corresponding external category label. It presumably represents a data type that is either unnamed or considered negligible.

5. **Visual Emphasis:** The "Chinese Data" slice is exploded (separated) from the pie, a design choice that visually emphasizes this specific segment despite it not being the largest.
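The relative slice sizes noted above follow directly from the percentages, since each percentage point corresponds to 3.6 degrees of the circle. The sketch below converts the chart's values to central angles; the function name `slice_angles` and the `"Unlabeled"` key are illustrative choices, not taken from the chart.

```python
# Segment shares as read off the chart (percent of the pre-training dataset).
shares = {
    "English Data": 62.0,
    "Chinese Data": 22.1,
    "Code Data": 12.8,
    "Synthetic Data SFT": 3.0,
    "Unlabeled": 0.1,  # placeholder name; the chart shows no label for this slice
}


def slice_angles(percentages):
    """Map each percentage share to its central angle in degrees."""
    return {
        label: round(pct * 360 / 100, 2)
        for label, pct in percentages.items()
    }


angles = slice_angles(shares)

for label, angle in angles.items():
    print(f"{label}: {angle} degrees")
```

Under these values the unlabeled slice spans roughly a third of a degree, which is consistent with it appearing as a barely visible sliver, while "English Data" covers well over half the circle.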

### Interpretation

This pie chart breaks down the data sources used to pre-train an AI model. The composition suggests a model with a strong foundation in English-language data, complemented by a significant amount of Chinese-language data, which points towards a bilingual or cross-lingual training objective. The inclusion of "Code Data" (12.8%) indicates that the model is also trained on source code, presumably to develop technical or reasoning capabilities.

The very small "Synthetic Data SFT" slice (3.0%; SFT stands for Supervised Fine-Tuning) suggests that artificially generated data plays a minimal role in the initial pre-training phase, possibly being reserved for later fine-tuning stages. The tiny, unlabeled 0.1% segment is an anomaly; it could represent miscellaneous data, corrupted entries, or a category deemed too small to warrant a label. Overall, the dataset is heavily skewed towards natural language (English and Chinese together make up 84.1%), with code and synthetic data forming secondary components of the training corpus.