## Bar Chart: Latency vs. Batch Size for FP16 and INT8

### Overview

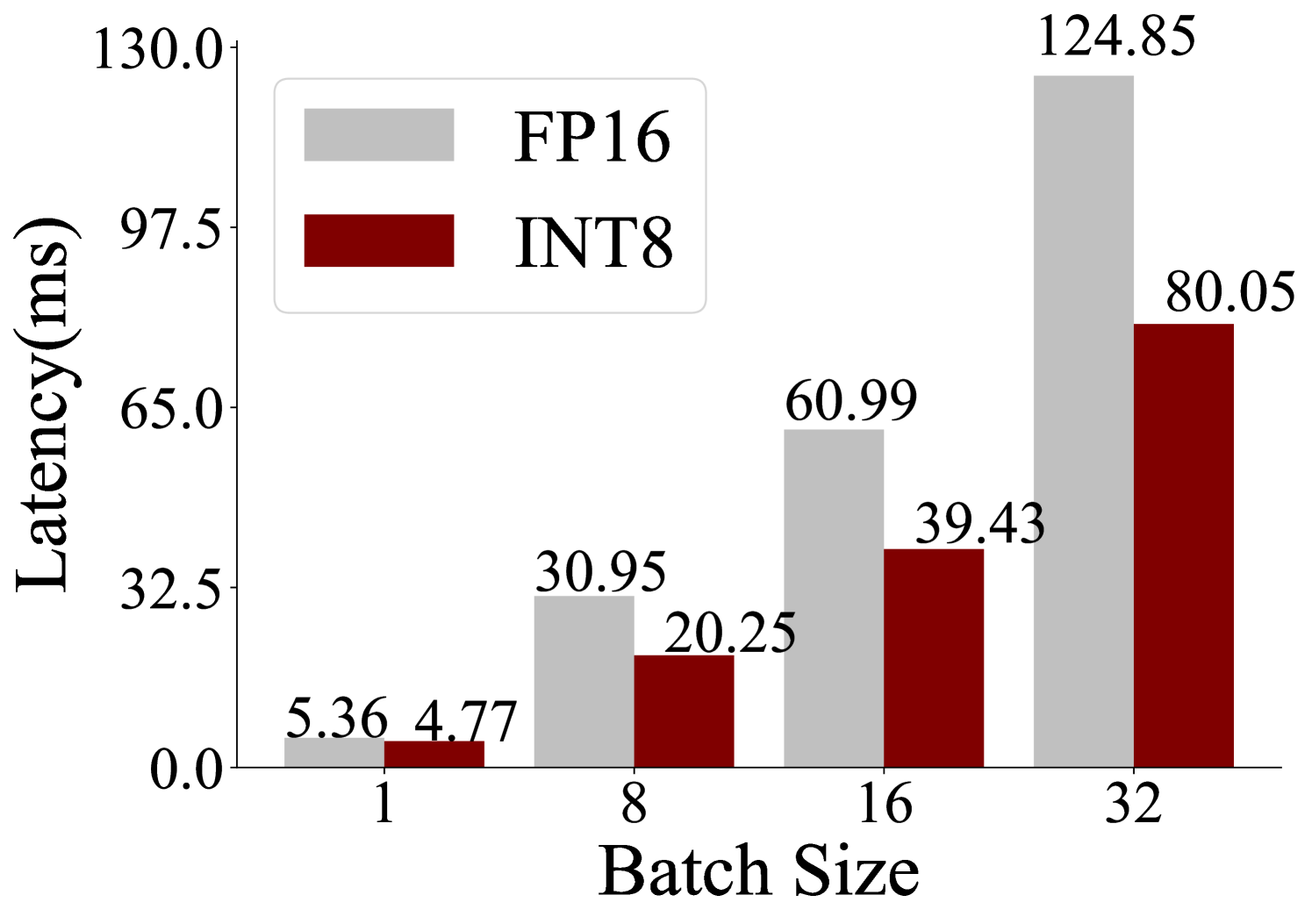

The image is a bar chart comparing the latency (in milliseconds) of FP16 and INT8 data types across different batch sizes (1, 8, 16, and 32). The chart shows that latency generally increases with batch size for both data types, but INT8 consistently exhibits lower latency than FP16 for all batch sizes tested.

### Components/Axes

* **Title:** There is no explicit title.

* **X-axis:** "Batch Size" with values 1, 8, 16, and 32.

* **Y-axis:** "Latency(ms)" with values 0.0, 32.5, 65.0, 97.5, and 130.0.

* **Legend:** Located in the top-center of the chart.

  * FP16: Represented by light gray bars.

  * INT8: Represented by dark red bars.

### Detailed Analysis

The chart presents latency measurements for FP16 and INT8 data types at different batch sizes.

* **Batch Size 1:**

  * FP16: Latency is 5.36 ms.

  * INT8: Latency is 4.77 ms.

* **Batch Size 8:**

  * FP16: Latency is 30.95 ms.

  * INT8: Latency is 20.25 ms.

* **Batch Size 16:**

  * FP16: Latency is 60.99 ms.

  * INT8: Latency is 39.43 ms.

* **Batch Size 32:**

  * FP16: Latency is 124.85 ms.

  * INT8: Latency is 80.05 ms.

**Trend Verification:**

* **FP16:** The latency for FP16 increases as the batch size increases.

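* **INT8:** The latency for INT8 also increases as the batch size increases, but at a slower rate than FP16: from batch size 1 to 32, INT8 latency grows about 16.8x (4.77 ms to 80.05 ms), while FP16 latency grows about 23.3x (5.36 ms to 124.85 ms).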
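The trend check above can be reproduced from the values read off the chart. The following is a minimal sketch, not part of the original figure; the names `LATENCY_MS`, `is_strictly_increasing`, and `growth_factor` are illustrative assumptions.

```python
# Latency values (ms) transcribed from the chart; names are illustrative.
BATCH_SIZES = [1, 8, 16, 32]
LATENCY_MS = {
    "FP16": [5.36, 30.95, 60.99, 124.85],
    "INT8": [4.77, 20.25, 39.43, 80.05],
}


def is_strictly_increasing(values):
    """Return True if every value is larger than the one before it."""
    return all(a < b for a, b in zip(values, values[1:]))


def growth_factor(values):
    """Ratio of the last measurement to the first."""
    return values[-1] / values[0]


for dtype, latencies in LATENCY_MS.items():
    print(
        f"{dtype}: increasing={is_strictly_increasing(latencies)}, "
        f"growth from batch 1 to 32 = {growth_factor(latencies):.1f}x"
    )
```

Both series come out as strictly increasing, and the growth factor is smaller for INT8 than for FP16, which is what "at a slower rate" means here.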

### Key Observations

* INT8 consistently shows lower latency compared to FP16 for all batch sizes.

* The absolute difference in latency between FP16 and INT8 grows with batch size, from 0.59 ms at batch size 1 to 44.80 ms at batch size 32.

* Latency roughly doubles for both FP16 (60.99 ms to 124.85 ms) and INT8 (39.43 ms to 80.05 ms) as the batch size goes from 16 to 32, in line with the doubling of the batch size.
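To put numbers on the gap between the two data types, the sketch below computes the absolute difference and the FP16/INT8 latency ratio at each batch size. The values are the ones read off the chart; the helper name `compare` is an assumption made for illustration.

```python
# Latency values (ms) transcribed from the chart; names are illustrative.
BATCH_SIZES = [1, 8, 16, 32]
FP16_MS = [5.36, 30.95, 60.99, 124.85]
INT8_MS = [4.77, 20.25, 39.43, 80.05]


def compare(fp16_ms, int8_ms):
    """Return (absolute gap in ms, FP16/INT8 latency ratio) per batch size."""
    return [(round(f - i, 2), round(f / i, 2)) for f, i in zip(fp16_ms, int8_ms)]


for batch, (gap_ms, ratio) in zip(BATCH_SIZES, compare(FP16_MS, INT8_MS)):
    print(f"batch {batch:>2}: gap = {gap_ms:6.2f} ms, FP16/INT8 = {ratio:.2f}x")
```

The absolute gap keeps widening, while the ratio moves from about 1.12x at batch size 1 to roughly 1.5x for batch sizes 8 through 32.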

### Interpretation

The data suggests that INT8 yields lower latency than FP16, especially at larger batch sizes. The absolute gap widens as the batch size grows, while the relative advantage settles at roughly 1.5x from batch size 8 onward (about 1.12x at batch size 1), so INT8 latency grows more slowly than FP16 latency over the tested range. The increase from batch size 16 to 32 is close to 2x for both data types, matching the doubling of the batch size. On its own, this is consistent with approximately linear scaling in that range; the chart does not provide enough evidence to conclude that a bottleneck appears at larger batches.
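One way to probe how latency scales between batch sizes is to normalize it by batch size and to look at the ratio between consecutive measurements. This is a sketch under the assumption that the chart reports latency per batch; `per_sample_ms` and `step_ratios` are hypothetical helper names.

```python
# Latency values (ms) transcribed from the chart; names are illustrative.
BATCH_SIZES = [1, 8, 16, 32]
LATENCY_MS = {
    "FP16": [5.36, 30.95, 60.99, 124.85],
    "INT8": [4.77, 20.25, 39.43, 80.05],
}


def per_sample_ms(latencies, batch_sizes):
    """Latency divided by batch size: average time per item in the batch."""
    return [round(lat / b, 2) for lat, b in zip(latencies, batch_sizes)]


def step_ratios(latencies):
    """Latency ratio between each pair of consecutive batch sizes."""
    return [round(b / a, 2) for a, b in zip(latencies, latencies[1:])]


for dtype, latencies in LATENCY_MS.items():
    print(dtype, "per-sample ms:", per_sample_ms(latencies, BATCH_SIZES))
    print(dtype, "step ratios:  ", step_ratios(latencies))
```

Per-sample latency drops sharply between batch sizes 1 and 8 and then stays nearly flat (about 3.8 to 3.9 ms for FP16 and about 2.5 ms for INT8), and the 16-to-32 step ratio is about 2.05 for FP16 and 2.03 for INT8.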