
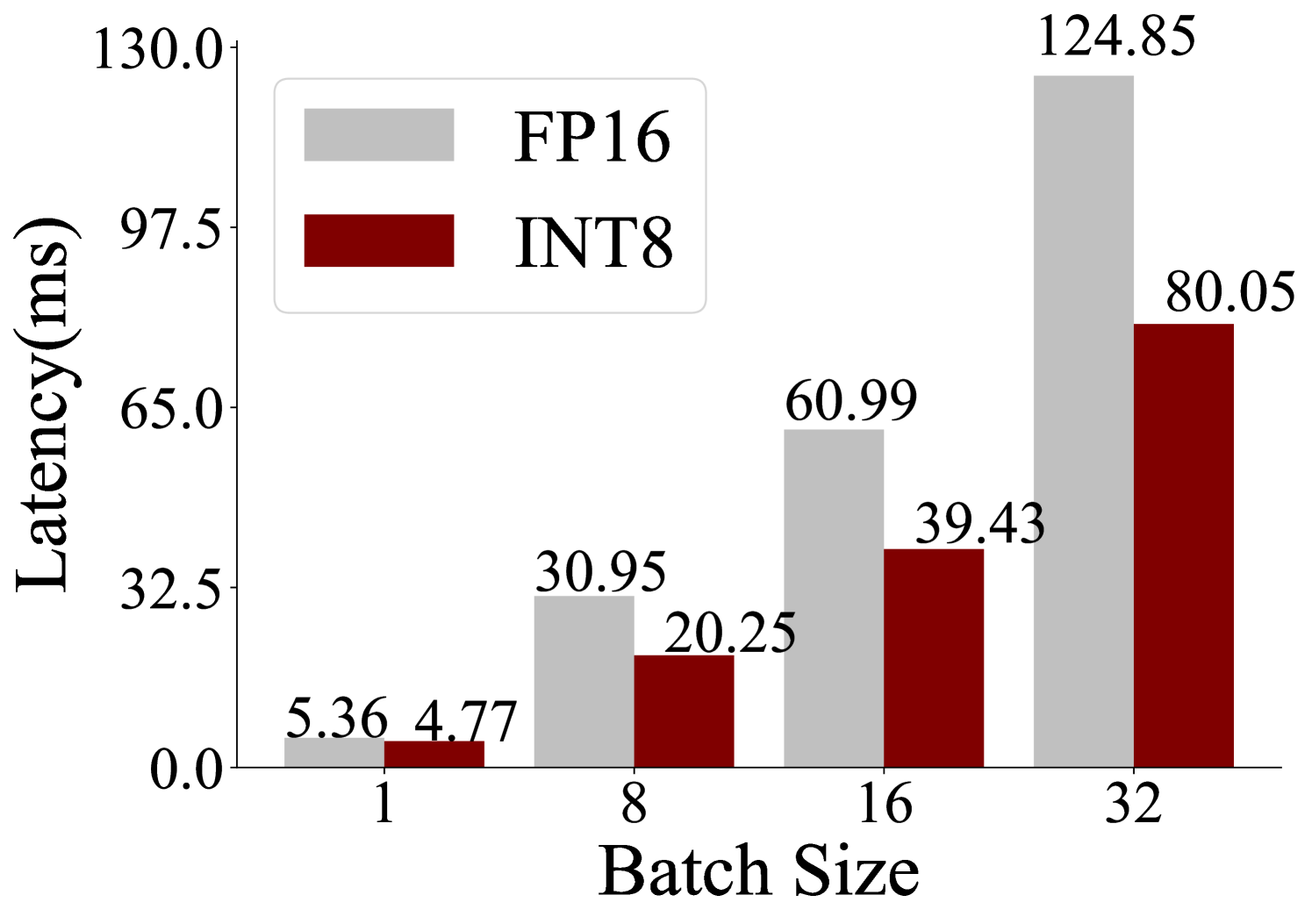

## Bar Chart: Latency vs. Batch Size for FP16 and INT8

### Overview

This bar chart compares latency (in milliseconds) for two numeric precisions, FP16 and INT8, at batch sizes 1, 8, 16, and 32.

### Components/Axes

* **X-axis:** Batch Size (labeled as "Batch Size"). Markers are at 1, 8, 16, and 32.

* **Y-axis:** Latency in milliseconds (labeled as "Latency(ms)"). The scale ranges from 0.0 to 130.0, with increments of approximately 25.0.

* **Legend:** Located in the top-left corner.

    * FP16: Represented by a light gray color.

    * INT8: Represented by a dark red color.

### Detailed Analysis

The chart consists of paired bars for each batch size, representing FP16 and INT8 latency.

* **Batch Size 1:**

    * FP16: Approximately 5.36 ms.

    * INT8: Approximately 4.77 ms.

* **Batch Size 8:**

    * FP16: Approximately 30.95 ms.

    * INT8: Approximately 20.25 ms.

* **Batch Size 16:**

    * FP16: Approximately 60.99 ms.

    * INT8: Approximately 39.43 ms.

* **Batch Size 32:**

    * FP16: Approximately 124.85 ms.

    * INT8: Approximately 80.05 ms.

**Trends:**

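* **FP16:** Latency rises steadily with batch size, from about 5.36 ms at batch size 1 to about 124.85 ms at batch size 32. Because the plotted batch sizes double from 8 to 32, the bars can look exponential, but the growth is roughly linear in batch size: about 3.9 ms per sample at batch sizes 8 through 32.

* **INT8:** Latency also grows roughly linearly with batch size, but with a shallower slope of about 2.5 ms per sample at batch sizes 8 through 32.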
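One way to check how latency scales with batch size is to divide each reading by its batch size and fit a straight line through the points. The following is a minimal sketch in plain Python that uses the approximate values read from the chart; the names `latency_ms`, `per_sample_ms`, and `fit_line` are illustrative and not part of any benchmarking tool.

```python
# Approximate latencies (ms) read from the chart, keyed by batch size.
latency_ms = {
    "FP16": {1: 5.36, 8: 30.95, 16: 60.99, 32: 124.85},
    "INT8": {1: 4.77, 8: 20.25, 16: 39.43, 32: 80.05},
}


def per_sample_ms(series):
    """Return latency divided by batch size, for each batch size."""
    return {batch: ms / batch for batch, ms in series.items()}


def fit_line(series):
    """Ordinary least-squares fit of latency = slope * batch + intercept."""
    xs = list(series.keys())
    ys = list(series.values())
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


for name, series in latency_ms.items():
    slope, intercept = fit_line(series)
    per_sample = {b: round(v, 2) for b, v in per_sample_ms(series).items()}
    print(f"{name}: slope={slope:.2f} ms/sample, intercept={intercept:.2f} ms")
    print(f"  per-sample latency (ms): {per_sample}")
```

A per-sample latency that stays nearly constant from batch size 8 to 32 points to linear rather than exponential growth. The higher per-sample figure at batch size 1 would be consistent with a fixed per-call overhead, though four data points are too few to say more.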

### Key Observations

* INT8 consistently exhibits lower latency than FP16 across all batch sizes.

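* The absolute gap between FP16 and INT8 widens with batch size, from about 0.6 ms at batch size 1 to about 44.8 ms at batch size 32. The relative speedup of INT8 over FP16 is about 1.1x at batch size 1 and settles at roughly 1.5x from batch size 8 onward.

* FP16 latency grows faster per added sample than INT8 latency, so its absolute cost rises more quickly as the batch size increases.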
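The comparison between the two precisions can be made concrete by computing the absolute gap and the ratio at each batch size. This is a small sketch using the approximate chart readings; `fp16_ms`, `int8_ms`, and `compare` are hypothetical names chosen for illustration.

```python
# Approximate latencies (ms) read from the chart, keyed by batch size.
fp16_ms = {1: 5.36, 8: 30.95, 16: 60.99, 32: 124.85}
int8_ms = {1: 4.77, 8: 20.25, 16: 39.43, 32: 80.05}


def compare(fp16, int8):
    """Return (batch, gap in ms, FP16/INT8 ratio) for each batch size."""
    rows = []
    for batch in sorted(fp16):
        gap = fp16[batch] - int8[batch]      # absolute difference in ms
        speedup = fp16[batch] / int8[batch]  # how many times faster INT8 is
        rows.append((batch, gap, speedup))
    return rows


rows = compare(fp16_ms, int8_ms)
for batch, gap, speedup in rows:
    print(f"batch {batch:>2}: gap = {gap:6.2f} ms, speedup = {speedup:.2f}x")
```

Reporting both numbers is useful because they answer different questions: the gap shows how many milliseconds are saved per batch, while the ratio shows whether the relative advantage of INT8 changes with batch size.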

### Interpretation

The data suggests that INT8 quantization reduces latency relative to FP16, especially for larger batches: by about 11% at batch size 1 and by roughly 35% at batch sizes 8 through 32. This is likely due to the reduced memory footprint and computational requirements of INT8. Rising latency with batch size is expected, since larger batches require more processing time. The steeper slope for FP16 means its cost grows faster in absolute terms, which makes INT8 the more efficient choice at larger batch sizes.

The chart shows only the performance side of the trade-off between precision (FP16) and speed (INT8); it contains no accuracy data. The choice between the two therefore depends on the specific application's requirements for accuracy and speed. Within those limits, the data implies that INT8 is a viable optimization strategy for reducing latency in this system, particularly as batch sizes grow.