
## Line Charts: Question Answering Performance with Varying Action Counts

### Overview

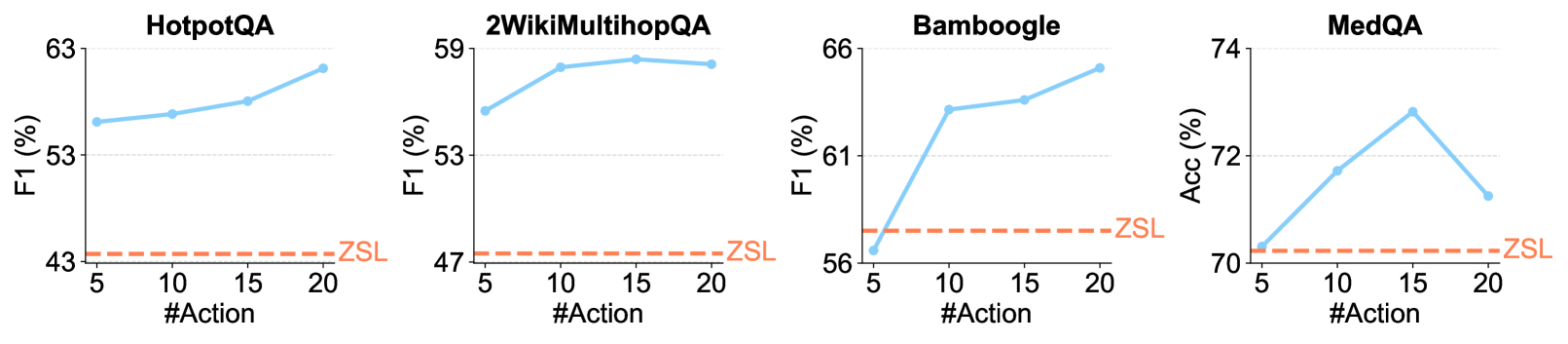

The image presents four line charts, one per question answering (QA) benchmark (HotpotQA, 2WikiMultihopQA, Bamboogle, and MedQA), each plotting performance as a function of the number of actions taken. The metric is F1 for HotpotQA, 2WikiMultihopQA, and Bamboogle, and accuracy (Acc) for MedQA. In every chart, a dashed horizontal line marks the performance of a baseline labeled "ZSL".

### Components/Axes

Each chart shares the following components:

* **X-axis:** Labeled "#Action", representing the number of actions, with a scale ranging from 5 to 20 in increments of 5.

* **Y-axis:** Represents the performance metric.

    * HotpotQA, 2WikiMultihopQA, and Bamboogle: Labeled "F1 (%)", with a scale ranging from approximately 43% to 63%.

    * MedQA: Labeled "Acc (%)", with a scale ranging from approximately 70% to 74%.

* **Data Series:** Each chart contains two lines:

    * A solid light-blue line representing the evaluated method's performance on that benchmark.

    * A dashed orange line representing the performance of the "ZSL" baseline.

* **Titles:** Each chart is titled with the benchmark being evaluated (HotpotQA, 2WikiMultihopQA, Bamboogle, MedQA).

* **Legend:** The legend is implicit, with "ZSL" clearly labeled on the dashed orange line.

### Detailed Analysis

**1. HotpotQA:**

* The light-blue line slopes upward throughout: the F1 score increases at every step in the number of actions.

* At #Action = 5, the F1 score is approximately 54%.

* At #Action = 10, the F1 score is approximately 57%.

* At #Action = 15, the F1 score is approximately 60%.

* At #Action = 20, the F1 score is approximately 62%.

* The ZSL baseline maintains a constant F1 score of approximately 43%.

**2. 2WikiMultihopQA:**

* The light-blue line initially rises sharply, then plateaus.

* At #Action = 5, the F1 score is approximately 48%.

* At #Action = 10, the F1 score is approximately 56%.

* At #Action = 15, the F1 score is approximately 59%.

* At #Action = 20, the F1 score is approximately 59%.

* The ZSL baseline maintains a constant F1 score of approximately 47%.

**3. Bamboogle:**

* The light-blue line initially decreases, then increases.

* At #Action = 5, the F1 score is approximately 64%.

* At #Action = 10, the F1 score is approximately 58%.

* At #Action = 15, the F1 score is approximately 60%.

* At #Action = 20, the F1 score is approximately 63%.

* The ZSL baseline maintains a constant F1 score of approximately 56%.

**4. MedQA:**

* The light-blue line initially increases, then levels off.

* At #Action = 5, the Accuracy is approximately 71%.

* At #Action = 10, the Accuracy is approximately 72%.

* At #Action = 15, the Accuracy is approximately 73%.

* At #Action = 20, the Accuracy is approximately 73%.

* The ZSL baseline maintains a constant Accuracy of approximately 70%.
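
The readings above can be collected into a small data structure to make the gap to the baseline explicit. The following is a minimal sketch in Python: the names `ACTIONS`, `READINGS`, and `margins_over_baseline` are illustrative, and the numbers are the approximate values listed in this section, not exact measurements.

```python
# Approximate values read from the charts (percent); not exact measurements.
ACTIONS = [5, 10, 15, 20]

READINGS = {
    "HotpotQA": {"metric": "F1", "scores": [54, 57, 60, 62], "zsl": 43},
    "2WikiMultihopQA": {"metric": "F1", "scores": [48, 56, 59, 59], "zsl": 47},
    "Bamboogle": {"metric": "F1", "scores": [64, 58, 60, 63], "zsl": 56},
    "MedQA": {"metric": "Acc", "scores": [71, 72, 73, 73], "zsl": 70},
}


def margins_over_baseline(readings):
    """Return, per benchmark, the score minus the ZSL baseline at each action count."""
    return {
        name: [score - entry["zsl"] for score in entry["scores"]]
        for name, entry in readings.items()
    }


# Print one line per benchmark, pairing each action count with its margin.
for name, margins in margins_over_baseline(READINGS).items():
    metric = READINGS[name]["metric"]
    pairs = ", ".join(f"#Action={a}: {m:+d}" for a, m in zip(ACTIONS, margins))
    print(f"{name} ({metric}, points over ZSL): {pairs}")
```

Because the inputs are rounded chart readings, the margins are only accurate to within about a percentage point.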

### Key Observations

* Performance at #Action = 20 exceeds performance at #Action = 5 for HotpotQA, 2WikiMultihopQA, and MedQA, although the shape of the improvement varies; Bamboogle ends about 1 point below its starting value.

* Bamboogle exhibits a non-monotonic performance curve, initially decreasing before increasing.

* The ZSL baseline sits below the solid line in every chart and at every action count; the margin is smallest for 2WikiMultihopQA and MedQA at #Action = 5 (about 1 point each).

* 2WikiMultihopQA shows a rapid initial improvement, followed by diminishing returns.

* MedQA accuracy levels off at approximately 73% for action counts of 15 and 20.
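
The trend descriptions in these observations can be checked mechanically from the step-to-step changes. The sketch below is one way to do that in Python, using the approximate chart readings from this section; `SCORES`, `step_changes`, and `describe_trend` are hypothetical helper names introduced only for illustration.

```python
# Approximate scores (percent) at #Action = 5, 10, 15, 20, read from the charts.
SCORES = {
    "HotpotQA": [54, 57, 60, 62],
    "2WikiMultihopQA": [48, 56, 59, 59],
    "Bamboogle": [64, 58, 60, 63],
    "MedQA": [71, 72, 73, 73],
}


def step_changes(scores):
    """Differences between consecutive readings."""
    return [later - earlier for earlier, later in zip(scores, scores[1:])]


def describe_trend(scores):
    """Classify a series by the signs of its step-to-step changes."""
    deltas = step_changes(scores)
    if all(d >= 0 for d in deltas):
        return "non-decreasing"
    if all(d <= 0 for d in deltas):
        return "non-increasing"
    return "non-monotonic"


for name, scores in SCORES.items():
    net = scores[-1] - scores[0]
    print(f"{name}: steps={step_changes(scores)}, net={net:+d}, {describe_trend(scores)}")
```

With these readings, Bamboogle is the only series classified as non-monotonic, and the shrinking step sizes for 2WikiMultihopQA (8, 3, 0) match the diminishing returns described above. Since the inputs are rounded, differences of a point or less should not be over-interpreted.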

### Interpretation

The charts show how the number of actions affects performance on four QA benchmarks. The "ZSL" baseline likely represents a zero-shot learning approach, where the model is evaluated without any task-specific training, although the image does not define the abbreviation. The solid line stays above ZSL in every chart, which suggests that allowing the model to take actions (potentially reasoning or information retrieval steps) improves its ability to answer questions.

The varying trends suggest that benchmarks benefit differently from increased action counts. On Bamboogle, F1 dips at 10 actions and then recovers, so additional actions do not reliably help there; the dip could reflect noise or irrelevant information introduced by intermediate actions, though the charts alone cannot confirm this. The plateau on 2WikiMultihopQA suggests a point of diminishing returns, where additional actions no longer improve the ability to find the correct answer. On MedQA, accuracy stays within a narrow band (approximately 71% to 73%), suggesting performance is relatively insensitive to action count within the tested range.

These results highlight the importance of action selection and control in question answering systems. Further investigation could focus on identifying the optimal action count for each benchmark and understanding the reasons behind the observed performance trends.