
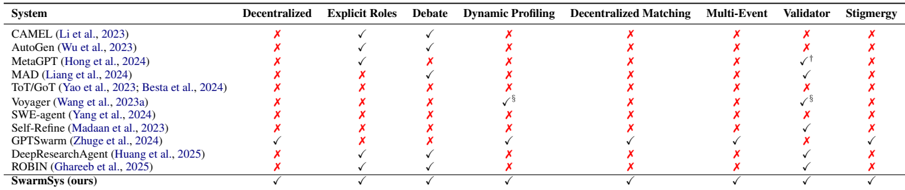

## Comparison Table: Multi-Agent System Features

### Overview

The image displays a comparison table evaluating 12 different multi-agent systems against 8 key architectural and functional features. The table uses a grid format with systems listed vertically and features horizontally. Each cell indicates whether a system supports a feature with a checkmark (✓) or does not with a red cross (✗). Some cells contain superscript numbers, likely referencing footnotes not visible in the provided image.

### Components/Axes

* **Rows (Systems):** Each row represents a specific multi-agent system, identified by its name and a citation (Author et al., Year).

* **Columns (Features):** The eight columns represent distinct features or capabilities:

1. Decentralized

2. Explicit Roles

3. Debate

4. Dynamic Profiling

5. Decentralized Matching

6. Multi-Event

7. Validator

8. Stigmergy

* **Cell Content:** A black checkmark (✓) signifies the presence of the feature. A red cross (✗) signifies its absence. Superscript numbers (e.g., `✓¹`, `✓⁵`) refer to footnotes and presumably mark a qualified or conditional implementation of the feature.
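To work with the table programmatically, each cell can be reduced to a support flag plus an optional footnote number. The following is a minimal Python sketch, assuming every cell is exactly one mark (✓ or ✗) optionally followed by superscript digits. `Cell` and `parse_cell` are hypothetical names used only for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Map Unicode superscript digits (⁰-⁹) to ordinary ASCII digits.
_SUPERSCRIPTS = str.maketrans("⁰¹²³⁴⁵⁶⁷⁸⁹", "0123456789")


@dataclass(frozen=True)
class Cell:
    supported: bool
    note: Optional[int] = None  # footnote number, if a superscript is present

    @property
    def qualified(self) -> bool:
        # A superscript means the support is annotated in a footnote.
        return self.note is not None


def parse_cell(text: str) -> Cell:
    """Parse a table cell such as '✓', '✗', or '✓⁵' (hypothetical helper)."""
    text = text.strip()
    if not text:
        raise ValueError("empty cell")
    mark, suffix = text[0], text[1:]
    if mark not in ("✓", "✗"):
        raise ValueError(f"unexpected mark: {mark!r}")
    note = int(suffix.translate(_SUPERSCRIPTS)) if suffix else None
    return Cell(supported=(mark == "✓"), note=note)


print(parse_cell("✓"))   # Cell(supported=True, note=None)
print(parse_cell("✓⁵"))  # Cell(supported=True, note=5)
print(parse_cell("✗"))   # Cell(supported=False, note=None)
```

Keeping the footnote number separate from the support flag lets a reader count qualified checks either as support or as a distinct category once the footnotes are known.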

### Detailed Analysis

The following is a row-by-row extraction of the table's content, presented as a Markdown table for clarity:

| System | Decentralized | Explicit Roles | Debate | Dynamic Profiling | Decentralized Matching | Multi-Event | Validator | Stigmergy |
|--------|---------------|----------------|--------|-------------------|------------------------|-------------|-----------|-----------|
| CAMEL (Li et al., 2023) | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ |
| AutoGen (Wu et al., 2023) | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| MetaGPT (Hong et al., 2024) | ✗ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| MAD (Liang et al., 2024) | ✗ | ✗ | ✓ | ✗ | ✗ | ✗ | ✓¹ | ✗ |
| ToT/GoT (Yao et al., 2023; Besta et al., 2024) | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ |
| Voyager (Wang et al., 2023a) | ✗ | ✗ | ✗ | ✓³ | ✗ | ✗ | ✓⁵ | ✗ |
| SWE-agent (Yang et al., 2024) | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| Self-Refine (Madaan et al., 2023) | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | ✗ |
| GPT-Writer (Chen et al., 2024) | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ | ✗ |
| DeepResearchAgent (Huang et al., 2025) | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ |
| ROBIN (Ghreeb et al., 2025) | ✗ | ✓ | ✓ | ✗ | ✗ | ✗ | ✓ | ✗ |
| SwarmSys (ours) | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ |

### Key Observations

* **Feature Prevalence:** "Explicit Roles" and "Validator" are tied as the most commonly supported features, with 6 of 12 systems each. The Validator count includes two qualified checks (MAD ✓¹ and Voyager ✓⁵).

* **Feature Scarcity:** "Decentralized," "Decentralized Matching," "Multi-Event," and "Stigmergy" are rare. Only the authors' system, "SwarmSys," supports all four, and it is the only system with "Decentralized" or "Decentralized Matching." "DeepResearchAgent" supports two of these four (Multi-Event and Stigmergy).

* **System Comprehensiveness:** Most systems (9 of 12) support only 0-2 of the listed features. "ROBIN" supports 3 and "DeepResearchAgent" supports 4. "SwarmSys (ours)" is presented as uniquely comprehensive, supporting all 8 features.

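* **Qualified Support:** Two systems, MAD and Voyager, have checks marked with superscripts (three marks in total: ✓¹ on MAD's Validator, and ✓³ and ✓⁵ on Voyager's Dynamic Profiling and Validator), indicating that their support is partial or subject to conditions noted elsewhere.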
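The totals behind these observations can be recomputed directly from the transcribed rows. The following minimal Python sketch assumes that each qualified check (✓¹, ✓³, ✓⁵) counts as support, since the footnotes are not available. `TABLE`, `feature_counts`, and `system_counts` are illustrative names, not part of the original work.

```python
# Column order follows the table header.
FEATURES = [
    "Decentralized", "Explicit Roles", "Debate", "Dynamic Profiling",
    "Decentralized Matching", "Multi-Event", "Validator", "Stigmergy",
]

# Rows transcribed from the table: "1" = ✓ (qualified checks included), "0" = ✗.
TABLE = {
    "CAMEL":             "01100000",
    "AutoGen":           "01000000",
    "MetaGPT":           "01000000",
    "MAD":               "00100010",
    "ToT/GoT":           "00000010",
    "Voyager":           "00010010",
    "SWE-agent":         "00000000",
    "Self-Refine":       "00000010",
    "GPT-Writer":        "00000000",
    "DeepResearchAgent": "01010101",
    "ROBIN":             "01100010",
    "SwarmSys":          "11111111",
}


def feature_counts(table: dict[str, str]) -> dict[str, int]:
    """Number of systems supporting each feature (column sums)."""
    return {f: sum(row[i] == "1" for row in table.values())
            for i, f in enumerate(FEATURES)}


def system_counts(table: dict[str, str]) -> dict[str, int]:
    """Number of features each system supports (row sums)."""
    return {name: row.count("1") for name, row in table.items()}


per_feature = feature_counts(TABLE)
per_system = system_counts(TABLE)
print(per_feature["Explicit Roles"], per_feature["Validator"])  # 6 6
print(sum(n <= 2 for n in per_system.values()))                 # 9
print(per_system["ROBIN"], per_system["DeepResearchAgent"])     # 3 4
```

Encoding each row as a fixed-width bit string keeps the transcription compact and makes a misaligned row easy to spot, since every string must have exactly eight characters.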

### Interpretation

This table serves as a **gap analysis and positioning tool** within the research landscape of multi-agent systems. It visually argues that existing systems are specialized, each implementing only a subset of desirable architectural traits. The primary rhetorical purpose is to highlight the novelty and comprehensiveness of the authors' proposed system, "SwarmSys," which is shown to be the only one integrating all eight evaluated capabilities.

The features themselves suggest a research direction towards more **autonomous, robust, and scalable** multi-agent ecosystems. Capabilities like "Decentralized Matching," "Multi-Event" handling, and "Stigmergy" (indirect coordination through the environment) point to systems designed for complex, dynamic, and open-ended tasks where pre-defined roles and centralized control are insufficient. The table implies that "SwarmSys" is designed to address these more challenging scenarios, while prior work has focused on more constrained problems requiring fewer of these advanced features. The absence of footnotes in the image limits a full understanding of the qualified checks (✓¹, ✓³, ✓⁵), which would be critical for a nuanced technical assessment.
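Stigmergy, as described above, means agents coordinate by modifying and reading a shared environment rather than by messaging each other. The following toy Python sketch illustrates only that general idea. It is not the mechanism used by SwarmSys or DeepResearchAgent. `Environment`, `choose_task`, the evaporation factor, and the "pick the least-marked task" rule are all hypothetical choices made for illustration.

```python
from collections import defaultdict


class Environment:
    """Shared medium. Agents never message each other; they only read and write marks here."""

    def __init__(self, evaporation: float = 0.5):
        self.marks = defaultdict(float)  # task name -> trace strength
        self.evaporation = evaporation   # fraction of each trace kept per evaporate() call

    def deposit(self, task: str, amount: float = 1.0) -> None:
        self.marks[task] += amount

    def evaporate(self) -> None:
        # Old traces fade, so stale activity stops influencing future choices.
        for task in self.marks:
            self.marks[task] *= self.evaporation


def choose_task(env: Environment, tasks: list[str]) -> str:
    # Read the environment, not other agents: pick the task with the weakest
    # trace (ties go to the earliest task in the list).
    return min(tasks, key=lambda t: env.marks[t])


env = Environment()
tasks = ["search", "summarize", "verify"]
assignments = []
for agent in ["a1", "a2", "a3"]:
    task = choose_task(env, tasks)
    env.deposit(task)  # leave a trace that later agents will see
    assignments.append((agent, task))

print(assignments)  # [('a1', 'search'), ('a2', 'summarize'), ('a3', 'verify')]
```

Because each agent only inspects trace strengths, the three agents end up on different tasks without any direct communication. Replacing `min` with `max` would instead make agents follow the strongest trail, as in ant-foraging models.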