## Heatmap: Neural Network Layer Activation Intensity

### Overview

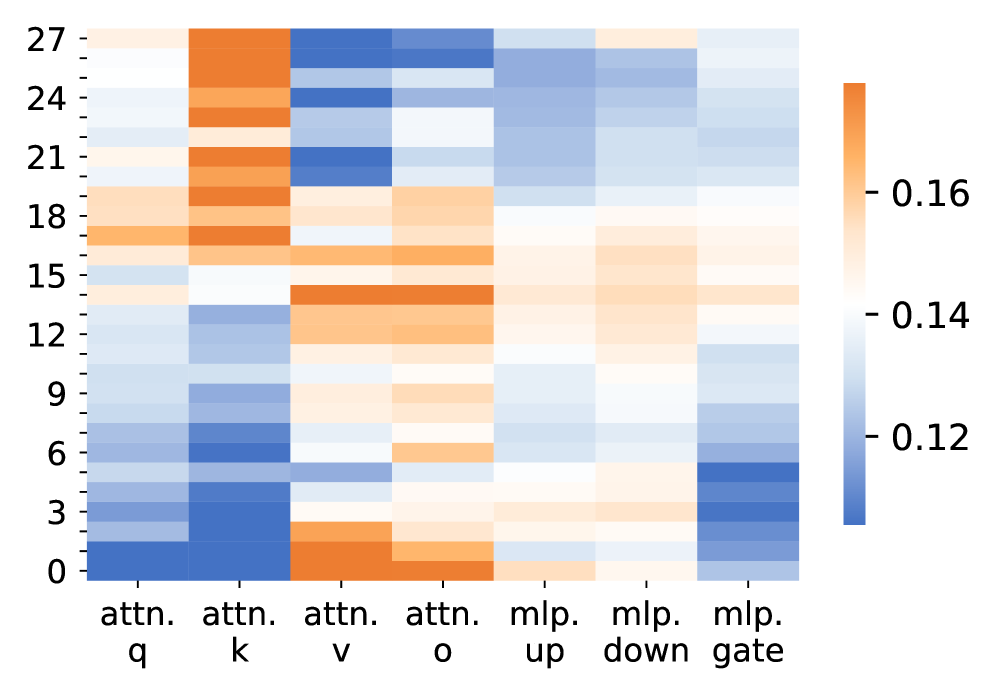

The image is a heatmap of numerical values arranged in a grid. The vertical axis is an index from 0 to 27 (likely layer index or position). The horizontal axis lists seven components of a neural network architecture, drawn from its attention and MLP (Multi-Layer Perceptron) blocks. Color encodes the value on a diverging scale, shown in a color bar on the right.

### Components/Axes

* **Vertical Axis (Y-axis):** Integer tick labels from 0 to 27 in steps of 3, on the left side of the heatmap. Row 0 is at the bottom and row 27 at the top.

* **Horizontal Axis (X-axis):** Contains seven categorical labels, positioned at the bottom. From left to right:
    1. `attn. q` (Attention Query)
    2. `attn. k` (Attention Key)
    3. `attn. v` (Attention Value)
    4. `attn. o` (Attention Output)
    5. `mlp. up` (MLP Up-projection)
    6. `mlp. down` (MLP Down-projection)
    7. `mlp. gate` (MLP Gate)

* **Color Scale/Legend:** Positioned on the right side of the heatmap. It is a vertical color bar showing a gradient from dark blue (bottom) to orange (top). The scale is annotated with three approximate values:
    * **~0.12** (Dark Blue)
    * **~0.14** (Light Blue/White)
    * **~0.16** (Orange)

The scale indicates that blue tones represent lower values, white/light tones represent mid-range values, and orange tones represent higher values.
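The legend describes a diverging scale: below the midpoint, colors shade from dark blue toward white; above it, from white toward orange. A minimal sketch of such a mapping follows, assuming linear interpolation between three anchor colors at 0.12, 0.14, and 0.16. The anchor RGB triples are hypothetical, since the figure's exact colormap is unknown.

```python
# Hypothetical anchor colors (RGB in [0, 1]); the figure's exact colors are unknown.
DARK_BLUE = (0.0, 0.2, 0.6)
WHITE = (1.0, 1.0, 1.0)
ORANGE = (1.0, 0.5, 0.0)


def lerp(a, b, t):
    """Linearly interpolate between RGB triples a (t=0) and b (t=1)."""
    return tuple(x * (1.0 - t) + y * t for x, y in zip(a, b))


def diverging_color(value, vmin=0.12, vcenter=0.14, vmax=0.16):
    """Map a value to RGB: vmin -> dark blue, vcenter -> white, vmax -> orange."""
    v = min(max(value, vmin), vmax)  # clamp values outside the annotated range
    if v <= vcenter:
        t = (v - vmin) / (vcenter - vmin)
        return lerp(DARK_BLUE, WHITE, t)
    t = (v - vcenter) / (vmax - vcenter)
    return lerp(WHITE, ORANGE, t)


print(diverging_color(0.12))  # dark blue anchor
print(diverging_color(0.14))  # white anchor
print(diverging_color(0.16))  # orange anchor
```

Splitting the scale at the center lets each half have its own slope, which keeps the midpoint value pinned to white even if the range were asymmetric. In matplotlib, `matplotlib.colors.TwoSlopeNorm(vcenter=0.14, vmin=0.12, vmax=0.16)` performs the equivalent normalization step.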

### Detailed Analysis

The heatmap is a grid of 28 rows by 7 columns. The color bar's labeled ticks span roughly 0.12 to 0.16; reading the darkest and most saturated cells against the ends of the bar puts the full range at approximately 0.11 to 0.17.
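Reading values off a heatmap amounts to inverting the color bar: for each cell color, find the position on the bar whose color matches most closely. The following sketch uses a brute-force nearest-color search under assumed conditions: hypothetical anchor colors and a linear blue-white-orange scale over 0.12 to 0.16.

```python
# Hypothetical anchor colors and range; the figure's true colormap is unknown.
VMIN, VCENTER, VMAX = 0.12, 0.14, 0.16
DARK_BLUE = (0.0, 0.2, 0.6)
WHITE = (1.0, 1.0, 1.0)
ORANGE = (1.0, 0.5, 0.0)


def scale_color(value):
    """Linear blue-white-orange diverging scale centered at VCENTER."""
    if value <= VCENTER:
        t = (value - VMIN) / (VCENTER - VMIN)
        lo, hi = DARK_BLUE, WHITE
    else:
        t = (value - VCENTER) / (VMAX - VCENTER)
        lo, hi = WHITE, ORANGE
    return tuple(a * (1.0 - t) + b * t for a, b in zip(lo, hi))


def estimate_value(rgb, samples=401):
    """Return the sampled color-bar value whose color is nearest to rgb."""
    best_value, best_dist = VMIN, float("inf")
    for i in range(samples):
        v = VMIN + (VMAX - VMIN) * i / (samples - 1)
        # Squared Euclidean distance in RGB space.
        dist = sum((c - s) ** 2 for c, s in zip(rgb, scale_color(v)))
        if dist < best_dist:
            best_value, best_dist = v, dist
    return best_value


# A cell rendered in pure white reads as the midpoint of the scale.
print(round(estimate_value(WHITE), 3))
```

Because the search is confined to the sampled range, any cell beyond the ends of the bar saturates to 0.12 or 0.16. Values outside that interval can only be extrapolated, not read directly.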

**Trend Verification by Column:**

1. **`attn. q`:** The column shows a clear upward trend in value. It starts with the darkest blue (lowest values, ~0.11-0.12) at rows 0-3 and gradually transitions to light blue/white (mid-range values, ~0.14) by rows 24-27.

2. **`attn. k`:** This column has a more complex pattern. It is moderately blue in the lower rows (0-9), with its darkest cells at rows 3-6. It rises to orange (high values, ~0.16) in the middle-upper rows (15-21) and settles to light blue/white in the top rows (24-27).

3. **`attn. v`:** Shows a roughly U-shaped profile: orange (high values) at the very bottom (rows 0-3), blue (low values) through the middle rows (6-18), and a mix of orange and blue cells at the top.

4. **`attn. o`:** Displays a non-monotonic pattern. It starts with orange at the bottom (rows 0-3), dips to light blue in the lower-middle section (rows 6-12), shows a second band of orange in the middle-upper rows (15-18), and fades to light blue at the top.

5. **`mlp. up`:** This column is predominantly light blue/white (mid-range values, ~0.14) throughout, with a slight tendency toward lighter shades (higher values) in the upper half (rows 15-27).

6. **`mlp. down`:** Similar to `mlp. up`, it is mostly light blue/white, but its shade is more uniform and slightly lighter across most rows, suggesting values close to 0.14.

7. **`mlp. gate`:** Shows a clear upward trend in value. It starts with dark blue (low values, ~0.12) at the bottom (rows 0-6) and becomes progressively lighter, reaching light blue/white (mid-range values) by the top rows (21-27).
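The trend labels above can be made reproducible once per-row values are available. The following sketch calls a column non-monotonic if it both rises and falls by more than a tolerance somewhere along the rows. Otherwise, it uses the sign of the least-squares slope against row index. The tolerance and the example column are hypothetical, not values read from the figure.

```python
def ls_slope(values):
    """Ordinary least-squares slope of values against row index 0..n-1 (n >= 2)."""
    n = len(values)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def max_rise_and_fall(values):
    """Largest later-minus-earlier increase and largest decrease along the rows."""
    run_min = run_max = values[0]
    rise = fall = 0.0
    for v in values[1:]:
        rise = max(rise, v - run_min)
        fall = max(fall, run_max - v)
        run_min = min(run_min, v)
        run_max = max(run_max, v)
    return rise, fall


def classify_trend(values, tol=0.01):
    """Label a column 'upward', 'downward', 'flat', or 'non-monotonic'.

    `tol` (hypothetical) is the smallest change, in metric units, that counts.
    """
    rise, fall = max_rise_and_fall(values)
    if rise > tol and fall > tol:
        return "non-monotonic"  # e.g. a dip followed by a peak
    slope = ls_slope(values)
    if slope > 0 and rise > tol:
        return "upward"
    if slope < 0 and fall > tol:
        return "downward"
    return "flat"


# Hypothetical column rising linearly from 0.12 (row 0) to 0.14 (row 27).
column = [0.12 + 0.02 * i / 27 for i in range(28)]
print(classify_trend(column))  # upward
```

With row 0 at the bottom, a column that goes from dark blue at the bottom to light blue/white at the top, such as `attn. q`, produces a positive slope and is labeled "upward". A column with an interior dip and peak, such as `attn. o`, is labeled "non-monotonic" regardless of its endpoints.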

**Spatial Grounding of Key Data Points:**

* **Highest Values (Orange, ~0.16+):** Concentrated in the `attn. k` column at rows 15-21 and in the `attn. o` column at rows 15-18. Also present at the very bottom (rows 0-3) of `attn. v` and `attn. o`.

* **Lowest Values (Dark Blue, ~0.11-0.12):** Found in the `attn. q` column at rows 0-3, the `attn. k` column at rows 3-6, and the `mlp. gate` column at rows 0-6.

* **Mid-Range Values (Light Blue/White, ~0.14):** Dominate the `mlp. up` and `mlp. down` columns across all rows, and appear in the upper sections of `attn. q` and `mlp. gate`.
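The grounding above amounts to thresholding each column and reporting contiguous row ranges. The following sketch stores the grid as a list of rows (row 0 first) and uses hypothetical cut-offs of 0.155 for "high" and 0.125 for "low". The example grid is also hypothetical.

```python
def runs_where(values, predicate):
    """Return inclusive (start_row, end_row) ranges where predicate(value) holds."""
    runs, start = [], None
    for row, v in enumerate(values):
        if predicate(v):
            if start is None:
                start = row
        elif start is not None:
            runs.append((start, row - 1))
            start = None
    if start is not None:
        runs.append((start, len(values) - 1))
    return runs


def ground_extremes(grid, columns, high=0.155, low=0.125):
    """Map each column name to the row ranges of its high and low cells."""
    report = {}
    for j, name in enumerate(columns):
        col = [row[j] for row in grid]
        report[name] = {
            "high": runs_where(col, lambda v: v >= high),
            "low": runs_where(col, lambda v: v <= low),
        }
    return report


# Hypothetical 4-row excerpt with two columns.
columns = ["attn. v", "mlp. gate"]
grid = [
    [0.16, 0.12],
    [0.16, 0.12],
    [0.13, 0.14],
    [0.12, 0.14],
]
print(ground_extremes(grid, columns))
```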

### Key Observations

1. **Component-Specific Patterns:** Most of the seven network components show a distinctive pattern of activation intensity across the 28 layers/positions; `mlp. up` and `mlp. down` are the exception, closely resembling each other.

2. **Attention vs. MLP Contrast:** The attention components (`attn. q/k/v/o`) show much higher variance and contain all of the highest (orange) values. The MLP components (`mlp. up/down/gate`) are more uniform. `mlp. up` and `mlp. down` stay near the mid-range throughout, and `mlp. gate` starts low (dark blue) in the early rows but never reaches the high end of the scale.

3. **Vertical Stratification:** Some rows share similar colors across multiple columns, most notably rows 15-18, where `attn. k` and `attn. o` are both elevated.

4. **Parallel Trends:** `attn. q` and `mlp. gate` follow similar trajectories. Both start with dark blue (low) values in the early rows and lighten to mid-range values toward the top.
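Observations 2 and 4 are quantitative claims that can be checked numerically once the grid values are available. Observation 4 concerns whether two columns move together or inversely, which is the sign of their Pearson correlation. Observation 2 concerns whether attention columns vary more than MLP columns, which can be measured by per-column variance. A minimal stdlib sketch follows; the example columns are hypothetical, not values read from the figure.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant column")
    return cov / (sx * sy)


def variance(xs):
    """Population variance of a sequence."""
    m = sum(xs) / len(xs)
    return sum((x - m) ** 2 for x in xs) / len(xs)


# Hypothetical columns: r near +1 means parallel trends, near -1 means inverse.
q = [0.12 + 0.02 * i / 27 for i in range(28)]
print(pearson(q, q[::-1]))  # an exactly reversed column: strongly negative
print(variance(q))
```

A group comparison then reduces to averaging `variance` over the four attention columns and over the three MLP columns.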

### Interpretation

This heatmap likely visualizes a metric (e.g., activation magnitude, gradient norm, or importance score) across different components and layers of a deep transformer-based neural network. The 28 row indices probably correspond to the model's 28 layers.
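The axis labels match the projection names in Llama-style transformer blocks. In Hugging Face `transformers`, these correspond to modules such as `model.layers.{i}.self_attn.q_proj` and `model.layers.{i}.mlp.gate_proj`. The figure does not confirm that its model follows this naming. If it does, a grid like this one could be assembled from recorded per-module outputs. The sketch below uses mean absolute output value as a stand-in for "activation magnitude". The metric, the naming template, and the `outputs` dictionary are all hypothetical.

```python
# Hypothetical mapping from the figure's column labels to Llama-style module suffixes.
COLUMNS = {
    "attn. q": "self_attn.q_proj",
    "attn. k": "self_attn.k_proj",
    "attn. v": "self_attn.v_proj",
    "attn. o": "self_attn.o_proj",
    "mlp. up": "mlp.up_proj",
    "mlp. down": "mlp.down_proj",
    "mlp. gate": "mlp.gate_proj",
}


def mean_abs(xs):
    """Mean absolute value: one possible 'activation magnitude' metric."""
    return sum(abs(x) for x in xs) / len(xs)


def build_grid(outputs, num_layers, metric=mean_abs):
    """Arrange per-module metric values into a num_layers x len(COLUMNS) grid.

    `outputs` maps full module names to flat lists of recorded output values.
    Row i of the result is layer i; columns follow the order of COLUMNS.
    """
    grid = []
    for layer in range(num_layers):
        row = []
        for suffix in COLUMNS.values():
            name = f"model.layers.{layer}.{suffix}"
            row.append(metric(outputs[name]))
        grid.append(row)
    return grid


# Tiny synthetic example: 3 layers whose outputs grow with layer index.
fake = {
    f"model.layers.{i}.{s}": [float(i), -float(i)]
    for i in range(3)
    for s in COLUMNS.values()
}
print(build_grid(fake, num_layers=3))
```

In PyTorch, the per-module outputs would typically be captured with `torch.nn.Module.register_forward_hook`. Swapping `metric` for a different reduction, such as a gradient norm, changes what the heatmap shows without changing its layout.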

The data suggests that:

* **Information Processing Varies by Component:** The attention projections are the most dynamic. The key (`k`) and output (`o`) projections peak at specific layers, the value (`v`) projection is high at both ends of the depth range, and the query (`q`) projection rises steadily. The `k` and `o` peaks could indicate stages where the model is performing intense relational reasoning or feature integration.

* **Stability in MLP Layers:** The relatively uniform, mid-range values in `mlp. up` and `mlp. down` suggest a more consistent, perhaps stabilizing, role throughout the network's depth. `mlp. gate` is the exception, rising from low values in the early layers.

* **Layer-Specific Roles:** The band of high activity around layers 15-18 in `attn. k` and `attn. o` might mark a critical "processing hub" in the network's hierarchy. The low initial values in `attn. q` and `mlp. gate` could indicate a warm-up phase in early layers.

* **Functional Grouping:** The distinct patterns for `q`, `k`, `v`, and `o` suggest that these sub-components, while part of the same attention block, are not redundant and may specialize in different aspects of the transformation.
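Whether a band like rows 15-18 is genuinely shared across attention columns can be checked in two steps. First, count row by row how many attention columns meet a "high" threshold. Then collapse the qualifying rows into contiguous bands. In the following sketch, the threshold, the minimum column count, and the example grid are all hypothetical.

```python
def co_elevated_rows(grid, column_names, targets, threshold=0.155, min_count=2):
    """Row indices where at least `min_count` of the `targets` columns are >= threshold."""
    idx = [column_names.index(name) for name in targets]
    return [
        r
        for r, row in enumerate(grid)
        if sum(row[j] >= threshold for j in idx) >= min_count
    ]


def to_bands(rows):
    """Collapse sorted row indices into inclusive (start, end) bands."""
    bands = []
    for r in rows:
        if bands and r == bands[-1][1] + 1:
            bands[-1] = (bands[-1][0], r)
        else:
            bands.append((r, r))
    return bands


# Hypothetical 6-row grid over the four attention columns.
names = ["attn. q", "attn. k", "attn. v", "attn. o"]
grid = [
    [0.12, 0.13, 0.16, 0.15],
    [0.13, 0.14, 0.12, 0.14],
    [0.13, 0.16, 0.12, 0.16],
    [0.14, 0.16, 0.12, 0.16],
    [0.14, 0.15, 0.13, 0.14],
    [0.14, 0.14, 0.15, 0.14],
]
print(to_bands(co_elevated_rows(grid, names, names)))  # [(2, 3)]
```

A single-column spike (row 0 here) does not qualify, so the result isolates rows where elevation co-occurs, which is what the "processing hub" reading depends on.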

**In summary, the heatmap provides a diagnostic snapshot of a neural network's internal state, revealing how different functional units contribute variably across the model's depth, with the attention projections showing the most dynamic, layer-specific variation.**