
## Heatmap: Attention and MLP Layer Contributions

### Overview

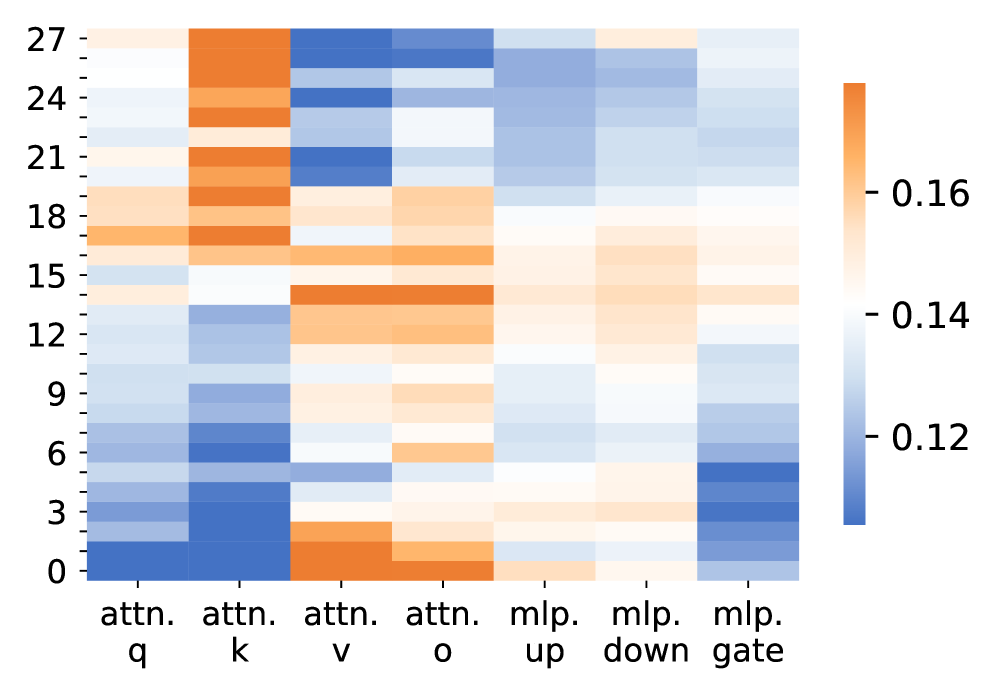

The image presents a heatmap of the contribution of seven attention and Multi-Layer Perceptron (MLP) components across indices 0 to 27, which likely correspond to layers within a neural network. A color gradient encodes the magnitude of the contribution: warmer colors (orange/red) indicate higher values and cooler colors (blue) indicate lower values.

### Components/Axes

* **X-axis:** Represents seven components: "attn. q", "attn. k", "attn. v", "attn. o", "mlp. up", "mlp. down", "mlp. gate". These likely refer to the Query, Key, Value, and Output projections of an attention mechanism, and the Up, Down, and Gate projections of an MLP.

* **Y-axis:** Represents indices from 0 to 27, with numeric tick labels every 3 (0, 3, 6, ..., 27). These likely correspond to layer numbers or positions within the network.

* **Color Scale (Legend):** Located on the right side of the image, the color scale ranges from approximately 0.12 (blue) to 0.16 (red). This scale indicates the magnitude of the values represented by the heatmap colors.

### Detailed Analysis

The contribution varies both by component and by index. Each component is summarized below, with values read from the color scale.

* **attn. q:** Shows a strong, consistent contribution across all indices, with values generally between 0.14 and 0.16 (orange). The contribution appears relatively uniform.

* **attn. k:** Exhibits a peak contribution around index 6, reaching approximately 0.16 (red). The contribution decreases towards both ends of the index range, falling to around 0.12 (blue) at indices 0 and 27.

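* **attn. v:** Shows a moderate contribution, with a peak around index 6, reaching approximately 0.15 (orange), and falling to about 0.12 (blue) from index 12 onward. The contribution is lower than "attn. q" everywhere except at the peak, and lower than "attn. k" beyond the first few indices.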

* **attn. o:** Displays a relatively low and consistent contribution across all indices, generally between 0.12 and 0.13 (light blue).

* **mlp. up:** Shows a moderate contribution, with a peak around indices 21 to 24, reaching approximately 0.15 (orange). The contribution decreases towards lower indices, to about 0.13 at indices 0 to 6.

* **mlp. down:** Exhibits a peak contribution around index 0, reaching approximately 0.15 (orange). The contribution drops to about 0.12 (blue) between indices 9 and 18, then recovers slightly to about 0.13 at indices 21 to 27.

* **mlp. gate:** Shows a moderate contribution, with a peak around index 6, reaching approximately 0.14 (orange). The contribution is generally lower than "mlp. up" and comparable to "mlp. down" outside the latter's early peak.

Specifically, approximate values (with uncertainty of +/- 0.01) are:

| Component | Index 0 | Index 3 | Index 6 | Index 9 | Index 12 | Index 15 | Index 18 | Index 21 | Index 24 | Index 27 |
| --------- | ------- | ------- | ------- | ------- | -------- | -------- | -------- | -------- | -------- | -------- |
| attn. q | 0.15 | 0.15 | 0.15 | 0.15 | 0.15 | 0.15 | 0.15 | 0.15 | 0.15 | 0.15 |
| attn. k | 0.12 | 0.13 | 0.16 | 0.14 | 0.13 | 0.14 | 0.14 | 0.14 | 0.13 | 0.12 |
| attn. v | 0.13 | 0.14 | 0.15 | 0.13 | 0.12 | 0.13 | 0.12 | 0.12 | 0.12 | 0.12 |
| attn. o | 0.12 | 0.12 | 0.12 | 0.12 | 0.12 | 0.12 | 0.12 | 0.12 | 0.12 | 0.12 |
| mlp. up | 0.13 | 0.13 | 0.13 | 0.14 | 0.14 | 0.14 | 0.14 | 0.15 | 0.15 | 0.14 |
| mlp. down | 0.15 | 0.14 | 0.13 | 0.12 | 0.12 | 0.12 | 0.12 | 0.13 | 0.13 | 0.13 |
| mlp. gate | 0.13 | 0.13 | 0.14 | 0.13 | 0.12 | 0.13 | 0.13 | 0.13 | 0.13 | 0.12 |
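The tabulated values can be summarized programmatically, which makes it easier to check statements about peaks and rankings against the numbers. The following is a minimal sketch in plain Python; the names `LAYER_INDICES`, `VALUES`, `summarize`, and `top_components` are hypothetical, and the numbers are the approximate readings from the table (each with ±0.01 uncertainty), not exact data from the figure.

```python
# Approximate values transcribed from the table (each +/- 0.01).
# All names here are illustrative and not part of the original figure.
LAYER_INDICES = [0, 3, 6, 9, 12, 15, 18, 21, 24, 27]
VALUES = {
    "attn. q":   [0.15, 0.15, 0.15, 0.15, 0.15, 0.15, 0.15, 0.15, 0.15, 0.15],
    "attn. k":   [0.12, 0.13, 0.16, 0.14, 0.13, 0.14, 0.14, 0.14, 0.13, 0.12],
    "attn. v":   [0.13, 0.14, 0.15, 0.13, 0.12, 0.13, 0.12, 0.12, 0.12, 0.12],
    "attn. o":   [0.12, 0.12, 0.12, 0.12, 0.12, 0.12, 0.12, 0.12, 0.12, 0.12],
    "mlp. up":   [0.13, 0.13, 0.13, 0.14, 0.14, 0.14, 0.14, 0.15, 0.15, 0.14],
    "mlp. down": [0.15, 0.14, 0.13, 0.12, 0.12, 0.12, 0.12, 0.13, 0.13, 0.13],
    "mlp. gate": [0.13, 0.13, 0.14, 0.13, 0.12, 0.13, 0.13, 0.13, 0.13, 0.12],
}


def summarize(values, indices):
    """Return {component: (mean, peak_index, peak_value)}."""
    summary = {}
    for name, row in values.items():
        peak_value = max(row)
        # On ties, report the first (lowest) index that reaches the peak.
        peak_index = indices[row.index(peak_value)]
        summary[name] = (sum(row) / len(row), peak_index, peak_value)
    return summary


def top_components(values, indices):
    """Return {index: [components with the largest value at that index]}."""
    top = {}
    for col, idx in enumerate(indices):
        best = max(row[col] for row in values.values())
        top[idx] = [name for name, row in values.items() if row[col] == best]
    return top


for name, (mean, peak_idx, peak_val) in summarize(VALUES, LAYER_INDICES).items():
    print(f"{name:10s} mean={mean:.3f}  peak={peak_val:.2f} at index {peak_idx}")

for idx, names in top_components(VALUES, LAYER_INDICES).items():
    print(f"index {idx:2d}: {', '.join(names)}")
```

Because the readings are rounded to two decimals, ties are common; `top_components` reports every tied component rather than picking one arbitrarily.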

### Key Observations

* "attn. q" exhibits the highest contribution (or ties for it) at every index except index 6, where "attn. k" peaks at approximately 0.16.

* "attn. k" shows a distinct peak around index 6, suggesting a particularly important role for this layer at that position.

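* "mlp. up" and "mlp. down" show broadly opposing trends: "mlp. up" rises from about 0.13 at low indices to about 0.15 near indices 21 to 24, while "mlp. down" falls from about 0.15 at index 0 to about 0.12 mid-range before recovering slightly to 0.13.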

* "attn. o" sits at the bottom of the scale (about 0.12) at every index; other components match this value at some indices but never fall below it.
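The opposing trends of "mlp. up" and "mlp. down" can be quantified with an ordinary least-squares slope over the index axis. This is a sketch under the assumption that the tabulated readings are representative; `ls_slope` and the variable names are hypothetical helpers, and a straight-line fit is only a rough summary because "mlp. down" is not monotonic.

```python
def ls_slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Approximate readings from the table (each +/- 0.01); names are illustrative.
indices = [0, 3, 6, 9, 12, 15, 18, 21, 24, 27]
mlp_up = [0.13, 0.13, 0.13, 0.14, 0.14, 0.14, 0.14, 0.15, 0.15, 0.14]
mlp_down = [0.15, 0.14, 0.13, 0.12, 0.12, 0.12, 0.12, 0.13, 0.13, 0.13]

slope_up = ls_slope(indices, mlp_up)
slope_down = ls_slope(indices, mlp_down)

# Express each slope as the fitted change over the full index range (0 to 27).
span = indices[-1] - indices[0]
print(f"mlp. up:   {slope_up * span:+.3f} over indices 0-27")
print(f"mlp. down: {slope_down * span:+.3f} over indices 0-27")
```

With these readings the fitted slope is positive for "mlp. up" and negative for "mlp. down". The fitted change over the full range is on the order of 0.01 to 0.02 in magnitude, which is comparable to the stated ±0.01 reading uncertainty, so the trend is visible but not large.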

### Interpretation

The heatmap suggests that the Query projection of the attention mechanism ("attn. q") carries a consistently high contribution throughout the network. The peak in "attn. k" around index 6 marks the one region where the Key projection exceeds it. The opposing trends in "mlp. up" and "mlp. down" may reflect a complementary relationship between these projections, possibly an encoding and decoding process, although the figure alone does not establish this. The low contribution of "attn. o" might indicate that the attention output projection is less directly influential than the query and key projections; "attn. v" is similarly low from index 12 onward.

This data could be used to compare the relative importance of components across depth, potentially guiding pruning or optimization efforts. The full color scale spans only about 0.12 to 0.16 and the values are read to within ±0.01, so small differences between components should be interpreted with caution. Even so, the patterns differ between attention and MLP components, which supports considering both mechanisms when analyzing network behavior.