## Diagram: LLM Reasoning Strategies

### Overview

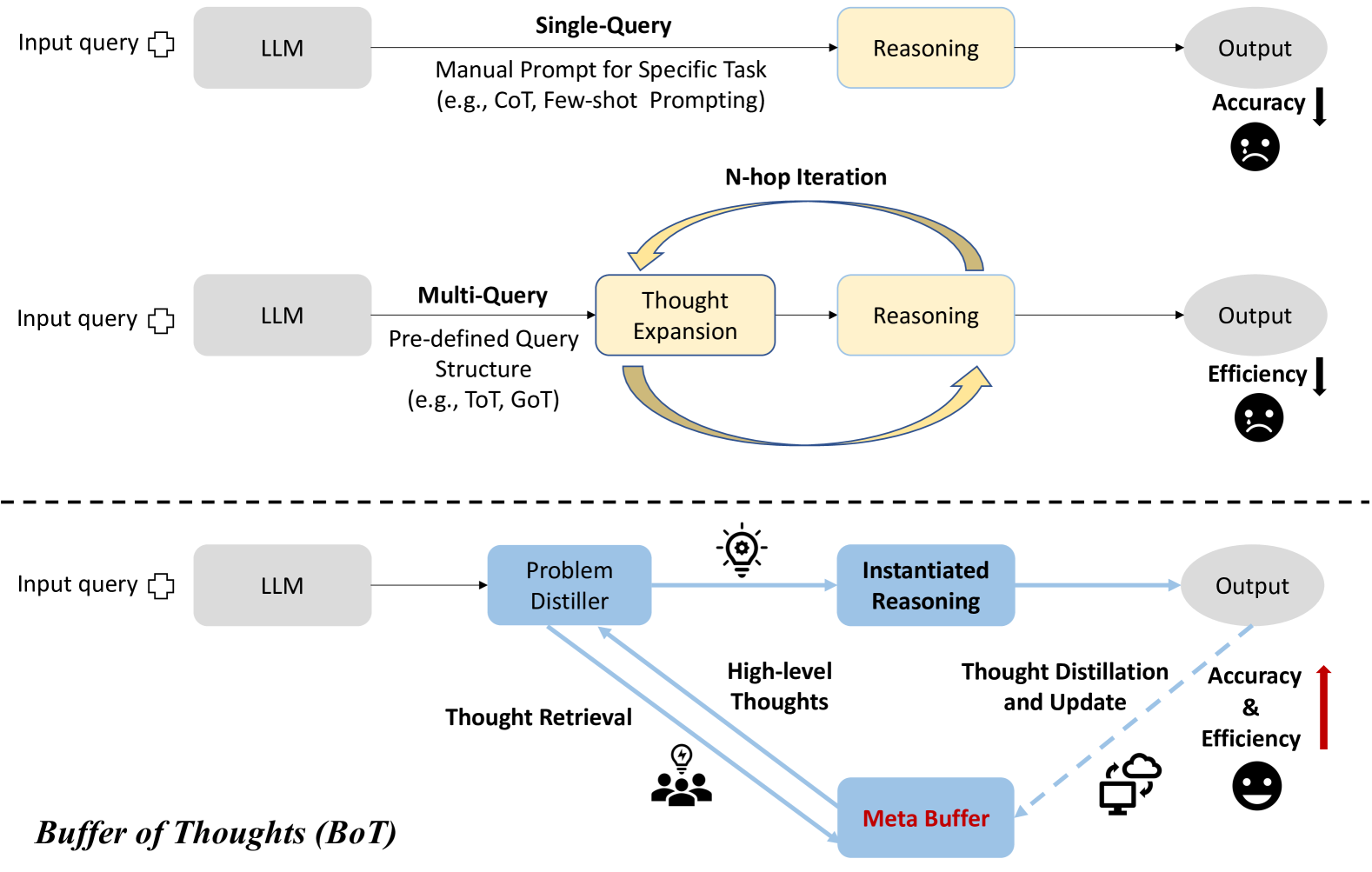

The diagram compares three strategies for Large Language Model (LLM) reasoning: Single-Query, Multi-Query (N-hop Iteration), and Buffer of Thoughts (BoT). Each strategy processes an input query and produces an output in a different way, and each is annotated with its effect on accuracy, efficiency, or both.

### Components

**1. Single-Query:**

* **Input query:** Represents the initial prompt provided to the LLM.

* **LLM:** Denotes the Large Language Model processing the input.

* **Manual Prompt for Specific Task (e.g., CoT, Few-shot Prompting):** Describes the prompting technique used.

* **Reasoning:** Represents the reasoning process performed by the LLM.

* **Output:** The final result generated by the LLM.

* **Accuracy:** Indicates the level of correctness of the output, shown with a downward arrow and a sad face, suggesting lower accuracy.

**2. Multi-Query (N-hop Iteration):**

* **Input query:** Represents the initial prompt provided to the LLM.

* **LLM:** Denotes the Large Language Model processing the input.

* **Multi-Query:** Indicates that the LLM is queried repeatedly, once per iteration (hop), rather than a single time.

* **Pre-defined Query Structure (e.g., ToT, GoT):** Describes the structure of the queries.

* **Thought Expansion:** Represents the process of expanding on initial thoughts.

* **Reasoning:** Represents the reasoning process performed by the LLM.

* **Output:** The final result generated by the LLM.

* **Efficiency:** Indicates the efficiency of the method, shown with a downward arrow and a sad face, suggesting lower efficiency.

**3. Buffer of Thoughts (BoT):**

* **Input query:** Represents the initial prompt provided to the LLM.

* **LLM:** Denotes the Large Language Model processing the input.

* **Problem Distiller:** The component that extracts the key information from the input query before reasoning begins.

* **Instantiated Reasoning:** The reasoning step in which the LLM applies a retrieved high-level thought to the specific problem.

* **Output:** The final result generated by the LLM.

* **Thought Distillation and Update:** The process that condenses completed reasoning into high-level thoughts and writes them to the Meta Buffer.

* **Meta Buffer:** The store that holds high-level thoughts for reuse.

* **High-level Thoughts:** The general, reusable thoughts kept in the Meta Buffer.

* **Thought Retrieval:** The process of fetching relevant high-level thoughts from the Meta Buffer for the current query.

* **Accuracy & Efficiency:** Indicates the accuracy and efficiency of the method, shown with an upward arrow and a happy face, suggesting that both are higher.

### Detailed Analysis

**1. Single-Query:**

* The process starts with an input query fed into the LLM.

* The LLM uses a manual prompt tailored for a specific task, such as Chain-of-Thought (CoT) or Few-shot Prompting.

* The LLM performs reasoning based on the prompt and generates an output.

* The accuracy of this method is indicated to be lower.
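
The single-query flow above can be sketched in a few lines. This is a minimal illustration, not an implementation taken from the diagram: `fake_llm`, `COT_TEMPLATE`, and `single_query` are hypothetical names, and `fake_llm` is a stand-in for a real model call.

```python
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call (assumption, not a real API)."""
    return f"answer derived from a {len(prompt)}-character prompt"


# Hand-written, task-specific prompt; here a Chain-of-Thought style instruction.
COT_TEMPLATE = "Q: {query}\nLet's think step by step.\nA:"


def single_query(query: str, llm: Callable[[str], str] = fake_llm) -> str:
    """Wrap the query in the manual prompt and call the model exactly once."""
    prompt = COT_TEMPLATE.format(query=query)
    return llm(prompt)
```

The whole method is one prompt and one call, so its quality depends on how well the hand-written template fits the task.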

**2. Multi-Query (N-hop Iteration):**

* The process starts with an input query fed into the LLM.

* The LLM uses a pre-defined query structure, such as Tree of Thoughts (ToT) or Graph of Thoughts (GoT).

* The LLM expands on the initial thoughts and performs reasoning iteratively.

* The output is generated after multiple iterations.

* The efficiency of this method is indicated to be lower.
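
The iterative flow above can be sketched as a small beam-style search that counts model calls. This is a simplified illustration rather than ToT or GoT themselves: `multi_query`, `stub_propose`, and `stub_score` are hypothetical names, the two stubs stand in for LLM-backed steps, and only the expansion calls are counted.

```python
from typing import Callable, List, Tuple


def stub_propose(thought: str, i: int) -> str:
    """Hypothetical stand-in for one LLM call that extends a thought."""
    return f"{thought} > option{i}"


def stub_score(thought: str) -> int:
    """Hypothetical stand-in for an evaluator; prefers 'option0' steps."""
    return thought.count("option0")


def multi_query(
    query: str,
    propose: Callable[[str, int], str] = stub_propose,
    score: Callable[[str], int] = stub_score,
    hops: int = 3,
    branch: int = 2,
    keep: int = 2,
) -> Tuple[str, int]:
    """Expand thoughts for a fixed number of hops, keeping the best few each time."""
    frontier: List[str] = [query]
    calls = 0
    for _ in range(hops):
        candidates: List[str] = []
        for thought in frontier:
            for i in range(branch):
                candidates.append(propose(thought, i))
                calls += 1  # every expansion costs one model call
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:keep]
    return frontier[0], calls
```

With the defaults, the three hops cost 2 + 4 + 4 = 10 expansion calls for one answer, which is the efficiency penalty the diagram points to.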

**3. Buffer of Thoughts (BoT):**

* The process starts with an input query fed into the LLM.

* A Problem Distiller extracts the key information from the query.

* Thought Retrieval selects relevant high-level thoughts from the Meta Buffer.

* Instantiated Reasoning applies the retrieved thoughts to the distilled problem.

* The output is generated from the instantiated reasoning.

* Thought Distillation and Update condenses the finished solution into high-level thoughts and writes them back to the Meta Buffer for later queries.

* The accuracy and efficiency of this method are indicated to be higher.
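
The interaction between the components above can be sketched with a toy in-memory buffer. Everything here is an assumption made for illustration: `ThoughtTemplate`, `MetaBuffer`, `distill`, and `solve` are hypothetical names, keyword overlap stands in for whatever retrieval the real system uses, and no model is called.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set, Tuple


@dataclass
class ThoughtTemplate:
    keywords: Set[str]
    plan: str  # a high-level, reusable reasoning outline


@dataclass
class MetaBuffer:
    templates: List[ThoughtTemplate] = field(default_factory=list)

    def retrieve(self, keywords: Set[str]) -> Optional[ThoughtTemplate]:
        """Thought retrieval: return the template with the largest keyword overlap."""
        best = max(self.templates, key=lambda t: len(t.keywords & keywords), default=None)
        if best is not None and best.keywords & keywords:
            return best
        return None

    def update(self, template: ThoughtTemplate) -> None:
        """Thought distillation and update: store a new high-level thought."""
        self.templates.append(template)


def distill(query: str) -> Set[str]:
    """Toy problem distiller: keep lowercase words longer than three characters."""
    words = (w.strip(".,?").lower() for w in query.split())
    return {w for w in words if len(w) > 3}


def solve(query: str, buffer: MetaBuffer) -> Tuple[str, bool]:
    """Distill, retrieve, instantiate, and update the buffer if nothing matched."""
    keywords = distill(query)
    template = buffer.retrieve(keywords)
    reused = template is not None
    if template is None:
        template = ThoughtTemplate(keywords, "generic step-by-step plan")
        buffer.update(template)
    output = f"[{template.plan}] applied to: {query}"  # instantiated reasoning
    return output, reused
```

In this sketch the first query on a topic adds a template to the buffer, and a later related query reuses it instead of rebuilding a plan from scratch.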

### Key Observations

* The Single-Query method is straightforward but may suffer from lower accuracy.

* The Multi-Query method involves iterative reasoning but may be less efficient.

* The Buffer of Thoughts method aims to improve both accuracy and efficiency through thought distillation and updating.

### Interpretation

The diagram presents the three strategies as a progression. Single-Query is the simplest: one manually prompted call, marked as less accurate. Multi-Query tries to improve reasoning by iterating over a pre-defined query structure, and is marked as less efficient. Buffer of Thoughts (BoT) adds a Problem Distiller, a Meta Buffer of high-level thoughts, and a distillation and update step, and is the only strategy marked as improving both accuracy and efficiency. The diagram thus positions BoT as a way to overcome the limitation attached to each of the simpler approaches.