## Diagram: LLM Reasoning Framework Architecture

### Overview

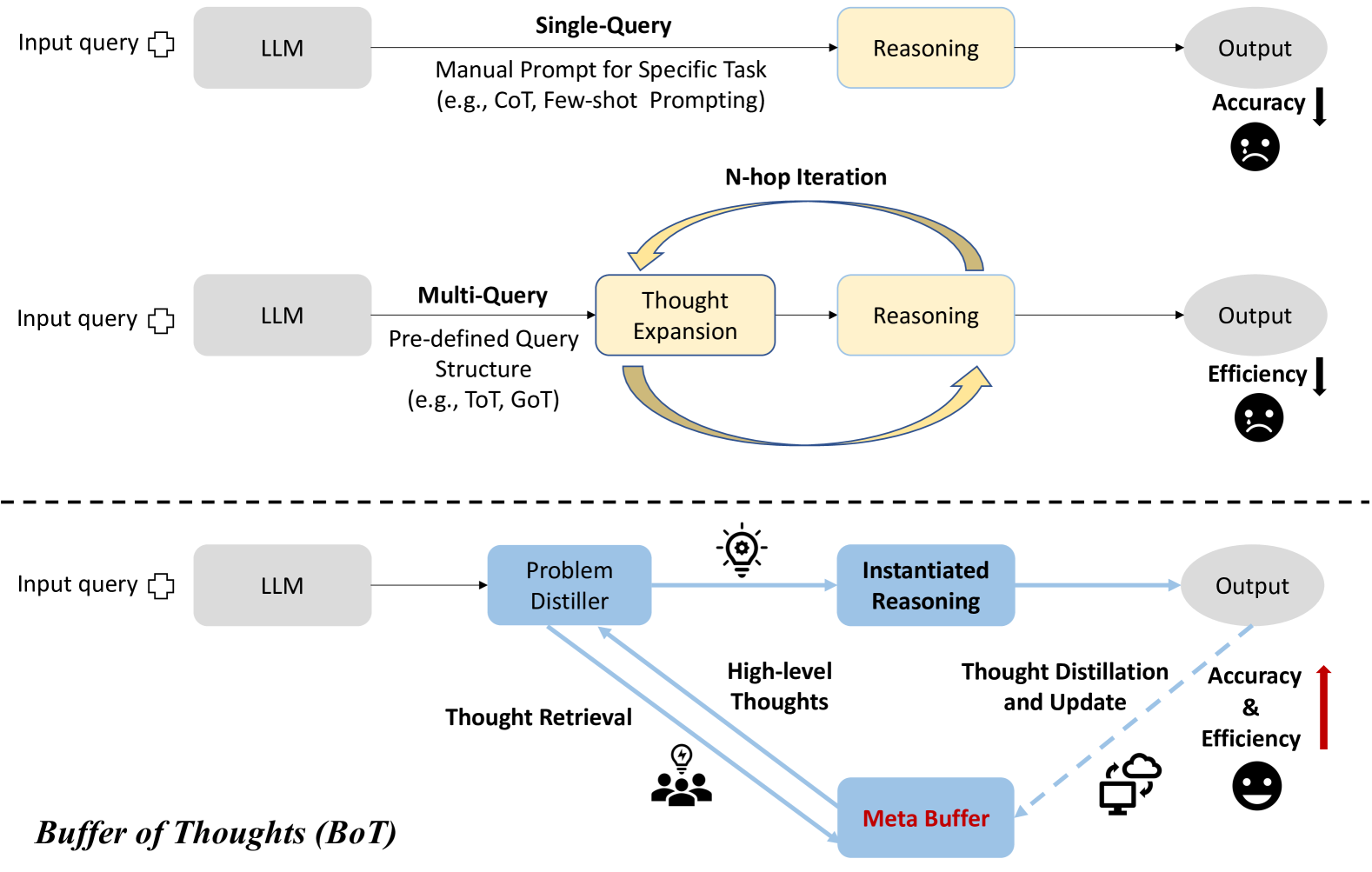

The diagram compares three approaches to using Large Language Models (LLMs) for reasoning tasks, ordered by increasing complexity: Single-Query, Multi-Query, and Buffer of Thoughts (BoT). Each section shows its own components and an evaluation label.

### Components/Axes

1. **Single-Query Section**

   - Input query → LLM → Manual Prompt (CoT/Few-shot) → Reasoning → Output

   - Evaluation: Accuracy (↓ sad face)

2. **Multi-Query Section**

   - Input query → LLM → Pre-defined Query Structure (ToT/GoT) → Thought Expansion → Reasoning (loop)

   - Evaluation: Efficiency (↓ sad face)

3. **Buffer of Thoughts (BoT) Section**

   - Input query → LLM → Problem Distiller → Instantiated Reasoning → Output

   - Meta Buffer ↔ Thought Distillation/Update ↔ Problem Distiller

   - Thought Retrieval from Meta Buffer

   - Evaluation: Accuracy & Efficiency (↑ happy face)
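The Single-Query and Multi-Query flows listed above can be sketched as plain functions around a stubbed model call. This is a minimal illustration under stated assumptions, not the method behind the diagram: `CountingLLM`, `single_query`, `multi_query`, and the `breadth` and `depth` parameters are hypothetical names, and the stub only counts calls so that the difference in query cost is visible.

```python
from dataclasses import dataclass


@dataclass
class CountingLLM:
    """Hypothetical stand-in for a real model: echoes the prompt and counts calls."""
    calls: int = 0

    def __call__(self, prompt: str) -> str:
        self.calls += 1
        return f"answer({prompt})"


def single_query(llm: CountingLLM, query: str) -> str:
    # One manually written prompt (CoT / few-shot style), one model call.
    prompt = f"Let's think step by step.\n{query}"
    return llm(prompt)


def multi_query(llm: CountingLLM, query: str, breadth: int = 3, depth: int = 2) -> str:
    # A pre-defined structure (ToT / GoT style): at each level, expand
    # several candidate thoughts, then loop on one of them.
    current = query
    for _ in range(depth):
        candidates = [llm(f"expand {i}: {current}") for i in range(breadth)]
        current = candidates[0]  # placeholder for a real scoring/selection step
    return current


if __name__ == "__main__":
    one = CountingLLM()
    single_query(one, "What is 17 * 23?")
    many = CountingLLM()
    multi_query(many, "What is 17 * 23?")
    print(one.calls, many.calls)
```

With these toy settings the single-query path issues one model call while the looped path issues `breadth * depth` calls, which is one way to read the efficiency label on the Multi-Query section.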

### Detailed Analysis

- **Single-Query**: Linear flow driven by an explicit manual prompt. The diagram marks accuracy as its weak point (sad face).

- **Multi-Query**: Adds iterative thought expansion over a predefined structure. The diagram marks efficiency as its weak point (sad face), which fits the looped reasoning step.

- **BoT**: Most complex architecture with:

  - Problem Distiller generating high-level thoughts

  - Meta Buffer acting as persistent memory

  - Bidirectional feedback loops for thought refinement

  - Combined evaluation of accuracy and efficiency
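The BoT components listed above can be sketched as a small retrieve-or-store loop. Everything here is an assumption made for illustration: the class and function names (`ThoughtTemplate`, `MetaBuffer`, `distill`, `bot_answer`), the keyword-overlap retrieval rule, and the toy distiller are hypothetical and are not taken from the diagram, which does not specify how retrieval or distillation work internally.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ThoughtTemplate:
    keywords: set[str]
    template: str  # reusable high-level reasoning outline


@dataclass
class MetaBuffer:
    """Persistent store of thought templates (hypothetical structure)."""
    templates: list[ThoughtTemplate] = field(default_factory=list)

    def retrieve(self, distilled: set[str]) -> Optional[ThoughtTemplate]:
        # Thought retrieval: pick the template sharing the most keywords.
        best = max(self.templates, key=lambda t: len(t.keywords & distilled), default=None)
        if best is None or not (best.keywords & distilled):
            return None
        return best

    def update(self, distilled: set[str], template: str) -> None:
        # Thought distillation/update: keep a new template for later reuse.
        self.templates.append(ThoughtTemplate(set(distilled), template))


def distill(query: str) -> set[str]:
    # Toy problem distiller: lowercase words longer than three characters.
    words = (w.strip(".,?").lower() for w in query.split())
    return {w for w in words if len(w) > 3}


def bot_answer(buffer: MetaBuffer, query: str) -> str:
    distilled = distill(query)
    hit = buffer.retrieve(distilled)
    if hit is None:
        # Nothing reusable yet: reason from scratch and store the outline.
        outline = f"solve from scratch: {sorted(distilled)}"
        buffer.update(distilled, outline)
        return outline
    # Instantiated reasoning: fill the retrieved template with this problem.
    return f"{hit.template} | instantiated for {sorted(distilled)}"
```

In this sketch the first call on a new kind of problem populates the buffer, and a later call with overlapping keywords reuses the stored outline rather than adding a new one.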

### Key Observations

1. The evaluation labels shift from a single flagged dimension (accuracy for Single-Query, efficiency for Multi-Query) to both dimensions together for BoT

2. Complexity increases from linear (Single-Query) to cyclical (Multi-Query) to networked (BoT)

3. Meta Buffer introduces persistent memory capabilities absent in earlier approaches

4. The thought distillation/update path lets the Meta Buffer be refreshed over time, which has no counterpart in the two earlier approaches
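The linear, cyclical, and networked shapes noted above can be checked by encoding each flow as a directed edge list. This is an illustrative sketch only: the edge lists are a hand transcription of the Components section, and two edges are assumptions because the diagram description does not pin them down (the Multi-Query loop is taken to run from Reasoning back to Thought Expansion, and thought retrieval is taken to feed Instantiated Reasoning). The helper names `has_cycle` and `branch_nodes` are hypothetical.

```python
SINGLE = [("Input query", "LLM"), ("LLM", "Manual Prompt"),
          ("Manual Prompt", "Reasoning"), ("Reasoning", "Output")]

MULTI = [("Input query", "LLM"), ("LLM", "Query Structure"),
         ("Query Structure", "Thought Expansion"),
         ("Thought Expansion", "Reasoning"),
         ("Reasoning", "Thought Expansion")]  # assumed target of the loop

BOT = [("Input query", "LLM"), ("LLM", "Problem Distiller"),
       ("Problem Distiller", "Instantiated Reasoning"),
       ("Instantiated Reasoning", "Output"),
       ("Meta Buffer", "Thought Distillation/Update"),
       ("Thought Distillation/Update", "Meta Buffer"),
       ("Thought Distillation/Update", "Problem Distiller"),
       ("Problem Distiller", "Thought Distillation/Update"),
       ("Meta Buffer", "Instantiated Reasoning")]  # assumed retrieval edge


def has_cycle(edges):
    """Depth-first search with three colours to detect a directed cycle."""
    graph = {}
    for src, dst in edges:
        graph.setdefault(src, []).append(dst)
        graph.setdefault(dst, [])
    WHITE, GREY, BLACK = 0, 1, 2
    color = {node: WHITE for node in graph}

    def visit(node):
        color[node] = GREY
        for nxt in graph[node]:
            if color[nxt] == GREY:
                return True  # back edge to a node on the current path
            if color[nxt] == WHITE and visit(nxt):
                return True
        color[node] = BLACK
        return False

    return any(color[n] == WHITE and visit(n) for n in graph)


def branch_nodes(edges):
    """Nodes connected to more than two distinct neighbours."""
    neighbours = {}
    for a, b in edges:
        neighbours.setdefault(a, set()).add(b)
        neighbours.setdefault(b, set()).add(a)
    return sorted(n for n, ns in neighbours.items() if len(ns) > 2)
```

Under these assumed edges, only the Single-Query list is acyclic, and only the BoT list has nodes with more than two neighbours, which matches the linear, cyclical, and networked reading.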

### Interpretation

The diagram presents a progression from basic LLM prompting to a more elaborate architecture with its own memory. The Meta Buffer in the BoT approach points toward:

1. **Memory-augmented reasoning**: Thoughts stored in the Meta Buffer can be retrieved for later problems instead of being rebuilt each time

2. **Self-improving systems**: The distillation/update loop lets stored thoughts be refined as new problems are processed

3. **Multi-objective optimization**: BoT is marked as improving accuracy and efficiency together, whereas each earlier approach is flagged on one of the two

The sad and happy faces reinforce this reading: Single-Query is marked down on accuracy, Multi-Query is marked down on efficiency, and BoT is marked up on both. The BoT architecture appears to address LLM limitations in context retention and reasoning depth through its memory buffer and distillation mechanisms.