
## Diagram: Data Partitioning and Flow in a Federated Learning System

### Overview

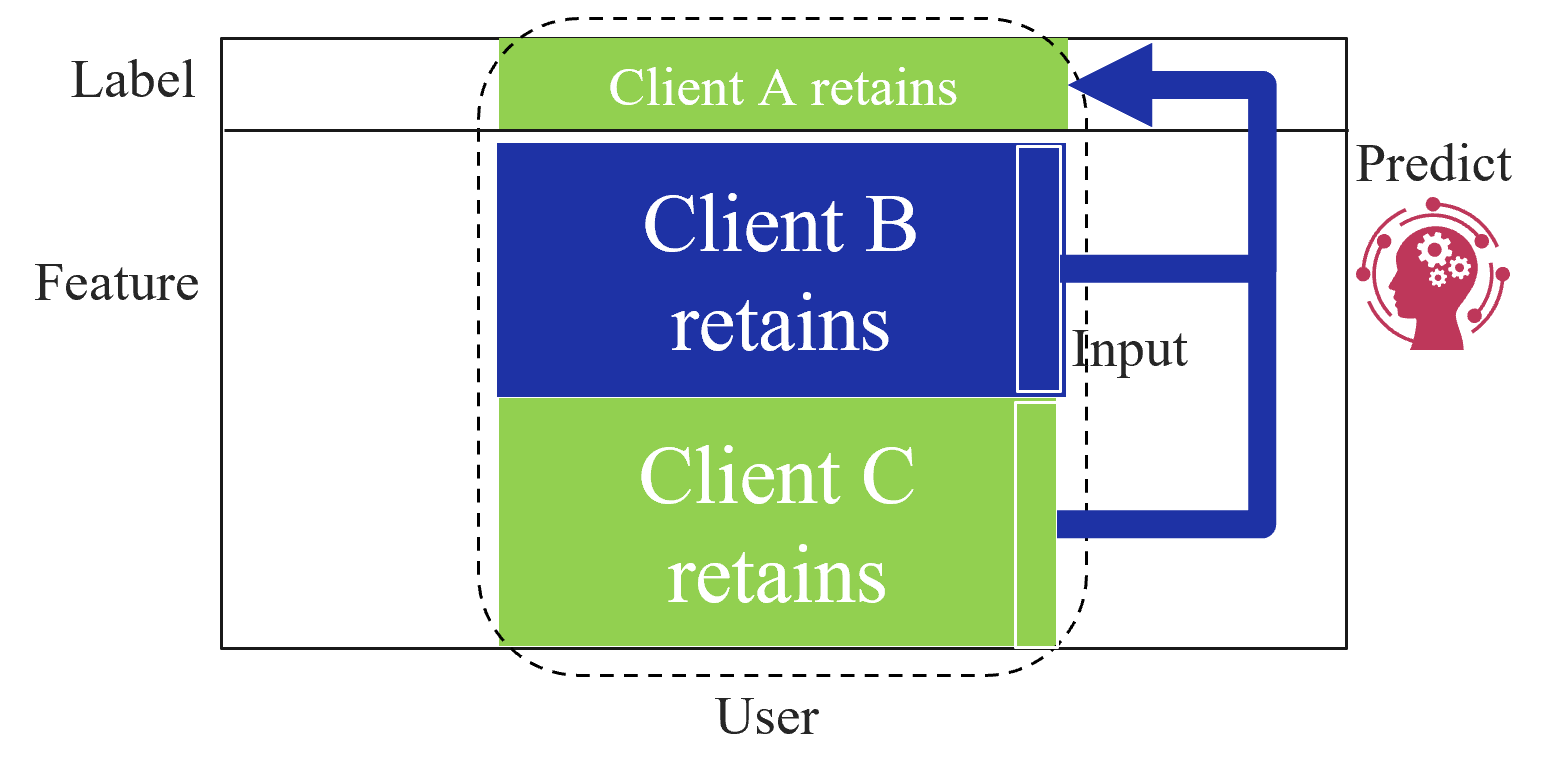

The image is a conceptual diagram illustrating a data partitioning and prediction workflow, likely within a federated or privacy-preserving machine learning context. It shows how different data components (Label, Feature, User) are retained by separate clients (A, B, C) and how selected data flows to a prediction model.

### Components

The diagram is structured within a large rectangular boundary. The primary components are:

1. **Left-Side Categorical Labels:** Three text labels are aligned vertically along the left edge, defining data categories:

   * `Label` (Top)

   * `Feature` (Middle)

   * `User` (Bottom)

2. **Central Data Blocks (Clients):** Three colored rectangular blocks are stacked vertically in the center, each representing a client's retained data. They are enclosed by a dashed, rounded rectangular line.

   * **Top Block (Green):** Contains the text `Client A retains`. This block is positioned adjacent to the `Label` category.

   * **Middle Block (Blue):** Contains the text `Client B retains`. This block is positioned adjacent to the `Feature` category.

   * **Bottom Block (Green):** Contains the text `Client C retains`. This block is positioned adjacent to the `User` category.

3. **Right-Side Prediction Component:**

   * The text `Predict` is located in the upper-right area.

   * Below the text is a red icon depicting a human head silhouette with gears inside, surrounded by a circular network of nodes and lines, symbolizing an AI or machine learning model.

4. **Data Flow Arrows:**

   * A thick, dark blue arrow originates from the right side of the `Client B retains` block. It travels right, then turns upward, and finally points left into the `Client A retains` block.

   * A second thick, dark blue arrow originates from the right side of the `Client C retains` block. It travels right and then turns upward to join the path of the first arrow before pointing into the `Client A retains` block.

   * The label `Input` is placed near the point where the two arrows from Clients B and C converge.

### Detailed Analysis

* **Spatial Grounding & Color Matching:**

  * The `Client A retains` block (green) is at the top-center, within the dashed boundary.

  * The `Client B retains` block (blue) is in the center, within the dashed boundary.

  * The `Client C retains` block (green) is at the bottom-center, within the dashed boundary.

  * The two data flow arrows share the same dark blue color and style. Because the arrow from the blue `Client B` block and the arrow from the green `Client C` block are visually identical, they most likely represent the same type of data flow ("Input").

  * The `Predict` icon is positioned to the right of the central blocks, outside the dashed boundary.

* **Flow Description:**

  1. Data from `Client B` (Feature data) and `Client C` (User data) is sent as `Input`.

  2. This combined input flows to the right, toward the `Predict` model.

  3. The arrow's final direction points into the `Client A retains` block (Label data), suggesting that the prediction is made *against* or *in conjunction with* the labels held by Client A.
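The three steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the system the diagram depicts: the class names `InputProvider` and `LabelHolder`, the weights, the user IDs, and the rule that sums partial scores are all hypothetical. The only idea taken from the diagram is that Clients B and C send a derived `Input` while Client A keeps the labels.

```python
from dataclasses import dataclass


@dataclass
class InputProvider:
    """A client (B or C in the diagram) that keeps its raw rows local."""
    rows: dict[str, list[float]]  # user ID -> raw values, never returned directly
    weights: list[float]          # hypothetical local weights

    def make_input(self, user_id: str) -> float:
        # Only this derived partial score leaves the client.
        return sum(w * x for w, x in zip(self.weights, self.rows[user_id]))


def predict(partial_scores: list[float]) -> int:
    """Combine the clients' inputs into a binary prediction (assumed rule)."""
    return 1 if sum(partial_scores) > 0 else 0


@dataclass
class LabelHolder:
    """Client A: holds the labels and scores predictions against them."""
    labels: dict[str, int]

    def accuracy(self, predictions: dict[str, int]) -> float:
        hits = sum(predictions[u] == y for u, y in self.labels.items())
        return hits / len(self.labels)


# Hypothetical data for two users shared by all three clients.
client_b = InputProvider(rows={"u1": [2.0, 1.0], "u2": [0.0, 4.0]}, weights=[0.5, -0.25])
client_c = InputProvider(rows={"u1": [1.0], "u2": [-1.0]}, weights=[1.0])
client_a = LabelHolder(labels={"u1": 1, "u2": 0})

predictions = {
    u: predict([client_b.make_input(u), client_c.make_input(u)])
    for u in client_a.labels
}
print(predictions, client_a.accuracy(predictions))
```

In this sketch the prediction step sees only one number per client per user, and the comparison with the labels happens on Client A's side, which mirrors the arrow ending at the `Client A retains` block.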

### Key Observations

* **Data Siloing:** The dashed line around the three client blocks visually emphasizes that each client's data is retained locally and is separate.

* **Asymmetric Data Roles:** The clients hold different types of data: Client A has labels, Client B has features, and Client C has user data. This resembles **vertical federated learning**, in which different parties hold different attributes for the same set of users and, typically, only one party holds the labels.

* **Prediction Mechanism:** The model (`Predict`) does not appear to access the raw data from Clients B and C directly. Instead, it receives an "Input" derived from their data. The prediction output is associated with or applied to the labels held by Client A.

* **Color Coding:** The use of green for Clients A and C and blue for Client B may imply a grouping or relationship, though the specific meaning is not defined in the diagram.
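To make the vertical partitioning idea concrete, the sketch below shows three parties holding different columns for overlapping user IDs, and the alignment step that finds the users they have in common. All records, variable names, and the helper `shared_user_ids` are hypothetical. A plain set intersection is used here only for readability; real deployments often rely on a privacy-preserving technique such as private set intersection so that non-shared IDs are not revealed.

```python
# Hypothetical records: each party holds different columns keyed by user ID.
labels_at_a = {"u1": 1, "u2": 0, "u3": 1}
features_at_b = {"u1": [0.2, 0.7], "u2": [0.9, 0.1], "u4": [0.5, 0.5]}
users_at_c = {
    "u1": {"region": "north"},
    "u2": {"region": "south"},
    "u3": {"region": "east"},
}


def shared_user_ids(*parties: dict) -> list[str]:
    """Return the IDs present at every party, in sorted order."""
    common = set(parties[0])
    for party in parties[1:]:
        common &= set(party)
    return sorted(common)


aligned = shared_user_ids(labels_at_a, features_at_b, users_at_c)
print(aligned)  # ['u1', 'u2']
```

Only the aligned users can take part in joint training or prediction, because those are the only rows for which a label, a feature vector, and a user record all exist.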

### Interpretation

This diagram illustrates the core principle of **privacy-preserving collaborative machine learning**: useful predictions can be generated without centralizing sensitive data.

* **What it demonstrates:** The system allows multiple parties (Clients A, B, C) to contribute to a machine learning task while keeping their primary data assets (labels, features, user info) private and local. Client A possesses the ground truth (labels), while Clients B and C possess the explanatory data (features and user attributes). The "Input" arrow likely represents processed data, gradients, or encrypted parameters rather than the raw data itself, shared in order to train a model that can predict Client A's labels.

* **Relationships:** The relationship is transactional and purpose-built for the prediction task. Clients B and C are data providers for the input features, and Client A is the data owner for the target labels. The `Predict` model may act as a neutral arbiter or as a jointly trained entity; the diagram does not specify which.

* **Notable Implications:** An architecture of this kind can reduce privacy risk and can support compliance with data protection regulations (like GDPR), provided that raw data does not leave each client's environment. One notable detail of the flow is that the final arrow points *to* Client A's data block, suggesting the prediction result is delivered to or evaluated against the label holder, completing the learning loop. The diagram abstracts away the cryptographic protocols (like secure multi-party computation or homomorphic encryption) that would typically be needed to make this flow secure in practice.
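As a rough illustration of the kind of protocol the diagram leaves out, the sketch below uses additive secret sharing, one of the basic building blocks of secure multi-party computation. It is a toy example under stated assumptions, not the mechanism of any specific system: the modulus, the values 42 and 100, and the function names `make_shares` and `reconstruct` are chosen for this sketch only.

```python
import secrets

MODULUS = 2**61 - 1  # arbitrary large modulus chosen for this sketch


def make_shares(value: int, n_parties: int) -> list[int]:
    """Split value into n_parties random shares that sum to value mod MODULUS."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % MODULUS)
    return shares


def reconstruct(shares: list[int]) -> int:
    """Recover the shared value by adding all shares mod MODULUS."""
    return sum(shares) % MODULUS


# Clients B and C each hold a private integer (hypothetical values).
b_value, c_value = 42, 100
b_shares = make_shares(b_value, 2)
c_shares = make_shares(c_value, 2)

# Each share holder adds the shares it received. A single holder's sum looks
# random and reveals neither 42 nor 100 on its own.
holder_sums = [(b_shares[i] + c_shares[i]) % MODULUS for i in range(2)]

total = reconstruct(holder_sums)
print(total)  # 142
```

Only the combined result is recovered, which is the property a secure version of the `Input` flow would need: the aggregate is usable for prediction while the individual contributions stay hidden.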