## Neural Network Architecture Diagram: Residual Block with Widening Factor and Branching

### Overview

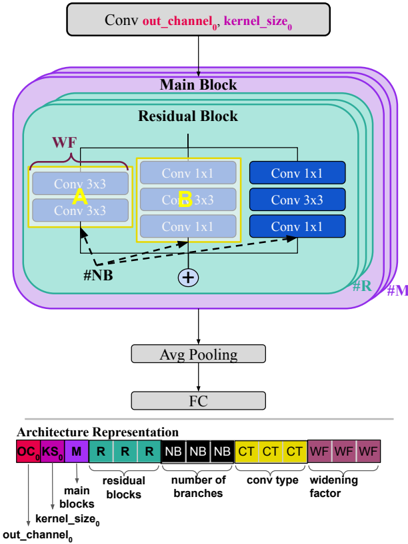

The image is a technical diagram illustrating a convolutional neural network (CNN) architecture. It details a hierarchical structure starting from an initial convolution layer, passing through a complex "Main Block" composed of multiple "Residual Blocks," and concluding with pooling and a fully connected (FC) layer. A key feature is the use of a "widening factor" (WF) and multiple branches (#NB) within the residual blocks. An "Architecture Representation" bar at the bottom provides a compact, color-coded encoding of the network's hyperparameters.

### Components

The diagram is organized vertically, representing the forward pass of data.

**1. Top Section (Input Processing):**

* A gray rectangular box labeled: `Conv out_channel₀ kernel_size₀`. This represents the initial convolutional layer with specified output channels and kernel size.

**2. Middle Section (Core Architecture - Main Block):**

* **Main Block:** A large, purple-outlined rounded rectangle containing the core processing units. It is labeled "Main Block" at the top.

* **Residual Block:** Inside the Main Block, a teal-colored rounded rectangle represents a single "Residual Block." The labels `#R` and `#M` on the right edge indicate repetition: `#R` is the number of residual blocks stacked inside a main block, and `#M` is the number of main blocks, matching the `R` and `M` segments of the architecture representation bar.

* **Internal Structure of a Residual Block:**

    * **Left Path (WF Branch):** A yellow-highlighted section labeled `WF` (Widening Factor). It contains two sequential `Conv 3x3` layers.

    * **Middle Path (Standard Branch):** A blue-highlighted section containing a sequence: `Conv 1x1` -> `Conv 3x3` -> `Conv 1x1`.

    * **Right Path (Alternative Branch):** A second blue-highlighted section with the same sequence as the middle path: `Conv 1x1` -> `Conv 3x3` -> `Conv 1x1`. The middle and right paths appear to be parallel branches.

    * **Branching Indicator:** A dashed arrow labeled `#NB` (Number of Branches) points from the output of the left (WF) path to the inputs of the middle and right paths, indicating a splitting or branching operation.

    * **Combination:** A circle with a `+` symbol indicates the element-wise addition (skip connection) of the outputs from the different branches.
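The combination step can be sketched in plain Python. This is a minimal illustration rather than the diagram's actual implementation: `combine_branches` and the toy branch functions are hypothetical names, tensors are stood in for by flat lists of floats, and the sketch assumes the block input itself is one of the summed terms (the usual residual form), which the diagram description does not state explicitly.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Branch = Callable[[Vector], Vector]


def combine_branches(x: Vector, branches: Sequence[Branch]) -> Vector:
    """Element-wise sum of the input (skip path) and every branch output."""
    out = list(x)  # assumption: the identity path takes part in the sum
    for branch in branches:
        y = branch(x)
        if len(y) != len(out):
            raise ValueError("branch output must match the input shape")
        out = [a + b for a, b in zip(out, y)]
    return out


# Two toy branches standing in for the convolution sequences (#NB = 2).
def double(v: Vector) -> Vector:
    return [2.0 * a for a in v]


def shift(v: Vector) -> Vector:
    return [a + 1.0 for a in v]


result = combine_branches([1.0, 2.0], [double, shift])
print(result)
```

Because the addition is element-wise, every branch has to return a tensor of the same shape as the skip path, which is one reason a closing `Conv 1x1` is convenient in each branch.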

**3. Bottom Section (Output Processing):**

* A gray box labeled `Avg Pooling`.

* A final gray box labeled `FC` (Fully Connected layer).

**4. Architecture Representation Bar (Bottom of Image):**

* A horizontal bar divided into colored segments, each with a label and a corresponding explanatory text below.

* **Segments (from left to right):**

    * `OC` (Red): `out_channel₀`

    * `KS` (Purple): `kernel_size₀`

    * `M` (Blue): `main blocks`

    * `R` (Teal, repeated 3x): `residual blocks`

    * `NB` (Green, repeated 3x): `number of branches`

    * `CT` (Yellow, repeated 3x): `conv type`

    * `WF` (Brown, repeated 3x): `widening factor`
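To make the encoding concrete, the bar can be read as a flat vector of 15 integers. The sketch below decodes such a vector under two assumptions that the diagram does not confirm: the segments are stored in the order shown (`OC`, `KS`, `M`, then three slots each of `R`, `NB`, `CT`, `WF`), and slot `i` of each repeated field configures main block `i`. The names `StageConfig`, `ArchConfig`, and `decode` are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence

SLOTS = 3  # the bar draws R, NB, CT and WF three times each


@dataclass
class StageConfig:
    residual_blocks: int   # R
    num_branches: int      # NB
    conv_type: int         # CT, kept as an opaque ID (the diagram does not enumerate values)
    widening_factor: int   # WF


@dataclass
class ArchConfig:
    out_channel0: int      # OC
    kernel_size0: int      # KS
    main_blocks: int       # M
    stages: List[StageConfig]


def decode(vector: Sequence[int]) -> ArchConfig:
    """Split a flat vector laid out as [OC, KS, M, R*3, NB*3, CT*3, WF*3]."""
    expected = 3 + 4 * SLOTS
    if len(vector) != expected:
        raise ValueError(f"expected {expected} entries, got {len(vector)}")
    oc, ks, m = vector[:3]
    if not 1 <= m <= SLOTS:
        raise ValueError(f"M must be between 1 and {SLOTS}, got {m}")
    rest = list(vector[3:])
    r, nb, ct, wf = (rest[i * SLOTS:(i + 1) * SLOTS] for i in range(4))
    # Assumption: slot i configures main block i; slots beyond M are ignored.
    stages = [StageConfig(r[i], nb[i], ct[i], wf[i]) for i in range(m)]
    return ArchConfig(oc, ks, m, stages)


cfg = decode([32, 3, 2,  1, 2, 3,  2, 4, 1,  0, 1, 0,  2, 3, 1])
print(cfg.stages)
```

With `M = 2` in this example, only the first two slots of each repeated field are used. Whether unused slots are ignored, masked, or disallowed is a design choice the diagram leaves open.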

### Detailed Analysis

* **Data Flow:** The flow is top-down: `Initial Conv` -> `Main Block` (repeated `#M` times, each containing `#R` residual blocks) -> `Avg Pooling` -> `FC Layer`.

* **Residual Block Complexity:** Each residual block is a multi-branch structure rather than a simple chain. The leftmost path is associated with the widening factor `WF`, and its output feeds `#NB` parallel branches (the diagram shows two, but `#NB` is a variable). The convolution sequence inside the branches is selected by the conv type (`CT`).

* **Hyperparameter Encoding:** The "Architecture Representation" bar is a crucial key. It shows that the network's configuration is defined by a sequence of hyperparameters: the initial output channels (`OC`), kernel size (`KS`), number of main blocks (`M`), number of residual blocks per main block (`R`), number of branches within a residual block (`NB`), the type of convolution sequence used in each branch (`CT`), and the widening factor applied (`WF`). The repetition of `R`, `NB`, `CT`, and `WF` suggests these can vary across different stages or blocks of the network.
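The data flow and nesting described above can be sketched by expanding a configuration into an ordered list of layer names. This is an illustration only: `CONV_TYPES`, `block_layers`, and `expand` are hypothetical names, the two conv-type keys are labels chosen here for the two sequences visible in the diagram, and for brevity the same `R`, `NB`, and `CT` are used for every main block.

```python
from typing import Dict, List

# Assumption: CT selects one of the two convolution sequences drawn in the diagram.
CONV_TYPES: Dict[str, List[str]] = {
    "two_3x3": ["Conv 3x3", "Conv 3x3"],
    "1x1_3x3_1x1": ["Conv 1x1", "Conv 3x3", "Conv 1x1"],
}


def block_layers(num_branches: int, conv_type: str) -> List[str]:
    """Layers of one residual block: NB parallel branches, then the '+' node."""
    layers: List[str] = []
    for b in range(num_branches):
        layers += [f"branch{b}: {name}" for name in CONV_TYPES[conv_type]]
    layers.append("add (+)")
    return layers


def expand(main_blocks: int, residual_blocks: int,
           num_branches: int, conv_type: str) -> List[str]:
    """Initial Conv -> M main blocks of R residual blocks -> Avg Pooling -> FC."""
    layers = ["Conv out_channel0 kernel_size0"]
    for m in range(main_blocks):
        for r in range(residual_blocks):
            layers += [f"M{m}.R{r}.{name}"
                       for name in block_layers(num_branches, conv_type)]
    layers += ["Avg Pooling", "FC"]
    return layers


for line in expand(main_blocks=2, residual_blocks=2,
                   num_branches=2, conv_type="1x1_3x3_1x1"):
    print(line)
```

The branches are listed one after another only because the output is a flat list; in the network they run in parallel and meet at the `+` node.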

### Key Observations

1. **Multi-Branch Topology:** The architecture moves beyond a simple sequential stack of layers, incorporating parallel branches within each residual unit. This is a form of multi-path design.

2. **Explicit Widening Factor (WF):** The WF is applied to a dedicated initial branch before splitting, which likely increases the channel dimensionality to provide richer features for the subsequent parallel branches.

3. **Variable Branch Configuration:** The labels `#NB` and `CT` indicate that both the number of parallel paths and their internal layer compositions are configurable hyperparameters, allowing for significant architectural flexibility.

4. **Compact Notation:** The architecture representation bar provides a dense, symbolic way to describe a complex, potentially deep network, which is useful for research papers and configuration files.

### Interpretation

This diagram represents a parameterized CNN architecture that combines several established concepts:

* **Residual Learning:** The core `+` addition signifies a residual connection, which helps in training very deep networks by mitigating the vanishing gradient problem.

* **Multi-Path/Inception-like Design:** The parallel branches (`#NB`), whose convolution sequence is set by the conv type (`CT`), resemble the Inception module philosophy of computing several feature transformations in parallel within a single block and then merging them.

* **Controlled Model Capacity:** The hyperparameters `WF` (widening factor) and `NB` (number of branches) are explicit knobs to control the model's width and representational power. Increasing `WF` or `NB` would typically increase the model's capacity and computational cost.

* **Modular and Hierarchical Design:** The nesting of `Residual Block` within `Main Block` suggests a hierarchical organization, where groups of residual blocks form higher-level functional units. This is common in state-of-the-art architectures for tasks like image classification.
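The capacity claim can be checked with a rough weight count. The sketch below assumes a branch of the form `Conv 1x1` -> `Conv 3x3` -> `Conv 1x1` whose inner width is the block width multiplied by `WF`, and it ignores biases and normalization layers. The diagram does not specify where `WF` is applied, so this channel layout and the function names are assumptions made for illustration.

```python
def conv_weights(c_in: int, c_out: int, k: int) -> int:
    """Weight count of a k x k convolution without bias."""
    return c_in * c_out * k * k


def branch_weights(channels: int, widening_factor: int) -> int:
    """Conv 1x1 -> Conv 3x3 -> Conv 1x1 with an inner width of channels * WF."""
    inner = channels * widening_factor  # assumption: WF scales the inner width
    return (conv_weights(channels, inner, 1)
            + conv_weights(inner, inner, 3)
            + conv_weights(inner, channels, 1))


def block_weights(channels: int, num_branches: int, widening_factor: int) -> int:
    """All NB branches of one residual block; the '+' node adds no weights."""
    return num_branches * branch_weights(channels, widening_factor)


for nb in (1, 2, 4):
    for wf in (1, 2, 4):
        print(f"NB={nb} WF={wf} weights={block_weights(16, nb, wf)}")
```

Under these assumptions the count grows linearly in `NB` and roughly quadratically in `WF`, because the `Conv 3x3` term scales with the square of the inner width.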

The architecture appears designed for a balance between depth (via stacked residual blocks), width (via WF), and feature diversity (via multi-branch `CT`), aiming for high performance on complex pattern recognition tasks. The explicit encoding at the bottom suggests this diagram is from a research context where precise architectural specifications are critical for reproducibility.