## Line Chart: Model Performance Metrics vs. Iteration Steps

### Overview

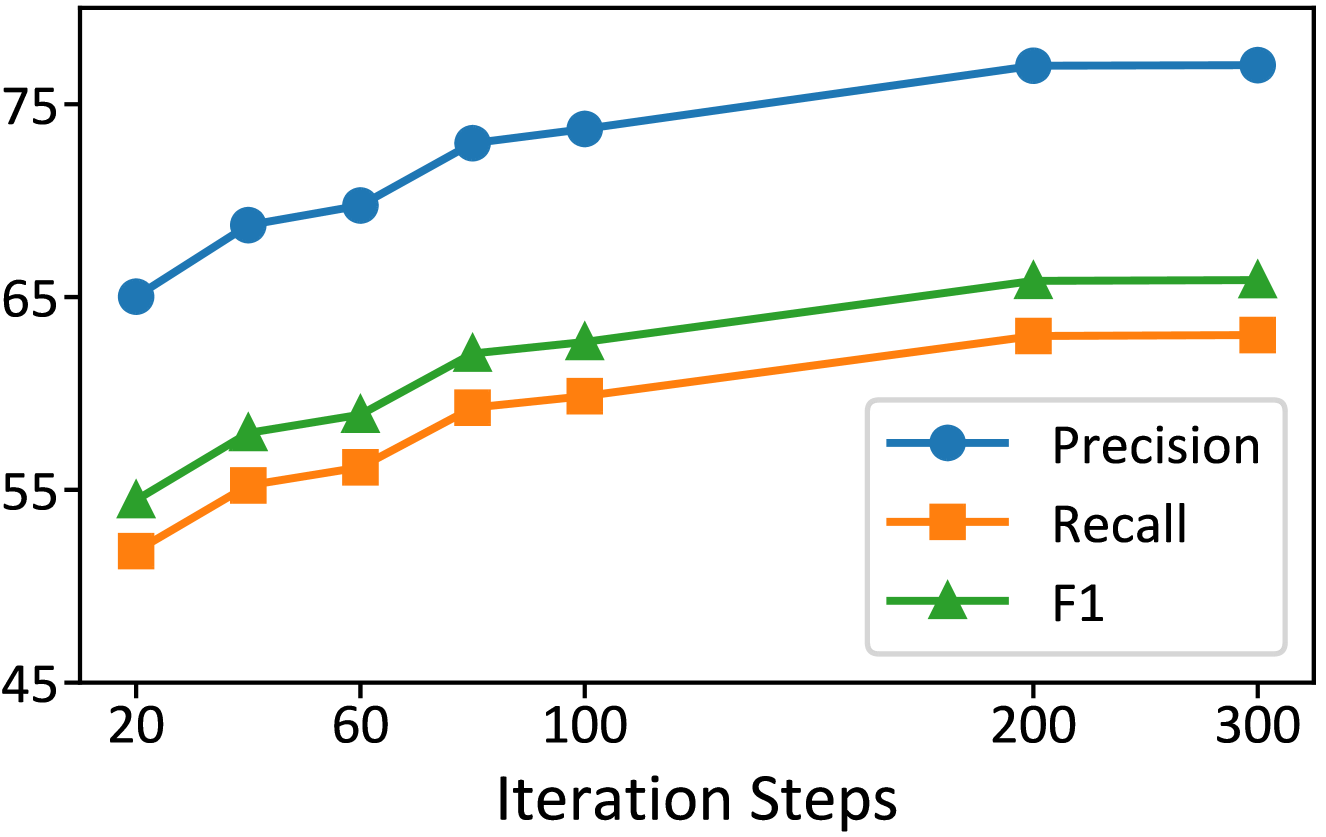

The image is a line chart showing a machine learning model's performance on three metrics (Precision, Recall, and F1 score) as a function of training iteration steps. All three metrics improve with more iterations and then plateau.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:**
    * **Label:** "Iteration Steps"
    * **Scale:** Linear scale with major tick marks at 20, 60, 100, 200, and 300. The tick values are not evenly spaced: the interval is 40 steps up to 100 and 100 steps afterward.

* **Y-Axis:**
    * **Scale:** Linear scale with major tick marks at 45, 55, 65, and 75. The axis appears to represent a percentage or score.

* **Legend:**
    * **Position:** Bottom-right corner of the plot area.
    * **Entries:**
        * Blue line with circle markers: **Precision**
        * Orange line with square markers: **Recall**
        * Green line with triangle markers: **F1**

### Detailed Analysis

**Data Series and Trends:**

1. **Precision (Blue line, circle markers):**
    * **Trend:** Shows a steady, strong upward slope from the start, with the rate of increase slowing after 100 steps and plateauing after 200 steps.
    * **Approximate Data Points:**
        * At 20 steps: ~65
        * At 60 steps: ~69
        * At 100 steps: ~73
        * At 200 steps: ~76
        * At 300 steps: ~76 (plateau)

2. **Recall (Orange line, square markers):**
    * **Trend:** Shows a consistent upward slope, similar in shape to Precision but at a lower absolute value. It also plateaus after 200 steps.
    * **Approximate Data Points:**
        * At 20 steps: ~52
        * At 60 steps: ~56
        * At 100 steps: ~60
        * At 200 steps: ~63
        * At 300 steps: ~63 (plateau)

3. **F1 (Green line, triangle markers):**
    * **Trend:** Lies between Precision and Recall at every point, which is consistent with F1 being the harmonic mean of the two (a harmonic mean always falls between its inputs). It shows steady growth and plateaus after 200 steps.
    * **Approximate Data Points:**
        * At 20 steps: ~54
        * At 60 steps: ~58
        * At 100 steps: ~62
        * At 200 steps: ~65
        * At 300 steps: ~65 (plateau)
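As a quick sanity check on the approximate values above, the sketch below recomputes F1 as the harmonic mean of the listed Precision and Recall and compares it with the F1 values read from the chart. The constant names and the helpers `harmonic_f1` and `compare_f1` are illustrative, and every number is an approximate chart reading rather than exact data.

```python
# Approximate values read from the chart (not exact data).
STEPS = [20, 60, 100, 200, 300]
PRECISION = [65, 69, 73, 76, 76]
RECALL = [52, 56, 60, 63, 63]
F1_READ = [54, 58, 62, 65, 65]


def harmonic_f1(precision: float, recall: float) -> float:
    """F1 as the harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def compare_f1():
    """Return (step, recomputed F1, chart F1, difference) per checkpoint."""
    rows = []
    for step, p, r, f1 in zip(STEPS, PRECISION, RECALL, F1_READ):
        recomputed = harmonic_f1(p, r)
        rows.append((step, round(recomputed, 1), f1, round(recomputed - f1, 1)))
    return rows


if __name__ == "__main__":
    for row in compare_f1():
        print(row)
```

With these readings, the recomputed F1 is roughly 4 points higher than the plotted F1 at every checkpoint. The gap could come from reading error, or from the metrics being averaged per class (macro-averaging), in which case the plotted F1 need not equal the harmonic mean of the plotted Precision and Recall. The chart alone does not say which.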

### Key Observations

* **Performance Hierarchy:** Precision is consistently the highest metric, followed by F1, with Recall being the lowest throughout the training process.

* **Convergence Point:** All three metrics show a clear plateau beginning at approximately 200 iteration steps, with negligible improvement between 200 and 300 steps.

* **Growth Pattern:** The largest gains for all metrics occur between 20 and 100 iteration steps (about 8 points over 80 steps). Improvement slows between 100 and 200 steps (about 3 points over 100 steps) and stops thereafter.

* **Metric Relationship:** The F1 score line is positioned between the Precision and Recall lines at every data point, which is consistent with its role as a metric that combines the two.
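The observations above can be checked against the approximate readings with a few lines of code. This is a minimal sketch: the `SERIES` dictionary and the helpers `gain_per_step` and `hierarchy_holds` are hypothetical names, and the values are the approximate data points listed in the Detailed Analysis, not exact measurements.

```python
# Approximate values read from the chart (not exact data).
STEPS = [20, 60, 100, 200, 300]
SERIES = {
    "Precision": [65, 69, 73, 76, 76],
    "Recall": [52, 56, 60, 63, 63],
    "F1": [54, 58, 62, 65, 65],
}


def gain_per_step(values, steps=STEPS):
    """Average gain per iteration step between consecutive checkpoints."""
    return [
        (values[i + 1] - values[i]) / (steps[i + 1] - steps[i])
        for i in range(len(steps) - 1)
    ]


def hierarchy_holds(series=SERIES):
    """True if Precision > F1 > Recall at every checkpoint."""
    return all(
        p > f > r
        for p, f, r in zip(series["Precision"], series["F1"], series["Recall"])
    )


if __name__ == "__main__":
    print("Precision > F1 > Recall everywhere:", hierarchy_holds())
    for name, values in SERIES.items():
        print(name, [round(g, 3) for g in gain_per_step(values)])
```

Normalizing by interval length matters here because the checkpoints are unevenly spaced. For these readings, every series gains about 0.1 points per step up to 100 steps, about 0.03 points per step between 100 and 200 steps, and nothing between 200 and 300 steps.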

### Interpretation

This chart demonstrates the learning curve of a classification model. The data suggests that:

1. **Training Effectiveness:** The model is successfully learning from the data, as all performance metrics improve with more training iterations.

2. **Diminishing Returns:** Returns diminish after about 100 iteration steps and vanish by 200. Training from 200 to 300 steps yields no visible improvement in any of the three metrics, which suggests the model has converged.

3. **Model Bias:** The consistent gap where Precision > Recall suggests the model is better at being correct when it makes a positive prediction (high precision) than at finding all actual positive instances (lower recall). This could indicate class imbalance in the data, or a decision threshold or training setup that favors precision.

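4. **Optimal Training Duration:** For efficiency, training could be stopped at about 200 steps without sacrificing performance, saving computational resources. Because the chart has no data points between 100 and 200 steps, the plateau may begin somewhat earlier than 200.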
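One way to turn the stopping-point reasoning above into a rule is to look for the earliest checkpoint that no later checkpoint improves on by a meaningful margin. The sketch below assumes a hypothetical helper `first_plateau_step` and an arbitrary threshold `min_gain=0.5`; neither comes from the chart, and the F1 values are the approximate readings listed earlier.

```python
# Approximate F1 values read from the chart (not exact data).
STEPS = [20, 60, 100, 200, 300]
F1 = [54, 58, 62, 65, 65]


def first_plateau_step(steps, values, min_gain=0.5):
    """Return the earliest checkpoint that no later checkpoint beats by
    at least `min_gain`, or None if the metric is still improving.

    `min_gain` is an assumed tolerance, not a value from the chart.
    """
    for i in range(len(steps) - 1):
        best_later = max(values[i + 1:])
        if best_later - values[i] < min_gain:
            return steps[i]
    return None


if __name__ == "__main__":
    print("Plateau begins at step:", first_plateau_step(STEPS, F1))
```

On these readings the function returns 200. The result is only as fine-grained as the checkpoints: with measurements at 100 and 200 steps and nothing in between, the true onset of the plateau could lie anywhere in that interval.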