# Technical Document Extraction: Neural Network Architecture Diagram

This document transcribes and analyzes a technical diagram of a multi-block neural network architecture built from 1D and 2D Rational Units.

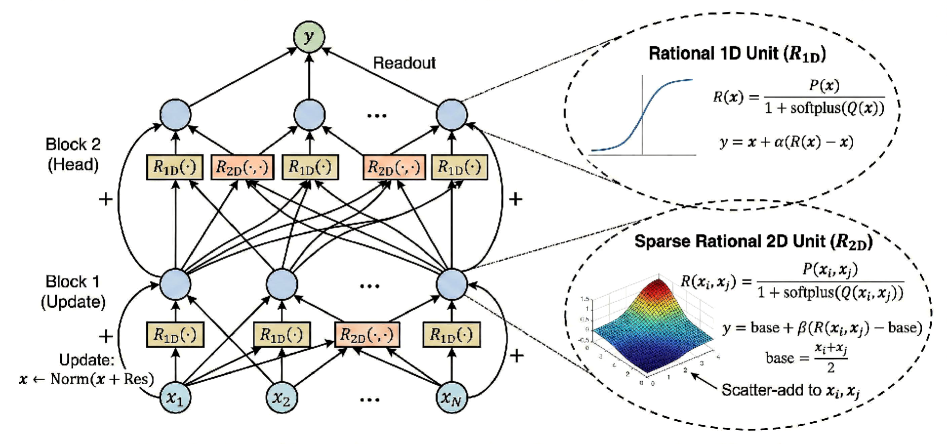

## 1. High-Level Architecture Overview

The image depicts a hierarchical neural network structure organized into sequential processing blocks, culminating in a readout layer. The flow of data is bottom-up, with residual connections (indicated by "+" symbols and curved arrows) bypassing the functional units within each block.

### Component Segmentation

* **Input Layer:** Represented by nodes $x_1, x_2, \dots, x_N$.

* **Block 1 (Update):** The first processing stage involving 1D and 2D rational functions.

* **Block 2 (Head):** The second processing stage, mirroring the structure of Block 1 but leading to the final output.

* **Readout Layer:** Aggregates the outputs of Block 2 into a single output node $y$.

* **Functional Units:** Detailed callouts for **Rational 1D Unit ($R_{1D}$)** and **Sparse Rational 2D Unit ($R_{2D}$)**.

---

## 2. Detailed Block Analysis

### Block 1 (Update)

* **Inputs:** Receives signals from $x_1, x_2, \dots, x_N$.

* **Processing Units:**

    * **$R_{1D}(\cdot)$:** Yellow rectangular blocks. These appear to process individual node features.

    * **$R_{2D}(\cdot, \cdot)$:** Orange rectangular blocks. These process interactions between two nodes (e.g., between $x_2$ and $x_N$).

* **Residual Connection:** A curved arrow with a **+** sign indicates a skip connection from the input $x$ to the output of the block.

* **Update Rule Text:** `Update: x ← Norm(x + Res)`
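
The update rule can be sketched in a few lines. The diagram does not say which normalization `Norm` denotes or over which axis it acts, so the zero-mean, unit-variance normalization across node values below is an assumption, as are the names `layer_norm`, `update`, and `eps`.

```python
import math

def layer_norm(values, eps=1e-5):
    """Assumed form of Norm: zero mean and unit variance across node values."""
    mean = sum(values) / len(values)
    var = sum((v - mean) ** 2 for v in values) / len(values)
    return [(v - mean) / math.sqrt(var + eps) for v in values]

def update(x, res):
    """Apply x <- Norm(x + Res), adding the residual node by node."""
    return layer_norm([xi + ri for xi, ri in zip(x, res)])

# Example: three nodes with an arbitrary residual contribution.
x_new = update([1.0, 2.0, 3.0], [0.5, -0.5, 0.0])
```

Under this assumption the updated node values always sum to zero, whatever the residual contributed.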

### Block 2 (Head)

* **Inputs:** Receives the normalized output from Block 1.

* **Processing Units:** Contains a mix of $R_{1D}(\cdot)$ and $R_{2D}(\cdot, \cdot)$ units.

* **Connectivity:** Shows a dense interaction pattern where outputs from Block 1 nodes are cross-combined into the $R_{2D}$ units of Block 2.

* **Residual Connection:** Similar to Block 1, a curved arrow with a **+** sign bypasses the functional units.

### Readout

* **Process:** Arrows point from the top blue nodes of Block 2 to a single green node labeled **$y$**.

* **Label:** "Readout"

---

## 3. Functional Unit Specifications (Callouts)

### Rational 1D Unit ($R_{1D}$)

* **Visual Representation:** A 2D line plot showing a sigmoid-like (S-shaped) curve.

* **Mathematical Definition:**

$$R(x) = \frac{P(x)}{1 + \text{softplus}(Q(x))}$$

* **Output Equation:**

$$y = x + \alpha(R(x) - x)$$

*(Note: This interpolates between the identity and the rational function, $y = (1 - \alpha)x + \alpha R(x)$, so $\alpha$ controls how far the output departs from the input.)*
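
As a minimal sketch of the 1D unit, the two equations translate directly into code. The diagram does not give the degrees or coefficients of $P$ and $Q$, so the coefficient lists `p_coeffs` and `q_coeffs` (in ascending order) and the helper names are hypothetical.

```python
import math

def softplus(z):
    """Numerically stable softplus: log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def polyval(coeffs, x):
    """Evaluate a polynomial with ascending coefficients via Horner's rule."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result

def rational_1d(x, p_coeffs, q_coeffs, alpha):
    """y = x + alpha * (R(x) - x), with R(x) = P(x) / (1 + softplus(Q(x)))."""
    r = polyval(p_coeffs, x) / (1.0 + softplus(polyval(q_coeffs, x)))
    return x + alpha * (r - x)

# With alpha = 0 the unit is the identity; with alpha = 1 it returns R(x).
y_identity = rational_1d(2.0, [0.0, 1.0], [0.0, 1.0], alpha=0.0)
y_rational = rational_1d(2.0, [0.0, 1.0], [0.0, 1.0], alpha=1.0)
```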

### Sparse Rational 2D Unit ($R_{2D}$)

* **Visual Representation:** A 3D surface plot showing a localized peak (resembling a Gaussian or bell shape) over a 2D plane.

* **Mathematical Definition:**

$$R(x_i, x_j) = \frac{P(x_i, x_j)}{1 + \text{softplus}(Q(x_i, x_j))}$$

* **Output Equation:**

$$y = \text{base} + \beta(R(x_i, x_j) - \text{base})$$

$$\text{base} = \frac{x_i + x_j}{2}$$

* **Operational Note:** An arrow points to the 3D plot with the text: **"Scatter-add to $x_i, x_j$"**. This indicates that the result of the 2D unit is distributed back to the constituent input nodes.
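
The pairwise unit and its scatter-add step might look like the sketch below. Several details are assumptions not shown in the diagram: $P$ and $Q$ are taken to be bilinear polynomials (`bilinear`), the active pairs are supplied as an explicit index list `pairs`, and the unit output $y$ itself is accumulated into a residual buffer at positions $i$ and $j$.

```python
import math

def softplus(z):
    """Numerically stable softplus: log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def bilinear(c, xi, xj):
    """Hypothetical bivariate polynomial: c0 + c1*xi + c2*xj + c3*xi*xj."""
    return c[0] + c[1] * xi + c[2] * xj + c[3] * xi * xj

def rational_2d(xi, xj, p, q, beta):
    """y = base + beta * (R - base), with base = (xi + xj) / 2."""
    base = 0.5 * (xi + xj)
    r = bilinear(p, xi, xj) / (1.0 + softplus(bilinear(q, xi, xj)))
    return base + beta * (r - base)

def scatter_add_pairs(x, pairs, p, q, beta):
    """Accumulate each pair's output into both participating nodes (assumed)."""
    res = [0.0] * len(x)
    for i, j in pairs:
        y = rational_2d(x[i], x[j], p, q, beta)
        res[i] += y
        res[j] += y
    return res

# With beta = 0 every pair contributes its base, the mean of the two inputs.
x = [1.0, 2.0, 3.0]
res = scatter_add_pairs(x, pairs=[(0, 1), (1, 2)],
                        p=[0.0, 0.0, 0.0, 1.0], q=[0.0, 0.0, 0.0, 0.0], beta=0.0)
```

Listing only a subset of index pairs is one way to read the "Sparse" in the unit's name; node 1 takes part in both pairs here, so it receives two contributions.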

---

## 4. Textual Transcriptions

| Location | Original Text |
| :--- | :--- |
| Top Center | $y$ |
| Top Right | Readout |
| Left (Top) | Block 2 (Head) |
| Left (Middle) | Block 1 (Update) |
| Left (Bottom) | Update: $x \leftarrow \text{Norm}(x + \text{Res})$ |
| Bottom Nodes | $x_1, x_2, \dots, x_N$ |
| Unit Labels | $R_{1D}(\cdot)$, $R_{2D}(\cdot, \cdot)$ |
| Callout 1 Title | Rational 1D Unit ($R_{1D}$) |
| Callout 2 Title | Sparse Rational 2D Unit ($R_{2D}$) |
| Callout 2 Note | Scatter-add to $x_i, x_j$ |

---

## 5. Summary of Logic and Flow

1. **Input** $x$ enters the system.

2. **Block 1** applies 1D transformations to individual features and 2D transformations to pairs of features. The combined result (Res) is added back to the input through the residual connection and then normalized: $x \leftarrow \text{Norm}(x + \text{Res})$.

3. **Block 2** repeats this process, potentially with its own weights or head-specific parameters.

4. The **Readout** layer performs a final aggregation of all node features to produce the scalar or vector output $y$.

5. The **Rational Units** learn non-linear activations using the rational form $P / (1 + \text{softplus}(Q))$ rather than a bare ratio $P/Q$; the softplus term stabilizes the denominator.
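
The stabilizing role of the softplus follows from a short bound, using the standard definition $\text{softplus}(z) = \log(1 + e^{z})$. Because the softplus is strictly positive, the denominator always exceeds 1, so the unit has no poles and its magnitude never exceeds that of the numerator:

$$\text{softplus}(z) = \log(1 + e^{z}) > 0 \;\Rightarrow\; 1 + \text{softplus}(Q(x)) > 1 \;\Rightarrow\; |R(x)| = \frac{|P(x)|}{1 + \text{softplus}(Q(x))} \le |P(x)|$$

The same argument applies to $R(x_i, x_j)$ with $P(x_i, x_j)$ and $Q(x_i, x_j)$.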