# Technical Document Extraction: Neural Network Architecture with Rational Units

## Diagram Overview

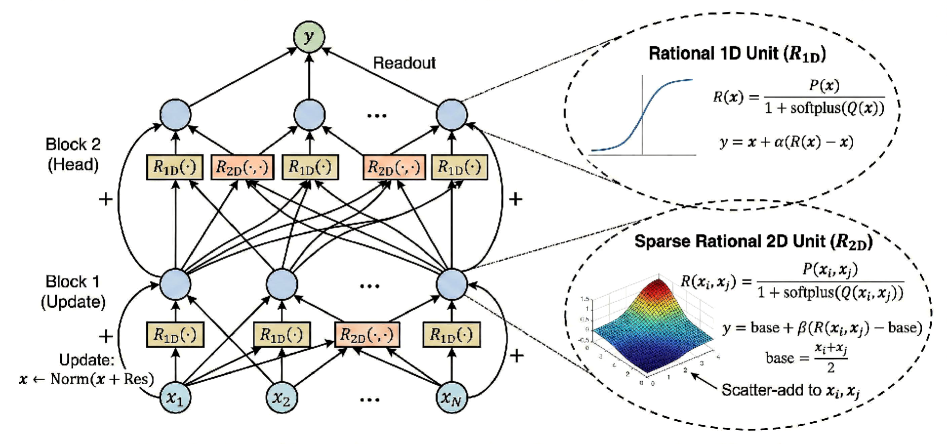

The image depicts a neural network architecture with two processing blocks (Update and Head) connected to a readout. The network incorporates specialized Rational 1D (R₁D) and Sparse Rational 2D (R₂D) units with explicit mathematical formulations.

---

## Component Breakdown

### 1. Network Structure

**Blocks:**

- **Block 1 (Update):**
  - Contains residual connections (`x ← Norm(x + Res)`)
  - Processes input features `x₁, x₂, ..., xₙ`
- **Block 2 (Head):**
  - Final processing layer before readout
  - Contains multiple R₁D and R₂D units

**Readout:**

- Final output `y` computed as the cumulative sum of block outputs
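
The readout rule can be sketched as a running sum. This is a minimal illustration that assumes each block emits a single scalar; the function name `readout` and the scalar outputs are assumptions, since the diagram does not state the output shapes.

```python
from itertools import accumulate

def readout(block_outputs):
    """Return (y, partial_sums) for a sequence of scalar block outputs.

    Assumption: y is the last element of the running (cumulative) sum.
    """
    partial_sums = list(accumulate(block_outputs))
    return partial_sums[-1], partial_sums

# Two blocks (Update, Head) contributing 0.5 and 0.25 respectively.
y, partial_sums = readout([0.5, 0.25])
```

Keeping the partial sums makes it possible to inspect how much each block contributes to `y`.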

### 2. Rational 1D Unit (R₁D)

**Mathematical Definition:**

```
R(x) = P(x) / [1 + softplus(Q(x))]
y = x + α[R(x) - x]
```

**Graphical Representation:**

- 2D plot showing sigmoidal-like activation function

- X-axis: Input `x`

- Y-axis: Output `R(x)`

- Key characteristic: Smooth transition from linear to saturated behavior
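
The R₁D definition could be implemented along the following lines. The diagram does not give the form of `P` and `Q`, so this sketch assumes both are polynomials with coefficients listed in ascending order; `polyval`, `p_coeffs`, and `q_coeffs` are hypothetical names.

```python
import math

def softplus(z):
    """Numerically stable softplus: log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def polyval(coeffs, x):
    """Evaluate a polynomial (ascending coefficients) with Horner's rule."""
    result = 0.0
    for c in reversed(coeffs):
        result = result * x + c
    return result

def rational_1d(x, p_coeffs, q_coeffs, alpha):
    """R1D unit: y = x + alpha * (R(x) - x), R = P / (1 + softplus(Q)).

    Assumption: P and Q are polynomials; the source does not specify them.
    """
    r = polyval(p_coeffs, x) / (1.0 + softplus(polyval(q_coeffs, x)))
    return x + alpha * (r - x)

# alpha = 0 leaves the input unchanged; alpha = 1 returns R(x) itself.
identity = rational_1d(2.0, [0.0, 1.0], [0.0, 0.0, 1.0], alpha=0.0)
full = rational_1d(2.0, [0.0, 1.0], [0.0, 0.0, 1.0], alpha=1.0)
```

The parameter `α` acts as a blend between the identity map and the rational function, so a unit with `α` near zero behaves like a skip connection.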

### 3. Sparse Rational 2D Unit (R₂D)

**Mathematical Definition:**

```
R(xᵢ, xⱼ) = P(xᵢ, xⱼ) / [1 + softplus(Q(xᵢ, xⱼ))]
base = (xᵢ + xⱼ)/2
y = base + β[R(xᵢ, xⱼ) - base]
```

**Graphical Representation:**

- 3D surface plot showing interaction between two inputs

- X-axis: `xᵢ`

- Y-axis: `xⱼ`

- Z-axis: Output `R(xᵢ, xⱼ)`

- Color gradient indicates activation intensity

- Scatter-add operation combines with base value
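
One way to read the sparse R₂D unit with scatter-add is sketched below. Several details are assumptions not found in the diagram: `P` and `Q` are taken to be bilinear forms, each selected pair carries an explicit destination index (`dest`), and pair outputs landing on the same destination are summed.

```python
import math

def softplus(z):
    """Numerically stable softplus: log(1 + exp(z))."""
    return max(z, 0.0) + math.log1p(math.exp(-abs(z)))

def bilinear(c, a, b):
    """Hypothetical form for P and Q: c0 + c1*a + c2*b + c3*a*b."""
    return c[0] + c[1] * a + c[2] * b + c[3] * a * b

def rational_2d(a, b, p, q, beta):
    """R2D unit: y = base + beta * (R - base), base = (a + b) / 2."""
    base = 0.5 * (a + b)
    r = bilinear(p, a, b) / (1.0 + softplus(bilinear(q, a, b)))
    return base + beta * (r - base)

def sparse_r2d(x, triples, p, q, beta):
    """Apply one R2D unit per (i, j, dest) triple and scatter-add the results.

    Assumption: only the listed pairs interact (the 'sparse' part), and
    outputs sharing a destination index are accumulated by addition.
    """
    out = [0.0] * len(x)
    for i, j, dest in triples:
        out[dest] += rational_2d(x[i], x[j], p, q, beta)
    return out

x = [1.0, 3.0, 5.0]
triples = [(0, 1, 0), (1, 2, 0)]  # two pairs, both written to slot 0
out = sparse_r2d(x, triples, p=[0.0, 0.0, 0.0, 1.0], q=[0.0] * 4, beta=0.0)
```

With `β = 0` each unit returns only its base value, which gives a simple way to check the scatter-add wiring before the rational term is enabled.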

---

## Key Equations

1. **Rational 1D Unit:**

   ```
   R(x) = P(x) / [1 + softplus(Q(x))]
   y = x + α[R(x) - x]
   ```

2. **Sparse Rational 2D Unit:**

   ```
   R(xᵢ, xⱼ) = P(xᵢ, xⱼ) / [1 + softplus(Q(xᵢ, xⱼ))]
   base = (xᵢ + xⱼ)/2
   y = base + β[R(xᵢ, xⱼ) - base]
   ```

3. **Update Mechanism:**

   ```
   x ← Norm(x + Res)
   ```
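
The update rule can be illustrated with a small sketch. The diagram does not say which normalization `Norm` denotes, so layer normalization without learned scale or shift is assumed here; `layer_norm` and `update` are hypothetical names.

```python
import math

def layer_norm(v, eps=1e-5):
    """Normalize a vector to zero mean and (approximately) unit variance.

    Assumption: stands in for the unspecified Norm in the diagram.
    """
    mean = sum(v) / len(v)
    var = sum((t - mean) ** 2 for t in v) / len(v)
    return [(t - mean) / math.sqrt(var + eps) for t in v]

def update(x, res):
    """One Update step: x <- Norm(x + Res)."""
    return layer_norm([a + b for a, b in zip(x, res)])

# Residual branch output `res` is added to x before normalization.
x_new = update([1.0, 2.0, 3.0], [0.5, 0.5, 0.5])
```

Normalizing after the residual addition keeps the scale of `x` bounded from one block to the next, whatever the magnitude of `Res`.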

---

## Spatial Analysis

- **Legend Position:** Not explicitly shown in diagram
- **Color Coding:**
  - Blue nodes: Network units
  - Yellow blocks: Rational units
  - Green node: Final readout
- **Flow Direction:**
  - Bottom-up processing from input features to readout
  - Residual connections maintain information flow

---

## Trend Verification

1. **R₁D Unit:**
   - Visual trend: Output increases with input until it saturates
   - Confirmed by the sigmoid-like curve in the 2D plot
2. **R₂D Unit:**
   - Visual trend: Interaction between the two inputs creates a peak activation
   - Confirmed by the 3D surface plot, which shows a maximum at mid-range inputs

---

## Critical Observations

1. **Residual Connections:**
   - Enable gradient flow through the stacked blocks
   - Preserve the original input information
2. **Rational Units:**
   - Combine linear and nonlinear processing
   - Softplus is strictly positive, so the denominator `1 + softplus(Q)` is always greater than 1 and division by zero cannot occur
3. **Sparse 2D Interaction:**
   - Explicit pairwise feature combination
   - Base value `(xᵢ + xⱼ)/2` is symmetric in the two inputs; whether the unit output is also symmetric depends on `R`

---

## Missing Elements

- No explicit data table present

- No secondary language detected (all text in English)

- No axis markers beyond those described in component graphs

This extraction records the structure and equations shown in the diagram. The forms of `P`, `Q`, and `Norm` are not specified in it, so an implementation of the architecture must choose them.