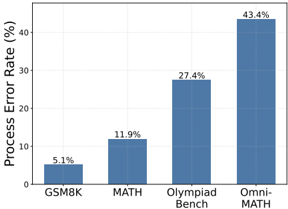

## Bar Chart: Process Error Rate by Benchmark

### Overview

The image is a vertical bar chart comparing the "Process Error Rate (%)" across four different mathematical reasoning benchmarks. The chart uses a simple, clean design with blue bars on a white background, and each bar is labeled with its exact percentage value.

### Components/Axes

* **Chart Type:** Vertical Bar Chart

* **Y-Axis:**

    * **Title:** "Process Error Rate (%)" (rotated vertically on the left side).

    * **Scale:** Linear scale from 0 to 40, with major tick marks at intervals of 10 (0, 10, 20, 30, 40).

* **X-Axis:**

    * **Categories (from left to right):** "GSM8K", "MATH", "Olympiad Bench", "Omni-MATH".

* **Data Series:** A single data series represented by blue bars. There is no legend, as only one metric is being compared across categories.

* **Data Labels:** Each bar has its exact numerical value displayed centered above it.

### Detailed Analysis

The chart presents the following data points for Process Error Rate:

1. **GSM8K:** The bar is the shortest, located at the far left. Its labeled value is **5.1%**.

2. **MATH:** The second bar from the left is taller than the first. Its labeled value is **11.9%**.

3. **Olympiad Bench:** The third bar is significantly taller than the previous two. Its labeled value is **27.4%**.

4. **Omni-MATH:** The bar on the far right is the tallest in the chart. Its labeled value is **43.4%**.

**Trend Verification:** The visual trend is a clear and consistent upward slope from left to right. Each subsequent benchmark shows a higher process error rate than the one before it, with the increase becoming more pronounced after the MATH benchmark.

### Key Observations

* **Monotonic Increase:** The error rate increases strictly monotonically across the four benchmarks in the order they appear on the x-axis.

* **Magnitude of Increase:** The smallest step is from "GSM8K" (5.1%) to "MATH" (11.9%), an increase of 6.8 percentage points. The two later steps are much larger and nearly equal: 15.5 percentage points from "MATH" to "Olympiad Bench" (27.4%), and 16.0 percentage points from "Olympiad Bench" to "Omni-MATH" (43.4%), which is the largest absolute jump in the chart.

* **Relative Performance:** The error rate for "Omni-MATH" (43.4%) is roughly 8.5 times that for "GSM8K" (5.1%).

* **Visual Scaling:** The highest labeled tick on the y-axis is 40, so the "Omni-MATH" bar (43.4%) extends slightly past the top tick mark. The plotted area therefore reaches beyond the last labeled value, which draws visual attention to the tallest bar.
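The differences and ratios quoted in these observations can be recomputed from the four bar labels. The following is a minimal sketch, assuming the labeled values are exact; the variable names (`rates`, `steps`, and so on) are illustrative and do not come from the chart or its source.

```python
# Values transcribed from the bar labels in the chart (percent).
rates = {
    "GSM8K": 5.1,
    "MATH": 11.9,
    "Olympiad Bench": 27.4,
    "Omni-MATH": 43.4,
}

names = list(rates)
values = list(rates.values())

# For each pair of adjacent bars: (from, to, percentage-point increase, ratio).
steps = [
    (
        names[i],
        names[i + 1],
        round(values[i + 1] - values[i], 1),
        round(values[i + 1] / values[i], 2),
    )
    for i in range(len(values) - 1)
]

# True when every bar is taller than the one to its left.
is_strictly_increasing = all(delta > 0 for _, _, delta, _ in steps)

# Largest absolute jump between adjacent benchmarks.
largest_step = max(steps, key=lambda step: step[2])

# Ratio of the tallest bar to the shortest bar.
overall_ratio = round(values[-1] / values[0], 2)

for start, end, delta, ratio in steps:
    print(f"{start} -> {end}: +{delta} points, x{ratio}")
print("strictly increasing:", is_strictly_increasing)
print("largest step:", largest_step[0], "->", largest_step[1])
print("Omni-MATH / GSM8K:", overall_ratio)
```

Rounding the differences to one decimal place matches the precision of the bar labels and avoids floating-point noise in the subtraction.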

### Interpretation

This chart shows the "Process Error Rate" of the evaluated system rising with the presumed difficulty of the mathematical reasoning benchmark. GSM8K, often considered a benchmark for grade-school level math, shows a very low error rate (5.1%). The rate more than doubles for the more advanced MATH benchmark (11.9%) and more than doubles again for Olympiad-level problems (27.4%). It then rises by a further factor of about 1.6 to its peak on Omni-MATH (43.4%), which likely represents a comprehensive or extremely challenging suite of problems.

The data suggests that the reliability of the evaluated system's reasoning *process* degrades significantly as the mathematical problems become more complex. The 43.4% error rate on Omni-MATH indicates that, for this most challenging category, the system's process fails in more than two of every five cases, which is a critical insight for understanding its limitations. With only four benchmarks and no explicit difficulty measure on the x-axis, the chart is consistent with benchmark difficulty being a major driver of process failure for this system, but it does not establish this on its own.