## Diagram: Word Alignment Visualization

### Overview

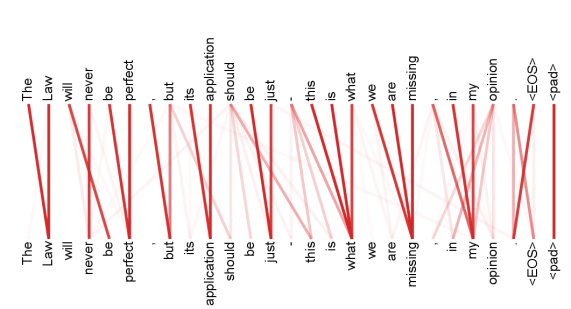

The image displays a visualization of word alignment or attention between two sequences of text. It consists of two horizontal rows of words, with red lines connecting corresponding words between the top and bottom rows. The diagram appears to illustrate a natural language processing (NLP) concept, such as sequence-to-sequence alignment, attention mechanisms, or error analysis in text generation.

### Components/Axes

* **Top Row (Source/Reference Sequence):** A complete English sentence with punctuation and special tokens.

* **Bottom Row (Target/Hypothesis Sequence):** A similar but incomplete sequence, missing some words from the top row.

* **Connecting Lines:** Red lines of varying thickness and opacity connect words from the top row to the bottom row. Thicker, more opaque lines indicate a stronger or more direct alignment.

* **Special Tokens:** Both sequences end with `<EOS>` (End Of Sequence) and `<pad>` (padding token), which are standard in machine learning for text processing.

### Detailed Analysis

**Text Transcription:**

* **Top Row (Left to Right):**

`The` `Law` `will` `never` `be` `perfect` `,` `but` `its` `application` `should` `be` `just` `.` `this` `is` `what` `we` `are` `missing` `.` `in` `my` `opinion` `<EOS>` `<pad>`

* **Bottom Row (Left to Right):**

`The` `Law` `will` `be` `perfect` `,` `but` `its` `application` `should` `be` `.` `this` `is` `what` `we` `are` `missing` `.` `in` `my` `opinion` `<EOS>` `<pad>`

**Alignment & Missing Words:**

The primary difference is that the bottom row is missing two words present in the top row:

1. The word **"never"** (4th token in top row) has no corresponding word or connection in the bottom row.

2. The word **"just"** (13th token in top row) has no corresponding word or connection in the bottom row.

**Connection Pattern:**

* Most words have a direct, one-to-one alignment shown by a red line (e.g., "The" to "The", "Law" to "Law").

* The lines for the missing words ("never", "just") are absent.

* The punctuation marks (`,`, `.`) are aligned.

* The special tokens (`<EOS>`, `<pad>`) are aligned at the end.

### Key Observations

1. **Omission Errors:** The diagram clearly highlights two specific omission errors in the bottom sequence compared to the top reference sequence.

2. **Structural Fidelity:** Despite the omissions, the overall grammatical structure and the majority of the content words are preserved and correctly aligned.

3. **NLP Context:** The use of `<EOS>` and `<pad>` tokens strongly suggests this is a visualization from a machine learning model's output, likely showing the alignment between a reference text and a model-generated hypothesis.

4. **Visual Emphasis:** The red lines draw immediate attention to the correspondences, making the missing links (the omissions) visually apparent by their absence.

### Interpretation

This diagram is a diagnostic tool used in natural language processing, specifically for tasks like machine translation, text summarization, or grammar correction. It visually answers the question: "How well does the generated text (bottom) match the reference text (top)?"

* **What it demonstrates:** It shows that the model or process generating the bottom text successfully captured most of the semantic content and structure but failed to include two key modifiers: "never" (which negates the perfection of the law) and "just" (which qualifies the desired nature of its application). These omissions significantly alter the meaning and nuance of the original statement.

* **Relationship between elements:** The top row serves as the ground truth. The bottom row is the output being evaluated. The lines represent the model's "attention" or the evaluation metric's alignment between the two sequences. The absence of lines is as informative as their presence.

* **Notable anomaly:** The most critical anomaly is the omission of "just." Its absence changes the sentence from "its application should be just" (a positive assertion about fairness) to "its application should be ." which is grammatically incomplete and semantically void. This suggests a potential failure mode in the generation process.

* **Underlying message:** The diagram argues that while the core message ("The Law will be perfect, but its application should be. this is what we are missing.") is partially conveyed, the precise, qualified argument of the original ("The Law will **never** be perfect, but its application should be **just**.") is lost. It visually underscores the importance of function words and modifiers in preserving meaning.