## Bar Chart: Generative Accuracy vs. Number of Generalizations

### Overview

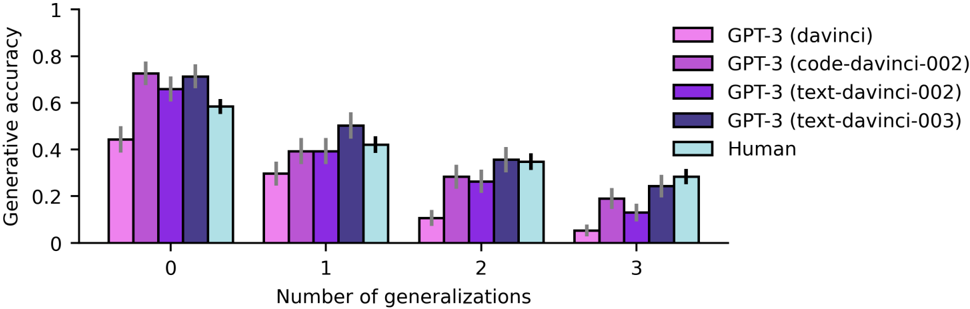

The image is a grouped bar chart comparing the generative accuracy of four GPT-3 models (davinci, code-davinci-002, text-davinci-002, text-davinci-003) and humans as the number of generalizations increases from 0 to 3. Generative accuracy is plotted on the y-axis and the number of generalizations on the x-axis. Every bar has an error bar.

### Components/Axes

* **Y-axis:** "Generative accuracy", ranging from 0 to 1 in increments of 0.2.

* **X-axis:** "Number of generalizations", with values 0, 1, 2, and 3.

* **Legend (Top-Right):**

  * GPT-3 (davinci) - Light Pink

  * GPT-3 (code-davinci-002) - Medium Pink/Purple

  * GPT-3 (text-davinci-002) - Purple

  * GPT-3 (text-davinci-003) - Dark Blue/Purple

  * Human - Light Blue

### Detailed Analysis

The chart presents the generative accuracy of each model and of humans at each number of generalizations. The values below are approximate readings of the bar heights.

**Number of Generalizations = 0:**

* GPT-3 (davinci) (Light Pink): Accuracy ~0.45

* GPT-3 (code-davinci-002) (Medium Pink/Purple): Accuracy ~0.73

* GPT-3 (text-davinci-002) (Purple): Accuracy ~0.67

* GPT-3 (text-davinci-003) (Dark Blue/Purple): Accuracy ~0.73

* Human (Light Blue): Accuracy ~0.60

**Number of Generalizations = 1:**

* GPT-3 (davinci) (Light Pink): Accuracy ~0.30

* GPT-3 (code-davinci-002) (Medium Pink/Purple): Accuracy ~0.40

* GPT-3 (text-davinci-002) (Purple): Accuracy ~0.40

* GPT-3 (text-davinci-003) (Dark Blue/Purple): Accuracy ~0.50

* Human (Light Blue): Accuracy ~0.43

**Number of Generalizations = 2:**

* GPT-3 (davinci) (Light Pink): Accuracy ~0.12

* GPT-3 (code-davinci-002) (Medium Pink/Purple): Accuracy ~0.28

* GPT-3 (text-davinci-002) (Purple): Accuracy ~0.30

* GPT-3 (text-davinci-003) (Dark Blue/Purple): Accuracy ~0.36

* Human (Light Blue): Accuracy ~0.36

**Number of Generalizations = 3:**

* GPT-3 (davinci) (Light Pink): Accuracy ~0.05

* GPT-3 (code-davinci-002) (Medium Pink/Purple): Accuracy ~0.15

* GPT-3 (text-davinci-002) (Purple): Accuracy ~0.13

* GPT-3 (text-davinci-003) (Dark Blue/Purple): Accuracy ~0.25

* Human (Light Blue): Accuracy ~0.28
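The readings above can be collected into a small table for comparison. The following is a minimal sketch, assuming the approximate values listed in this section; the names `ACCURACY`, `LEVELS`, and `top_performers` are illustrative and do not come from the chart.

```python
# Approximate accuracies read from the chart (estimates, not exact data).
# Each list holds the values for 0, 1, 2, and 3 generalizations, in that order.
LEVELS = [0, 1, 2, 3]
ACCURACY = {
    "GPT-3 (davinci)": [0.45, 0.30, 0.12, 0.05],
    "GPT-3 (code-davinci-002)": [0.73, 0.40, 0.28, 0.15],
    "GPT-3 (text-davinci-002)": [0.67, 0.40, 0.30, 0.13],
    "GPT-3 (text-davinci-003)": [0.73, 0.50, 0.36, 0.25],
    "Human": [0.60, 0.43, 0.36, 0.28],
}


def top_performers(level_index):
    """Return every series that reaches the highest estimate at one level."""
    best = max(values[level_index] for values in ACCURACY.values())
    return [name for name, values in ACCURACY.items()
            if values[level_index] == best]


for index, level in enumerate(LEVELS):
    print(f"{level} generalizations: {', '.join(top_performers(index))}")
```

Ties are reported together because the estimates are too coarse to separate bars of nearly equal height.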

### Key Observations

* All models and humans show a decrease in generative accuracy as the number of generalizations increases.

* At 0 generalizations, the GPT-3 (code-davinci-002) and GPT-3 (text-davinci-003) models have the highest accuracy, while GPT-3 (davinci) has the lowest.

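* At 3 generalizations, every accuracy is at or below ~0.28, compared with ~0.45 to ~0.73 at 0 generalizations.

* Human accuracy falls from ~0.60 to ~0.28. Humans trail the best models at 0 generalizations but match text-davinci-003 at 2 generalizations (~0.36) and narrowly exceed it at 3 (~0.28 vs. ~0.25), a margin small enough that it may fall within the error bars.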

### Interpretation

The data suggest that generative accuracy falls for both GPT-3 models and humans as the number of generalizations required increases, which indicates that each additional generalization makes the task harder for models and humans alike. GPT-3 (davinci) is the least accurate at every level. The code-davinci-002 and text-davinci-003 models lead at 0 generalizations (~0.73), and text-davinci-003 remains the strongest model at every later level. Humans start below the best models (~0.60) but decline less steeply, so they match text-davinci-003 at 2 generalizations and narrowly exceed it at 3. Because these values are estimates read from the chart, small differences between bars should be treated with caution.
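The downward trend and the relative size of each decline can be checked directly from the estimated values. The following is a minimal sketch, assuming the approximate readings from the Detailed Analysis section; `ACCURACY`, `is_strictly_decreasing`, and `relative_drop` are illustrative names, and the relative drop is one simple way to compare declines, not a measure taken from the chart.

```python
# Approximate accuracies read from the chart (estimates, not exact data),
# listed for 0, 1, 2, and 3 generalizations.
ACCURACY = {
    "GPT-3 (davinci)": [0.45, 0.30, 0.12, 0.05],
    "GPT-3 (code-davinci-002)": [0.73, 0.40, 0.28, 0.15],
    "GPT-3 (text-davinci-002)": [0.67, 0.40, 0.30, 0.13],
    "GPT-3 (text-davinci-003)": [0.73, 0.50, 0.36, 0.25],
    "Human": [0.60, 0.43, 0.36, 0.28],
}


def is_strictly_decreasing(values):
    """True when each value is lower than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))


def relative_drop(values):
    """Fraction of the accuracy at 0 generalizations that is lost by 3."""
    return (values[0] - values[-1]) / values[0]


for name, values in ACCURACY.items():
    print(f"{name}: decreasing={is_strictly_decreasing(values)}, "
          f"relative drop={relative_drop(values):.0%}")
```

Under these estimates every series decreases at each step, the human series loses the smallest share of its starting accuracy, and GPT-3 (davinci) loses the largest.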