## Line Graphs: Test ChrF (Floores/In-house) vs. Number of Shots (K) for Translation Tasks

### Overview

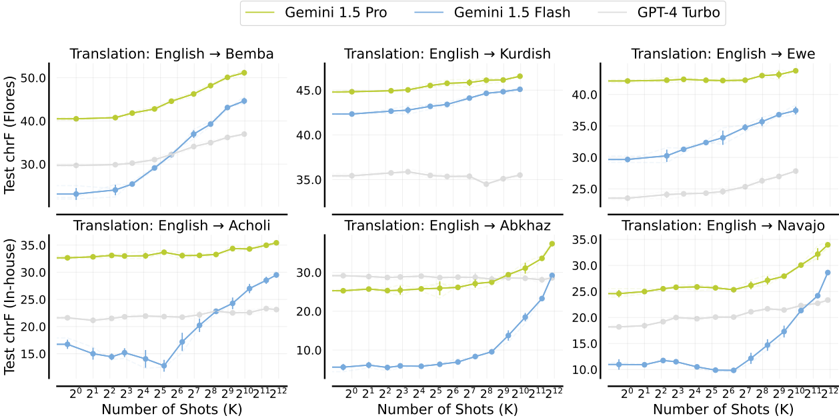

The image contains six line graphs comparing the performance of three AI models (Gemini 1.5 Pro, Gemini 1.5 Flash, GPT-4 Turbo) across six translation tasks (English → Bemba, Kurdish, Ewe, Acholi, Abkhaz, Navajo). Each graph plots **Test ChrF** (a metric for translation quality) against **Number of Shots (K)**, where K represents the amount of training data used (e.g., 2^1 = 2 examples, 2^12 = 4096 examples). The graphs are arranged in two rows of three, with legends at the top indicating model colors: yellow (Gemini 1.5 Pro), blue (Gemini 1.5 Flash), gray (GPT-4 Turbo).

---

### Components/Axes

- **X-axis**: Number of Shots (K) on a logarithmic scale (2^1 to 2^12).

- **Y-axis**: Test ChrF (Floores/In-house), with values ranging from ~10 to 50 depending on the task.

- **Legends**: Positioned at the top of all graphs, with consistent color coding:

- Yellow: Gemini 1.5 Pro

- Blue: Gemini 1.5 Flash

- Gray: GPT-4 Turbo

- **Subplot Titles**: Each graph is labeled with the translation direction (e.g., "Translation: English → Bemba").

---

### Detailed Analysis

#### 1. Translation: English → Bemba

- **Trend**: Gemini 1.5 Pro (yellow) shows a steep upward slope, reaching ~50 Floores at 2^12 shots. Gemini 1.5 Flash (blue) increases from ~30 to ~45, while GPT-4 Turbo (gray) remains flat (~30–35).

- **Key Data Points**:

- At 2^1 shots: Gemini 1.5 Pro ≈ 40, Gemini 1.5 Flash ≈ 30, GPT-4 Turbo ≈ 30.

- At 2^12 shots: Gemini 1.5 Pro ≈ 50, Gemini 1.5 Flash ≈ 45, GPT-4 Turbo ≈ 35.

#### 2. Translation: English → Kurdish

- **Trend**: Gemini 1.5 Pro (yellow) rises from ~40 to ~45, Gemini 1.5 Flash (blue) increases from ~35 to ~42, and GPT-4 Turbo (gray) stays flat (~30–35).

- **Key Data Points**:

- At 2^1 shots: Gemini 1.5 Pro ≈ 40, Gemini 1.5 Flash ≈ 35, GPT-4 Turbo ≈ 30.

- At 2^12 shots: Gemini 1.5 Pro ≈ 45, Gemini 1.5 Flash ≈ 42, GPT-4 Turbo ≈ 35.

#### 3. Translation: English → Ewe

- **Trend**: Gemini 1.5 Pro (yellow) increases from ~40 to ~45, Gemini 1.5 Flash (blue) rises from ~30 to ~38, and GPT-4 Turbo (gray) remains flat (~25–30).

- **Key Data Points**:

- At 2^1 shots: Gemini 1.5 Pro ≈ 40, Gemini 1.5 Flash ≈ 30, GPT-4 Turbo ≈ 25.

- At 2^12 shots: Gemini 1.5 Pro ≈ 45, Gemini 1.5 Flash ≈ 38, GPT-4 Turbo ≈ 30.

#### 4. Translation: English → Acholi

- **Trend**: Gemini 1.5 Pro (yellow) increases from ~30 to ~35, Gemini 1.5 Flash (blue) rises from ~15 to ~30, and GPT-4 Turbo (gray) stays flat (~20–25).

- **Key Data Points**:

- At 2^1 shots: Gemini 1.5 Pro ≈ 30, Gemini 1.5 Flash ≈ 15, GPT-4 Turbo ≈ 20.

- At 2^12 shots: Gemini 1.5 Pro ≈ 35, Gemini 1.5 Flash ≈ 30, GPT-4 Turbo ≈ 25.

#### 5. Translation: English → Abkhaz

- **Trend**: Gemini 1.5 Pro (yellow) increases from ~25 to ~35, Gemini 1.5 Flash (blue) rises from ~10 to ~30, and GPT-4 Turbo (gray) remains flat (~20–25).

- **Key Data Points**:

- At 2^1 shots: Gemini 1.5 Pro ≈ 25, Gemini 1.5 Flash ≈ 10, GPT-4 Turbo ≈ 20.

- At 2^12 shots: Gemini 1.5 Pro ≈ 35, Gemini 1.5 Flash ≈ 30, GPT-4 Turbo ≈ 25.

#### 6. Translation: English → Navajo

- **Trend**: Gemini 1.5 Pro (yellow) increases from ~25 to ~35, Gemini 1.5 Flash (blue) rises from ~10 to ~30, and GPT-4 Turbo (gray) stays flat (~20–25).

- **Key Data Points**:

- At 2^1 shots: Gemini 1.5 Pro ≈ 25, Gemini 1.5 Flash ≈ 10, GPT-4 Turbo ≈ 20.

- At 2^12 shots: Gemini 1.5 Pro ≈ 35, Gemini 1.5 Flash ≈ 30, GPT-4 Turbo ≈ 25.

---

### Key Observations

1. **Model Performance**:

- **Gemini 1.5 Pro** consistently outperforms other models across all tasks, with the largest gains at higher shot counts (e.g., +10 Floores from 2^1 to 2^12 in Bemba).

- **Gemini 1.5 Flash** shows moderate improvement, with gains of ~10–15 Floores across tasks.

- **GPT-4 Turbo** performs the least effectively, with minimal improvement (often flat lines).

2. **Task-Specific Trends**:

- **Low-Resource Languages** (e.g., Abkhaz, Navajo): Gemini 1.5 Pro and Flash show steeper improvement curves, suggesting better scalability with limited data.

- **High-Resource Languages** (e.g., Kurdish, Ewe): GPT-4 Turbo maintains higher baseline performance but fails to scale.

3. **Anomalies**:

- In **English → Acholi**, Gemini 1.5 Flash shows a sharp dip at 2^3 shots (~12 Floores) before recovering, possibly indicating overfitting or data noise.

---

### Interpretation

The data demonstrates that **Gemini 1.5 Pro** excels in translation quality, particularly when trained on larger datasets (higher K values). Its performance scales nearly linearly with the number of shots, suggesting robust generalization. **Gemini 1.5 Flash** also improves with more data but lags behind the Pro version, likely due to architectural differences (e.g., smaller model size). **GPT-4 Turbo** performs poorly in low-resource scenarios (e.g., Abkhaz, Navajo) but maintains mediocre results in high-resource tasks, indicating limited adaptability to new languages.

The stark contrast between Gemini models and GPT-4 Turbo highlights the importance of model architecture and training strategies for cross-lingual tasks. The dip in Gemini 1.5 Flash for Acholi warrants further investigation into data quality or model stability. Overall, these results underscore the value of iterative training with increasing data volumes for low-resource language translation.