## Flowchart: Agent Workflow and Evaluation System

### Overview

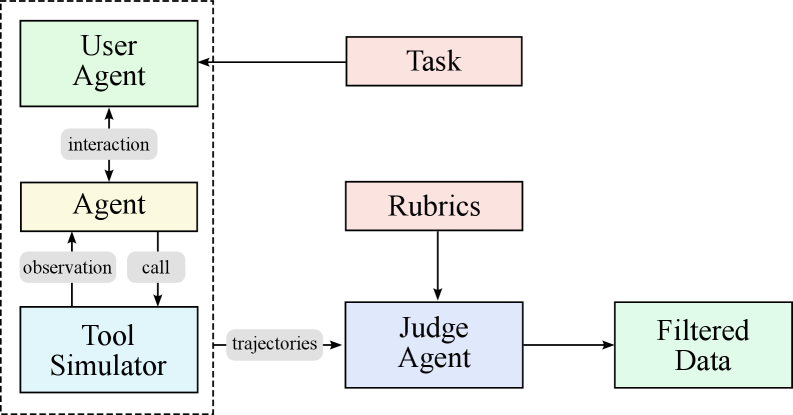

The diagram illustrates a multi-component system for agent interaction, task execution, and data validation. It features two primary sections: a left-side agent workflow and a right-side evaluation process, connected through data trajectories.

### Components

1. **Left Section (Agent Workflow)**

   - **User Agent** (green box): Initiates interactions

   - **Agent** (yellow box): Core processing unit

   - **Tool Simulator** (blue box): Execution environment

   - Arrows indicate:

     - `interaction` (User Agent → Agent)

     - `call` (Agent → Tool Simulator)

     - `observation` (Tool Simulator → Agent)

2. **Right Section (Evaluation Process)**

   - **Task** (red box): Defined objectives

   - **Rubrics** (red box): Evaluation criteria

   - **Judge Agent** (purple box): Validation component

   - **Filtered Data** (green box): Output

   - Arrows indicate:

     - `trajectories` (Tool Simulator → Judge Agent)

     - Evaluation flow (Rubrics → Judge Agent → Filtered Data)

3. **Connectivity**

   - Task box connects to User Agent

   - Judge Agent receives trajectories from Tool Simulator

   - Filtered Data is the final output

### Detailed Analysis

- **Agent Workflow**:

  - User Agent initiates task interactions with the Agent

  - Agent sends calls to the Tool Simulator and receives observations in return

  - Tool Simulator executes actions based on the Agent's calls

- **Evaluation Process**:

  - Task defines the objectives and is connected to the User Agent

  - Rubrics provide the evaluation criteria

  - Judge Agent analyzes trajectories against the rubrics

  - Filtered Data represents the validated results
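The agent workflow described above can be sketched as a simple loop that records each arrow in the diagram as a step in a trajectory. This is a minimal illustration only: the diagram does not specify any implementation, so `Step`, `Trajectory`, `run_episode`, the three `demo_*` stand-ins, and the `max_turns` limit are all hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Step:
    kind: str      # one of the arrow labels: "interaction", "call", "observation"
    content: str


@dataclass
class Trajectory:
    task: str
    steps: List[Step] = field(default_factory=list)


def run_episode(
    task: str,
    user_agent: Callable[[str], str],
    agent: Callable[[str], Optional[str]],
    tool_simulator: Callable[[str], str],
    max_turns: int = 3,  # assumed safety limit, not part of the diagram
) -> Trajectory:
    """Record one User Agent -> Agent -> Tool Simulator episode."""
    trajectory = Trajectory(task=task)

    # interaction: User Agent -> Agent
    message = user_agent(task)
    trajectory.steps.append(Step("interaction", message))

    for _ in range(max_turns):
        # call: Agent -> Tool Simulator (None means the agent is done)
        call = agent(message)
        if call is None:
            break
        trajectory.steps.append(Step("call", call))

        # observation: Tool Simulator -> Agent
        observation = tool_simulator(call)
        trajectory.steps.append(Step("observation", observation))
        message = observation

    return trajectory


# Hypothetical stand-ins for the three boxes in the left section.
def demo_user_agent(task: str) -> str:
    return f"Please help with: {task}"


def demo_agent(message: str) -> Optional[str]:
    # Stop once a tool result has come back; otherwise issue one call.
    return None if message.startswith("result:") else f"lookup({message!r})"


def demo_tool_simulator(call: str) -> str:
    return f"result: simulated output for {call}"


trajectory = run_episode(
    "book a flight", demo_user_agent, demo_agent, demo_tool_simulator
)
print([step.kind for step in trajectory.steps])
```

In a real system each stand-in would presumably be backed by a model or a simulated API; the sketch only shows how the three arrows could accumulate into the trajectory that is later passed to the Judge Agent.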

### Key Observations

1. **Modular Architecture**: Clear separation between execution (left) and evaluation (right)

2. **Data Flow**: Trajectories from tool execution feed directly into judgment

3. **Validation Layer**: Judge Agent acts as quality control between raw data and final output

4. **Color Coding**:

   - Green = User-facing components (User Agent, Filtered Data)

   - Yellow = Core processing unit (Agent)

   - Red = Task definition and evaluation criteria (Task, Rubrics)

   - Blue/Purple = Technical components (Tool Simulator, Judge Agent)

### Interpretation

This system implements a generate-then-validate pipeline in which:

1. The Task sets the objectives that the User Agent brings to its interactions with the Agent

2. The Agent executes actions through a simulated environment

3. Trajectories are validated by the Judge Agent against predefined rubrics

4. The separation of execution and evaluation keeps the assessment independent of the component being assessed

5. Filtered Data is the final output and contains the results that passed validation

The architecture suggests a focus on reliable AI agent systems where outputs must meet explicit criteria before being considered valid results. The use of separate evaluation criteria (rubrics) implies potential applications in educational technology, automated grading systems, or quality control for AI-generated content.
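The evaluation flow (Rubrics → Judge Agent → Filtered Data) can be illustrated with a small filter. This is a sketch under stated assumptions: the diagram does not say how the Judge Agent scores a trajectory, so simple predicate functions stand in for it here, and `Rubric`, `judge`, `filter_trajectories`, and the step-string format are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# Assumption: a trajectory is represented as a list of step strings.
TrajectorySteps = List[str]


@dataclass
class Rubric:
    name: str
    check: Callable[[TrajectorySteps], bool]  # stand-in for a judge's verdict


def judge(trajectory: TrajectorySteps, rubrics: List[Rubric]) -> Dict[str, bool]:
    """Evaluate one trajectory against every rubric."""
    return {rubric.name: rubric.check(trajectory) for rubric in rubrics}


def filter_trajectories(
    trajectories: List[TrajectorySteps], rubrics: List[Rubric]
) -> List[TrajectorySteps]:
    """Keep only the trajectories that satisfy all rubrics."""
    return [t for t in trajectories if all(judge(t, rubrics).values())]


# Hypothetical rubrics for illustration.
rubrics = [
    Rubric("uses_a_tool", lambda steps: any(s.startswith("call:") for s in steps)),
    Rubric(
        "ends_with_answer",
        lambda steps: bool(steps) and steps[-1].startswith("answer:"),
    ),
]

trajectories = [
    ["call: search", "observation: ok", "answer: done"],  # passes both rubrics
    ["answer: guess"],                                     # never calls a tool
    ["call: search", "observation: error"],               # never answers
]

filtered = filter_trajectories(trajectories, rubrics)
print(len(filtered))
```

Requiring every rubric to pass is one possible policy; a scored or weighted threshold would fit the same structure.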