## Diagram: Comparison of Question Answering Methods Over Incomplete Knowledge Graphs

### Overview

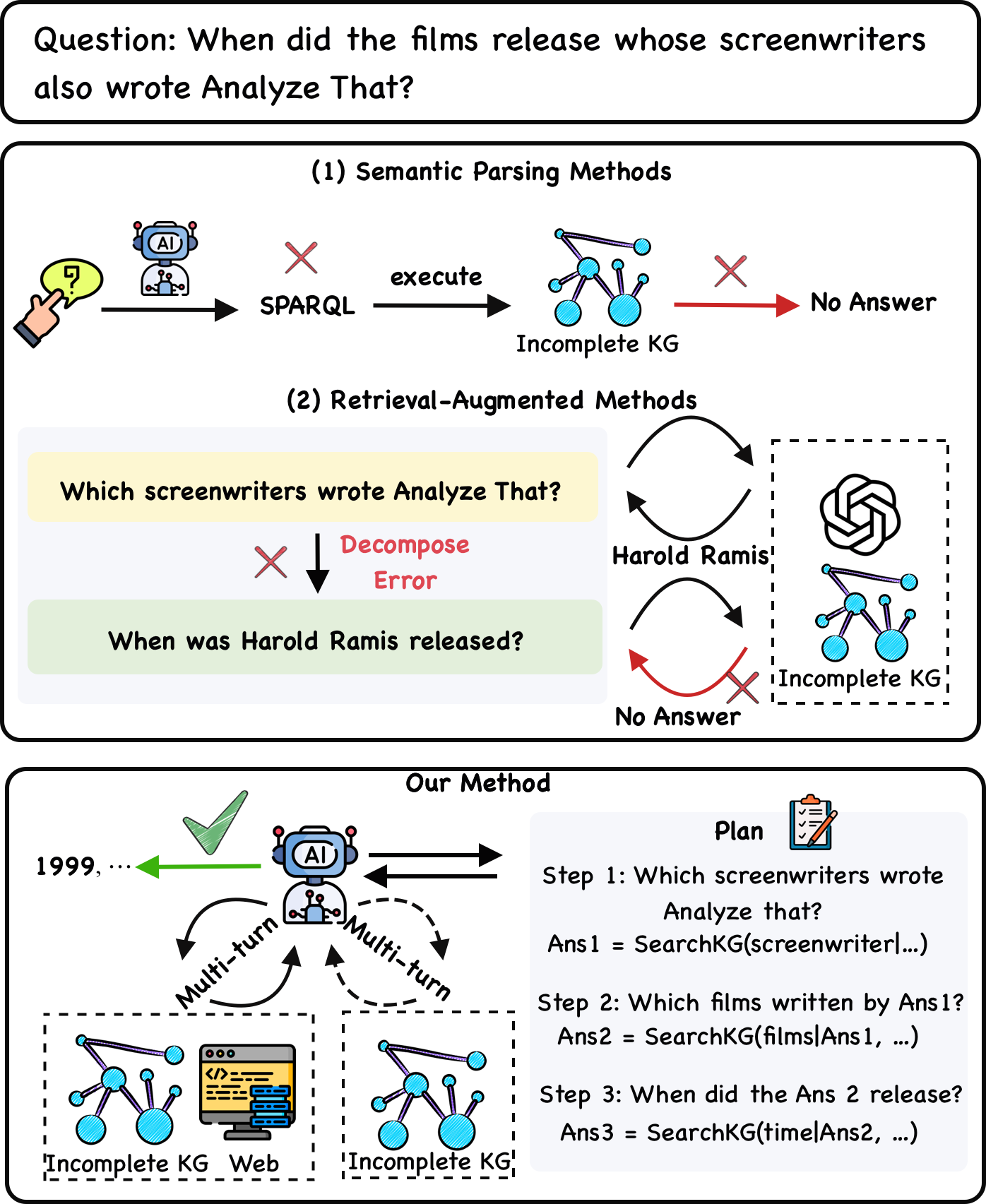

The image is a technical diagram comparing three different approaches to answering a complex, multi-hop natural language question using a knowledge graph (KG). The central example question is: **"When did the films release whose screenwriters also wrote Analyze That?"** The diagram illustrates the failure modes of two existing methods and proposes a new, successful method.

### Components/Axes

The diagram is divided into three main rectangular panels, stacked vertically.

1. **Top Panel (Question):** Contains the primary question in a rounded rectangle.

2. **Middle Panel (Existing Methods):** Contains two sub-sections:

   * **(1) Semantic Parsing Methods:** A linear flow from a user query to a failed outcome.

   * **(2) Retrieval-Augmented Methods:** A cyclical flow showing a decomposition error leading to a failed outcome.

3. **Bottom Panel (Our Method):** Depicts a successful, multi-turn interactive process with a planning component.

**Key Visual Components & Icons:**

* **User/Query Icon:** A hand pointing at a speech bubble with a question mark (top-left of Semantic Parsing).

* **AI Agent Icon:** A blue robot head labeled "AI". Appears in Semantic Parsing and Our Method.

* **Knowledge Graph (KG) Icon:** A network of connected blue nodes. Labeled "Incomplete KG" in all instances.

* **Red "X" Mark:** Indicates a failure, error, or dead end in the process.

* **Green Checkmark:** Indicates a successful outcome (in "Our Method").

* **Web/Database Icon:** A computer monitor with code and a database symbol (in "Our Method").

* **Plan Icon:** A clipboard with a checklist (in "Our Method").

* **Arrows:** Black arrows indicate process flow. Red arrows indicate a failed path. A green arrow indicates the successful output. Dashed arrows indicate multi-turn interaction.

### Detailed Analysis

#### **Section 1: Semantic Parsing Methods**

* **Flow:** User Query → AI Agent → **SPARQL** (marked with a red X above it) → execute → **Incomplete KG** → (red arrow) → **No Answer**.

* **Text Transcription:** "execute", "SPARQL", "Incomplete KG", "No Answer".

* **Interpretation:** This method attempts to directly translate the natural language question into a formal SPARQL query. The red "X" suggests this translation fails, or the generated query fails when executed against an incomplete knowledge graph, resulting in no answer.
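To make this failure mode concrete, here is a minimal sketch, assuming a toy in-memory triple store and a hand-written pattern matcher rather than a real SPARQL engine. The single conjunctive query mirrors what a semantic parser might emit for the question; the relation names, the entity `film_A`, and the year `year_A` are hypothetical placeholders, not data from the diagram. Removing one fact from the KG is enough to make the whole query return nothing.

```python
from typing import Dict, List, Optional, Set, Tuple

Triple = Tuple[str, str, str]
Binding = Dict[str, str]

# Toy, hypothetical facts; "film_A" and "year_A" are placeholders.
COMPLETE_KG: Set[Triple] = {
    ("Analyze That", "screenwriter", "Harold Ramis"),
    ("film_A", "screenwriter", "Harold Ramis"),
    ("film_A", "release_date", "year_A"),
}
# The same KG with a single release-date fact missing.
INCOMPLETE_KG: Set[Triple] = COMPLETE_KG - {("film_A", "release_date", "year_A")}

# One conjunctive query, roughly what a parser might emit as SPARQL:
# SELECT ?date WHERE { :Analyze_That :screenwriter ?writer .
#                      ?film :screenwriter ?writer . ?film :release_date ?date }
QUERY: List[Triple] = [
    ("Analyze That", "screenwriter", "?writer"),
    ("?film", "screenwriter", "?writer"),
    ("?film", "release_date", "?date"),
]

def match(pattern: Triple, triple: Triple, binding: Binding) -> Optional[Binding]:
    """Extend `binding` so that `pattern` equals `triple`, or return None."""
    new = dict(binding)
    for p, t in zip(pattern, triple):
        if p.startswith("?"):
            # Bind an unbound variable; reject a conflicting existing binding.
            if new.setdefault(p, t) != t:
                return None
        elif p != t:
            return None
    return new

def execute(query: List[Triple], kg: Set[Triple]) -> List[Binding]:
    """Evaluate triple patterns left to right, joining on shared variables."""
    bindings: List[Binding] = [{}]
    for pattern in query:
        extended: List[Binding] = []
        for b in bindings:
            for triple in kg:
                b2 = match(pattern, triple, b)
                if b2 is not None:
                    extended.append(b2)
        bindings = extended
    return bindings

print(execute(QUERY, COMPLETE_KG))    # one binding with ?date = 'year_A'
print(execute(QUERY, INCOMPLETE_KG))  # []
```

Because every pattern must match, one missing triple empties the result set; the query has no way to fall back to another source.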

#### **Section 2: Retrieval-Augmented Methods**

* **Flow:** This section shows a two-step decomposition that contains a logical error.

  1. **Step 1 (Yellow Box):** "Which screenwriters wrote Analyze That?"

  2. **Error:** A red "X" and the text **"Decompose Error"** point to the next step.

  3. **Step 2 (Green Box):** "When was Harold Ramis released?" (This is an incorrect sub-question; it should ask for films, not the release date of a person.)

  4. **Retrieval Loop:** The process then enters a loop between an LLM icon (OpenAI logo) and the "Incomplete KG". It retrieves "Harold Ramis" but then fails (red X) to find the answer to the malformed second question, leading to **"No Answer"**.

* **Text Transcription:** "Which screenwriters wrote Analyze That?", "Decompose Error", "When was Harold Ramis released?", "Harold Ramis", "Incomplete KG", "No Answer".

* **Interpretation:** This method tries to break the complex question into simpler sub-questions. However, it makes a critical decomposition error: instead of first retrieving the films the screenwriter wrote, it asks for the release date of the screenwriter himself. No amount of retrieval from the incomplete KG can answer that malformed sub-question, so the failure is unrecoverable.
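The decomposition error can be viewed as a broken relation path. In the minimal sketch below, the data and helper names (`objects`, `subjects`, `film_A`, `year_A`) are hypothetical: the correct decomposition follows three hops, while the malformed one drops the "films written by" hop and applies a film relation to a person. Even on a toy KG that does contain the needed fact, the malformed chain returns nothing.

```python
from typing import Set, Tuple

Triple = Tuple[str, str, str]

# Toy, hypothetical KG; here it *does* contain the release date.
KG: Set[Triple] = {
    ("Analyze That", "screenwriter", "Harold Ramis"),
    ("film_A", "screenwriter", "Harold Ramis"),
    ("film_A", "release_date", "year_A"),
}

def objects(kg: Set[Triple], subject: str, relation: str) -> Set[str]:
    """All o such that (subject, relation, o) is in the KG."""
    return {o for s, r, o in kg if s == subject and r == relation}

def subjects(kg: Set[Triple], relation: str, obj: str) -> Set[str]:
    """All s such that (s, relation, obj) is in the KG."""
    return {s for s, r, o in kg if r == relation and o == obj}

def correct_decomposition(kg: Set[Triple]) -> Set[str]:
    writers = objects(kg, "Analyze That", "screenwriter")                 # hop 1
    films = {f for w in writers for f in subjects(kg, "screenwriter", w)}  # hop 2
    return {d for f in films for d in objects(kg, f, "release_date")}      # hop 3

def malformed_decomposition(kg: Set[Triple]) -> Set[str]:
    writers = objects(kg, "Analyze That", "screenwriter")
    # "When was Harold Ramis released?": hop 2 is skipped and the film
    # relation release_date is applied directly to a person.
    return {d for w in writers for d in objects(kg, w, "release_date")}

print(correct_decomposition(KG))    # {'year_A'}
print(malformed_decomposition(KG))  # set()
```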

#### **Section 3: Our Method**

* **Flow:** This depicts a successful, iterative process.

  1. **Central Agent:** The AI agent icon is at the center, engaging in **"Multi-turn"** interaction (labeled on dashed arrows) with two resources: an **"Incomplete KG"** and the **"Web"**.

  2. **Planning Component (Right Side):** A "Plan" box outlines a correct three-step decomposition:

     * **Step 1:** "Which screenwriters wrote Analyze that?" → `Ans1 = SearchKG(screenwriter|...)`

     * **Step 2:** "Which films written by Ans1?" → `Ans2 = SearchKG(films|Ans1, ...)`

     * **Step 3:** "When did the Ans 2 release?" → `Ans3 = SearchKG(time|Ans2, ...)`

  3. **Successful Output:** A green arrow points from the AI agent to the final answer: **"1999, ..."** with a green checkmark above it.

* **Text Transcription:** "Our Method", "Plan", "Step 1: Which screenwriters wrote Analyze that?", "Ans1 = SearchKG(screenwriter|...)", "Step 2: Which films written by Ans1?", "Ans2 = SearchKG(films|Ans1, ...)", "Step 3: When did the Ans 2 release?", "Ans3 = SearchKG(time|Ans2, ...)", "Multi-turn", "Incomplete KG", "Web", "1999, ...".

* **Interpretation:** The proposed method uses a planning module to decompose the complex question into a logical sequence of sub-questions, each of which consumes the previous answer (Ans1 → Ans2 → Ans3). The AI agent then gathers information from both the incomplete KG and the web over multiple turns to answer each sub-question in order, ultimately producing the answer "1999, ...", a list of release years for the films found in Step 2.
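The plan-then-retrieve loop can be sketched as follows. This is an illustrative sketch, not the authors' implementation: `search_kg` is a hypothetical Python analogue of the diagram's `SearchKG`, `search_web` is a hypothetical stub backed by a canned dictionary, and the data (`film_A`, `year_A`) is a placeholder. Each step feeds its answers into the next; for every entity the KG is consulted first and the web only when the KG returns nothing.

```python
from typing import Dict, Iterable, List, Set, Tuple

Triple = Tuple[str, str, str]

# Toy, hypothetical KG: the release-date fact is missing.
INCOMPLETE_KG: Set[Triple] = {
    ("Analyze That", "screenwriter", "Harold Ramis"),
    ("film_A", "screenwriter", "Harold Ramis"),
}

# Hypothetical stand-in for web search: (entity, relation, inverse) -> answers.
WEB_SNIPPETS: Dict[Tuple[str, str, bool], Set[str]] = {
    ("film_A", "release_date", False): {"year_A"},
}

def search_kg(kg: Set[Triple], entity: str, relation: str, inverse: bool) -> Set[str]:
    """Follow `relation` out of `entity`, or into it when inverse=True."""
    if inverse:
        return {s for s, r, o in kg if r == relation and o == entity}
    return {o for s, r, o in kg if s == entity and r == relation}

def search_web(entity: str, relation: str, inverse: bool) -> Set[str]:
    """Hypothetical web lookup; a real system would search and extract."""
    return WEB_SNIPPETS.get((entity, relation, inverse), set())

# Each plan step: (sub-question, relation, follow relation in reverse?)
PLAN: List[Tuple[str, str, bool]] = [
    ("Which screenwriters wrote Analyze That?", "screenwriter", False),  # Ans1
    ("Which films were written by Ans1?", "screenwriter", True),         # Ans2
    ("When were the Ans2 films released?", "release_date", False),      # Ans3
]

def run_plan(plan: List[Tuple[str, str, bool]], seeds: Iterable[str],
             kg: Set[Triple]) -> Tuple[Set[str], List[Tuple[int, str, str]]]:
    """Execute plan steps in order; trace records the last source consulted."""
    answers: Set[str] = set(seeds)
    trace: List[Tuple[int, str, str]] = []
    for step, (_question, relation, inverse) in enumerate(plan, start=1):
        next_answers: Set[str] = set()
        for entity in sorted(answers):
            found = search_kg(kg, entity, relation, inverse)
            source = "kg"
            if not found:  # KG gap: fall back to the web for this entity
                found = search_web(entity, relation, inverse)
                source = "web"
            trace.append((step, entity, source))
            next_answers |= found
        answers = next_answers
    return answers, trace

answers, trace = run_plan(PLAN, {"Analyze That"}, INCOMPLETE_KG)
print(answers)  # {'year_A'}
```

The per-entity fallback is one simple design choice; an agent could instead decide at each turn which source to query, which is closer to the multi-turn interaction the diagram depicts.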

### Key Observations

1. **Consistent Failure Symbolism:** The red "X" is used uniformly to denote points of failure across different methods.

2. **Consistent Icon Placement:** The "Incomplete KG" icon is placed at the point of data retrieval in all three methods, and the "AI" agent appears as the central processor in the first and third methods.

3. **Critical Error Highlighted:** The "Decompose Error" in the Retrieval-Augmented method is explicitly called out in red text, identifying it as the root cause of failure for that approach.

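4. **Answer Specificity:** The successful output "1999, ..." implies the answer is a list of release years, one per film retrieved in Step 2, with "1999" listed first. It is unlikely to be the year of *Analyze That* itself, which was released in 2002; a plausible match is *Analyze This* (1999), which Harold Ramis co-wrote.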

### Interpretation

This diagram is an argument for a specific architectural approach to complex question answering over imperfect data. It demonstrates that:

* **Direct translation (Semantic Parsing)** is brittle: a single formal query returns nothing when the KG lacks any fact it depends on.

* **Naive decomposition (Retrieval-Augmented)** can introduce logical errors that derail the entire process.

* **The proposed solution** combines **explicit, correct planning** with **interactive, multi-source retrieval**. The key innovation is the agent's ability to iteratively consult both a structured (but incomplete) KG and the unstructured web, guided by a coherent plan, to compensate for the KG's missing facts. The diagram suggests that robustness in QA systems comes not from a perfect knowledge base but from a resilient process that can plan, decompose, and gather evidence from multiple sources dynamically. The final answer "1999, ..." illustrates the outcome this process is designed to reach.