# Technical Diagram Analysis

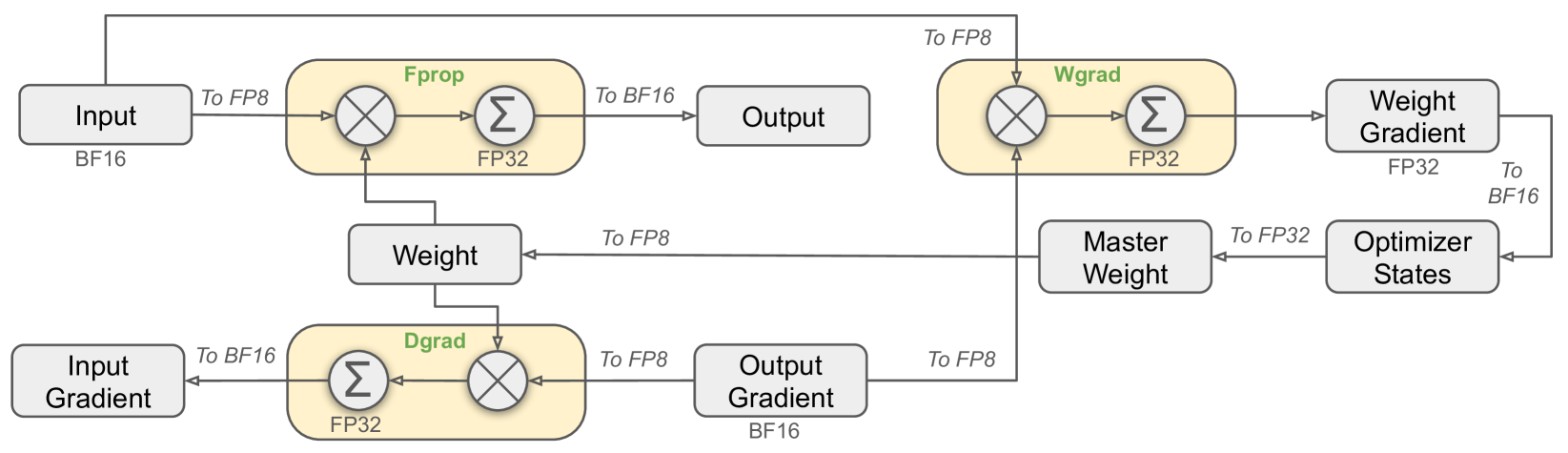

## Components and Data Flow

1. **Input**

- Data Type: `BF16`

- Flow: `To FP8`

2. **Fprop (Forward Propagation)**

- Data Type: `FP32`

- Operations:

  - `Σ` (Summation)

  - `⊗` (Element-wise multiplication)

- Connections:

  - `To BF16` (Output)

  - `To FP8` (Weight)

3. **Output**

- Data Type: `BF16`

- Source: Fprop

4. **Weight**

- Data Type: `FP32`

- Connections:

  - `To FP8` (Wgrad)

  - `To FP32` (Master Weight)

5. **Wgrad (Weight Gradient)**

- Data Type: `FP32`

- Operations: `Σ`

- Connections:

  - `To FP8` (Master Weight)

  - `To BF16` (Optimizer States)

6. **Master Weight**

- Data Type: `FP32`

- Connections:

  - `To FP32` (Optimizer States)

7. **Optimizer States**

- Data Type: `BF16`

- Source: Master Weight

8. **Input Gradient**

- Data Type: `BF16`

- Flow: `To FP32`

9. **Dgrad (Data Gradient)**

- Data Type: `FP32`

- Operations:

  - `Σ` (Summation)

  - `⊗` (Element-wise multiplication)

- Connections:

  - `To FP8` (Output Gradient)

10. **Output Gradient**

- Data Type: `BF16`

- Source: Dgrad
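The component list above can also be held as plain data, which makes it easy to tally the cast labels or check that every named connection target is itself a listed component. This is a minimal sketch: the names `COMPONENTS`, `cast_label_counts`, and `dangling_targets` are hypothetical, and `None` marks a flow for which the list names no target.

```python
from collections import Counter

# (component, declared data type, [(cast label, target named in the list), ...])
# Copied from the numbered list; None means the list names no target.
COMPONENTS = [
    ("Input", "BF16", [("To FP8", None)]),
    ("Fprop", "FP32", [("To BF16", "Output"), ("To FP8", "Weight")]),
    ("Output", "BF16", []),
    ("Weight", "FP32", [("To FP8", "Wgrad"), ("To FP32", "Master Weight")]),
    ("Wgrad", "FP32", [("To FP8", "Master Weight"), ("To BF16", "Optimizer States")]),
    ("Master Weight", "FP32", [("To FP32", "Optimizer States")]),
    ("Optimizer States", "BF16", []),
    ("Input Gradient", "BF16", [("To FP32", None)]),
    ("Dgrad", "FP32", [("To FP8", "Output Gradient")]),
    ("Output Gradient", "BF16", []),
]


def cast_label_counts(components):
    """Count how often each cast label appears across all components."""
    return Counter(label for _, _, edges in components for label, _ in edges)


def dangling_targets(components):
    """Return (source, target) pairs whose target is not a listed component."""
    names = {name for name, _, _ in components}
    return [
        (src, tgt)
        for src, _, edges in components
        for _, tgt in edges
        if tgt is not None and tgt not in names
    ]


if __name__ == "__main__":
    print(dict(cast_label_counts(COMPONENTS)))
    print(dangling_targets(COMPONENTS))
```

Keeping the edges as `(label, target)` tuples mirrors how the list pairs each cast label with a parenthesised target.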

## Key Observations

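- **Precision Handling**:

  - `BF16` (Brain Floating Point, 16-bit) is used for Input, Output, Optimizer States, and both gradient tensors (Input Gradient, Output Gradient).

  - `FP32` (single-precision floating point) is used for the compute blocks (Fprop, Wgrad, Dgrad) and for Weight and Master Weight.

  - `FP8` appears only as a cast target on arrows (`To FP8`); no component lists it as its own data type.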

- **Data Flow Paths**:

  - Forward pass: `Input (BF16) → FP8 → Fprop (FP32) → Output (BF16)`.

  - Backward pass: `Input Gradient (BF16) → FP32 → Dgrad (FP32) → Output Gradient (BF16)`.

  - Weight updates: `Weight (FP32) → FP8 → Wgrad (FP32) → Master Weight (FP32) → Optimizer States (BF16)`.
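To see where each path narrows or widens precision, the three paths can be written as data and walked hop by hop. This is a minimal sketch: `PATHS`, `BITS`, and `precision_changes` are hypothetical names, and `(cast)` is a placeholder for the intermediate step that the paths give only as a data type.

```python
# Each path is a list of (stage, data type) pairs copied from the bullets above.
# "(cast)" is a placeholder name for the unnamed intermediate conversion.
PATHS = {
    "forward": [
        ("Input", "BF16"), ("(cast)", "FP8"), ("Fprop", "FP32"), ("Output", "BF16"),
    ],
    "backward": [
        ("Input Gradient", "BF16"), ("(cast)", "FP32"),
        ("Dgrad", "FP32"), ("Output Gradient", "BF16"),
    ],
    "weight_update": [
        ("Weight", "FP32"), ("(cast)", "FP8"), ("Wgrad", "FP32"),
        ("Master Weight", "FP32"), ("Optimizer States", "BF16"),
    ],
}

# Storage width of each format in bits.
BITS = {"FP8": 8, "BF16": 16, "FP32": 32}


def precision_changes(path):
    """Classify every hop of a path as 'narrow', 'widen', or 'same'."""
    kinds = []
    for (_, src_type), (_, dst_type) in zip(path, path[1:]):
        if BITS[dst_type] < BITS[src_type]:
            kinds.append("narrow")
        elif BITS[dst_type] > BITS[src_type]:
            kinds.append("widen")
        else:
            kinds.append("same")
    return kinds


if __name__ == "__main__":
    for name, path in PATHS.items():
        print(name, precision_changes(path))
```

The forward pass, for instance, narrows to `FP8`, widens to `FP32` inside Fprop, then narrows again to `BF16` at the output.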

## Diagram Structure

- **Blocks**:

  - Rectangular nodes represent computational units (e.g., Fprop, Wgrad).

  - Oval nodes represent data types (e.g., BF16, FP32).

- **Arrows**:

  - Indicate data flow direction.

  - Labels specify precision (`To FP8`, `To BF16`, etc.).

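- **Operations**:

  - `Σ`: Summation; it appears in Fprop, Wgrad, and Dgrad, all of which are typed `FP32`.

  - `⊗`: Multiplication of a block's two operands (e.g., input × weights); in Fprop and Dgrad it is paired with `Σ`, i.e., a multiply-accumulate.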
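How `⊗` and `Σ` combine with the cast labels in an Fprop-style block can be emulated in pure Python. This is a rough sketch under stated assumptions, not the diagram's actual implementation: all function names are hypothetical, the `FP8` stand-in only keeps 3 explicit mantissa bits (the diagram does not name an FP8 variant), and exponent range, scaling, NaN, and infinity are not modelled.

```python
import struct


def _f32_bits(x: float) -> int:
    """Bit pattern of x after rounding to float32."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def _bits_f32(bits: int) -> float:
    """Float value of a 32-bit pattern."""
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFFFFFF))[0]


def round_mantissa(x: float, mantissa_bits: int) -> float:
    """Round x to float32, then keep `mantissa_bits` explicit mantissa bits
    (round to nearest, ties to even). Finite inputs only."""
    shift = 23 - mantissa_bits
    bits = _f32_bits(x)
    if shift > 0:
        bits += (1 << (shift - 1)) - 1 + ((bits >> shift) & 1)
        bits &= ~((1 << shift) - 1)
    return _bits_f32(bits)


def to_fp32(x: float) -> float:
    return round_mantissa(x, 23)


def to_bf16(x: float) -> float:
    return round_mantissa(x, 7)  # BF16 keeps 7 explicit mantissa bits


def to_fp8_like(x: float) -> float:
    # Assumption: 3 mantissa bits; FP8 exponent range is NOT emulated.
    return round_mantissa(x, 3)


def fprop_dot(inputs, weights):
    """One output element: low-precision operands, FP32 accumulation."""
    acc = 0.0
    for a, w in zip(inputs, weights):
        a8 = to_fp8_like(to_bf16(a))  # Input (BF16), then `To FP8`
        w8 = to_fp8_like(w)           # Weight, then `To FP8`
        acc = to_fp32(acc + to_fp32(a8 * w8))  # multiply, then sum in FP32
    return to_bf16(acc)               # `To BF16` for the Output


if __name__ == "__main__":
    print(to_fp8_like(1.1))
    print(fprop_dot([1.0, 2.0], [0.5, 0.25]))
```

For example, `to_fp8_like(1.1)` gives `1.125`, the nearest value with 3 mantissa bits. `fprop_dot([1.0, 2.0], [0.5, 0.25])` returns `1.0`, because every value involved is exactly representable.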

## Missing Elements

- No explicit legend or colorbar present.

- No numerical data or statistical trends (pure architectural diagram).