# Technical Data Extraction: Comparison of SOTA Bounds for Loss Functions

This document provides a comprehensive extraction of the data contained in the provided technical table, which compares algorithmic upper bounds (Previous and New State of the Art) against theoretical Lower Bounds for various loss function categories in a machine learning context.

## 1. Table Structure and Metadata

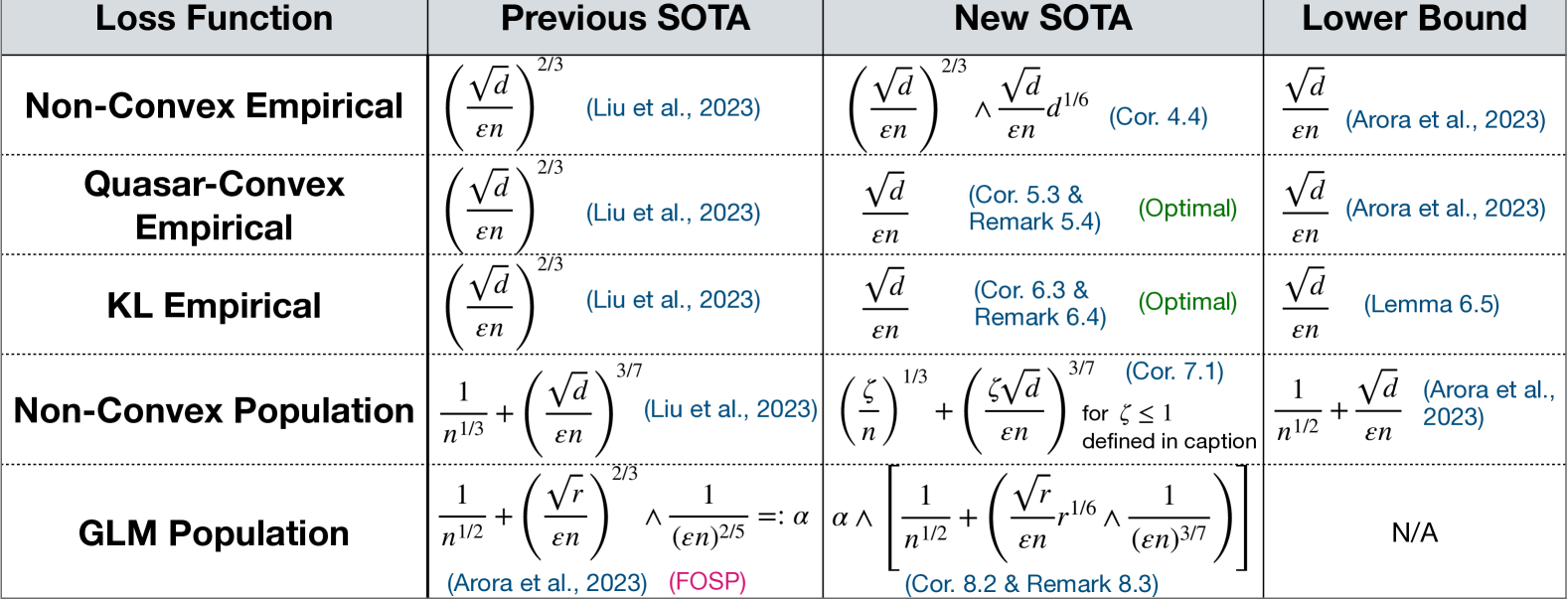

The image is a structured data table consisting of a header row and five data rows.

* **Language:** English

* **Primary Variables:** $d$ (dimension), $\varepsilon$ (privacy or error parameter), $n$ (sample size), $\zeta$ (defined in caption), $r$ (rank).

* **Color Coding:**

* **Blue Text:** Citations and internal references (e.g., Corollaries, Remarks).

* **Green Text:** Status indicators (e.g., "(Optimal)").

* **Pink Text:** Specific algorithm/methodology labels (e.g., "(FOSP)").

---

## 2. Data Content Extraction

| Loss Function | Previous SOTA | New SOTA | Lower Bound |

| :--- | :--- | :--- | :--- |

| **Non-Convex Empirical** | $\left( \frac{\sqrt{d}}{\varepsilon n} \right)^{2/3}$ <br> (Liu et al., 2023) | $\left( \frac{\sqrt{d}}{\varepsilon n} \right)^{2/3} \wedge \frac{\sqrt{d}}{\varepsilon n} d^{1/6}$ <br> (Cor. 4.4) | $\frac{\sqrt{d}}{\varepsilon n}$ <br> (Arora et al., 2023) |

| **Quasar-Convex Empirical** | $\left( \frac{\sqrt{d}}{\varepsilon n} \right)^{2/3}$ <br> (Liu et al., 2023) | $\frac{\sqrt{d}}{\varepsilon n}$ <br> (Cor. 5.3 & Remark 5.4) <br> **(Optimal)** | $\frac{\sqrt{d}}{\varepsilon n}$ <br> (Arora et al., 2023) |

| **KL Empirical** | $\left( \frac{\sqrt{d}}{\varepsilon n} \right)^{2/3}$ <br> (Liu et al., 2023) | $\frac{\sqrt{d}}{\varepsilon n}$ <br> (Cor. 6.3 & Remark 6.4) <br> **(Optimal)** | $\frac{\sqrt{d}}{\varepsilon n}$ <br> (Lemma 6.5) |

| **Non-Convex Population** | $\frac{1}{n^{1/3}} + \left( \frac{\sqrt{d}}{\varepsilon n} \right)^{3/7}$ <br> (Liu et al., 2023) | $\left( \frac{\zeta}{n} \right)^{1/3} + \left( \frac{\zeta \sqrt{d}}{\varepsilon n} \right)^{3/7}$ <br> (Cor. 7.1) <br> for $\zeta \leq 1$ defined in caption | $\frac{1}{n^{1/2}} + \frac{\sqrt{d}}{\varepsilon n}$ <br> (Arora et al., 2023) |

| **GLM Population** | $\frac{1}{n^{1/2}} + \left( \frac{\sqrt{r}}{\varepsilon n} \right)^{2/3} \wedge \frac{1}{(\varepsilon n)^{2/5}} =: \alpha$ <br> (Arora et al., 2023) (FOSP) | $\alpha \wedge \left[ \frac{1}{n^{1/2}} + \left( \frac{\sqrt{r}}{\varepsilon n} r^{1/6} \wedge \frac{1}{(\varepsilon n)^{3/7}} \right) \right]$ <br> (Cor. 8.2 & Remark 8.3) | N/A |

---

## 3. Component Analysis and Trends

### Row-by-Row Trend Verification:

1. **Non-Convex Empirical:** The New SOTA introduces a minimum operator ($\wedge$) with a term involving $d^{1/6}$, showing an improvement over the previous $2/3$ power law in certain regimes, though it has not yet reached the Lower Bound.

2. **Quasar-Convex Empirical:** The New SOTA improves the exponent from $2/3$ to $1$ (linear in $\frac{\sqrt{d}}{\varepsilon n}$), matching the Lower Bound exactly. This is explicitly labeled as **Optimal**.

3. **KL Empirical:** Similar to Quasar-Convex, the New SOTA improves the exponent to match the Lower Bound, achieving **Optimality**.

4. **Non-Convex Population:** The New SOTA introduces a parameter $\zeta$ (where $\zeta \leq 1$). This suggests a refinement of the bound based on specific properties of the distribution or function, moving closer to the $n^{-1/2}$ dependence seen in the Lower Bound.

5. **GLM Population:** The New SOTA provides a more complex bound that is the minimum of the previous SOTA ($\alpha$) and a new expression featuring $r^{1/6}$ and a $3/7$ exponent. This indicates a tighter bound for Generalized Linear Models (GLM) based on rank $r$.

### Key Observations:

* **Convergence to Optimality:** For Empirical Quasar-Convex and KL loss functions, the gap between the algorithmic upper bound and the theoretical lower bound has been closed.

* **Complexity of Population Bounds:** Population bounds (Non-Convex and GLM) are significantly more complex than Empirical bounds, involving additive terms related to the sample size $n$ (e.g., $n^{-1/3}$ or $n^{-1/2}$).

* **Notation:** The symbol $\wedge$ is used as a "minimum" operator between two mathematical expressions.