## Heatmap and Bar Chart Comparison: Type I Error, Power Estimation, and Efficiency Metrics

### Overview

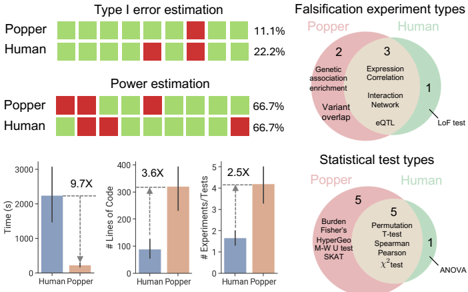

The image compares the performance of "Popper" and "Human" across three analytical dimensions: Type I error rates, power estimation accuracy, and computational efficiency. It includes heatmaps for error/power metrics, bar charts for time/code/experiment ratios, and Venn diagrams illustrating methodological overlaps in falsification and statistical testing.

---

### Components/Axes

1. **Top-Left Heatmaps**:
    - **Type I Error Estimation**:
        - X-axis: 10 experimental conditions (labeled 1–10).
        - Y-axis: Two groups: "Popper" (green) and "Human" (red).
        - Values: Popper (11.1% average), Human (22.2% average).
    - **Power Estimation**:
        - Same axes as Type I error.
        - Values: Both groups show 66.7% power across all conditions.
2. **Bar Charts (Bottom Section)**:
    - **X-axis**: Two categories: "Human" (blue) and "Popper" (orange).
    - **Y-axes**:
        - Time (s): Human = ~2,200 s, Popper = ~200 s (Popper 9.7× faster).
        - Lines of Code: Human = ~80, Popper = ~300 (Popper 3.6× more code).
        - # Experiments/Tests: Human = ~1.5, Popper = ~4 (Popper 2.5× more tests).
3. **Venn Diagrams (Right Section)**:
    - **Falsification Experiment Types**:
        - Overlap (3): "Expression Correlation," "Interaction Network," "eQTL."
        - Popper-only (2): "Genetic association enrichment," "Variant overlap."
        - Human-only (1): "LoF test."
    - **Statistical Test Types**:
        - Overlap (5): "Permutation T-test," "Spearman," "Pearson," "χ² test," "ANOVA."
        - Popper-only (5): "Burden," "Fisher’s," "HyperGeo," "M-W U test," "SKAT."

---

### Detailed Analysis

1. **Type I Error & Power**:
    - Popper demonstrates **lower Type I error** (11.1% vs. 22.2%) and matches Human in power (66.7%).
    - Power is identical for both groups (66.7%) in every condition.
2. **Efficiency Metrics**:
    - **Time**: Popper is **9.7× faster** (~200 s vs. ~2,200 s).
    - **Code Volume**: Popper uses **3.6× more lines of code** (~300 vs. ~80).
    - **Experiments/Tests**: Popper conducts **2.5× more tests** (~4 vs. ~1.5).
3. **Methodological Overlap**:
    - **Falsification**: 3 shared experiment types (eQTL, Interaction Network, Expression Correlation), 2 Popper-only, and 1 Human-only.
    - **Statistical Tests**: 5 shared tests (Permutation T-test, Spearman, Pearson, χ² test, ANOVA) and 5 Popper-only tests (Burden, Fisher’s, HyperGeo, M-W U test, SKAT); no Human-only tests are listed.
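The bar values quoted above are approximate readings, so the ratios they imply do not exactly match the ratio labels printed on the figure. The short check below is a sketch of that arithmetic; the dictionary layout and the `ratio` helper are illustrative, and the inputs are the rounded readings from this description, not the underlying data.

```python
# Approximate bar heights as read from the chart (rounded, not exact data).
readings = {
    "time_s": {"human": 2200, "popper": 200},
    "lines_of_code": {"human": 80, "popper": 300},
    "num_tests": {"human": 1.5, "popper": 4},
}
# Ratio labels as printed on the figure.
labels = {"time_s": 9.7, "lines_of_code": 3.6, "num_tests": 2.5}


def ratio(metric):
    """Larger reading divided by smaller reading, so the result is >= 1."""
    human = readings[metric]["human"]
    popper = readings[metric]["popper"]
    return max(human, popper) / min(human, popper)


for metric in readings:
    implied = ratio(metric)
    gap = abs(implied - labels[metric]) / labels[metric]
    print(f"{metric}: {implied:.2f}x from readings, "
          f"{labels[metric]}x on figure (relative gap {gap:.0%})")
```

The readings imply ratios of roughly 11.0×, 3.75×, and 2.67×, so the labels printed on the figure (9.7×, 3.6×, 2.5×) are the more reliable numbers and the bar readings should be treated as rough.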

---

### Key Observations

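- **Trade-offs**: Popper excels in speed and Type I error control but writes more code and runs more tests.

- **Human Limitations**: Humans show a higher Type I error rate (22.2% vs. 11.1%) and take longer, but write less code and run fewer tests.

- **Methodological Divergence**: Popper uses five statistical tests that Humans do not (e.g., SKAT), whereas every statistical test listed for Humans (e.g., ANOVA) is also used by Popper. "LoF test" is the only Human-only falsification experiment.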

---

### Interpretation

The data suggest that **Popper is optimized for high-throughput analysis with tighter Type I error control**, at equal power, but at the cost of more code. Humans are slower and have a higher Type I error rate, but they write less code and draw on a smaller set of statistical tests, all of which Popper also uses. The overlap in falsification experiments and statistical tests indicates shared foundational approaches, while Popper’s unique tests (e.g., SKAT) may address more specialized scenarios. Popper’s 9.7× speed advantage could enable large-scale studies, though the larger volume of generated code might limit accessibility. The higher Type I error in Humans is consistent with automated tools being valuable for tasks where false positives are costly.
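For readers less familiar with the two heatmap metrics, the sketch below shows how Type I error and power are conventionally estimated from binary reject decisions. The decision lists are hypothetical and are not data from the figure; the counts (1 of 9 and 6 of 9) were chosen only because they reproduce the reported 11.1% and 66.7%, and the `rejection_rate` helper is illustrative.

```python
def rejection_rate(rejections):
    """Fraction of trials in which the null hypothesis was rejected."""
    if not rejections:
        raise ValueError("need at least one trial")
    return sum(rejections) / len(rejections)


# Hypothetical decisions (True = null rejected); NOT data from the figure.
decisions_under_null = [True] + [False] * 8          # null is actually true
decisions_under_alternative = [True] * 6 + [False] * 3  # null is actually false

# Type I error: share of true-null cases that were (wrongly) rejected.
type_i_error = rejection_rate(decisions_under_null)
# Power: share of false-null cases that were (correctly) rejected.
power = rejection_rate(decisions_under_alternative)

print(f"Type I error: {type_i_error:.1%}, power: {power:.1%}")
```

With these hypothetical counts the script prints `Type I error: 11.1%, power: 66.7%`. The same function applied to two rejections out of nine true-null cases gives 22.2%.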