
EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Chart Type: Multiple Line Graphs of Attention Weights

### Overview

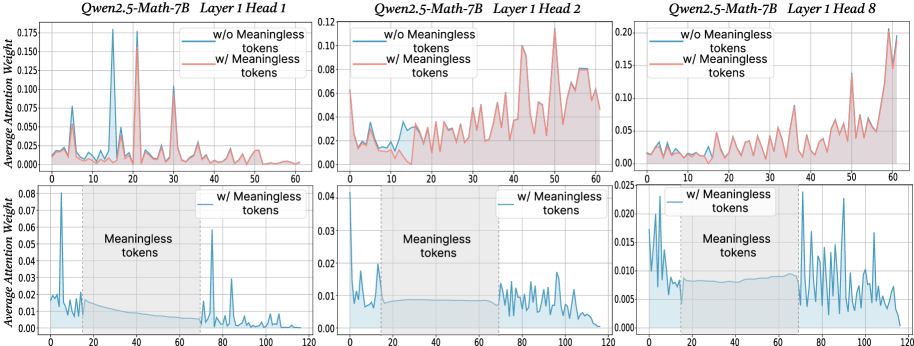

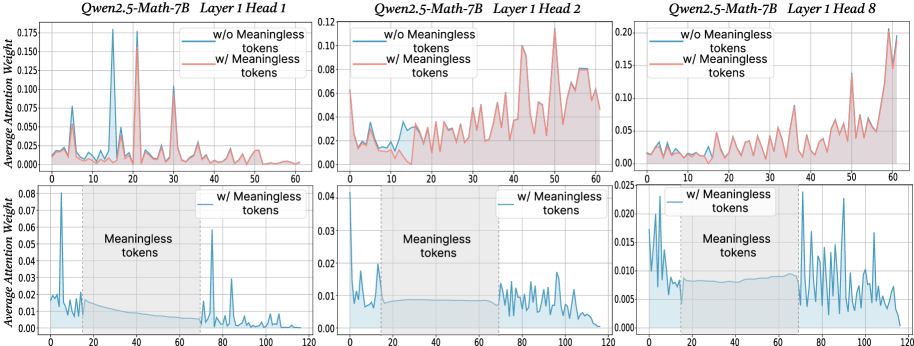

The image presents six line graphs arranged in a 2x3 grid. Each graph plots the average attention weight of the Qwen2.5-Math-7B model across token positions, with and without "meaningless" tokens in the input. The columns correspond to three attention heads of Layer 1 (Heads 1, 2, and 8), and each column contains two graphs covering different token ranges (0-60 on top, 0-120 below).
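The descriptions never state how "Average Attention Weight" is computed. One plausible reading, assumed here and not confirmed by the figure, is that for a given layer and head each token position's value is the attention it receives, averaged over the query positions allowed to attend to it under the causal mask of a decoder-only model such as Qwen2.5-Math-7B. The minimal pure-Python sketch below shows that computation on a toy score matrix; the function names `causal_softmax` and `average_attention_received` are hypothetical.

```python
import math

def causal_softmax(scores):
    """Row-wise softmax with a causal mask: query i sees keys 0..i only."""
    probs = []
    for i, row in enumerate(scores):
        visible = row[: i + 1]
        m = max(visible)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # masked (future) keys get exactly zero weight
        probs.append([e / total for e in exps] + [0.0] * (len(row) - i - 1))
    return probs

def average_attention_received(probs):
    """Mean weight each key position k receives, averaged over the
    queries q >= k that are allowed to attend to it."""
    n = len(probs)
    return [sum(probs[q][k] for q in range(k, n)) / (n - k) for k in range(n)]

# Toy example: all-zero scores give uniform attention over the visible keys.
scores = [[0.0] * 4 for _ in range(4)]
probs = causal_softmax(scores)
avg = average_attention_received(probs)
```

Dividing by `n - k` (the number of queries that can see key `k`) rather than by `n` is a design choice: it avoids penalizing late positions, which fewer queries can see, but it makes the last few positions noisier. If the real weights come from Hugging Face `transformers`, calling the model with `output_attentions=True` returns per-layer attention tensors of shape (batch, heads, query, key) that could replace the toy `probs`; whether the figure was produced this way is not stated.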

### Components/Axes

**General Components:**

* **Titles:** Each of the three columns has a title indicating the model, layer, and head: "Qwen2.5-Math-7B Layer 1 Head 1", "Qwen2.5-Math-7B Layer 1 Head 2", and "Qwen2.5-Math-7B Layer 1 Head 8".

* **Legends:** Each of the top three graphs has a legend in the top-right corner with two data series: "w/o Meaningless tokens" (blue line) and "w/ Meaningless tokens" (red line). The bottom three graphs show only the "w/ Meaningless tokens" series (blue line).

* **Grid:** All graphs have a light gray grid in the background.

**Axes (Top Row):**

* **Y-axis:** "Average Attention Weight" with a scale from 0.000 to 0.175 (Head 1), 0.00 to 0.12 (Head 2), and 0.00 to 0.20 (Head 8).

* **X-axis:** Token index, ranging from 0 to 60.

**Axes (Bottom Row):**

* **Y-axis:** "Average Attention Weight" with a scale from 0.00 to 0.08 (Head 1), 0.00 to 0.04 (Head 2), and 0.00 to 0.025 (Head 8).

* **X-axis:** Token index, ranging from 0 to 120.

* **Shaded Region:** A shaded light-blue region is present from approximately token 20 to token 70, labeled "Meaningless tokens". This region is bounded by vertical dotted lines at x=20 and x=70.

### Detailed Analysis

**Qwen2.5-Math-7B Layer 1 Head 1:**

* **Top Graph (Tokens 0-60):**

* **w/o Meaningless tokens (blue):** The line starts around 0.02, peaks sharply around token 5 (approx. 0.07), then fluctuates between 0.01 and 0.03.

* **w/ Meaningless tokens (red):** The line starts around 0.02, peaks sharply around token 20 (approx. 0.175), then fluctuates between 0.01 and 0.03.

* **Bottom Graph (Tokens 0-120):**

* **w/ Meaningless tokens (blue):** The line starts high (approx. 0.08), drops sharply to around 0.01 by token 20, remains relatively flat within the "Meaningless tokens" region, and then fluctuates between 0.00 and 0.01 for the remaining tokens.

**Qwen2.5-Math-7B Layer 1 Head 2:**

* **Top Graph (Tokens 0-60):**

* **w/o Meaningless tokens (blue):** The line fluctuates between 0.01 and 0.03.

* **w/ Meaningless tokens (red):** The line fluctuates between 0.01 and 0.10, with a peak around token 60 (approx. 0.12).

* **Bottom Graph (Tokens 0-120):**

* **w/ Meaningless tokens (blue):** The line starts high (approx. 0.04), drops sharply to around 0.01 by token 20, remains relatively flat within the "Meaningless tokens" region, and then fluctuates between 0.00 and 0.01 for the remaining tokens.

**Qwen2.5-Math-7B Layer 1 Head 8:**

* **Top Graph (Tokens 0-60):**

* **w/o Meaningless tokens (blue):** The line fluctuates between 0.01 and 0.04.

* **w/ Meaningless tokens (red):** The line fluctuates between 0.01 and 0.10, with a sharp peak around token 60 (approx. 0.20).

* **Bottom Graph (Tokens 0-120):**

* **w/ Meaningless tokens (blue):** The line starts high (approx. 0.02), drops sharply to around 0.005 by token 20, remains relatively flat within the "Meaningless tokens" region, and then fluctuates between 0.005 and 0.015 for the remaining tokens.

### Key Observations

* In the top graphs, the "w/ Meaningless tokens" series (red) generally shows higher attention weights than the "w/o Meaningless tokens" series (blue).

* In the bottom graphs, the attention weight for "w/ Meaningless tokens" (blue) drops significantly after the initial tokens and remains low within the "Meaningless tokens" region (tokens 20-70).

* Peak attention weights in the top graphs differ by head: about 0.175 for Head 1, 0.12 for Head 2, and 0.20 for Head 8, all reached by the "w/ Meaningless tokens" series.

* The bottom graphs show a clear distinction in attention weight before, during, and after the "Meaningless tokens" region.
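The before/during/after contrast in the last observation can be checked numerically by averaging the plotted series over the three segments. The sketch assumes the span is half-open, [20, 70), matching the dotted lines at x=20 and x=70; `segment_means` is a hypothetical helper, and the series is synthetic, loosely shaped like the bottom-row description rather than read from the figure.

```python
def segment_means(weights, start, end):
    """Mean of a per-position series before, inside, and after the
    half-open span [start, end) occupied by the inserted tokens."""
    def mean(xs):
        return sum(xs) / len(xs) if xs else float("nan")  # empty segment -> NaN

    return {
        "before": mean(weights[:start]),
        "during": mean(weights[start:end]),
        "after": mean(weights[end:]),
    }

# Synthetic series shaped like the bottom-row description (values are made up).
series = [0.08] * 20 + [0.01] * 50 + [0.02] * 50
means = segment_means(series, 20, 70)
```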

### Interpretation

The graphs illustrate how the presence of "meaningless" tokens affects the attention weights of the Qwen2.5-Math-7B model. The higher attention weights observed in the top graphs when "meaningless" tokens are included suggest that the model may be allocating more attention to these tokens, especially around specific token indices (e.g., token 20 for Head 1, token 60 for Heads 2 and 8).

The bottom graphs provide further insight into the model's behavior. The sharp drop in attention weight after the initial tokens, followed by a sustained low attention weight within the "Meaningless tokens" region, indicates that the model may be effectively ignoring these tokens. The subsequent fluctuations in attention weight after the "Meaningless tokens" region suggest that the model is re-engaging with the remaining tokens.

The differences in attention weights among Heads 1, 2, and 8 suggest that different attention heads may be specialized for processing different types of tokens or features within the input sequence.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Line Chart: Attention Weights with and without Meaningless Tokens

### Overview

The image presents three sets of line charts, each comparing the average attention weight with and without "meaningless tokens" for different layers and heads of the Qwen2.5-Math-7B model. Each set contains two charts: one showing attention weights for the entire sequence and another focusing on the region where meaningless tokens are present.

### Components/Axes

Each chart shares the following components:

* **X-axis:** "Tokens" - ranging from 0 to about 60 or about 120, depending on the chart.

* **Y-axis:** "Average Attention Weight" - ranging from 0 to approximately 0.20.

* **Legend:**

* "w/o Meaningless tokens" - represented by a reddish-orange line.

* "w/ Meaningless tokens" - represented by a teal line.

* **Titles:** Each chart is titled with "Qwen2.5-Math-7B", "Layer [number]", and "Head [number]".

* **Shaded Region:** A light green shaded region indicates the presence of "Meaningless tokens" in the lower charts.

The three sets of charts correspond to:

1. Layer 1, Head 1

2. Layer 1, Head 2

3. Layer 1, Head 8

### Detailed Analysis or Content Details

**Chart 1: Layer 1, Head 1**

* **Top Chart:** The reddish-orange line ("w/o Meaningless tokens") exhibits several peaks and valleys, fluctuating between approximately 0.01 and 0.15. The teal line ("w/ Meaningless tokens") shows a generally lower attention weight, mostly below 0.05, with some peaks around 0.08.

* **Bottom Chart:** The teal line ("w/ Meaningless tokens") shows a slight increase in attention weight within the shaded region (tokens 20-60), peaking around 0.06, then decreasing again.

**Chart 2: Layer 1, Head 2**

* **Top Chart:** The reddish-orange line ("w/o Meaningless tokens") fluctuates between approximately 0.01 and 0.12. The teal line ("w/ Meaningless tokens") is generally lower, mostly below 0.04, with some peaks around 0.08.

* **Bottom Chart:** The teal line ("w/ Meaningless tokens") shows a slight increase in attention weight within the shaded region (tokens 40-120), peaking around 0.03.

**Chart 3: Layer 1, Head 8**

* **Top Chart:** The reddish-orange line ("w/o Meaningless tokens") fluctuates between approximately 0.01 and 0.20. The teal line ("w/ Meaningless tokens") is generally lower, mostly below 0.025, with some peaks around 0.05.

* **Bottom Chart:** The teal line ("w/ Meaningless tokens") shows a slight increase in attention weight within the shaded region (tokens 40-120), peaking around 0.02.

### Key Observations

* In all charts, the attention weights are generally higher when meaningless tokens are *not* present ("w/o Meaningless tokens").

* The presence of meaningless tokens ("w/ Meaningless tokens") appears to slightly increase attention weight in the region where they are located, but the overall attention weight remains lower compared to the case without meaningless tokens.

* The magnitude of attention weights varies significantly between different heads (Head 1, Head 2, Head 8). Head 8 shows the highest overall attention weights.

* The fluctuations in attention weights show that the model assigns different weights to different token positions rather than spreading its attention evenly.
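Which condition has the higher weights is easy to misjudge from overlaid curves, so a position-by-position comparison is useful when the underlying numbers are available. The sketch below (hypothetical helper `compare_conditions`) reports the fraction of positions where the "w/" series is higher and the ratio of the two means; the toy inputs are illustrative, not values from the figure.

```python
def compare_conditions(without, with_tokens):
    """Compare two per-position series over their shared prefix.

    Returns (fraction of positions where `with_tokens` is larger,
             ratio of the mean of `with_tokens` to the mean of `without`).
    """
    n = min(len(without), len(with_tokens))
    if n == 0:
        raise ValueError("need at least one shared position")
    a, b = without[:n], with_tokens[:n]
    frac_higher = sum(1 for x, y in zip(a, b) if y > x) / n
    # both sums cover the same n positions, so their ratio equals the ratio of means
    mean_ratio = sum(b) / sum(a) if sum(a) else float("inf")
    return frac_higher, mean_ratio

frac, ratio = compare_conditions([0.1, 0.2, 0.3], [0.2, 0.1, 0.6])
```

One caveat: inserting a block of tokens shifts every later token to a new index, so comparing the two conditions by raw position pairs up different tokens after the insertion point. Aligning the series by original token first (for example, dropping the inserted span from the "w/" series) is likely the fairer comparison.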

### Interpretation

The data suggests that the Qwen2.5-Math-7B model assigns lower attention weights to meaningless tokens compared to meaningful tokens. This is expected, as the model is likely designed to focus on relevant information. The slight increase in attention weight within the region of meaningless tokens could be due to the model attempting to process or filter out these tokens.
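Saying that meaningless tokens receive "lower" attention needs a baseline. Under causal attention, a span of `L` tokens seen by query `i` would receive a share of `L / (i + 1)` if attention were uniform over the visible keys. The sketch below (hypothetical helper `span_share_vs_uniform`, uniform baseline assumed) reports the observed share next to that baseline; an observed-to-expected ratio well below 1 would support the interpretation above.

```python
def span_share_vs_uniform(probs, start, end):
    """For each query row i that can see the whole span (i >= end - 1),
    return (i, observed share of attention on keys [start, end),
    share expected if attention were uniform over the i + 1 visible keys).

    Assumes each row of `probs` is a causal attention distribution summing to 1.
    """
    results = []
    for i, row in enumerate(probs):
        if i < end - 1:
            continue  # span not yet fully visible to this query
        observed = sum(row[start:end])
        expected = (end - start) / (i + 1)
        results.append((i, observed, expected))
    return results

# Uniform causal attention over 4 tokens: the observed share equals the baseline.
uniform = [[1 / (i + 1)] * (i + 1) + [0.0] * (3 - i) for i in range(4)]
rows = span_share_vs_uniform(uniform, 1, 3)
```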

The differences in attention weight magnitudes between different heads indicate that different heads may be responsible for attending to different aspects of the input sequence. Head 8, with its higher attention weights, may be more sensitive to the overall input or may be responsible for capturing more important features.

The fluctuations in attention weights show that the attention mechanism shifts its focus across positions depending on the input sequence. The same qualitative pattern (lower overall weights with meaningless tokens present, and a slight rise inside their span) appears in all three heads, which suggests the effect is not confined to a single head.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Attention Weight Analysis: Qwen2.5-Math-7B Model

### Overview

The image displays a 2x3 grid of six line charts analyzing the "Average Attention Weight" across token positions for different attention heads in the Qwen2.5-Math-7B model. The analysis compares model behavior with and without the inclusion of "Meaningless tokens" in the input sequence.

### Components/Axes

* **Titles:** Each of the six subplots has a title specifying the model and attention head:

* Top Row (Left to Right): `Qwen2.5-Math-7B Layer 1 Head 1`, `Qwen2.5-Math-7B Layer 1 Head 2`, `Qwen2.5-Math-7B Layer 1 Head 8`

* Bottom Row (Left to Right): `Qwen2.5-Math-7B Layer 1 Head 1`, `Qwen2.5-Math-7B Layer 1 Head 2`, `Qwen2.5-Math-7B Layer 1 Head 8`

* **Y-Axis:** All six charts share the same y-axis label: `Average Attention Weight`. The scale varies per chart.

* **X-Axis:** The x-axis represents token position index. The top row charts range from 0 to 60. The bottom row charts range from 0 to 120.

* **Legends:**

* **Top Row Charts:** Each contains a legend in the top-right corner with two entries:

* `w/o Meaningless tokens` (Blue line)

* `w/ Meaningless tokens` (Red line)

* **Bottom Row Charts:** Each contains a legend in the top-right corner with one entry:

* `w/ Meaningless tokens` (Blue line)

* **Annotations:** The bottom row charts contain a shaded gray region labeled `Meaningless tokens`, indicating the span of token positions occupied by these tokens. Vertical dashed lines mark the start and end of this region.

### Detailed Analysis

**Top Row: Comparison of Attention With/Without Meaningless Tokens**

1. **Layer 1 Head 1 (Top-Left):**

* **Trend (w/o, Blue):** Shows several sharp, high-magnitude peaks. The highest peak is at approximately token position 15, reaching an average attention weight of ~0.175. Other major peaks occur near positions 25 and 30.

* **Trend (w/, Red):** The attention pattern is significantly more diffuse and lower in magnitude. The sharp peaks are replaced by broader, lower humps. The highest point is around position 25, reaching only ~0.10.

* **Interpretation:** The inclusion of meaningless tokens dramatically smooths and redistributes the attention for this head, eliminating its sharp, focused peaks.

2. **Layer 1 Head 2 (Top-Center):**

* **Trend (w/o, Blue):** Attention is relatively low and stable for the first ~20 tokens, then shows a gradual, noisy increase, peaking around position 50 at ~0.08.

* **Trend (w/, Red):** Follows a similar overall shape to the blue line but with consistently higher magnitude, especially in the latter half. It peaks around position 50 at ~0.12.

* **Interpretation:** For this head, meaningless tokens amplify the existing attention pattern, particularly for later tokens in the sequence, without fundamentally changing its shape.

3. **Layer 1 Head 8 (Top-Right):**

* **Trend (w/o, Blue):** Attention is very low and flat for the first ~40 tokens, then exhibits a few moderate peaks between positions 40-60, the highest being ~0.08.

* **Trend (w/, Red):** Shows a dramatically different pattern. Attention is elevated across the entire sequence, with a pronounced, jagged increase starting around position 30 and culminating in a very high peak of ~0.20 near position 60.

* **Interpretation:** This head's behavior is most radically altered. Meaningless tokens cause it to become highly active, especially towards the end of the sequence, suggesting it may be attending to the structure or presence of these tokens themselves.
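The peak positions and heights quoted above (for example, ~0.175 near position 15 for Head 1) are the kind of values a simple local-maximum search recovers from a per-position series. The stdlib-only sketch below uses hypothetical helpers `local_peaks` and `global_peak`; `scipy.signal.find_peaks` with its `height` argument is an established alternative that also handles flat-topped peaks, which this strict-comparison version skips.

```python
def local_peaks(weights, min_height):
    """Indices of strict local maxima whose value is at least `min_height`.
    Endpoints count as peaks if they exceed their single neighbour."""
    peaks = []
    n = len(weights)
    for i, w in enumerate(weights):
        left = weights[i - 1] if i > 0 else float("-inf")
        right = weights[i + 1] if i < n - 1 else float("-inf")
        if w > left and w > right and w >= min_height:
            peaks.append(i)
    return peaks

def global_peak(weights):
    """(index, value) of the highest point in the series."""
    i = max(range(len(weights)), key=weights.__getitem__)
    return i, weights[i]

# Toy series, not read from the figure.
toy = [0.01, 0.05, 0.02, 0.03, 0.175, 0.02]
peaks = local_peaks(toy, 0.04)
top = global_peak(toy)
```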

**Bottom Row: Attention Pattern with Meaningless Tokens (Extended Sequence)**

These charts show the `w/ Meaningless tokens` condition (blue line) over a longer sequence (0-120), with the `Meaningless tokens` region highlighted.

1. **Layer 1 Head 1 (Bottom-Left):**

* **Pattern:** High attention at the very start (position 0). Attention drops within the `Meaningless tokens` region (approx. positions 15-70), showing a low, decaying trend. After the meaningless tokens end, attention spikes sharply again around position 75 and shows several subsequent peaks.

* **Key Data Points:** Initial peak ~0.08. Post-meaningless token peak ~0.06.

2. **Layer 1 Head 2 (Bottom-Center):**

* **Pattern:** Similar to Head 1 but with lower overall magnitude. A peak at the start (~0.04), a low plateau during the `Meaningless tokens` region, and a resurgence of noisy, moderate attention after position 70.

* **Key Data Points:** Initial peak ~0.04. Post-meaningless token activity fluctuates between 0.01-0.02.

3. **Layer 1 Head 8 (Bottom-Right):**

* **Pattern:** Distinct from the other two heads. Shows high, volatile attention at the start. Within the `Meaningless tokens` region, attention is moderate and relatively stable. After the region ends (position ~70), attention becomes extremely volatile, with peaks returning to about the level of the initial ones.

* **Key Data Points:** Initial peaks ~0.025. Post-meaningless token peaks reach up to ~0.025, with significant variance.

### Key Observations

1. **Differential Impact:** The effect of meaningless tokens is not uniform across attention heads. Head 1 is smoothed, Head 2 is amplified, and Head 8 is fundamentally reconfigured.

2. **Temporal Focus:** In the extended sequence (bottom row), all heads show a pattern of high initial attention, a suppressed or stable period during the meaningless token span, and a resurgence of activity afterward. This suggests the model may "reset" or change processing mode after a block of non-informative tokens.

3. **Head 8 Anomaly:** Head 8 (Layer 1) exhibits the most extreme behavior, with the highest recorded attention weight (~0.20) occurring in the presence of meaningless tokens, indicating a potential specialization or sensitivity to this type of input.
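The smoothed / amplified / reconfigured distinction in the first observation can be made explicit with standard quantities: the Pearson correlation between the two curves (shape similarity), their total magnitude, and a peak-to-mean ratio (peakiness). The rule below is a hypothetical sketch; the `corr_threshold` of 0.7 and the order of the checks are assumptions, not values from the source, and both series must already be aligned position by position.

```python
import math

def pearson(a, b):
    """Pearson correlation of two equal-length series."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    sa = math.sqrt(sum((x - ma) ** 2 for x in a))
    sb = math.sqrt(sum((y - mb) ** 2 for y in b))
    if sa == 0 or sb == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sa * sb)

def peak_to_mean(xs):
    """Sharpness proxy: how far the highest point sits above the average."""
    return max(xs) / (sum(xs) / len(xs))

def describe_effect(without, with_tokens, corr_threshold=0.7):
    """Hypothetical rule for labelling how inserted tokens change one head."""
    if len(without) != len(with_tokens):
        raise ValueError("series must be aligned to the same positions")
    if pearson(without, with_tokens) < corr_threshold:
        return "reconfigured"  # shape changed substantially
    if sum(with_tokens) > sum(without):
        return "amplified"     # similar shape, larger overall magnitude
    if peak_to_mean(with_tokens) < peak_to_mean(without):
        return "smoothed"      # similar shape, flatter peaks
    return "similar"
```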

### Interpretation

This visualization provides a technical investigation into how a large language model's internal attention mechanism reacts to the insertion of "Meaningless tokens." The data suggests these tokens are not simply ignored.

* **Mechanism Disruption:** The tokens actively alter attention distributions. For some heads (Head 1), they act as a "smoothing" agent, breaking up sharp focus. For others (Head 8), they act as a strong attractor or catalyst for high attention.

* **Processing Phases:** The bottom-row charts imply a potential three-phase processing sequence for inputs containing such tokens: 1) Initial engagement, 2) A distinct processing phase for the meaningless block (characterized by lower or stable attention), and 3) A return to (or heightened) engagement with subsequent meaningful content.

* **Model Robustness & Vulnerability:** The findings are relevant for understanding model robustness. If meaningless tokens can so drastically rewire attention patterns, they could be used to steer model behavior, including as a vector for adversarial attacks. In some heads the model also assigns a large share of its attention to these tokens, which may divert attention away from informative content.
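The "smoothing" reading in the first point is often quantified with the entropy of an attention distribution: higher entropy means attention is spread more evenly. Entropy is a swapped-in metric here, not something the figure plots. The sketch below normalizes a per-position series to sum to 1 and divides by `log(n)`, so 1.0 means uniform and 0.0 means all mass on one position; `normalized_entropy` is a hypothetical name and the inputs are toy values.

```python
import math

def normalized_entropy(weights):
    """Shannon entropy of `weights` rescaled to sum to 1, divided by
    log(len(weights)) so that 1.0 is perfectly uniform and 0.0 puts
    all mass on a single position."""
    total = sum(weights)
    if total <= 0 or len(weights) < 2:
        return 0.0
    probs = [w / total for w in weights]
    h = sum(-p * math.log(p) for p in probs if p > 0)  # zeros contribute nothing
    return h / math.log(len(weights))

sharp = normalized_entropy([0.0, 0.175, 0.0, 0.0])
flat = normalized_entropy([0.05, 0.05, 0.05, 0.05])
```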

**Language:** All text in the image is in English.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Line Graphs: Qwen2.5-Math-7B Layer 1 Head Attention Weights

### Overview

The image contains six line graphs comparing attention weight distributions across token positions (0-120) for three attention heads (Head 1, Head 2, Head 8) in a Qwen2.5-Math-7B model. Each graph contrasts two conditions:

- **Blue line**: Attention weights without meaningless tokens

- **Red line**: Attention weights with meaningless tokens

The graphs are split into two sections:

1. **Top row**: Full token position range (0-120)

2. **Bottom row**: Zoomed-in view of the "Meaningless tokens" region (20-40)

---

### Components/Axes

- **X-axis**: Token Position (0-120)

- **Y-axis**: Average Attention Weight (0.00-0.175)

- **Legends**:

- Blue: "w/o Meaningless tokens"

- Red: "w/ Meaningless tokens"

- **Shaded areas**: Confidence intervals (bottom row only)
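This description reads the shaded areas as confidence intervals, whereas the other descriptions read them as the span of the meaningless tokens; the figure alone cannot settle which. If the band were a confidence interval, a common construction is the per-position mean plus or minus 1.96 standard errors across prompts, sketched below under that normal-approximation assumption (`mean_and_ci` is a hypothetical helper, and the samples are toy values). With only a handful of samples, a Student t quantile would be more appropriate than 1.96.

```python
import math

def mean_and_ci(samples, z=1.96):
    """samples: list of per-sample series of equal length.
    Returns, per position, (mean, lower, upper) with bounds mean +/- z * SE."""
    k = len(samples)
    if k < 2:
        raise ValueError("need at least two samples for a standard error")
    out = []
    for pos in range(len(samples[0])):
        vals = [s[pos] for s in samples]
        m = sum(vals) / k
        var = sum((v - m) ** 2 for v in vals) / (k - 1)  # sample variance
        se = math.sqrt(var / k)                          # standard error of the mean
        out.append((m, m - z * se, m + z * se))
    return out

bands = mean_and_ci([[0.1, 0.2], [0.3, 0.2]])
```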

---

### Detailed Analysis

#### Head 1 (Top Left)

- **Blue line**: Sharp peaks at token positions ~20 and ~40 (attention weights ~0.15-0.175).

- **Red line**: Broader, less pronounced peaks (max ~0.125).

- **Bottom graph**: Blue line shows concentrated peaks in 20-40 range; red line has diffuse, lower peaks.

#### Head 2 (Top Center)

- **Blue line**: Gradual rise to ~0.125 at token 60, then decline.

- **Red line**: Noisy baseline (~0.05-0.08) with minor spikes.

- **Bottom graph**: Blue line remains stable in 20-40 range; red line shows erratic fluctuations.

#### Head 8 (Top Right)

- **Blue line**: Sparse peaks at ~10, ~50, and ~100 (weights ~0.10-0.15).

- **Red line**: Continuous noise with occasional spikes (max ~0.12).

- **Bottom graph**: Blue line has minimal activity in 20-40 range; red line shows sporadic peaks.

---

### Key Observations

1. **Head 1**:

- Meaningless tokens (red) reduce peak sharpness and magnitude compared to the no-meaningless-token condition (blue).

- Attention is more distributed in the meaningless-token region.

2. **Head 2**:

- Meaningless tokens introduce noise, flattening the attention curve.

- No meaningful peaks in the 20-40 range for either condition.

3. **Head 8**:

- Meaningless tokens disrupt the sparse, periodic peaks seen in the no-meaningless-token condition.

- Attention becomes more erratic in the meaningless-token region.

---

### Interpretation

The data suggests that **meaningless tokens disrupt attention patterns** in all three heads:

- **Head 1**: Focuses on specific tokens (e.g., 20, 40) but loses precision with meaningless tokens.
